Official statement

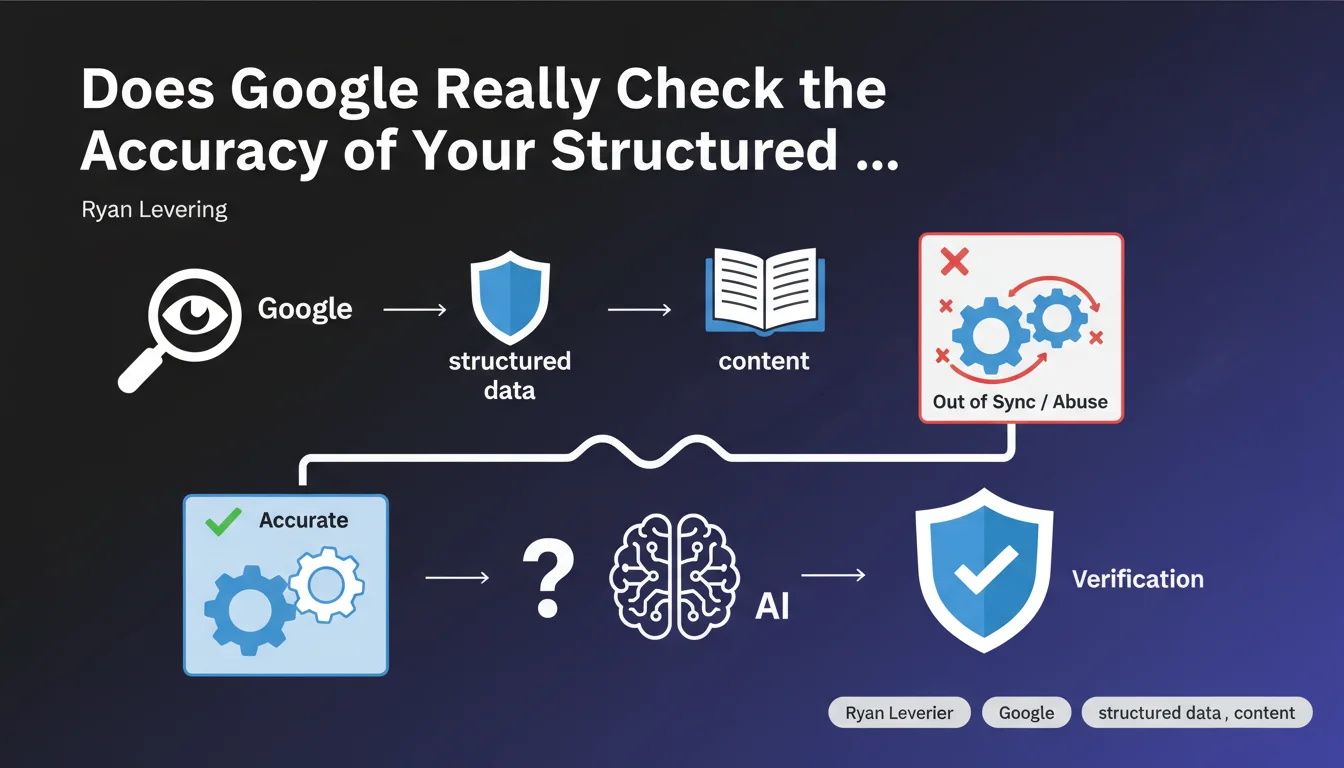

Google confirms it validates the accuracy of structured data on your pages. The real challenge for its algorithm: distinguishing intentional abuse (misleading markup) from accidental desynchronizations between the markup and visible content. This statement raises more questions than it answers about detection mechanisms.

What you need to understand

Ryan Levering, an engineer at Google, reveals a poorly documented aspect of structured data processing. Beyond simple syntax validation (which the Rich Results Test already does), Google evaluates whether the markup truly matches the content perceived by the user.

This verification targets two distinct problems: deliberate spam (tagging a non-existent price to obtain rich snippets) and technical errors (structured data that becomes outdated when content changes without the JSON-LD being updated).

Why does Google talk about "synchronization issues"?

An e-commerce site updates a price via its CMS. The HTML content changes, but the JSON-LD remains frozen with the old price because the cache wasn't invalidated or the generation script works asynchronously.

Google crawls the page and detects a discrepancy between what the markup announces (€25) and what the page displays (€35). Is it a bug? A manipulation attempt? Levering admits that this is where it gets tricky.
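To make the scenario concrete, here is a minimal sketch of how a site owner could surface the same €25 versus €35 gap on their own pages. It says nothing about how Google does it. The class and function names, the sample page, and the `<span class="price">` convention for the visible price are all assumptions made for illustration; only Python's standard library is used.

```python
import json
import re
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            # Raises ValueError on malformed JSON, which is itself worth knowing
            self.blocks.append(json.loads("".join(self._buffer)))


def markup_price(html):
    """Returns offers.price from the first Product block, or None."""
    parser = JsonLdExtractor()
    parser.feed(html)
    for block in parser.blocks:
        if isinstance(block, dict) and block.get("@type") == "Product":
            return float(block["offers"]["price"])
    return None


def visible_price(html):
    """Assumed convention: the displayed price sits in <span class="price">."""
    match = re.search(r'<span class="price">\D*([\d.]+)', html)
    return float(match.group(1)) if match else None


# Hypothetical page: the HTML was updated to 35, the JSON-LD is still at 25
PAGE = """
<html><body>
<span class="price">€35.00</span>
<script type="application/ld+json">
{"@type": "Product", "name": "Mug",
 "offers": {"@type": "Offer", "price": "25.00", "priceCurrency": "EUR"}}
</script>
</body></html>
"""

if markup_price(PAGE) != visible_price(PAGE):
    print("Desynchronized:", markup_price(PAGE), "vs", visible_price(PAGE))
```

A real audit would read the visible price from the rendered DOM rather than a regular expression, since templates differ from site to site.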

How does Google distinguish abuse from technical error?

The statement remains vague. Google likely has heuristics that cross-check multiple signals: the frequency of discrepancies, the site's history, and the magnitude of the gap between markup and visible content.

A site with 2% occasional desynchronizations won't be treated like a site that systematically displays prices 30% lower in its rich snippets than on the actual page. But the exact thresholds? Mystery.

Which structured data types are scrutinized as a priority?

Logically, those that directly affect how a result appears in the SERPs: Product (price, availability), Recipe (cooking time, ratings), Review (stars), Event (dates). Less critical: Organization, BreadcrumbList.

Google has every incentive to verify what generates clicks. A misleading rich snippet deteriorates user experience and therefore trust in search results.

- Google validates the accuracy of structured data, not just its syntax

- The engine distinguishes between intentional abuse and accidental desynchronization — at least in theory

- The inconsistencies between JSON-LD and visible content are the primary warning signal

- No clarification on detection methods or tolerance thresholds

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes and no. We do observe that sites lose their rich snippets after displaying inconsistent structured data for several weeks. Conversely, sites with occasional bugs (desynchronized prices on a few products) don't seem to be systematically penalized.

The problem: Google never explains why a rich snippet disappears. "Issue detected," period. Impossible to know whether it's a desynchronization, suspected abuse, or simply a change in Google's display logic. [To verify]: the existence of a scoring system to assess the "reliability" of a site's markup over time.

What nuances should be added to this announcement?

Levering uses the word "challenge" — suggesting the system is not foolproof. Google probably misses sophisticated abuse (conditional markup displayed only to the bot) and perhaps wrongly penalizes sites with transient bugs.

The notion of "synchronization" is misleading. A dynamic site generates content on the fly: price changes based on geolocation, stock, currency. Should the JSON-LD reflect the US or FR version? Desktop or mobile? Googlebot crawls mostly from US-based IP addresses and, under mobile-first indexing, primarily with its smartphone user-agent. If your markup is built from a different variant than the one the crawler is served (for example FR pricing while Googlebot sees the US page), you're creating artificial desynchronization.

In what cases does this rule become problematic?

Sites with dynamic pricing (yield management, A/B testing on prices). The markup can be technically accurate at generation time but obsolete 10 minutes later.

Multilingual sites where JSON-LD is translated with a delay relative to HTML content. Sites using client-side rendering where visible content loads after initial JSON-LD.

Practical impact and recommendations

What should you do concretely to avoid desynchronizations?

Audit the consistency between your structured data and visible content. Crawl your site with a tool that extracts both the DOM and JSON-LD, then programmatically compare key values (price, ratings, dates).

Implement automatic validation: with each deployment, verify that a sample of pages generates markup consistent with the rendered HTML. Ideally, integrate this test into your CI/CD.
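A deployment gate of this kind can be sketched in a few lines. The version below assumes an earlier step has already produced, for each sampled URL, the value found in the markup and the value found in the rendered page. The function names and the default tolerance of zero mismatches are illustrative choices, not a standard.

```python
import sys


def consistency_gate(results, max_mismatch_rate=0.0):
    """Checks a sample of (url, markup_value, visible_value) tuples.

    Returns (passed, mismatch_rate) and logs each mismatch to stderr.
    """
    results = list(results)
    mismatches = [(url, m, v) for url, m, v in results if m != v]
    rate = len(mismatches) / len(results) if results else 0.0
    for url, m, v in mismatches:
        print(f"MISMATCH {url}: markup={m!r} visible={v!r}", file=sys.stderr)
    return rate <= max_mismatch_rate, rate


def exit_code(results, max_mismatch_rate=0.0):
    """Maps the gate result to a process exit code for a CI step."""
    passed, _ = consistency_gate(results, max_mismatch_rate)
    return 0 if passed else 1
```

In a pipeline, the step would end with `sys.exit(exit_code(sample))`, so that a non-zero code blocks the release.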

For sites with dynamic content, prioritize generating JSON-LD server-side with the same data displayed to users. If you must go client-side, ensure the script executes with the same logic as HTML rendering.
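One way to get "the same data" by construction is to build the visible HTML and the JSON-LD from a single product record in the same function. The sketch below assumes a hypothetical record with `name`, `price`, `currency` and `in_stock` keys; the HTML template is deliberately minimal.

```python
import json
from html import escape


def render_product_page(product):
    """Builds visible HTML and JSON-LD from one product record in one pass."""
    # Format the price once so both outputs carry the identical string
    price = f"{product['price']:.2f}"
    availability = "InStock" if product["in_stock"] else "OutOfStock"
    jsonld = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": product["currency"],
            "availability": f"https://schema.org/{availability}",
        },
    }
    # Escape "</" so a product name cannot close the script element early
    payload = json.dumps(jsonld).replace("</", "<\\/")
    return (
        f"<h1>{escape(product['name'])}</h1>\n"
        f'<span class="price">{price} {escape(product["currency"])}</span>\n'
        f'<script type="application/ld+json">{payload}</script>'
    )


page = render_product_page(
    {"name": "Mug", "price": 35, "currency": "EUR", "in_stock": True}
)
```

Because the price is formatted once and reused, the two representations cannot drift apart within a single render.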

What mistakes to absolutely avoid?

Never tag a price, rating, or availability that doesn't appear anywhere on the visible page. Even if technically the product exists at that price in your database, if the user can't see it or buy it at that price, it's spam in Google's eyes.

Avoid cache systems that update HTML but not JSON-LD (or vice versa). This is the #1 source of accidental desynchronizations on large sites.
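A simple guard against this failure mode is to cache the HTML and the JSON-LD as one entry tied to the version of the underlying data, so neither can be refreshed without the other. The in-memory class below is a sketch under that assumption. The `id` and `version` keys and the `render` callable are hypothetical, and a production setup would apply the same idea to its real cache layer.

```python
class PageCache:
    """In-memory cache that stores visible HTML and JSON-LD as a single entry."""

    def __init__(self, render):
        self._render = render  # callable: product -> (html, jsonld)
        self._entries = {}     # product id -> (version, (html, jsonld))

    def get(self, product):
        cached = self._entries.get(product["id"])
        # A version change invalidates both representations together
        if cached is None or cached[0] != product["version"]:
            cached = (product["version"], self._render(product))
            self._entries[product["id"]] = cached
        return cached[1]


def render(product):
    """Toy renderer used only to exercise the cache."""
    html = f'<span class="price">{product["price"]}</span>'
    return html, {"price": product["price"]}


cache = PageCache(render)
cache.get({"id": 1, "version": 1, "price": 25})
html, jsonld = cache.get({"id": 1, "version": 2, "price": 35})
```

After the price update, both `html` and `jsonld` reflect 35, because the stale pair was replaced as a unit.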

Don't attempt to "over-optimize" by publishing structured data that is more flattering than reality. Google increasingly cross-checks multiple signals, so a discrepancy will be detected sooner or later.

How to verify your site is compliant?

- Crawl a sample of pages and extract JSON-LD + rendered HTML content

- Automatically compare critical fields (price, ratingValue, availability, datePublished)

- Identify pages with gaps > 5% (price) or qualitative differences (availability, ratings)

- Verify that the markup is generated from the same data as the user-facing rendering

- Test Googlebot rendering via Search Console (URL inspection) to detect JS issues

- Set up an alert if your rich snippets suddenly disappear from the SERPs

- Document your choices: which version (US/FR, desktop/mobile) serves as reference for JSON-LD?
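The comparison steps in this checklist can be sketched as a single function. It assumes the markup values and the visible values have already been extracted into two flat dictionaries. The 5% tolerance mirrors the checklist's own figure and is a working threshold for an internal audit, not a limit published by Google.

```python
def compare_fields(markup, visible, price_tolerance=0.05):
    """Lists discrepancies between markup values and visible values."""
    issues = []

    # Quantitative check: relative price gap above the tolerance
    m_price, v_price = markup.get("price"), visible.get("price")
    if m_price is not None and v_price:
        gap = abs(m_price - v_price) / v_price
        if gap > price_tolerance:
            issues.append(f"price gap {gap:.0%}: markup {m_price}, visible {v_price}")

    # Qualitative checks: any difference counts, including a value
    # present in the markup but absent from the visible page
    for field in ("availability", "ratingValue", "datePublished"):
        if field in markup and markup[field] != visible.get(field):
            issues.append(
                f"{field}: markup {markup[field]!r}, visible {visible.get(field)!r}"
            )
    return issues


issues = compare_fields(
    {"price": 25.0, "availability": "InStock"},
    {"price": 35.0, "availability": "InStock"},
)
```

With the article's €25 versus €35 example, `issues` contains one entry for the price gap and none for availability.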

❓ Frequently Asked Questions

Does Google penalize a site if its structured data is desynchronized by mistake?

Which structured data types does Google scrutinize most closely?

How does Google detect a desynchronization between JSON-LD and visible content?

Should JSON-LD be generated server-side or client-side to avoid problems?

Can a site with dynamic content (variable prices) use structured data without risk?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 07/04/2022
