Official statement


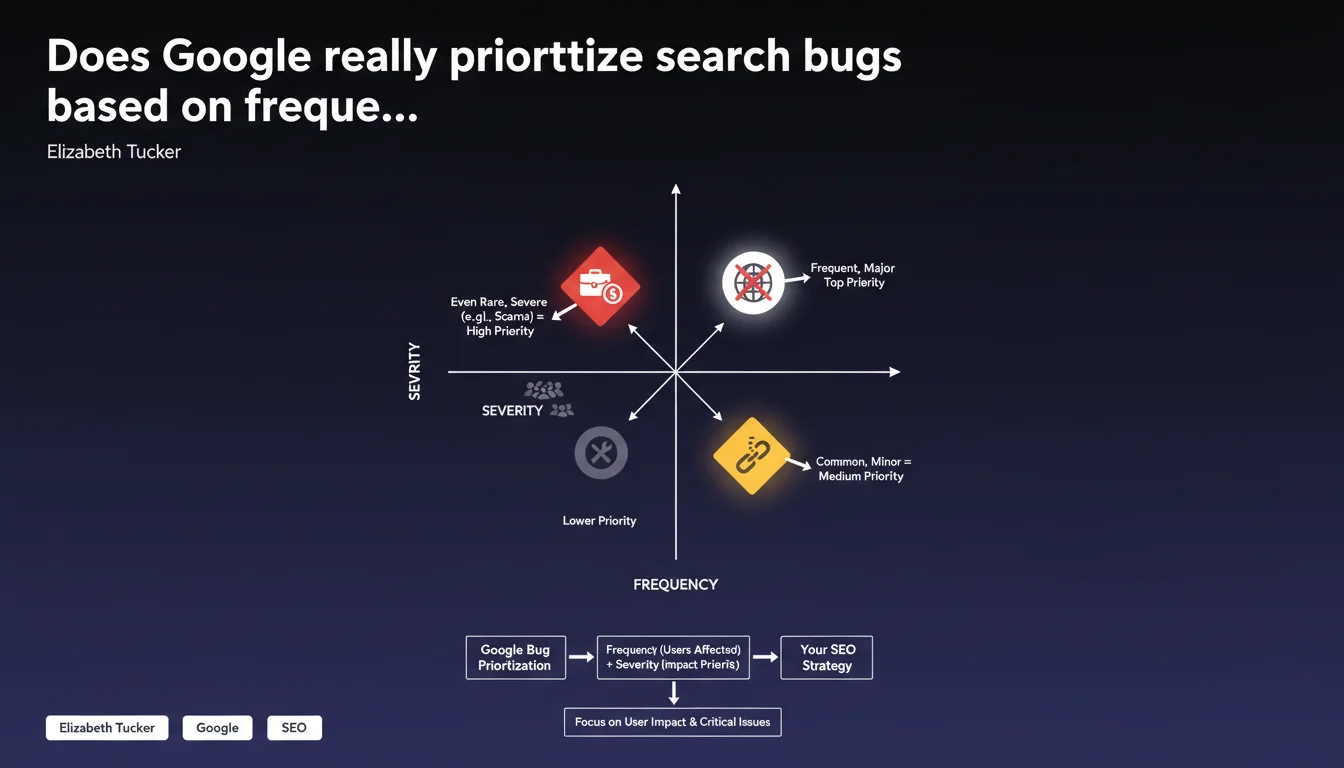

Google ranks its bug fixes according to two criteria: frequency (the number of users affected) and severity (how serious the impact is). A rare but serious problem, such as financial scams, will take priority over a frequent but minor bug. For SEO, this means that a technical issue affecting a few critical pages can be treated before a widespread but benign malfunction.

What you need to understand

Why does Google adopt this prioritization logic?

Every day, Google receives thousands of bug reports — indexing problems, incorrect results, featured snippets wrongly attributed, pages disappearing without reason. It's impossible to handle everything simultaneously.

The frequency/severity matrix allows for rational sorting. A bug affecting 0.01% of queries but exposing users to financial scams rises to the top of the list. Conversely, a visual glitch present on 5% of results but with no concrete consequence will wait.
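As a rough illustration of that sorting logic, here is a minimal Python sketch. Google publishes no scoring grid, so the severity tiers, the `Bug` class and the `triage` function are all hypothetical names invented for this example. The only assumption taken from the statement is that severity outranks frequency.

```python
from dataclasses import dataclass

# Hypothetical severity tiers; Google does not publish such a scale.
SEVERITY = {"cosmetic": 0, "degraded": 1, "harmful": 2}


@dataclass
class Bug:
    name: str
    affected_share: float  # fraction of queries affected (frequency)
    severity: str          # one of the SEVERITY keys


def triage(bugs):
    """Order bugs by severity first, then by frequency, highest first."""
    return sorted(
        bugs,
        key=lambda b: (SEVERITY[b.severity], b.affected_share),
        reverse=True,
    )


bugs = [
    Bug("visual glitch in results", 0.05, "cosmetic"),   # 5% of results
    Bug("financial scam surfaced", 0.0001, "harmful"),   # 0.01% of queries
]
ordered = [b.name for b in triage(bugs)]
print(ordered)
```

With these two example bugs, the rare scam is listed ahead of the frequent cosmetic glitch, which mirrors the ordering described above.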

What does this mean concretely for a website?

If your site suffers from a rare but critical indexing problem — say, a strategic page invisible for three weeks while the rest is performing well — don't count on a quick resolution. Google will likely judge the overall impact as minimal.

On the other hand, if the same problem affects thousands of sites simultaneously (massive deindexation after an update), frequency increases and Google reacts. That's why we sometimes see fixes deployed within 48 hours for widespread bugs, and nothing for months on isolated cases.

What are Google's exact severity criteria?

Google remains deliberately vague about thresholds. We know severity includes: exposure to dangerous content, revenue loss for legitimate sites, critical user experience degradation.

But there's no public scoring grid. Will an indexing problem costing an e-commerce business $10,000 per day be deemed "severe"? Probably not if only one site is affected. That's the rub: the perception of severity is asymmetrical. Google thinks at the scale of the ecosystem, while webmasters think about their own survival.

- Frequency: number of users or queries affected, not just the number of sites

- Severity: impact on security, result reliability, financial integrity

- Scams and dangerous content are always prioritized, even if rare

- Purely cosmetic bugs or those affecting ultra-specific niches move to the back of the queue

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes and no. We do observe that certain massive bugs — like the accidental deindexation of millions of pages last March — are fixed within days. But on the "severity" side, it's more debatable.

Sites victimized by negative SEO through massive spam or duplicate content attacks sometimes wait months before Google reacts, even though the financial impact is catastrophic for them. Google seems to define "severe" on the scale of the global ecosystem, not on the scale of an individual site. [To verify]: is there a threshold number of affected sites for a bug to become "frequent"?

What nuances should we add to this logic?

The statement doesn't clarify how Google measures frequency. Is it the number of sites? Query volume? Lost traffic? A bug affecting 100 small blogs versus one affecting 5 sites generating 10 million sessions per month — which one is "more frequent"?

Another point: Google uses "financial scams" as an example of maximum severity. Fair enough, but what about incorrect medical content, misinformation during elections, or biased results for sensitive queries? The severity hierarchy is never made public.

In what cases does this rule not apply?

When a problem affects a very high-authority site or a strategic partner (major press, institutions), Google often responds faster, even though it officially denies any preferential treatment. Statistical coincidence? Perhaps. But observations suggest otherwise.

Also, if a bug generates negative media coverage or an uproar on social media, "severity" climbs artificially. Google has fixed minor issues within 24 hours simply because they were becoming a public relations nightmare.

Practical impact and recommendations

What should you concretely do when facing an indexing or ranking problem?

First, document extensively. If you report a bug through Search Console or official forums, prove that the problem is frequent: screenshots, affected URLs, Analytics data showing the traffic drop, testimonials from other sites.

The more you demonstrate others are affected, the more you increase perceived "frequency" — and thus your chances of Google intervention. An isolated report without context ends up in the trash.

What mistakes should you avoid when reporting a problem to Google?

Don't just say "my site disappeared." Quantify the impact: number of deindexed pages, lost traffic volume, problem duration. Google responds to data, not emotions.
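To make "quantify the impact" concrete, the sketch below turns a few raw numbers into the figures a report can cite. The function name `impact_summary`, its parameters and the sample values are all hypothetical. In practice the inputs would come from your own analytics and index coverage data.

```python
from datetime import date


def impact_summary(baseline_daily_visits, current_daily_visits,
                   start, end, deindexed_pages):
    """Summarize the impact of an incident in figures a report can cite."""
    days = (end - start).days
    lost_per_day = baseline_daily_visits - current_daily_visits
    return {
        "days": days,
        "deindexed_pages": deindexed_pages,
        "lost_visits_per_day": lost_per_day,
        "lost_visits_total": lost_per_day * days,
        "traffic_drop_pct": round(100 * lost_per_day / baseline_daily_visits, 1),
    }


# Hypothetical figures, for illustration only.
summary = impact_summary(
    baseline_daily_visits=1000,
    current_daily_visits=400,
    start=date(2024, 6, 1),
    end=date(2024, 6, 22),
    deindexed_pages=35,
)
print(summary)
```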

Also avoid diluting your report with unverified assumptions ("it's surely a Googlebot bug"). Stay factual. If you suspect a Google-side issue, show that all site-side causes have been ruled out: crawl budget OK, clean robots.txt, sitemap up to date, proper response times.

- Compile a precise list of affected URLs with dates and symptoms

- Verify the problem isn't caused by internal technical error (server logs, redirects, canonicals)

- Check if other sites report the same issue (forums, Twitter, SEO communities)

- Document quantified impact: lost traffic, deindexed pages, affected revenue

- Report through official channels (Search Console, feedback forms) with solid data

- Prepare a workaround plan: alternative pages, temporary redirects, user communication
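One of the site-side checks in this list, ruling out a robots.txt block, can be sketched with Python's standard-library `urllib.robotparser`. The helper name `blocked_for_googlebot`, the robots.txt content and the URLs are hypothetical. Note that this parser is an approximation: it may not match Googlebot's own interpretation of robots.txt in every edge case (wildcard patterns, for instance), so treat the result as a first filter rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_googlebot(robots_txt, urls):
    """Return the URLs that the given robots.txt disallows for Googlebot."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch("Googlebot", u)]


# Hypothetical robots.txt and affected URLs, for illustration only.
robots_txt = """\
User-agent: *
Disallow: /private/
"""
urls = [
    "https://example.com/pricing",
    "https://example.com/private/report",
]
blocked = blocked_for_googlebot(robots_txt, urls)
print(blocked)
```

If the list of blocked URLs overlaps with your list of affected URLs, the cause is probably on your side and not a Google bug.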

How can you anticipate Google's priorities to avoid unpleasant surprises?

Accept that rare bugs that are serious for you will likely not be fixed quickly. If your business relies on a handful of critical pages, diversify your traffic sources: paid search, social, email, direct.

Invest in robust technical infrastructure: real-time monitoring, automatic alerts on indexation drops, regular snapshots of your rankings, and access logs to trace Googlebot visits. The earlier you detect issues, the more damage you can limit.
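An alert on indexation drops can start very simply. The sketch below assumes you already record a daily count of indexed pages (for example, copied from Search Console reports). The function name `indexation_drops` and the 20% threshold are arbitrary choices for illustration, not recommended values.

```python
def indexation_drops(daily_counts, threshold=0.2):
    """Return (day_index, previous, current) for each day on which the
    indexed-page count fell by more than `threshold` (a fraction)
    compared with the previous day."""
    alerts = []
    for i in range(1, len(daily_counts)):
        prev, cur = daily_counts[i - 1], daily_counts[i]
        if prev > 0 and (prev - cur) / prev > threshold:
            alerts.append((i, prev, cur))
    return alerts


# Hypothetical daily counts of indexed pages.
counts = [1200, 1195, 1210, 800, 790]
alerts = indexation_drops(counts)
print(alerts)
```

Here only the fall from 1210 to 800 pages exceeds the threshold. The small day-to-day fluctuations around it are ignored.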

❓ Frequently Asked Questions

How does Google measure the frequency of a search bug?

Will a serious indexing problem on my site be handled quickly by Google?

Why are some bugs fixed within 48 hours while others are ignored for months?

What does "severity" concretely mean for Google?

How can I maximize the chances that Google acts on my bug report?


Other SEO insights extracted from this same Google Search Central video · published on 27/06/2024
