Official statement

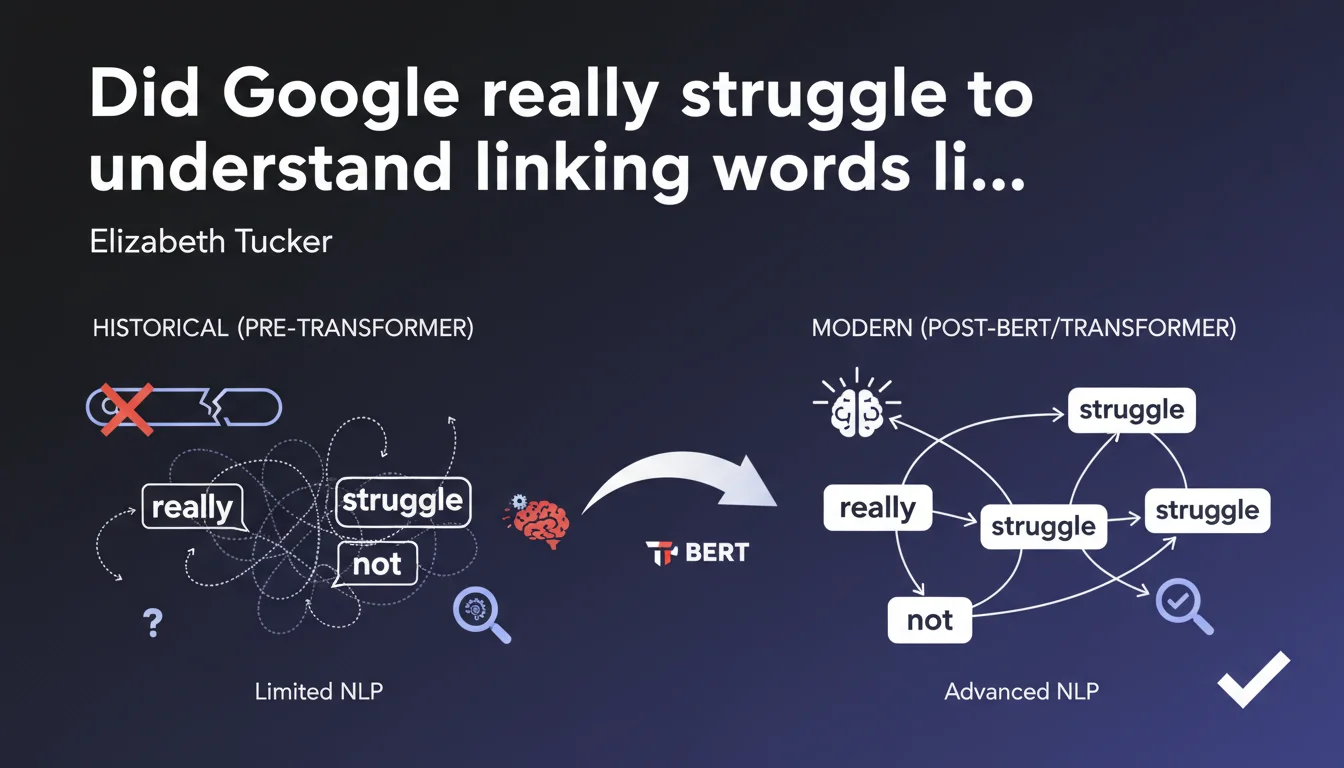

Prepositions, negations, and other function words (such as 'not') were long the Achilles' heel of Google's algorithms. The arrival of Transformer models, and BERT in particular, greatly improved natural language understanding and finally made it possible to grasp these nuances. For SEO professionals, this changes the game when it comes to semantic optimization and search intent.

What you need to understand

Why did linking words pose problems for older Google systems?

Google's early algorithms relied on a keyword matching approach. A word like 'not' was often ignored or misinterpreted, because systems lacked the capacity to understand negation or the overall context of a sentence.

Concrete result: a query like "restaurants not expensive" could return results for "restaurants expensive", because the algorithm focused on the main terms and dismissed function words such as negations and prepositions as non-significant.
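To make the failure mode tangible, here is a toy sketch of keyword matching with stop-word removal. It is not Google's actual pipeline: the `STOP_WORDS` list and both function names are hypothetical, chosen only to reproduce the behavior described above.

```python
# Toy illustration only: this is NOT how Google's systems were implemented.
# STOP_WORDS is a hypothetical list of words a naive matcher might discard.
STOP_WORDS = {"not", "no", "without", "with", "for", "a", "the"}


def keyword_terms(text: str) -> frozenset[str]:
    """Lowercase the text, split on whitespace, and drop stop words."""
    return frozenset(w for w in text.lower().split() if w not in STOP_WORDS)


def naive_match(query: str, document: str) -> bool:
    """Return True when every remaining query term appears in the document."""
    return keyword_terms(query) <= keyword_terms(document)


# The negation is thrown away before matching, so both queries look identical.
print(naive_match("restaurants not expensive", "restaurants expensive"))  # True
```

Once 'not' is discarded, the two queries reduce to the same set of terms, which is exactly why the negation could not influence the results.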

What changed with Transformer and BERT?

Transformer models introduce an architecture that processes words in their complete context, not in isolation. BERT (Bidirectional Encoder Representations from Transformers) goes even further: it reads each word in light of the words both before and after it, which is what allows it to grasp nuances.

Concretely? Google can now distinguish "car without a license" from "car with a license", or "gluten-free recipe" from "gluten recipe". Linking words finally become determining factors in intent interpretation.

What's the direct implication for content optimization?

Content must now reflect the semantic variations that real users actually search for. If your target audience searches for "freelancer without degree", writing just "freelancer" is no longer enough, because the qualifier fundamentally changes the intent.

Long-tail strategies take on a new dimension: every word counts, including those we once overlooked.

- Function words (not, without, with, for, against, etc.) are now interpreted far more reliably by Google

- BERT, built on the Transformer architecture, reads context in both directions

- BERT marked a turning point in handling nuances and negations in queries

- Semantic optimization must integrate these variations to match actual user intent

- Generic or overly broad content loses relevance compared to precise and contextualized content

SEO Expert opinion

Does this explanation really account for why certain content is losing ground?

Yes, and it's a crucial point often underestimated. Sites producing generic content by targeting only main terms find themselves outpaced. If your "recipes" page ignores qualifiers ("sugar-free", "no-cook", "for beginners"), it loses against more specific content.

We've observed for several years that featured snippets favor answers that precisely integrate the query's nuances. This is unlikely to be an accident: it is consistent with BERT at work.

Should you multiply variations infinitely?

No, and that's where it gets tricky. Some SEO professionals fall into the semantic over-optimization trap: creating 50 pages to cover every combination of prepositions. Bad idea.

Google understands synonyms and close variations, so there is no need to create one "shoes without laces" page, another "shoes without velcro" page, and so on. A single well-structured page, with targeted sections, does the job better. [To verify]: Google has never specified the threshold at which two variations justify two distinct pages.

Does this evolution really change keyword research strategy?

Absolutely. Traditional metrics (search volume, CPC) don't capture nuanced intent. "Loan without down payment" and "loan with down payment" can show similar volumes while expressing radically different intentions.

SEO professionals must now cross-reference multiple sources: Search Console (actual queries), People Also Ask (natural variations), and NLP tools to identify relevant semantic clusters. Content strategies based solely on search volumes are becoming obsolete.

Practical impact and recommendations

What needs reviewing in your existing content architecture?

Start by auditing your pillar pages. Identify queries with qualifiers ("without", "with", "for", "against") that generate traffic but land on generic pages. That's where you're losing engagement.
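The audit step above can be sketched in a few lines. This is a minimal example under stated assumptions: the `QUALIFIERS` list is taken from the examples in this article, and the `(query, landing_page)` row shape and the set of "generic" pages are hypothetical inputs you would build from your own data.

```python
import re

# Assumed qualifier list, based on the examples in this article; extend as needed.
QUALIFIERS = ("without", "with", "for", "against", "not", "no")
QUALIFIER_RE = re.compile(r"\b(" + "|".join(QUALIFIERS) + r")\b", re.IGNORECASE)


def find_qualifiers(query: str) -> list[str]:
    """Return the qualifiers found in a query, lowercased, in order of appearance."""
    return [match.lower() for match in QUALIFIER_RE.findall(query)]


def queries_on_generic_pages(rows, generic_pages):
    """Flag qualified queries that land on a page you consider generic.

    rows: iterable of (query, landing_page) pairs (hypothetical shape).
    generic_pages: set of URLs or paths you have labeled as generic.
    """
    flagged = []
    for query, page in rows:
        qualifiers = find_qualifiers(query)
        if qualifiers and page in generic_pages:
            flagged.append((query, page, qualifiers))
    return flagged


rows = [
    ("digital marketing training without prerequisites", "/training"),
    ("digital marketing training", "/training"),
    ("training with certification", "/training/certified"),
]
for query, page, qualifiers in queries_on_generic_pages(rows, {"/training"}):
    print(query, "->", page, qualifiers)
```

Word boundaries in the pattern keep "for" from matching inside words like "forum", and listing "without" before "with" is only a readability choice since the boundaries already prevent partial matches.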

Then restructure: create dedicated sections or FAQs that explicitly address these nuances. A "Digital Marketing Training" page should include sections like "Training with no prerequisites", "Training with certification", etc.

How do you identify high-potential content opportunities?

Leverage Search Console: filter queries by impressions (>100) with low CTR (<5%). You'll often find variations with prepositions that your current content doesn't explicitly address.
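As a rough sketch of that filter, the snippet below reads a query export and keeps rows above 100 impressions with a CTR under 5%. The column names (`query`, `clicks`, `impressions`) are assumptions for illustration; a real Search Console export may label and format its columns differently, so adapt the keys to your file.

```python
import csv
import io

MIN_IMPRESSIONS = 100  # threshold from the text: impressions > 100
MAX_CTR = 0.05         # threshold from the text: CTR < 5%


def low_ctr_queries(csv_text: str) -> list[dict]:
    """Return high-impression, low-CTR queries, sorted by impressions (descending).

    Assumes hypothetical columns: query, clicks, impressions.
    CTR is recomputed as clicks / impressions to avoid parsing percent strings.
    """
    selected = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        impressions = int(row["impressions"])
        clicks = int(row["clicks"])
        if impressions <= MIN_IMPRESSIONS:
            continue
        ctr = clicks / impressions
        if ctr < MAX_CTR:
            selected.append({"query": row["query"], "impressions": impressions, "ctr": ctr})
    return sorted(selected, key=lambda r: r["impressions"], reverse=True)


# Made-up sample data, for illustration only.
SAMPLE = """query,clicks,impressions
loan without down payment,6,400
loan calculator,90,900
loan for students,1,80
loan with down payment,4,150
"""

for row in low_ctr_queries(SAMPLE):
    print(f'{row["query"]}: {row["impressions"]} impressions, CTR {row["ctr"]:.1%}')
```

Both thresholds are module-level constants so you can tune them to your site's traffic levels; the values in the text are starting points, not rules.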

Cross-reference with Answer the Public or AlsoAsked to map natural questions including these linking words. This is qualified traffic searching for precise answers.

What mistakes should you avoid in post-BERT semantic optimization?

First mistake: semantic keyword stuffing. Repeating "car without license" 15 times in 300 words adds nothing. BERT understands context — write naturally.
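If you want a quick sanity check for this kind of repetition, a simple phrase-density measure is enough. The sketch below is a hypothetical helper, not a Google metric, and there is no official threshold for what counts as too dense; it only makes the repetition visible.

```python
import re


def phrase_density(text: str, phrase: str) -> float:
    """Share of the text's words taken up by exact repetitions of `phrase`."""
    words = re.findall(r"[\w'-]+", text.lower())
    target = phrase.lower().split()
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the phrase appears word for word.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


# The example from the text: the phrase repeated 15 times in a 300-word passage.
text = "filler " * 255 + "car without license " * 15
print(f"{phrase_density(text, 'car without license'):.0%}")  # 15%
```

In that example the three-word phrase accounts for 45 of the 300 words, which no reader would mistake for natural writing.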

Second trap: neglecting thematic consistency. If your page covers "loan without down payment", all content must stay consistent with this specific constraint. No drifting toward standard loans.

- Audit Search Console queries containing prepositions and negations

- Identify generic pages receiving traffic on specific intentions

- Create dedicated sections or distinct pages based on semantic distance

- Use structured FAQs to address natural variations

- Integrate qualifiers in title tags and H2s when relevant

- Avoid cannibalization: minor variations don't always justify separate pages

- Test featured snippets: reformat to precisely match queries with prepositions

- Monitor bounce rates on generic pages: a high rate can indicate an intent mismatch

❓ Frequently Asked Questions

Has BERT replaced all of Google's older algorithms?

Should you create a separate page for each variation with a preposition?

Are traditional keyword research tools obsolete?

How can you check whether your content is optimized for BERT?

Does this evolution favor long or short content?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 27/06/2024
