Official statement

Other statements from this video 12 ▾

- □ Faut-il vraiment se préoccuper du crawl budget pour votre site ?

- □ Comment Google définit-il réellement le crawl budget et quels leviers peut-on actionner ?

- □ Google n'indexe-t-il vraiment qu'une fraction du web à cause de ses coûts de stockage ?

- □ Les requêtes POST plombent-elles vraiment votre crawl budget ?

- □ Le crawl budget d'une nouvelle section est-il hérité de la qualité du site principal ?

- □ Les codes 503 et 429 peuvent-ils vraiment réduire votre crawl budget ?

- □ Peut-on vraiment piloter son crawl budget depuis Google Search Console ?

- □ HTTP/2 améliore-t-il vraiment votre crawl budget ?

- □ Pourquoi vos URLs 'découvertes mais non crawlées' révèlent-elles un problème de fond ?

- □ Faut-il bloquer l'indexation de vos fichiers JavaScript pour optimiser le crawl budget ?

- □ Les 404 et robots.txt gaspillent-ils vraiment votre crawl budget ?

- □ Faut-il bloquer vos fichiers JavaScript décoratifs pour optimiser votre crawl budget ?

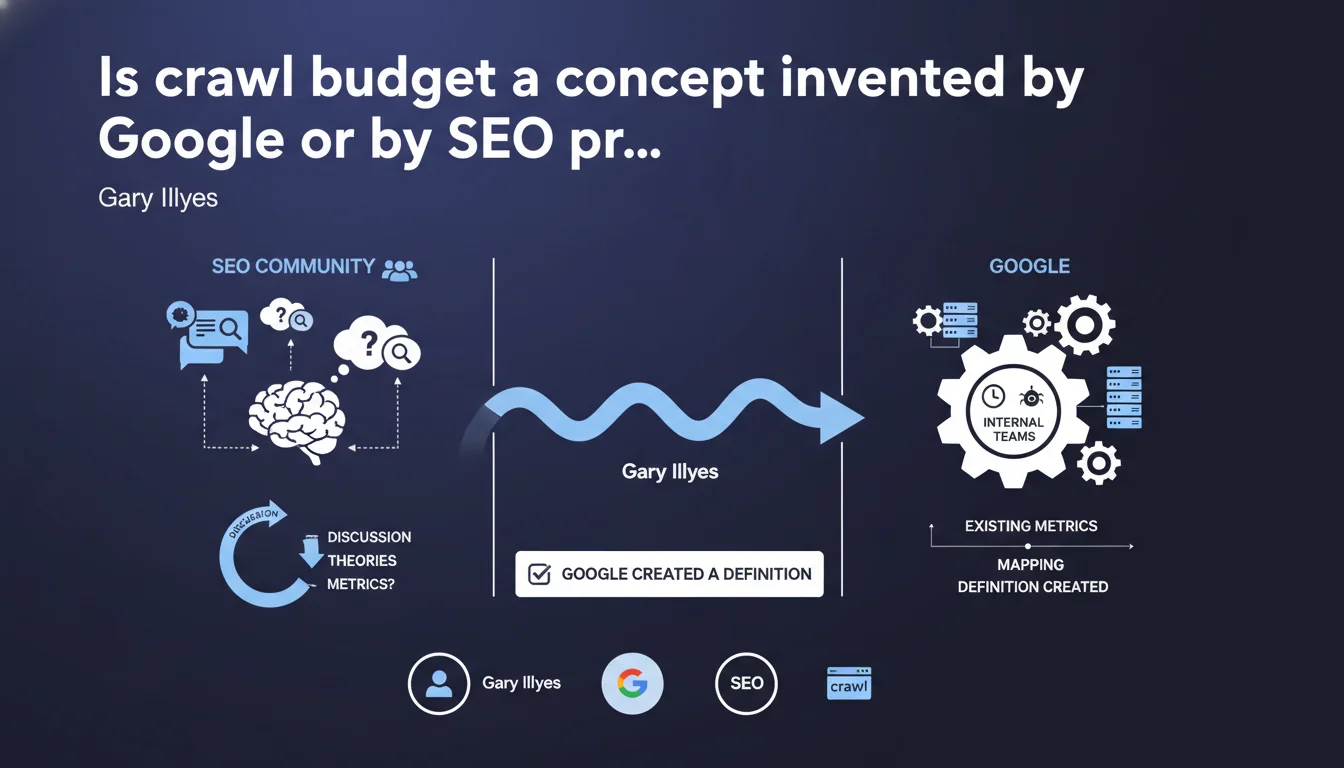

Google didn't have an official definition of crawl budget from the beginning. Faced with persistent discussions from the SEO community, the company eventually mapped existing internal metrics to create a definition that matched what practitioners were already calling "crawl budget". In other words: the concept existed in the field before being formalized by Google.

What you need to understand

Why did Google eventually define crawl budget?

For years, Google kept repeating that there was no crawl budget — at least not under that name. Yet SEOs were observing clear behaviors: certain pages were never crawled, others were crawled multiple times a day.

Faced with this disconnect, Google decided to formalize a definition internally. Multiple teams mapped existing metrics to give an official framework to what the community was already calling "crawl budget". It's not a new system — it's a belated recognition of a phenomenon observed in the field.

What does this reveal about Google's communication?

This statement illustrates a recurring pattern: Google denies the existence of a concept until it becomes impossible to ignore. Then, an official definition appears, often vague, which allows them to answer questions without really committing.

Crawl budget wasn't born in Mountain View's labs. It was theorized by practitioners, validated through observation, then co-opted by Google to prevent the community from creating its own parallel taxonomy.

What are the concrete implications for an SEO?

First, it confirms that field observations are worth as much as official statements — sometimes even more. If you notice that a site with 50,000 pages only sees 2,000 pages crawled per month, that's not a hallucination, it's a real problem.

Second, it reminds us that Google adapts its communication based on external pressure. The metrics already existed internally, but without a public definition, it was impossible to formulate clear recommendations. Now, at least we can communicate using a shared vocabulary.

- Crawl budget is not a myth — Google acknowledged it after years of denial.

- Internal metrics existed before the public definition.

- Formalization was triggered by pressure from the SEO community.

- This pattern repeats: Google denies, then admits, then defines in its own way.

- Field observations remain a more reliable diagnostic tool than vague statements.

SEO Expert opinion

Is this statement really an admission of helplessness?

Yes and no. On one hand, Gary Illyes acknowledges that Google had to adapt to SEO vocabulary rather than impose its own. That's rare. It shows that the community succeeded in forcing minimal transparency on a topic Google would have preferred to keep murky.

On the other hand, this "definition" remains a black box. We know crawl budget exists, that it depends on factors like site quality, publication velocity, and page popularity. But the thresholds? The precise algorithms? The exact levers to optimize it? Still in the fog. [To verify]: Google talks about "mapped metrics", but never specifies which ones or how they interact.

Were practitioners right before Google?

Absolutely. As early as the 2010s, SEOs like Patrick Stox or Christoph Cemper documented clear patterns: crawl slowdown on large poorly-structured sites, crawl explosion on news sites, server speed impact, crawl trap effects, duplicate content impact.

Google invented nothing — it labeled a phenomenon already documented. The only novelty is that you can now use the term "crawl budget" in a client email without sounding like a charlatan.

What to do in the face of this persistent opacity?

Let's be honest: Google's statement changes nothing about how you work. You continue analyzing logs, monitoring crawl frequency in Search Console, tracking orphaned pages and crawl traps. Empirical methods remain more reliable than official statements.

If Google refuses to give precise thresholds, it's probably because they're dynamic, vary by site, and are adjusted in real time. Or because revealing them would allow bad actors to exploit the system. Either way, you work with what you have: observations, hypotheses, tests.

Practical impact and recommendations

What do you actually need to do to optimize crawl budget?

Start by analyzing your server logs — that's the only way to know which pages Google actually crawls, how frequently, and which ones are ignored. If you don't have log access, Search Console will give you a partial view through the coverage report.

Next, track down crawl traps: infinite pagination, poorly managed facets, unnecessary URL parameters, massive duplicate content. Every page crawled for nothing eats up budget that could have been used to index a strategic page.

- Audit your server logs to identify crawled vs. ignored pages

- Block unnecessary pages via robots.txt or noindex (facets, internal search pages, duplicates)

- Optimize server speed and response time — a slow server slows down crawling

- Prioritize internal linking to strategic pages to guide Googlebot

- Clean up redirect chains and 404 errors that unnecessarily saturate crawl

- Use XML sitemap to signal important pages, without drowning Google in 100,000 URLs

What mistakes must you absolutely avoid?

Don't rely solely on Search Console to diagnose a crawl problem. It shows you what Google wants to show you, not the complete reality. Logs are the ground truth.

Another classic mistake: thinking an XML sitemap is enough to force crawling of a massive site. No. If your structure is poor, if your pages are slow, if your content is 80% duplicated, a sitemap won't save you. Crawl budget is a consequence of overall site quality, not an isolated lever.

How do you verify your site is well optimized?

Compare crawl frequency of strategic pages vs. secondary pages. If Google crawls your legal notice three times a day but ignores your flagship product page, there's a importance signal problem.

Also check the ratio of crawled pages to indexed pages. If Google crawls 10,000 pages per month but only indexes 1,000, then 9,000 pages are deemed unnecessary or low quality. That's a clear indicator of crawl budget waste.

In summary: Crawl budget exists, Google acknowledged it, but optimization levers haven't changed. Clean structure, unique content, server speed, strategic linking. Log analysis remains the reference diagnostic tool.

These technical optimizations can be complex to deploy alone, especially on large or poorly documented sites. If you find yourself stuck between fuzzy priorities and contradictory metrics, calling on a specialized SEO agency can save you months — and prevent costly mistakes that would tank your crawl budget for a long time.

❓ Frequently Asked Questions

Google a-t-il vraiment créé le concept de crawl budget ou l'a-t-il simplement reconnu ?

Le crawl budget affecte-t-il tous les sites de la même manière ?

Peut-on augmenter son crawl budget manuellement ?

La Search Console suffit-elle pour diagnostiquer un problème de crawl budget ?

Un sitemap XML peut-il compenser une mauvaise architecture de site ?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 25/08/2022

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.