Official statement

Other statements from this video 12 ▾

- □ Faut-il vraiment se préoccuper du crawl budget pour votre site ?

- □ Comment Google définit-il réellement le crawl budget et quels leviers peut-on actionner ?

- □ Le crawl budget est-il un concept inventé par Google ou par les SEO ?

- □ Les requêtes POST plombent-elles vraiment votre crawl budget ?

- □ Le crawl budget d'une nouvelle section est-il hérité de la qualité du site principal ?

- □ Les codes 503 et 429 peuvent-ils vraiment réduire votre crawl budget ?

- □ Peut-on vraiment piloter son crawl budget depuis Google Search Console ?

- □ HTTP/2 améliore-t-il vraiment votre crawl budget ?

- □ Pourquoi vos URLs 'découvertes mais non crawlées' révèlent-elles un problème de fond ?

- □ Faut-il bloquer l'indexation de vos fichiers JavaScript pour optimiser le crawl budget ?

- □ Les 404 et robots.txt gaspillent-ils vraiment votre crawl budget ?

- □ Faut-il bloquer vos fichiers JavaScript décoratifs pour optimiser votre crawl budget ?

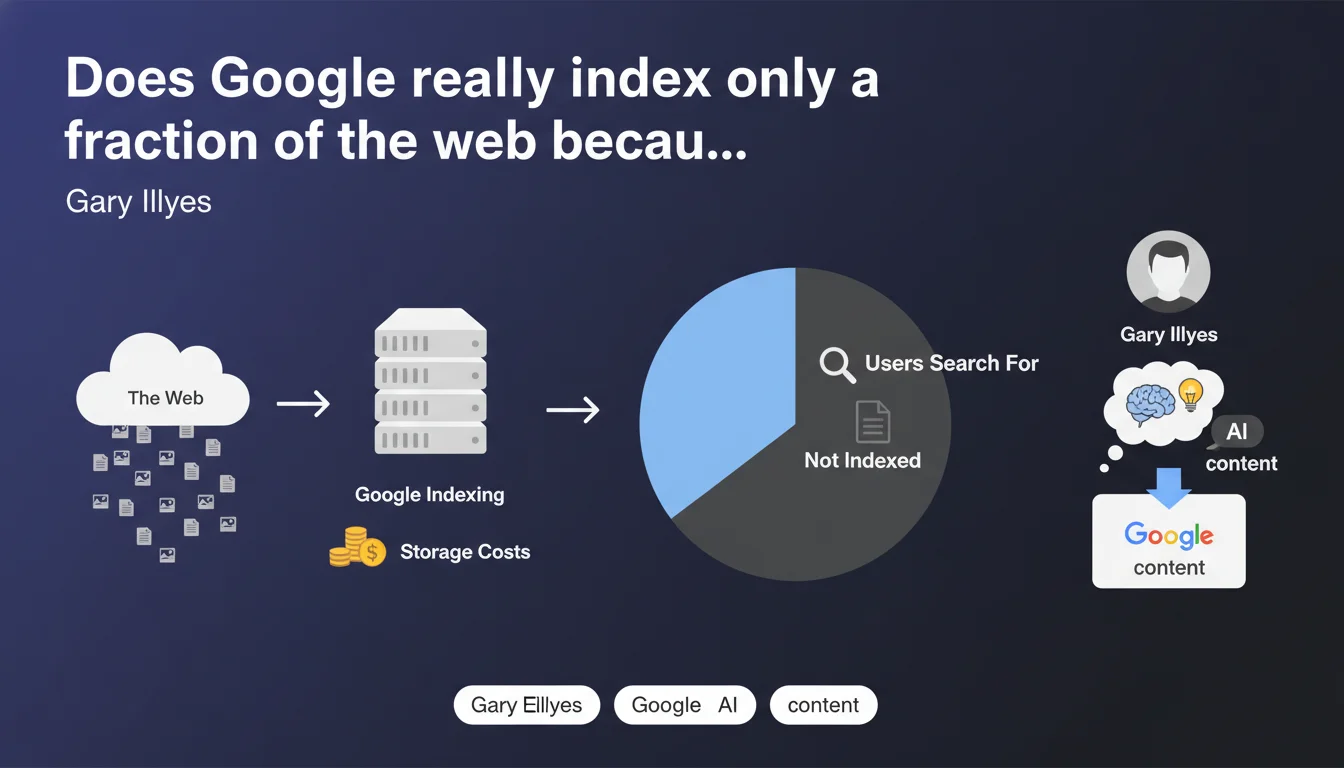

Google openly acknowledges that its storage capacity is not infinite and that indexing is expensive. The result: only content likely to be searched by users gets indexed. For SEO practitioners, this means optimizing the "desirability" of your pages in Google's eyes becomes just as critical as making them technically crawlable.

What you need to understand

Why does Google publicly admit its technical limitations?

Contrary to the image of an infrastructure without limits, Google acknowledges here that indexing has a real cost — hard drives, SSDs, memory, electricity, maintenance. This statement from Gary Illyes shatters the myth of an engine that would index everything by default.

The real insight: Google makes strategic indexing choices based on the probability that content will be searched. It's not about raw volume, but anticipated relevance.

What does this concretely change for a website?

If your content is not deemed "desirable" by Google — meaning: likely to generate clicks from search results — it may simply never enter the index. Even if your site is technically perfect.

This aligns with field observations: orphaned pages ignored, low-traffic-potential content excluded, entire sites overlooked despite regular crawling. Crawl budget does not guarantee indexation.

What signals does Google use to decide?

Google doesn't detail its exact criteria, but we can infer several axes: site popularity, content freshness, existing behavioral signals, thematic authority, internal and external links. Isolated content, without context, without links, without preexisting traffic has little chance of being prioritized.

- Google does not index all of the web, only what it deems potentially searchable

- Storage cost is a real economic factor that influences indexing decisions

- The technical ability to crawl content does not guarantee its indexation

- Sites must prove that their content deserves to be stored and served to users

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Absolutely. For years, we've seen technically accessible pages never get indexed. Google Search Console is full of URLs marked "Crawled, currently not indexed" — a status that perfectly illustrates what Illyes is saying.

The nuance: Google doesn't say how much this storage costs, or what percentage of the web is actually indexed. [To verify] We lack official figures on the actual crawl/indexation ratio. External estimates vary greatly.

In what cases does this rule not really apply?

Sites with strong authority — national media, established brands, government websites — benefit from far greater tolerance. Their pages are indexed massively, even those with low traffic potential.

For small sites or new entrants, it's a different story. Each page must justify its existence in the index. Let's be honest: Google doesn't apply the same selectivity rules to everyone.

What about content that deserves to be indexed but isn't?

That's where it gets tricky. If your content is objectively useful but ignored by Google, you must create artificial signals of desirability for it: strategic internal links, external mentions, direct traffic, social engagement. Anything that proves there's demand.

Practical impact and recommendations

What should you do concretely to maximize your chances of indexation?

First, prioritize ruthlessly. If you have 10,000 pages and Google indexes only 3,000, maybe 7,000 don't actually deserve to be indexed. Audit your content and delete or consolidate what adds nothing.

Next, focus your efforts on high-potential pages: dense internal linking to them, external mentions, regular updates, engagement signals. Google must understand that these pages are actively searched for or visited.

What mistakes should you avoid at all costs?

Stop believing that an XML sitemap guarantees indexation. Stop producing content in bulk without a distribution strategy. And most importantly, stop thinking Google has a moral obligation to index your site.

The classic pitfall: automatically generate thousands of product pages or fine-grained categories, then be surprised they don't get indexed. Google sees that as noise with no added value.

How do you verify your strategy is working?

Monitor the ratio between crawled and indexed URLs in Google Search Console. If the gap widens, it means Google considers your content non-priority. Also compare monthly evolution: a healthy site sees its indexation rate stable or growing.

- Regularly audit "Crawled, currently not indexed" pages and decide: improve, merge, or delete

- Strengthen internal linking to strategic pages Google is ignoring

- Remove weak or duplicate content that dilutes your crawl budget

- Create signals of user demand (direct traffic, external links, shares)

- Prioritize quality and specificity over page volume

- Monitor indexation rate evolution monthly in GSC

❓ Frequently Asked Questions

Google indexe-t-il vraiment moins de pages qu'avant à cause de ces contraintes ?

Si ma page est crawlée mais non indexée, est-ce définitif ?

Le coût de stockage explique-t-il la dépriorisation des sites de niche ?

Faut-il bloquer le crawl des pages qu'on ne veut pas indexer pour économiser le crawl budget ?

Cette logique s'applique-t-elle aussi aux images, vidéos et PDFs ?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 25/08/2022

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.