Official statement

Other statements from this video 11 ▾

- □ Pourquoi Google multiplie-t-il les fonctionnalités enrichies au détriment des liens bleus classiques ?

- □ Google retire-t-il des fonctionnalités de recherche uniquement en fonction des clics ?

- □ Faut-il vraiment optimiser les éléments invisibles ou peu cliqués sur une page ?

- □ Google cherche-t-il vraiment à satisfaire l'utilisateur ou à maximiser ses revenus publicitaires ?

- □ Google mesure-t-il la satisfaction de vos pages via les recherches répétées ?

- □ Comment Google choisit-il les fonctionnalités à prioriser dans son algorithme ?

- □ Google peut-il continuer d'exiger toujours plus de travail aux propriétaires de sites ?

- □ Faut-il se réjouir quand Google retire des fonctionnalités SEO ?

- □ Comment Google déploie-t-il réellement ses changements d'algorithme ?

- □ Google est-il obligé d'annoncer publiquement le retrait de toutes ses fonctionnalités SEO ?

- □ Google limite-t-il vraiment ses résultats à un seul par domaine ?

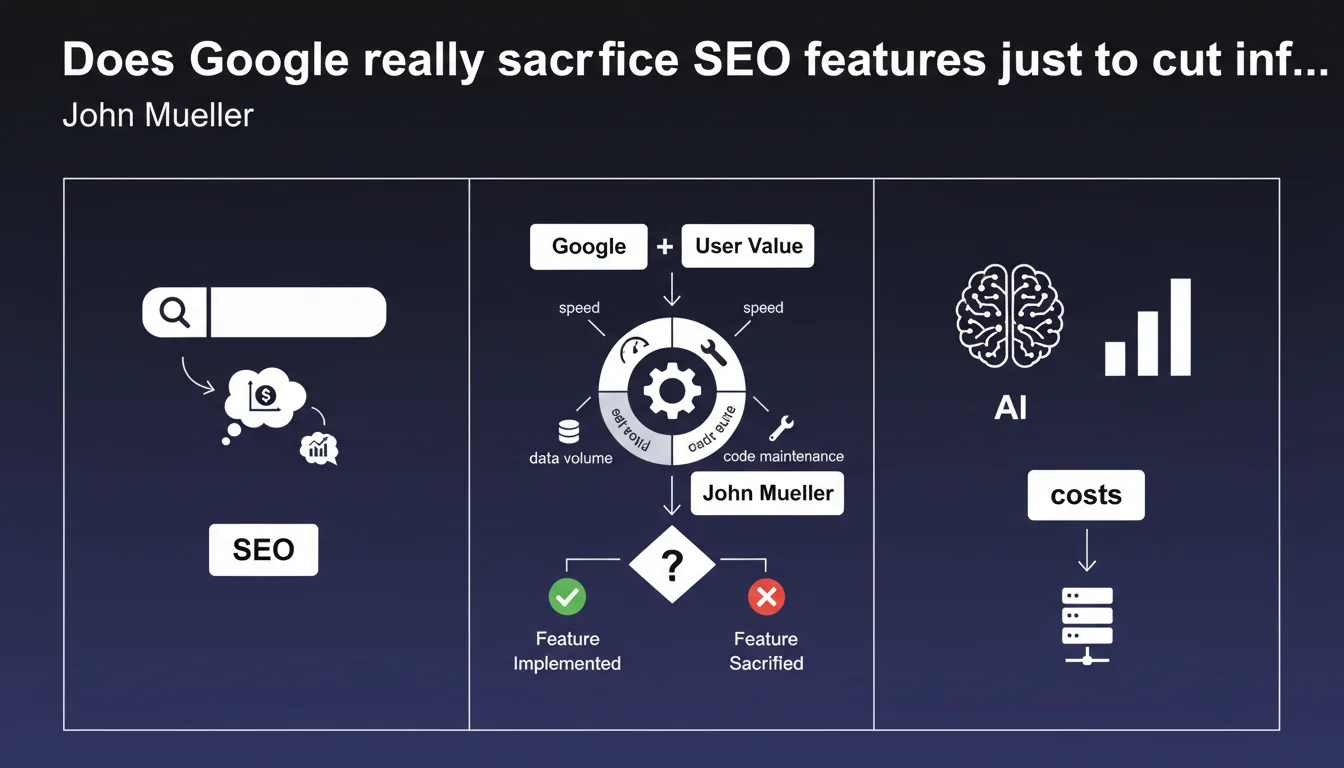

Google doesn't deploy every technically possible feature: each feature is evaluated based on its infrastructure cost (speed, storage, code maintenance). In other words, a feature might theoretically benefit users but get rejected if it weighs too heavily on Google's systems. For SEOs, this explains why certain recurring requests (more frequent crawling, instant indexing, etc.) keep falling on deaf ears.

What you need to understand

Why does Google refuse certain improvements that could actually be useful?

Mueller's statement reminds us of a reality often overlooked: Google is a company with infrastructure constraints. Each feature represents a tradeoff between user value and operational cost.

Concretely, three decisive criteria: result generation speed (acceptable latency for the user), volume of data to store (servers, replication, backups), and amount of code to maintain (technical debt, potential bugs, teams mobilized).

What does this mean for indexation and crawling?

This logic explains why Google limits crawl budget on poorly performing or redundant sites, why certain pages are never indexed despite being submitted, or why features like instant indexing were discontinued.

If storing or analyzing a page costs more than the value it brings to users, Google deprioritizes or ignores it. It's brutal, but consistent with their business model.

How does this constraint influence ranking algorithms?

Some SEO signals — even though they're relevant — aren't exploited because they require too much real-time computation. For example, deep semantic analysis of each long-tail query could improve results, but if it slows down the SERP by 200ms, it gets abandoned.

Google favors signals that are cheap to calculate and maintain: backlinks (static graph structure), domain popularity (aggregated metrics), Core Web Vitals (data already collected via Chrome). Complex signals, even if high-performing, get left behind if the cost-benefit equation tips the wrong way.

- User value vs infrastructure cost: each feature is an economic compromise, not just a technical one

- Limited crawl budget: Google doesn't crawl everything because it's expensive, not out of spite

- Ranking signals: algorithms favor metrics that are cheap to calculate and store

- Feature discontinuations: when cost exceeds value, Google cuts it (example: instant indexing)

- Technical debt: more code = more bugs, more teams, so reluctance to multiply features

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Absolutely. We've seen Google cutting back on tools or features deemed too expensive for years. The discontinuation of the instant indexing API for non-news sites, the drastic reduction in crawling for sites with massive duplicate content, the refusal to index certain page categories (poorly managed filters and facets) — it all makes sense through this accounting logic.

SEOs tend to think that if a feature is technically possible, Google should implement it. Except Google is a company that must justify its infrastructure spending. If a webmaster wants to be crawled more frequently, they first need to prove their site deserves this additional cost — through unique content, regular freshness, established popularity.

What nuances should we add to this purely economic view?

Be careful not to reduce everything to infrastructure ROI. Google also faces political, legal, and reputation constraints. Some features aren't deployed because they'd create privacy issues (excessive data collection), others because they'd open the door to manipulation (instant indexing = massive spam).

Technical cost is one filter among many, not the only one. But it's a powerful filter often underestimated by SEOs who only think in terms of "relevance" or "user experience".

In what cases does this rule not apply or get circumvented?

Google sometimes makes exceptions for strategic sectors. News sites get ultra-fast crawling and near-instant indexing — not because it's free, but because Google needs to be competitive in news against Twitter, TikTok, etc.

Similarly, large e-commerce sites (Amazon, eBay) are crawled massively despite millions of redundant pages. Why? Because their organic traffic generates ad clicks via Google Ads, so the crawling cost gets offset elsewhere in the ecosystem. [To verify]: this hypothesis is never officially confirmed, but it aligns with field observations.

Practical impact and recommendations

What should you concretely do to minimize your site's cost in Google's eyes?

Reduce noise: fewer useless pages = less wasted crawl, so better crawl budget allocation. Each indexed page needs to have clear user value and sufficient differentiation. E-commerce filters generating 10,000 near-identical URLs? Noindex or aggressive canonicalization.

Technically, this means rigorous SEO architecture work: robots.txt to block unnecessary directories, canonical tags to unify variants, clean XML sitemaps (no blocked or noindex URLs), optimized server response time to avoid slowing down crawls.

What mistakes should you avoid to not unnecessarily overload Google's infrastructure?

Don't multiply navigation facets without control. Each new URL parameter (sorting, filtering, pagination) multiplies the number of crawlable combinations. If Google detects that 90% of these pages add zero value, it will throttle your crawl budget — and your real strategic pages will suffer.

Another classic trap: cascading redirects (A → B → C) or chains of canonicals. Each hop requires an additional HTTP request, slows crawling, consumes server time. Google eventually abandons or deprioritizes.

How can you verify that your site aligns with this technical cost logic?

Analyze your server logs to identify crawled but non-strategic URLs. If Googlebot spends 60% of its time on noindex pages, orphaned PDFs, or session-based URLs, you have a crawl efficiency problem.

Use Search Console to spot pages in "Discovered, currently not indexed": often these are pages Google deems too expensive or worthless to index. If they're strategic, improve their content, internal linking, and speed. If they're peripheral, block them properly.

- Audit your architecture: how many truly unique pages vs how many crawlable URLs?

- Clean up e-commerce facets: noindex on non-strategic filter combinations

- Remove cascading redirects and canonical chains

- Optimize server response time (TTFB) to speed up crawling

- Analyze logs: identify crawl budget waste on useless URLs

- Check Search Console: discovered non-indexed pages = signal of low perceived value

- Prioritize internal linking to your strategic pages to direct crawling

❓ Frequently Asked Questions

Google refuse-t-il d'indexer des pages uniquement pour des raisons de coût ?

Pourquoi Google a-t-il supprimé l'API d'indexation instantanée pour les sites non-actualités ?

Comment savoir si mon site coûte trop cher à Google en termes de crawl ?

Est-ce que réduire le nombre de pages améliore forcément mon SEO ?

Google privilégie-t-il certains sites malgré leur coût technique élevé ?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 07/11/2023

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.