Official statement

Other statements from this video 11 ▾

- □ Pourquoi Google multiplie-t-il les fonctionnalités enrichies au détriment des liens bleus classiques ?

- □ Google retire-t-il des fonctionnalités de recherche uniquement en fonction des clics ?

- □ Faut-il vraiment optimiser les éléments invisibles ou peu cliqués sur une page ?

- □ Google cherche-t-il vraiment à satisfaire l'utilisateur ou à maximiser ses revenus publicitaires ?

- □ Google mesure-t-il la satisfaction de vos pages via les recherches répétées ?

- □ Comment Google choisit-il les fonctionnalités à prioriser dans son algorithme ?

- □ Google sacrifie-t-il certaines fonctionnalités SEO pour des raisons de coût technique ?

- □ Google peut-il continuer d'exiger toujours plus de travail aux propriétaires de sites ?

- □ Faut-il se réjouir quand Google retire des fonctionnalités SEO ?

- □ Google est-il obligé d'annoncer publiquement le retrait de toutes ses fonctionnalités SEO ?

- □ Google limite-t-il vraiment ses résultats à un seul par domaine ?

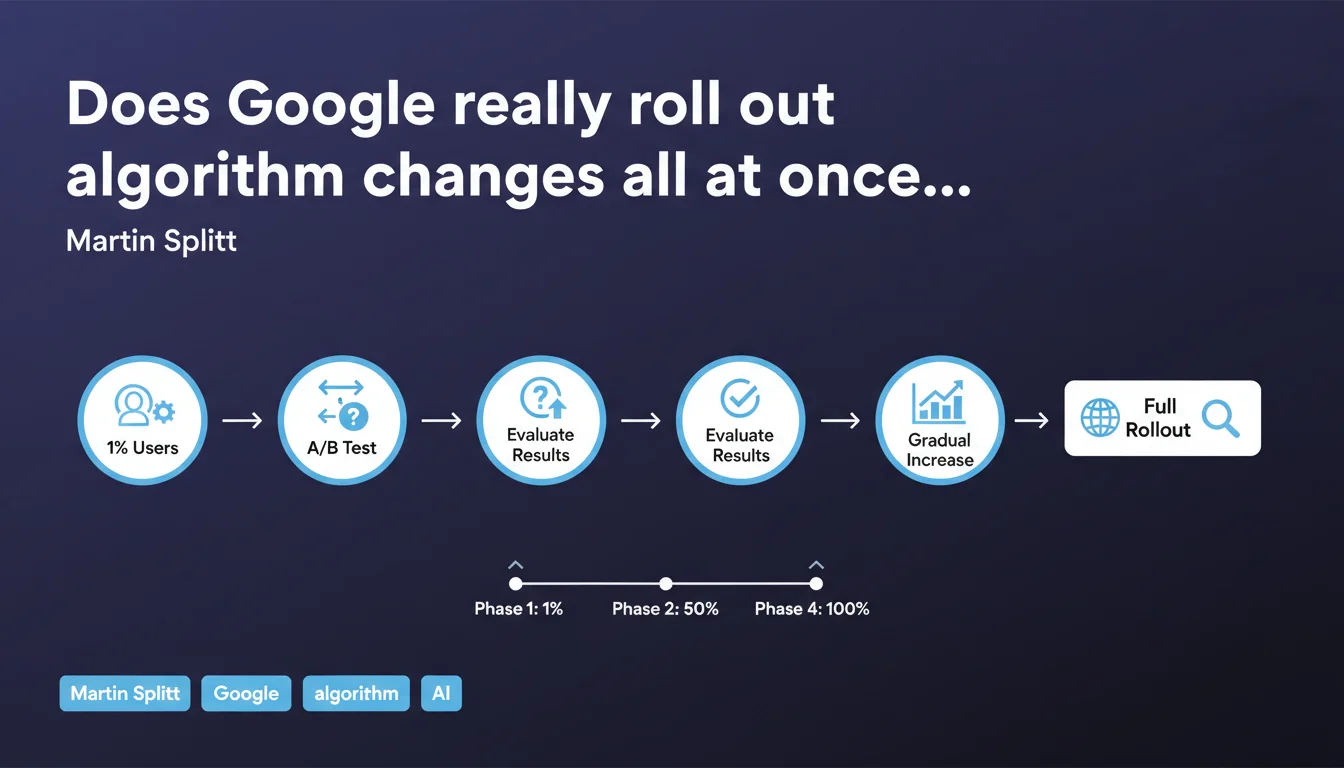

Google first tests any feature change on approximately 1% of its users, then gradually increases exposure based on results. This progressive A/B testing approach explains why some sites observe fluctuations before others, and why rollouts often span several weeks.

What you need to understand

Why does Google use progressive A/B testing?

The answer is simple: to limit risk. Rolling out an algorithmic change to 100% of traffic all at once is like playing Russian roulette with billions of daily queries.

By starting with 1% of traffic, Google can observe the real-world impact without jeopardizing the overall user experience. If a critical bug emerges or satisfaction metrics drop, the rollback only affects a tiny fraction of traffic. It's production testing, but controlled.
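The mechanics described above can be sketched in a few lines. This is only an illustration of how a staged rollout is commonly built, not Google's actual system: the stage percentages after 1%, the hash-based bucketing, and the names `STAGES`, `bucket`, `is_exposed` and `next_exposure` are all assumptions made for the example.

```python
import hashlib

# Hypothetical exposure stages: 1% first (from the statement), then wider steps (assumed).
STAGES = [0.01, 0.05, 0.25, 1.00]


def bucket(user_id: str, experiment: str) -> float:
    """Map a user to a stable value in [0, 1) by hashing the ID with the experiment name."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return int(digest[:8], 16) / 0x100000000


def is_exposed(user_id: str, experiment: str, exposure: float) -> bool:
    """A user sees the new version when their bucket falls below the current exposure."""
    return bucket(user_id, experiment) < exposure


def next_exposure(current: float, metric_delta: float, tolerance: float = -0.01) -> float:
    """Advance one stage if the satisfaction metric held up, otherwise roll back to 0."""
    if metric_delta < tolerance:
        return 0.0  # rollback: nobody sees the new version any more
    later = [stage for stage in STAGES if stage > current]
    return later[0] if later else current


if __name__ == "__main__":
    exposure = STAGES[0]
    print(is_exposed("user-42", "new-snippet-layout", exposure))
    print(next_exposure(exposure, metric_delta=0.002))
```

Because the bucket depends only on the user and the experiment name, a user exposed at 1% stays exposed when the share grows, which is what keeps the experience consistent for that user during the ramp-up.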

What does this actually mean for SEOs?

It explains why your rankings can fluctuate erratically over several days or weeks during an update. The searches that surface your site may be served by the new version, then the old one, then the new one again, depending on how the rollout progresses.

Position tracking tools aggregate data that can reflect multiple algorithm versions simultaneously. Position 5 in Paris and position 12 in Lyon is not necessarily geographic personalization; it may simply be two different user groups in the A/B test.
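To make the aggregation effect concrete, here is a small sketch under a simplifying assumption: the tracker's checks are split at random between the old and new versions, in proportion to the rollout exposure. The function name `expected_tracked_rank` is hypothetical, and positions 5 and 12 are reused from the example above.

```python
def expected_tracked_rank(rank_old: float, rank_new: float, exposure: float) -> float:
    """Average position a tracker would report if a share `exposure` of its checks hit the new version."""
    if not 0.0 <= exposure <= 1.0:
        raise ValueError("exposure must be between 0 and 1")
    return (1 - exposure) * rank_old + exposure * rank_new


if __name__ == "__main__":
    # Assumed exposure steps, for illustration only.
    for exposure in (0.01, 0.25, 0.50, 1.00):
        average = expected_tracked_rank(5, 12, exposure)
        print(f"{exposure:>4.0%} exposed -> average position {average:.2f}")
```

Under this assumption the reported average drifts gradually from the old position to the new one, even though no individual searcher ever sees an in-between ranking.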

Are all rollouts progressive?

Martin Splitt is talking about feature changes here, not necessarily all updates. Core Updates, for example, are announced as complete rollouts that take 1-2 weeks, but nothing rules out an undisclosed testing phase beforehand.

What's certain: this progressive approach has become the industry standard in tech. Assuming that Google deploys everything at once is naive.

- 1% initial exposure limits the impact of a critical bug

- Progressive increase based on user satisfaction metrics

- Multiple algorithm versions coexist during rollout

- Erratic fluctuations are normal during testing phases

- Not all changes are necessarily announced publicly

SEO Expert opinion

Does this statement match what we observe in the field?

Absolutely. SEOs have reported sawtooth fluctuations during major updates for years. A site gains 30% traffic one day, loses 15% the next, stabilizes a week later.

Progressive A/B testing is the explanation most consistent with these observations. It also explains why some sites appear impacted before an official Core Update announcement: part of their audience was probably in the initial 1% group.

What nuances should we add?

Google doesn't say how long this progressive ramp-up takes. A week? Three weeks? A month? [To verify]: there is no public data on this.

Another point: Martin Splitt mentions "results obtained," but which ones exactly? Dwell time? Bounce rate on the SERP? Clicks on organic results? User satisfaction measured how? Google remains vague, as always.

Finally, this approach means that for several days, two algorithm versions coexist. That is technically complex, but more importantly, it makes causal attribution difficult for SEOs. Is your traffic drop caused by the new algorithm, or is it just statistical noise because most searches still run on the old version?

Should you panic at every fluctuation?

No. And that's precisely the trap. If Google continuously tests micro-changes on sub-groups, your traffic will naturally oscillate without those movements meaning anything.

The real question becomes: how do you distinguish a fluctuation tied to a temporary A/B test from a lasting change? Honest answer: it's almost impossible in real-time. You need to wait for the rollout to complete before drawing conclusions.

Practical impact and recommendations

What should you do during a progressive rollout?

Don't change anything hastily. That's the golden rule. If your rankings drop on day 2 of a Core Update announced as a 14-day rollout, wait until day 15 before concluding anything.

Document the fluctuations, but resist the temptation to change your strategy in immediate reaction. You could be fixing a problem that doesn't exist or, worse, introducing a real problem while trying to counter a temporary fluctuation.
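The waiting rule can be written down as simple date arithmetic. This is a minimal sketch, assuming that day 1 is the announcement date and that the announced rollout length is accurate; `earliest_review_date` and `can_draw_conclusions` are hypothetical helper names, and the dates are made up for the example.

```python
from datetime import date, timedelta


def earliest_review_date(announced: date, rollout_days: int = 14) -> date:
    """First day after the announced rollout window, counting the announcement as day 1."""
    return announced + timedelta(days=rollout_days)


def can_draw_conclusions(today: date, announced: date, rollout_days: int = 14) -> bool:
    """True once the announced rollout window has fully elapsed."""
    return today >= earliest_review_date(announced, rollout_days)


if __name__ == "__main__":
    announced = date(2024, 3, 5)  # hypothetical announcement date
    print(earliest_review_date(announced))
    print(can_draw_conclusions(date(2024, 3, 6), announced))
```

If Google states that a rollout has finished later than announced, the completion date from that statement should replace the computed one.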

How should you interpret analytics data during this period?

Segment by 3-4 day chunks rather than day by day. Daily variations are too influenced by the rollout mechanics to be significant.
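As an illustration of this segmentation, the sketch below averages a daily series in blocks of three days. The function name `chunk_means` and the session figures are invented for the example; any analytics export with one value per day would work the same way.

```python
from statistics import mean


def chunk_means(daily_values: list[float], chunk_size: int = 3) -> list[float]:
    """Average consecutive days in blocks of `chunk_size`; a shorter final block is kept."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    return [
        mean(daily_values[start:start + chunk_size])
        for start in range(0, len(daily_values), chunk_size)
    ]


if __name__ == "__main__":
    # Hypothetical daily organic sessions during a rollout.
    sessions = [1000, 1300, 1105, 1080, 1120, 1090]
    print(chunk_means(sessions, chunk_size=3))
```

A single-day spike such as the second value gets absorbed into its block, so the comparison between blocks says more about the trend than about one noisy day.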

Also compare with direct competitors: if your entire sector fluctuates, it's probably the algorithm. If you're the only one, look elsewhere (seasonality, technical issues, competition).
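One way to make that comparison less subjective is to measure your change against the median change of your competitors. The sketch below is an assumption-laden illustration: the 10-point tolerance, the helper names `relative_change` and `classify_move`, and the sample figures are all hypothetical, and competitor data would typically come from a third-party visibility tool.

```python
from statistics import median


def relative_change(before: float, after: float) -> float:
    """Fractional change between two periods, e.g. -0.2 for a 20% drop."""
    return (after - before) / before


def classify_move(own_change: float, competitor_changes: list[float], tolerance: float = 0.10) -> str:
    """Label a change as sector-wide when it stays within `tolerance` of the competitors' median."""
    sector_change = median(competitor_changes)
    if abs(own_change - sector_change) <= tolerance:
        return "sector-wide"
    return "site-specific"


if __name__ == "__main__":
    own = relative_change(before=1000, after=800)
    competitors = [-0.18, -0.25, -0.15]  # hypothetical visibility changes
    print(classify_move(own, competitors))
```

A "site-specific" label does not prove anything by itself; it only indicates that seasonality, technical issues or competition deserve a look before blaming the algorithm.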

What errors should you absolutely avoid?

First error: drastically modifying your content mid-rollout. You create a confounding variable that makes post-update analysis impossible.

Second error: confusing correlation with causation. If your ranking drops from 8 to 12 on the day Google announces an update, the cause might not be the update; it could simply be a coincidence of timing.

- Wait until the rollout has fully completed before taking any major action (minimum 2 weeks post-announcement)

- Document fluctuations with timestamped screenshots and analytics exports

- Segment your analysis by 3-4 day periods, not day by day

- Compare your evolution to that of direct competitors for context

- Modify only one variable at a time if you decide to intervene post-update

- Monitor official Google announcements to know when a rollout is complete

❓ Frequently Asked Questions

Do all Google algorithm changes go through A/B tests?

How can I tell whether my site is in the test group or the control group?

How long does a progressive rollout usually last?

Do A/B tests explain why some sites see an impact before the official announcement?

Should I wait for the rollout to finish before analyzing the impact on my site?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 07/11/2023
