Official statement

Other statements from this video 11 ▾

- □ Pourquoi Google multiplie-t-il les fonctionnalités enrichies au détriment des liens bleus classiques ?

- □ Google retire-t-il des fonctionnalités de recherche uniquement en fonction des clics ?

- □ Faut-il vraiment optimiser les éléments invisibles ou peu cliqués sur une page ?

- □ Google cherche-t-il vraiment à satisfaire l'utilisateur ou à maximiser ses revenus publicitaires ?

- □ Google mesure-t-il la satisfaction de vos pages via les recherches répétées ?

- □ Google sacrifie-t-il certaines fonctionnalités SEO pour des raisons de coût technique ?

- □ Google peut-il continuer d'exiger toujours plus de travail aux propriétaires de sites ?

- □ Faut-il se réjouir quand Google retire des fonctionnalités SEO ?

- □ Comment Google déploie-t-il réellement ses changements d'algorithme ?

- □ Google est-il obligé d'annoncer publiquement le retrait de toutes ses fonctionnalités SEO ?

- □ Google limite-t-il vraiment ses résultats à un seul par domaine ?

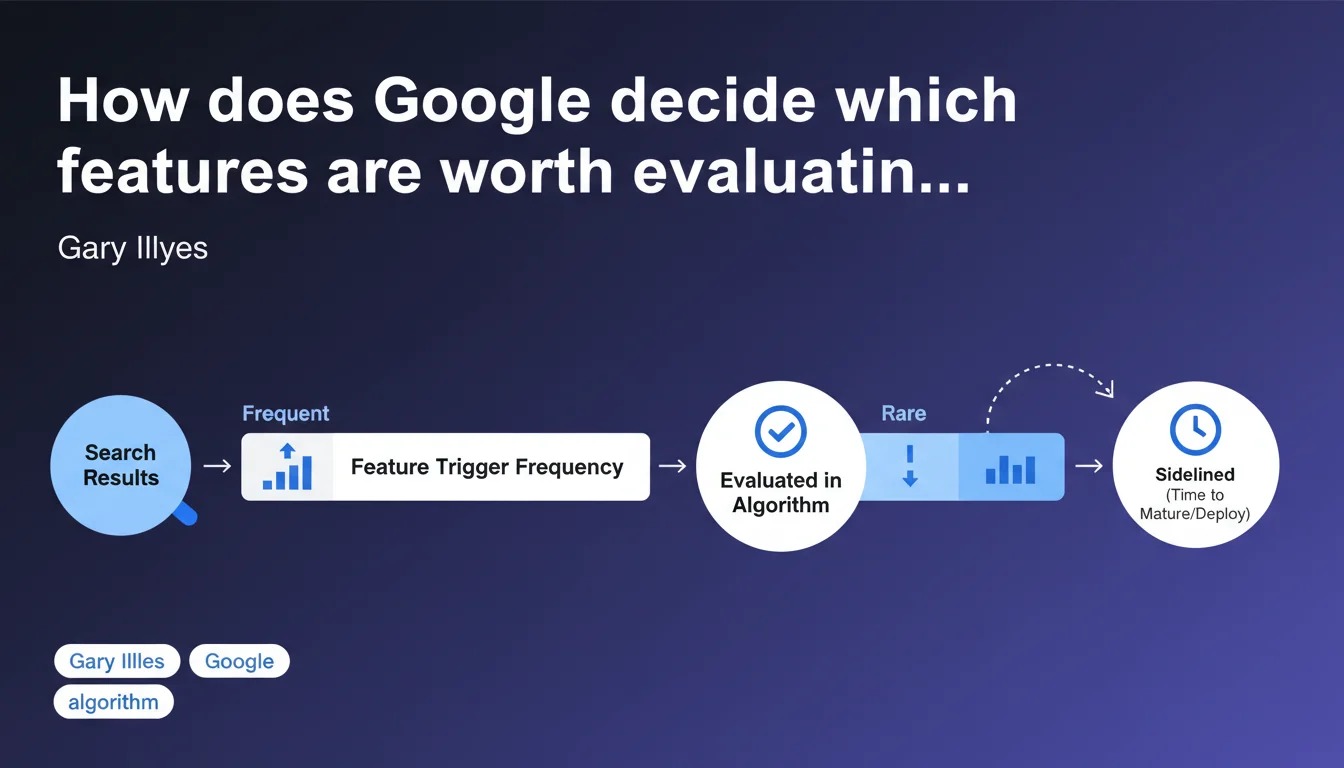

Google focuses its evaluation efforts on features and signals that trigger frequently in search results. Features rarely observed are set aside, giving them time to mature or be more widely deployed across websites. In concrete terms: if a technology is adopted by only 2% of sites, it won't be an algorithmic priority.

What you need to understand

What exactly does "prioritizing features" mean?

Google cannot evaluate every signal, tag, and technology available on the web with the same intensity. Its infrastructure forces strategic choices. Gary Illyes confirms that how often a feature appears determines its evaluation priority.

A feature can be a specific schema.org markup, an HTML attribute, a performance optimization technique, or even a type of structured content. If it appears rarely in the index, Google won't deploy significant algorithmic resources to analyze it thoroughly.

Why does this prioritization logic exist?

There are multiple reasons. First, the efficiency of evaluation systems: testing and refining an algorithm requires sufficient data volumes. It's impossible to validate a signal's relevance if only a few thousand pages use it out of billions indexed.

Second, business logic: Google optimizes for maximum impact on overall search result quality. Evaluating an ultra-marginal feature yields little compared with refining a signal present on 60% of pages.

What qualifies a feature as "rarely triggered"?

Google does not publish a specific threshold. A reasonable assumption (ours, not Google's) is an adoption rate below roughly 5-10% of the relevant index. A new type of structured data, an experimental attribute, an emerging web API: as long as deployment remains marginal, evaluation remains superficial.

Conversely, widely adopted features like Open Graph tags, Core Web Vitals, or Article markup receive constant and in-depth algorithmic attention.

- Frequency of appearance = decisive criterion for algorithmic prioritization

- Marginal features are set aside, not permanently ignored

- Google waits for adoption to reach critical mass before investing in evaluation

- This logic applies equally to technical tags and content formats

- Maturation time also allows Google to observe real-world usage and potential abuse

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Absolutely. We've seen for years that Google reacts proportionally to adoption. Take FAQ schema.org markup: ignored for a long time, it exploded in SERPs once a critical mass of sites implemented it. Massive adoption generally precedes algorithmic optimization, not the other way around.

The same logic applies to Core Web Vitals: Google waited for measurement tools and optimization practices to become mainstream before making them an official ranking signal. [To verify]: how far exactly does this "setting aside" go? Is a rarely triggered signal ignored completely, or simply under-weighted?

What risks does this approach carry for innovators?

This is the early adoption paradox. If you implement a new Google recommendation (say, a new type of structured data) as soon as it is announced, you'll likely see no immediate benefit. The effort is made up front, but the algorithmic return comes only months or years later, once adoption becomes widespread.

Admittedly, this is frustrating for early adopters. But it is also a safeguard: it prevents Google from overvaluing immature signals that could be easily manipulated. Maturation time helps separate legitimate use cases from abuse.

In which cases does this rule not apply?

Google can make exceptions for strategic features, even with limited initial adoption. AMP pages are the perfect example: maximum algorithmic priority from launch, well before massive adoption. Why? Because it was a Google project with clear business objectives.

Another exception: security signals. HTTPS was far from universal when Google made it a ranking signal. Some priorities override purely statistical logic: security, accessibility, and critical user experience can justify priority evaluation even with limited adoption.

Practical impact and recommendations

Should you wait for massive adoption before implementing new features?

No, but adjust your expectations. Implementing new markup recommended by Google remains relevant for future compatibility and overall technical quality. Simply don't count on an immediate visibility boost. The investment is long-term.

Prioritize widely adopted and documented features known to have impact: classic structured data (Article, Product, BreadcrumbList), Core Web Vitals optimization, standard semantic markup. That's where effort pays off today.
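As an illustration of the "classic" structured data mentioned above, here is a minimal sketch that builds a schema.org BreadcrumbList as JSON-LD. The helper names (`breadcrumb_jsonld`, `to_script_tag`) and the example.com URLs are assumptions made for this example, not part of any Google tooling.

```python
import json


def breadcrumb_jsonld(trail):
    """Build a schema.org BreadcrumbList from ordered (name, url) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }


def to_script_tag(data):
    """Serialize the dict as a JSON-LD <script> element."""
    payload = json.dumps(data, ensure_ascii=False)
    # Escape "</" so a string value cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


# Hypothetical breadcrumb trail; the URLs are placeholders.
trail = [
    ("Home", "https://example.com/"),
    ("Guides", "https://example.com/guides/"),
    ("Structured data", "https://example.com/guides/structured-data/"),
]
markup = breadcrumb_jsonld(trail)
print(to_script_tag(markup))
```

The generated tag can be pasted into the Rich Results Test to confirm that the markup is recognized before deploying it.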

How do you identify what's really a priority for Google right now?

Observe the rich results available in your niche. If Google displays review carousels, enriched FAQs, structured recipes — that means these features are actively evaluated. Align yourself with these formats.

Check Search Console reports to see which types of structured data Google recognizes and uses on your site. Errors reported on certain markup types indicate Google is crawling and actively evaluating them.

Analyze Google Search Central docs: features with detailed documentation, dedicated testing tools, and presence in Search Console reports are the ones that really matter.
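Before comparing against Search Console, it can help to list which structured data types a page actually declares. The sketch below uses only the Python standard library to collect JSON-LD blocks and report their `@type` values. The class and function names are assumptions for this example, and the check says nothing about how Google evaluates the markup.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def declared_types(html):
    """Return the sorted set of @type values found in a page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    types = set()
    for raw in collector.blocks:
        try:
            stack = [json.loads(raw)]
        except json.JSONDecodeError:
            continue  # skip malformed blocks instead of failing the audit
        while stack:
            node = stack.pop()
            if isinstance(node, list):
                stack.extend(node)
            elif isinstance(node, dict):
                declared = node.get("@type")
                if isinstance(declared, str):
                    types.add(declared)
                elif isinstance(declared, list):
                    types.update(t for t in declared if isinstance(t, str))
                stack.extend(node.values())
    return sorted(types)


# Hypothetical page fragment used only to demonstrate the function.
sample = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article", "headline": "Demo",
 "author": {"@type": "Person", "name": "A. Writer"}}
</script>
</head><body></body></html>"""
print(declared_types(sample))  # ['Article', 'Person']
```

Running this over the pages that rank for your target queries gives a rough picture of which markup types competitors have adopted.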

What should you concretely do with this information?

- Audit features already widely adopted in your sector that you haven't yet implemented

- Implement structured data matching the rich results displayed for your target queries

- Prioritize optimizing Core Web Vitals signals, which have been widely evaluated since their general rollout

- Test your implementations via official Google tools (Rich Results Test, PageSpeed Insights)

- Don't neglect emerging best practices, but don't prioritize them over fundamentals

- Monitor the evolution of adoption for new features in your sector

- Regularly reassess your technical priorities based on ecosystem changes
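For the Core Web Vitals item in the checklist above, a small sketch can turn measured values into the usual "good / needs improvement / poor" ratings. The thresholds are the publicly documented ones, assessed at the 75th percentile of page loads (INP replaced FID as a Core Web Vital in 2024). The sample measurements and the `rate` helper are hypothetical.

```python
# (good_max, needs_improvement_max) per metric, applied to p75 values.
THRESHOLDS = {
    "LCP": (2500, 4000),  # Largest Contentful Paint, milliseconds
    "INP": (200, 500),    # Interaction to Next Paint, milliseconds
    "CLS": (0.1, 0.25),   # Cumulative Layout Shift, unitless
}


def rate(metric, p75):
    """Classify a 75th-percentile value for one Core Web Vitals metric."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if p75 <= good_max:
        return "good"
    if p75 <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Hypothetical field data, e.g. copied by hand from a PageSpeed Insights report.
field_data = {"LCP": 3100, "INP": 180, "CLS": 0.31}
report = {metric: rate(metric, value) for metric, value in field_data.items()}
print(report)
```

A report like this makes it easier to decide which metric to address first on a given template.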

The optimal strategy: solid foundations first (what Google already evaluates at scale), monitoring and anticipation second (progressive implementation of promising innovations). Don't sacrifice the fundamentals to chase every new trend.

These trade-offs between immediate optimizations and long-term investments can be complex, especially when technical resources are limited. Prioritization, ROI measurement, and compliant implementation often call for specialized support to avoid pitfalls and get the most out of each action.

❓ Frequently Asked Questions

Does Google completely ignore features with low adoption?

At what adoption threshold does a feature become a priority?

Is it worth being an early adopter of new Google features?

How can you tell whether a feature is currently being evaluated by Google?

Does this logic also apply to negative signals such as penalties?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 07/11/2023
