Official statement

What you need to understand

What is crawl budget according to Google?

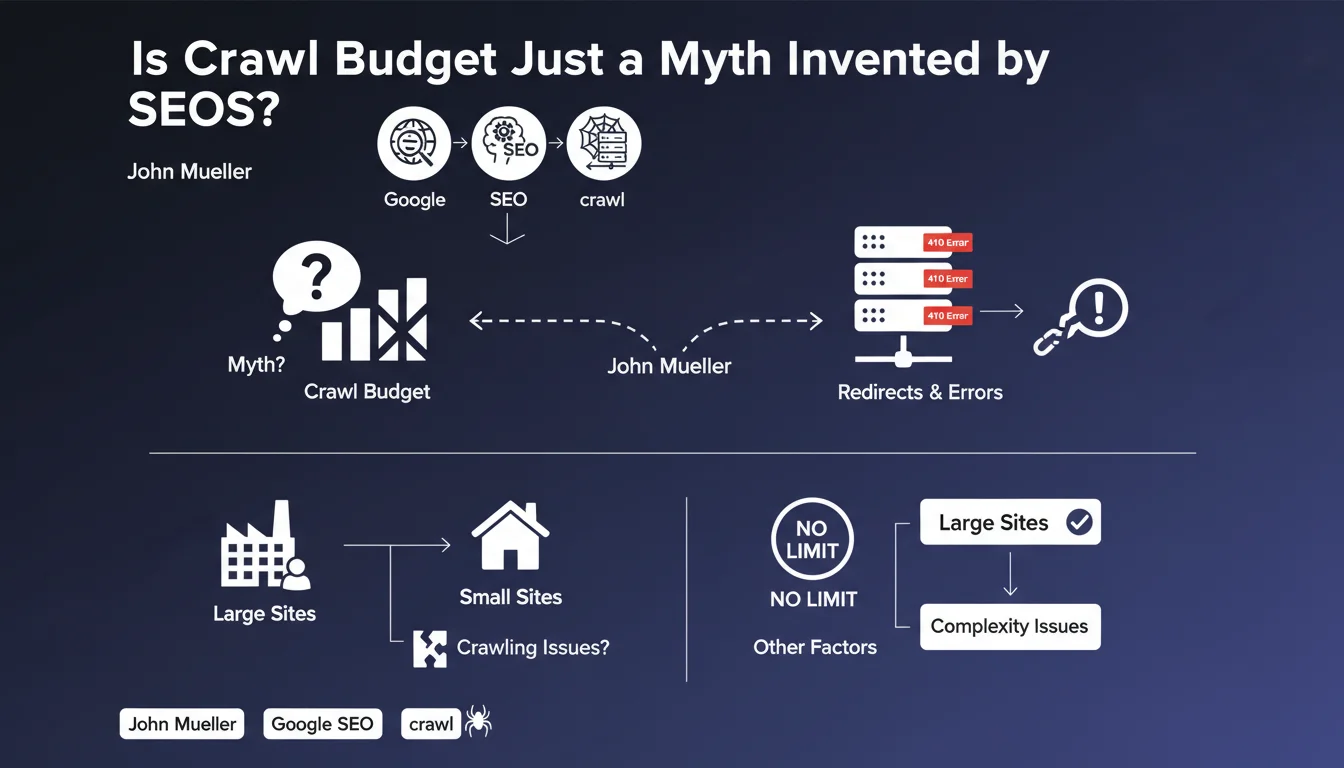

John Mueller of Google has addressed a notion widely held in the SEO community. In his view, crawl budget is more a concept popularized by SEO professionals than a strict technical limit imposed by Google, and there is no fixed quota determining how many pages Googlebot can crawl on a site.

This statement qualifies the usual view of crawling: Google does not impose an arbitrary ceiling, but adjusts its crawling according to how valuable it considers the content and how well the server can respond.

Why does Google crawl some sites less frequently?

If Googlebot crawls a site sparingly, it is not necessarily because a budget has been used up. For smaller sites, it generally means that Google does not consider it worthwhile to crawl more pages.

This can reflect content that adds little value, low site popularity, or weak quality signals. Crawl optimization remains mainly a concern for large sites with millions of pages.

What is Google's position on 301 redirects to 410 errors?

Mueller confirmed that redirecting a URL with a 301 to a URL that returns a 410 (Gone) status is a perfectly acceptable practice for Google. This sequence does not exhaust a hypothetical crawl budget.
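A minimal sketch of the pattern, written as a Python WSGI application using only the standard library: a retired URL answers with a 301 whose target answers 410 Gone. The paths and the `REDIRECTS`/`GONE` tables are hypothetical; on a real site these rules usually live in the web server or CMS configuration.

```python
from typing import Callable, Iterable

# Hypothetical tables: a retired URL that now points elsewhere, and a URL
# that has been removed for good.
REDIRECTS = {"/old-product": "/discontinued/old-product"}
GONE = {"/discontinued/old-product"}


def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
    """WSGI app: 301 for redirected URLs, 410 for removed ones, 200 otherwise."""
    path = environ.get("PATH_INFO", "/")
    if path in REDIRECTS:
        # Permanent redirect; clients (including Googlebot) follow the Location header.
        start_response("301 Moved Permanently", [("Location", REDIRECTS[path])])
        return [b""]
    if path in GONE:
        # 410 Gone: the resource is no longer available and the removal is likely permanent.
        start_response("410 Gone", [("Content-Type", "text/plain; charset=utf-8")])
        return [b"This page has been permanently removed."]
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"OK"]
```

To try it locally, you could serve `app` with `wsgiref.simple_server.make_server("", 8000, app)` and inspect both responses with `curl -I`.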

That said, other factors do influence crawling: server response speed, technical stability, and Google's intent not to overload the site's infrastructure. These practical constraints naturally limit crawl intensity.

- Crawl budget is not a strict limit imposed by Google

- Crawl issues on small sites often reveal a lack of perceived interest by Google

- 301 → 410 chains are technically acceptable

- Server speed and stability genuinely influence crawling

- Crawl optimization matters most for large sites

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Mueller's statement does match what we observe in the field, but it needs careful interpretation. In practice, even without an official fixed limit, crawl volumes level off at ceilings that vary from site to site.

These ceilings result from a combination of factors: domain authority, content freshness, page popularity, and server constraints. Saying there is no crawl budget in the strict sense does not mean there are no practical limits.

What important nuances should be added to this position?

The main nuance is the distinction between small and large sites. For a site with a few hundred pages, crawl budget is largely irrelevant: Google can easily crawl the whole site on a regular basis.

For e-commerce sites with tens of thousands of products or media sites with millions of articles, on the other hand, crawl management becomes crucial. Googlebot does allocate different levels of resources depending on the site's strategic importance.

In which cases does crawl management remain strategic?

For marketplaces, sites with complex faceted navigation, or platforms that generate dynamic content, crawl optimization remains essential. The goal is less to stay within a budget than to make sure high-value pages are crawled first.

Sites with a lot of duplicate content, many redirect chains, or orphan pages need to structure their architecture so that Googlebot is guided toward strategic content. The robots.txt file, well-maintained XML sitemaps, and internal linking remain the main levers.
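One way to find orphan pages and overly deep pages is a breadth-first search (BFS) over the internal-link graph, starting from the homepage: the first time BFS reaches a page gives its minimum click depth, and expected URLs that are never reached are orphans. This is a sketch assuming you already have the link graph (for example from your own crawler export) and a list of expected URLs (for example from your sitemap); `EXAMPLE_LINKS`, `EXAMPLE_KNOWN_URLS`, and `audit_architecture` are illustrative names. The default `max_depth=3` mirrors the three-click guideline used in this article.

```python
from collections import deque

# Hypothetical internal-link graph (page -> pages it links to) and the
# full set of URLs you expect to be indexed.
EXAMPLE_LINKS = {
    "/": ["/category", "/about"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/category/page-3"],
    "/category/page-3": ["/product-42"],
}
EXAMPLE_KNOWN_URLS = {
    "/", "/about", "/category", "/category/page-2",
    "/category/page-3", "/product-42", "/old-landing",
}


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage: clicks needed to reach each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS = shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_architecture(links, home, known_urls, max_depth=3):
    """Return (orphan pages, pages deeper than max_depth), both sorted."""
    depths = click_depths(links, home)
    orphans = sorted(url for url in known_urls if url not in depths)
    too_deep = sorted(url for url, depth in depths.items() if depth > max_depth)
    return orphans, too_deep


if __name__ == "__main__":
    orphans, too_deep = audit_architecture(EXAMPLE_LINKS, "/", EXAMPLE_KNOWN_URLS)
    print("Orphan pages:", orphans)              # ['/old-landing']
    print("More than 3 clicks deep:", too_deep)  # ['/product-42']
```

Orphans can then be linked from relevant hubs, and deep pages brought closer to the homepage through category or hub pages.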

Practical impact and recommendations

What should you do concretely to optimize your site's crawl?

Rather than focusing on a hypothetical quota, concentrate on making Googlebot's job easier. Ensure your server responds quickly and stably, particularly during crawl peaks.

Optimize your internal linking so that important pages are reachable within 3 clicks of the homepage. Use the robots.txt file to block sections with no SEO value (member areas, URLs with sorting parameters, print versions).
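Before deploying robots.txt rules, it helps to check which URLs they actually block. This sketch uses Python's standard `urllib.robotparser` on a hypothetical robots.txt that blocks a member area (`/account/`) and print versions (`/print/`). Caveat: this parser only does plain prefix matching and applies the first rule that matches, whereas Google supports `*` and `$` wildcards and applies the most specific matching rule. Rules targeting sorting parameters (such as `Disallow: /*?sort=`) should therefore be checked with Google's own tooling, for example its open-source robots.txt parser, rather than with this snippet.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking a member area and print versions.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Disallow: /print/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content from a string instead of fetching it over HTTP."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    for url in (
        "https://www.example.com/products/shoes",        # expected: allowed
        "https://www.example.com/account/orders",        # expected: blocked
        "https://www.example.com/print/products/shoes",  # expected: blocked
    ):
        print(url, "allowed" if rules.can_fetch("Googlebot", url) else "blocked")
```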

Submit a clean XML sitemap containing only indexable, high-value URLs. Do not include noindex pages, redirects, or low-quality content that would dilute Google's attention.
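The technical part of this filtering can be automated from your own crawl data. This sketch assumes a hypothetical `Page` record (URL, HTTP status, noindex flag, declared canonical) and keeps only URLs that return 200, are indexable, and are their own canonical, then serializes them in the sitemaps.org format. It cannot judge content quality; excluding low-value pages remains an editorial decision.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    """Hypothetical record produced by your own site crawl."""
    url: str
    status: int      # HTTP status code returned by the URL
    noindex: bool    # True if a noindex directive was found
    canonical: str   # canonical URL declared by the page


def belongs_in_sitemap(page: Page) -> bool:
    # Keep only URLs that answer 200, are indexable, and are their own canonical.
    return page.status == 200 and not page.noindex and page.canonical == page.url


def build_sitemap(pages: list[Page]) -> str:
    """Return a <urlset> document listing only sitemap-worthy URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if belongs_in_sitemap(page):
            url_el = ET.SubElement(urlset, "url")
            ET.SubElement(url_el, "loc").text = page.url
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    pages = [
        Page("https://www.example.com/a", 200, False, "https://www.example.com/a"),
        Page("https://www.example.com/b", 301, False, "https://www.example.com/b"),
        Page("https://www.example.com/c", 200, True, "https://www.example.com/c"),
        Page("https://www.example.com/d", 200, False, "https://www.example.com/a"),
    ]
    print(build_sitemap(pages))  # only /a is listed
```

To write a file that starts with an XML declaration, the same `urlset` element can be passed to `ET.ElementTree(...).write("sitemap.xml", encoding="utf-8", xml_declaration=True)`.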

What technical errors should you absolutely avoid?

Avoid long redirect chains, even though the 301 → 410 combination is acceptable. Always redirect directly to the final destination to save crawl resources.
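If your redirect rules can be exported as a source → target mapping (an assumption; the format depends on your server or CMS), collapsing chains is a small graph walk: follow each target until it no longer redirects, and fail loudly on loops. The function name `flatten_redirects` is illustrative. The final destination may itself return 410, which, as noted above, is acceptable.

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every redirected URL straight at its final destination.

    `redirects` maps source URL -> target URL (hypothetical export of your
    redirect rules). Raises ValueError on a redirect loop.
    """
    flat = {}
    for source, target in redirects.items():
        seen = {source}
        while target in redirects:  # target redirects again: keep following
            if target in seen:
                raise ValueError(f"Redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


if __name__ == "__main__":
    chained = {"/old": "/older", "/older": "/new", "/legacy": "/old"}
    print(flatten_redirects(chained))
    # {'/old': '/new', '/older': '/new', '/legacy': '/new'}
```

The flattened mapping can then be written back into your redirect configuration so that every old URL reaches its destination in a single hop.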

Avoid redirect loops and server errors (5xx) that consume crawl time without providing value. Regularly monitor Search Console to identify crawl issues reported by Google.

Don't accidentally block important resources (CSS, JavaScript) that would prevent Google from correctly understanding your pages. Modern rendering requires access to these files.

How can you verify that Google is crawling your site effectively?

Use the Crawl Stats report in Google Search Console to monitor total crawl requests, total download size, and average response time over time, along with breakdowns by response code and file type.

Analyze server log files to identify crawl patterns, sections most visited by Googlebot, and potential resource waste on non-strategic pages.
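A minimal log-analysis sketch: it parses lines in the common Apache/Nginx "combined" format, keeps requests whose user agent contains `Googlebot`, and counts them by top-level section and by status class, which quickly shows crawl activity spent on parameter URLs or error pages. The log format, the sample lines, and the function names are assumptions; adapt the regular expression to your server's actual format. User-agent strings can be spoofed, so Google recommends confirming real Googlebot traffic with a reverse DNS lookup (the host should belong to a Google domain such as googlebot.com or google.com) followed by a forward lookup; that step is not included here.

```python
import re
from collections import Counter

# "Combined" log format: IP, identity, user, [time], "request", status, size,
# "referrer", "user agent".
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical sample lines (documentation IP ranges).
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'192.0.2.10 - - [10/Mar/2024:10:00:00 +0000] "GET /products/shoes HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Mar/2024:10:00:05 +0000] "GET /search?sort=price HTTP/1.1" 200 2048 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Mar/2024:10:00:09 +0000] "GET /search?sort=name HTTP/1.1" 503 0 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2024:10:01:00 +0000] "GET /products/shoes HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]


def googlebot_summary(lines):
    """Count Googlebot hits per top-level section and per status class (2xx, 5xx...)."""
    sections, statuses = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        # Filters on the declared user agent only; it does not verify the IP.
        if not match or "Googlebot" not in match["agent"]:
            continue
        path = match["path"].split("?", 1)[0]             # drop the query string
        first_segment = path.strip("/").split("/", 1)[0]  # "" for the homepage
        sections["/" + first_segment] += 1
        statuses[match["status"][0] + "xx"] += 1
    return sections, statuses


if __name__ == "__main__":
    sections, statuses = googlebot_summary(SAMPLE_LOG)
    print("By section:", sections.most_common())
    print("By status class:", statuses.most_common())
```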

- Check server response speed (ideally < 200ms)

- Audit internal link architecture and reduce depth

- Clean up XML sitemap by keeping only strategic URLs

- Block sections without SEO value via robots.txt

- Eliminate redirect chains and 404/5xx errors

- Regularly monitor Search Console crawl statistics

- Analyze server logs to identify inefficiencies

- Improve overall site loading speed

- Prioritize indexing of high business-value pages
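To check the response-time item against real data, it helps to summarize a batch of measured response times rather than a single request: the 95th percentile exposes slow spikes (for example during crawl peaks) that an average hides. This sketch assumes you already collect per-request timings in milliseconds, for instance from a response-time field in your access logs or from monitoring; the 200 ms threshold is the target from the checklist above, and `response_time_report` is an illustrative name.

```python
import statistics


def response_time_report(samples_ms, threshold_ms=200.0):
    """Median, 95th percentile, and share of requests slower than threshold_ms."""
    samples = list(samples_ms)
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = statistics.quantiles(samples, n=20, method="inclusive")[-1]
    slow = sum(1 for t in samples if t > threshold_ms)
    return {
        "median_ms": statistics.median(samples),
        "p95_ms": p95,
        "share_over_threshold": slow / len(samples),
    }


if __name__ == "__main__":
    samples = [100, 120, 150, 180, 250]  # hypothetical timings in ms
    print(response_time_report(samples))
    # {'median_ms': 150, 'p95_ms': 236.0, 'share_over_threshold': 0.2}
```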

Crawl budget is not a strict limit imposed by Google, but rather a dynamic balance between the perceived interest of content and technical constraints. For small sites, crawl issues generally reveal a lack of value detected by Google.

Crawl optimization nevertheless remains strategic for large sites: improving server speed, structuring the architecture, cleaning up technical errors, and prioritizing the crawling of important pages are the real best practices.

These technical optimizations often require in-depth expertise and an overall view that is hard to develop on your own. For complex or large-scale sites, working with a specialized SEO agency gives you personalized support, advanced technical audits, and recommendations adapted to your specific context.