Official statement

What you need to understand

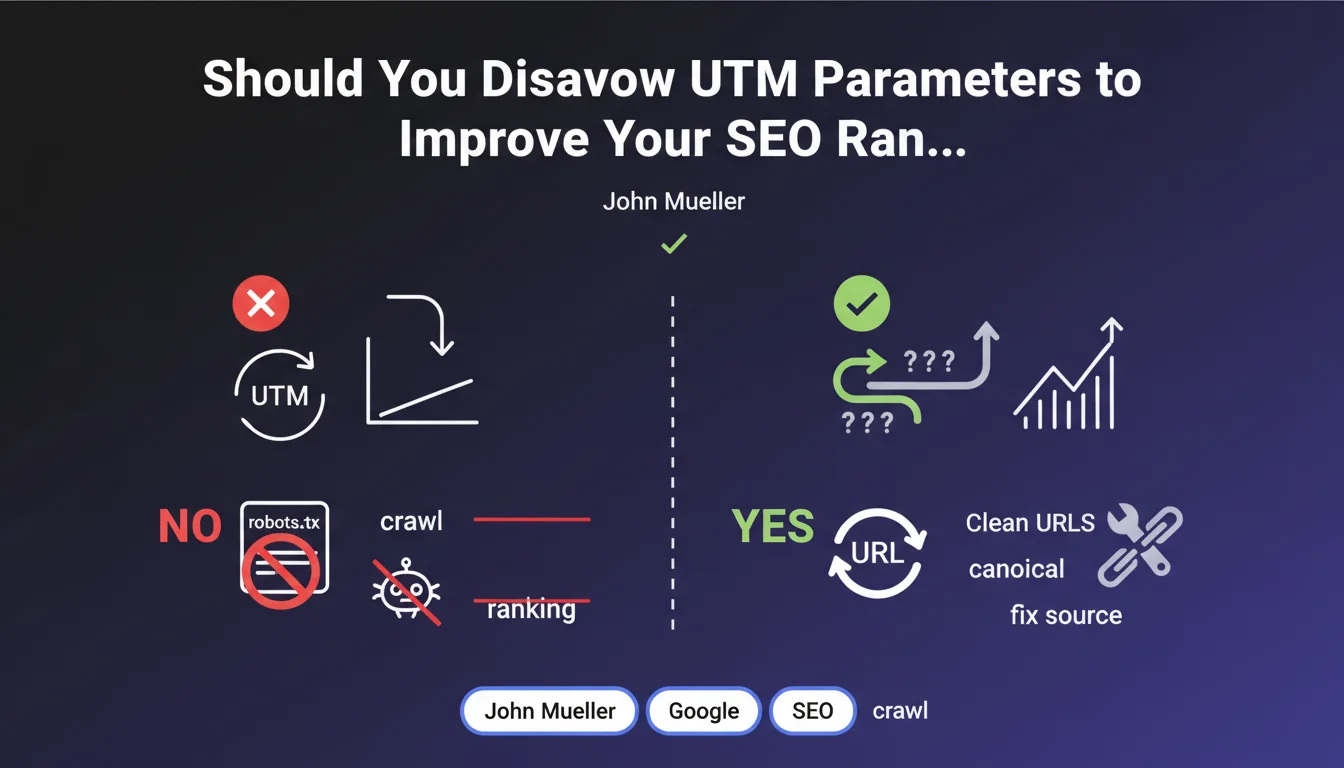

Why does this question about UTM parameters keep coming up in SEO?

UTM parameters are codes added to URLs to track traffic sources in analytics tools. They create URL variants for the same page, which often worries SEO professionals who fear duplicate content issues.
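To make the idea of "URL variants for the same page" concrete, here is a minimal Python sketch that strips `utm_*` parameters and shows several tagged URLs collapsing to a single address. The `example.com` URLs and the `strip_utm` helper are illustrative assumptions, not something taken from Google's statement.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def strip_utm(url):
    """Return the URL with every utm_* query parameter removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
    ]
    # Non-UTM parameters are kept in their original order.
    return urlunsplit(parts._replace(query=urlencode(kept)))

# Hypothetical variants of one page, as different campaigns would tag it.
variants = [
    "https://example.com/pricing?utm_source=newsletter&utm_medium=email",
    "https://example.com/pricing?utm_source=twitter&utm_campaign=launch",
    "https://example.com/pricing",
]

clean = {strip_utm(u) for u in variants}
print(clean)  # {'https://example.com/pricing'}
```

Three tagged addresses reduce to one page, which is the consolidation a canonical tag asks Google to perform.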

This multiplication of URLs can generate dozens of different versions of the same page. Many practitioners then think they need to block these URLs or disavow them to preserve their crawl budget and avoid diluting their authority.

What exactly does Google say about handling UTM parameters?

Google clearly states that blocking UTM parameters provides no benefit in terms of crawling or rankings. The search engine is capable of handling these URL variants without negatively impacting your site.

The official recommendation favors using canonical tags to signal the main version of each page. This approach allows Google to consolidate signals without requiring active blocking.

What is Google's recommended solution?

The advised approach is to treat the problem at its source rather than pile up technical patches. This means keeping URLs consistent and not creating random parameters unnecessarily.

Canonical tags allow for gradually cleaning up URL variants. Google explicitly discourages using robots.txt to block these parameters, as this would prevent the search engine from discovering canonical signals.

- UTM parameters do not negatively impact crawling or rankings

- Canonical tags are the preferred solution for managing these variants

- Do not block these URLs via robots.txt, so that Google can crawl them and read their canonical tags

- Favor a preventive approach by maintaining clean and consistent URLs

- Cleanup via canonical happens gradually over time

SEO Expert opinion

Is this statement consistent with practices observed in the field?

My 15 years of experience completely confirms this position. I've analyzed hundreds of sites extensively using UTM parameters without observing any negative impact on their rankings, provided that canonicals are correctly implemented.

The cases where I've observed problems mainly concerned sites that blocked these URLs via robots.txt, preventing Google from properly consolidating signals. Blocking creates more problems than it solves in 95% of cases.

What nuances should be added to this recommendation?

While Google handles standard UTM parameters well, the situation can become problematic with random or session-based parameters. Session identifiers, timestamps, or unique tokens can indeed impact crawl budget on very large sites.
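One way to tell standard UTM parameters apart from session-like ones is to look at how many distinct values each parameter takes. The sketch below is a rough heuristic under my own assumptions: the function name, the `min_ratio` and `min_occurrences` thresholds, and the sample URLs are all hypothetical and would need tuning on real crawl data.

```python
from collections import defaultdict
from urllib.parse import urlsplit, parse_qsl

def session_like_parameters(urls, min_ratio=0.9, min_occurrences=3):
    """Return parameter names whose values are almost always unique.

    A campaign parameter repeats the same few values across many URLs,
    whereas a session ID or timestamp is different nearly every time.
    """
    seen = defaultdict(set)   # parameter name -> distinct values
    count = defaultdict(int)  # parameter name -> number of occurrences
    for url in urls:
        for key, value in parse_qsl(urlsplit(url).query, keep_blank_values=True):
            seen[key].add(value)
            count[key] += 1
    return sorted(
        key for key in count
        if count[key] >= min_occurrences
        and len(seen[key]) / count[key] >= min_ratio
    )

# Hypothetical crawl sample: utm_source repeats, sid never does.
urls = [
    "https://example.com/p?utm_source=newsletter&sid=a1",
    "https://example.com/p?utm_source=newsletter&sid=b2",
    "https://example.com/q?utm_source=newsletter&sid=c3",
    "https://example.com/q?utm_source=twitter&sid=d4",
]
print(session_like_parameters(urls))  # ['sid']
```

Parameters flagged this way are the ones that can multiply URLs without limit, so they deserve attention before standard UTMs do.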

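Search Console used to offer a URL Parameters tool for indicating each parameter's function to Google, but Google retired it in 2022. In these specific cases, rely instead on canonical tags, internal links that always use the clean URL, and removing the parameters at the source. This remains a more surgical approach than brute-force blocking via robots.txt.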

In what cases might this approach show its limitations?

On e-commerce sites with thousands of parameter combinations (filters, sorting, pagination), the issue goes beyond simple UTMs. A more sophisticated strategy is then needed, combining canonical tags, selective noindex, and close monitoring in Search Console.

Sites with a highly constrained crawl budget (millions of pages, low authority) need to be more vigilant. In these cases, every crawled URL counts, and a poorly managed URL parameter strategy can actually slow down the indexing of important pages.

Practical impact and recommendations

What should you actually do on your site?

The first action is to audit your canonical tags to verify that they systematically point to the clean version of your URLs, without parameters. This canonicalization must be consistent across the entire site.
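As a starting point for such an audit, the sketch below pulls the canonical URL out of a page's HTML and checks that it carries no query string. It uses only Python's standard library; `CanonicalFinder` and `canonical_is_clean` are hypothetical names, and the check assumes that "clean" means "no parameters at all", which may be too strict for sites where some parameters change the content.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit

class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> encountered."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_tokens = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_tokens:
            self.canonical = attributes.get("href")

def canonical_is_clean(html):
    """True if the page declares a canonical URL without a query string."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonical:
        return False  # a missing canonical is treated as a failure
    return urlsplit(finder.canonical).query == ""

good = '<head><link rel="canonical" href="https://example.com/pricing"></head>'
bad = '<head><link rel="canonical" href="https://example.com/pricing?utm_source=x"/></head>'
print(canonical_is_clean(good), canonical_is_clean(bad))  # True False
```

This only inspects the HTML; a canonical sent through an HTTP `Link` header would need a separate check.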

Next, check that your UTM parameters are not blocked in robots.txt. If they are, remove these rules to allow Google to crawl these URLs and discover the canonical signals.
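A quick way to spot such rules is to scan the robots.txt file for `Disallow` lines that mention `utm_`. The following is a minimal sketch: the function name and the sample file are hypothetical, and it deliberately works on plain text rather than a full robots.txt parser, since wildcard patterns are what these rules usually rely on.

```python
def utm_blocking_rules(robots_txt):
    """Return the Disallow lines whose pattern mentions utm_."""
    hits = []
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop trailing comments
        field, _, value = line.partition(":")
        if field.strip().lower() == "disallow" and "utm_" in value.lower():
            hits.append(line)
    return hits

# Hypothetical robots.txt content.
robots = """User-agent: *
Disallow: /admin/
Disallow: /*?utm_source=
Disallow: /*&utm_   # tracking
"""

print(utm_blocking_rules(robots))
# ['Disallow: /*?utm_source=', 'Disallow: /*&utm_']
```

Note that a broader rule such as `Disallow: /*?` would also block UTM URLs without naming them, so a text match like this one will not catch every case.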

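For complex sites, note that the URL Parameters tool in Search Console was retired in 2022, so parameter handling can no longer be declared there. Refine processing instead through consistent canonicals, internal links that always use the clean URL, and selective noindex, none of which requires blocking.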

What mistakes should you absolutely avoid?

The most common error is blocking URLs with parameters via robots.txt thinking you're saving crawl budget. This approach prevents Google from understanding your URL structure and properly consolidating signals.

Another classic pitfall: implementing canonicals that point to URLs that are also parameterized. The canonical must always point to the cleanest and most stable version of the page.

Finally, avoid generating random or variable parameters that create unique URLs for each session. These parameters have no place in your public URLs and should be managed differently (cookies, local storage).

How can you verify that your site correctly applies these recommendations?

Start with a crawl of your site with Screaming Frog or a similar tool by filtering URLs containing utm_. Verify that each one has a canonical tag pointing to the clean version.
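Once the crawl is exported, the verification itself can be scripted. The sketch below assumes the export has been reduced to `(url, canonical)` pairs; that row format, the helper names, and the sample data are my own assumptions rather than the format of any particular crawler. It also assumes the expected canonical is the URL minus its `utm_*` parameters, with any other parameters left in place.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def strip_utm(url):
    """Return the URL with every utm_* query parameter removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))

def canonical_mismatches(rows):
    """List (url, canonical, expected) for UTM URLs with a wrong or missing canonical."""
    problems = []
    for url, canonical in rows:
        if "utm_" not in url.lower():
            continue  # only UTM-tagged URLs are audited here
        expected = strip_utm(url)
        if canonical != expected:
            problems.append((url, canonical, expected))
    return problems

# Hypothetical rows from a crawl export.
rows = [
    ("https://example.com/pricing?utm_source=newsletter", "https://example.com/pricing"),
    ("https://example.com/blog?utm_medium=email", "https://example.com/blog?utm_medium=email"),
    ("https://example.com/contact?utm_campaign=spring", None),
    ("https://example.com/about", "https://example.com/about"),
]

for url, canonical, expected in canonical_mismatches(rows):
    print(f"{url}: canonical={canonical!r}, expected={expected!r}")
```

With this sample, the blog URL (self-referencing canonical) and the contact URL (no canonical) are reported, while the pricing URL passes.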

In Search Console, analyze the Page indexing report (formerly the Coverage report) to identify any indexed URLs with parameters. If you find many, it means your canonicals are not being properly taken into account.

Also monitor your crawl budget in the Search Console crawl stats report. Excessive consumption on parameterized URLs would indicate a parameter management problem that needs resolving.
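To put a number on "excessive consumption", one option is to measure what share of crawled URLs carry parameters. The sketch below assumes you already have a list of requested paths, for example taken from your own server logs and filtered to Googlebot; that input, the function name, and the sample paths are hypothetical, and what counts as "excessive" is left to your judgment.

```python
from urllib.parse import urlsplit, parse_qsl

def parameterized_share(paths):
    """Return the fraction of paths with any query parameter and with utm_* ones."""
    total = len(paths)
    if total == 0:
        return {"parameterized": 0.0, "utm": 0.0}
    with_query = 0
    with_utm = 0
    for path in paths:
        params = parse_qsl(urlsplit(path).query, keep_blank_values=True)
        if params:
            with_query += 1
        if any(key.lower().startswith("utm_") for key, _ in params):
            with_utm += 1
    return {"parameterized": with_query / total, "utm": with_utm / total}

# Hypothetical sample of crawled paths.
crawled = [
    "/pricing",
    "/pricing?utm_source=newsletter",
    "/shop?sort=price",
    "/blog",
]
print(parameterized_share(crawled))  # {'parameterized': 0.5, 'utm': 0.25}
```

Tracking this ratio over time is more informative than a single reading, since a sudden rise usually points to a newly introduced parameter.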

- Audit all canonical tags to ensure they point to clean URLs

- Remove any blocking of UTM parameters from the robots.txt file

- On complex sites, manage parameters with canonicals, clean internal links, and selective noindex (the Search Console URL Parameters tool was retired in 2022)

- Avoid generating random parameters in public URLs

- Verify the absence of canonicals pointing to parameterized URLs

- Monitor the Search Console Page indexing report (formerly Coverage) to detect indexed parameterized URLs

- Regularly analyze crawl budget to identify inefficiencies
