Official statement

Other statements from this video 7 ▾

- □ Le système de contenu utile de Google peut-il vraiment distinguer l'intention éditoriale ?

- □ Faut-il vraiment lire les guidelines Google pour comprendre leurs critères de qualité ?

- □ Le robots.txt suffit-il vraiment à contrôler le crawl de zones spécifiques de votre site ?

- □ Comment Google Extended permet-il de bloquer l'indexation pour Bard et Vertex AI ?

- □ Le robots.txt est-il vraiment respecté par tous les crawlers ?

- □ Les robots meta tags permettent-ils vraiment un contrôle précis de l'indexation ?

- □ Les CMS intègrent-ils vraiment les nouvelles options SEO aussi rapidement que Google le prétend ?

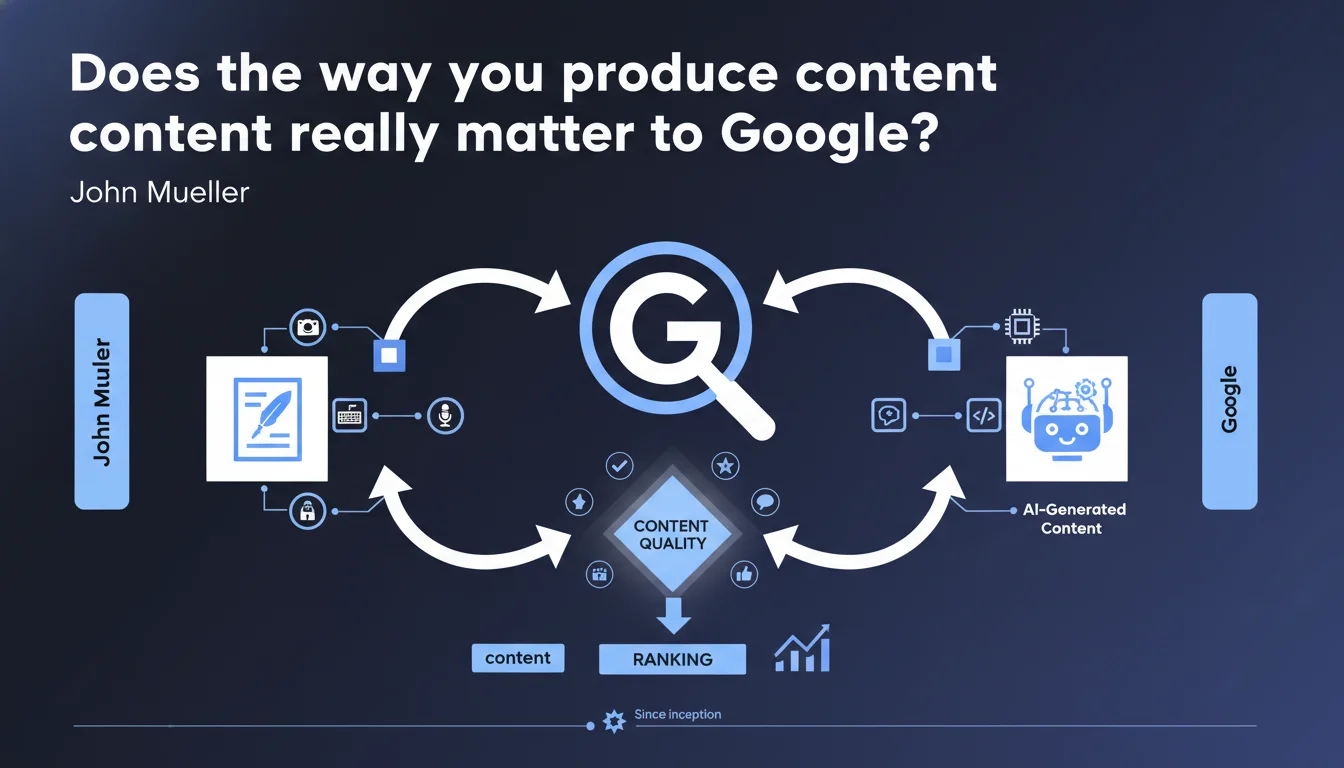

Google claims to focus solely on content quality, not production method — including for generative AI. This official position validates the use of automated tools as long as the final result meets E-E-A-T quality standards. In practice: the method doesn't matter, only the result does.

What you need to understand

Is Google really abandoning all control over production methods?

This statement marks a strategic shift in Google's communication. Historically, the search engine penalized low-quality automated content through filters like Panda. Today, Google's John Mueller states that the method matters little: only the final result counts.

This change in messaging coincides with the explosion of generative AI tools. Google is positioning itself this way: if your AI content meets quality standards, it will be treated like any other content. No automatic penalty, no bonus either.

What are the actual quality criteria Google evaluates?

Mueller doesn't detail the precise metrics, and that's where it gets sticky. Google constantly references E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) without ever providing measurable thresholds, so the standard stays vague.

In practice, signals likely include depth of analysis, citations of reliable sources, absence of hollow generalities, and unique value delivery. How Google detects these elements automatically is not documented. Quality Raters evaluate pages manually, but the scale of those evaluations is not public.

Does this philosophy apply uniformly across all sectors?

No. And Mueller doesn't clarify this, which is problematic. YMYL sectors (Your Money Your Life) — health, finance, legal — undergo much stricter filtering. AI-generated medical content without validation by certified professionals will likely be demoted, regardless of its apparent "quality."

Generic informational queries? There, Google does seem more permissive. But claiming the method never matters is a dangerous oversimplification for practitioners.

- Quality officially takes precedence over production method according to Google

- AI content is neither penalized nor automatically favored

- E-E-A-T remains the reference framework, but its evaluation criteria remain opaque

- YMYL sectors face much stricter examination despite this generic statement

- No quantifiable metrics are provided to measure this "quality"

SEO Expert opinion

Is this statement consistent with on-the-ground observations?

Partially. We do observe that well-crafted AI content performs correctly on informational long-tail queries. No systematic penalty. But claiming the method never matters? [Requires verification] — several documented cases show traffic drops on sites that massively switched to raw generative AI without human editing.

The problem: Mueller doesn't distinguish between "100% automated content published as-is" and "AI-assisted content then reworked by an expert." These two approaches produce radically different results in terms of depth and originality. Can Google really evaluate them the same way? I doubt it.

What nuances does this official position omit?

First nuance: detectability. Google claims not to penalize AI, but can its algorithms identify linguistic patterns typical of LLMs? Probably. And if these patterns correlate with signals of low quality — lack of sources, generalities, predictable structure — the content will be demoted. Not because it's AI, but because it's mediocre.

Second nuance: scalability. A site publishing 500 AI articles per month sends different behavior signals than a site publishing 10 well-crafted articles. Google also analyzes publication patterns, thematic consistency, format diversity. Ignoring these dimensions is naive.

In what cases does this rule clearly not apply?

First case: YMYL sectors. AI-generated health content without medical expert signatures will likely be demoted, regardless of its editorial quality. In these sectors Google appears to weigh author authority, institutional affiliations, and certifications heavily. Production method becomes secondary to these authority criteria.

Second case: duplicate or near-duplicate content. If your AI merely paraphrases existing sources without adding original value, Google will detect it. Again, it's not the AI that's the problem, but the lack of value addition. Except AI makes this type of large-scale production easier — which Mueller doesn't mention.

Practical impact and recommendations

What should you concretely do if you're using AI to produce content?

Systematically edit generated content. Raw AI articles almost always contain generalities, inaccuracies, even factual errors. Add concrete examples, quantified data, case studies. Inject real expertise.

Check depth vs competition. If the top 10 results cover a topic in 2000 words with infographics and videos, your 800-word AI article won't hold up. Quality is relative to the competitive environment — Google compares; it doesn't score in a vacuum.
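The comparison above can be roughed out numerically. The following is a minimal sketch, assuming you have already collected word counts for your draft and for the top-ranking pages by some other means; `depth_gap` is a hypothetical helper name, and word count is only a crude proxy for depth, not a metric Google is known to use.

```python
from statistics import median


def depth_gap(own_word_count: int, competitor_word_counts: list[int]) -> float:
    """Return own length as a fraction of the median competitor length."""
    if not competitor_word_counts:
        raise ValueError("need at least one competitor word count")
    return own_word_count / median(competitor_word_counts)


# Hypothetical figures taken from the example in the text:
# an 800-word draft against ten results of about 2000 words each.
ratio = depth_gap(800, [2000] * 10)
print(f"draft covers {ratio:.0%} of the median competitor length")
```

The median is used rather than the mean so that one unusually long competitor page does not skew the comparison.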

Integrate authority signals: identifiable author signatures, complete bios, links to LinkedIn profiles or personal websites. Google increasingly evaluates the author entity as a trust factor, especially in YMYL.

What mistakes should you absolutely avoid with AI-assisted content?

Never publish AI content without rigorous fact-checking. LLMs regularly hallucinate statistics, dates, and citations. A single flagrant factual error can damage your site's credibility with readers and with Quality Raters.

Avoid massive simultaneous publication. A site moving from 10 to 200 articles in one month sends an alarm signal. Stagger releases, vary formats, alternate AI and fully human content. Maintain consistent editorial rhythm.
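One way to keep an eye on your own publishing rhythm is to flag month-over-month jumps. This is a minimal sketch under stated assumptions: `volume_spikes` is a hypothetical helper, and the `max_ratio` threshold of 3.0 is an arbitrary illustration, since Google publishes no such threshold.

```python
def volume_spikes(monthly_counts: list[int], max_ratio: float = 3.0) -> list[int]:
    """Return indices of months whose output exceeds max_ratio times the previous month."""
    spikes = []
    for i in range(1, len(monthly_counts)):
        previous, current = monthly_counts[i - 1], monthly_counts[i]
        # Skip months that follow a month with no publications (ratio undefined).
        if previous > 0 and current / previous > max_ratio:
            spikes.append(i)
    return spikes


# Hypothetical editorial calendar: articles published per month.
print(volume_spikes([10, 12, 200, 180]))  # → [2]
```

Here the third month (index 2) is flagged because output jumps from 12 to 200 articles, while the following month is not because it stays close to the new level.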

Don't neglect internal linking and structure. AI content often creates incoherent thematic silos. Google evaluates overall site coherence — a collection of disconnected articles will underperform even if each article individually is "correct."

How do you measure if your AI content meets quality standards?

Compare your time on page and bounce rate metrics before/after migration to AI. If time drops significantly, your content isn't engaging — regardless of what Google officially thinks.
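The before/after comparison can be sketched as a relative change of the mean. The sample values below are hypothetical, and `relative_change` is an illustrative helper that assumes you have exported per-page figures from your analytics tool.

```python
from statistics import mean


def relative_change(before: list[float], after: list[float]) -> float:
    """Relative change of the mean; -0.25 means a 25% drop."""
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline mean is zero")
    return (mean(after) - baseline) / baseline


# Hypothetical time on page in seconds, for pages before and after the switch to AI.
time_before = [120.0, 100.0, 140.0]
time_after = [90.0, 80.0, 100.0]

change = relative_change(time_before, time_after)
print(f"time on page changed by {change:+.0%}")
```

The same function works for bounce rate, where a positive change is the warning sign. With small samples the result is only indicative, so compare similar pages over comparable periods.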

Analyze featured snippets and position zero placement. If your AI content never wins these placements while your previous human content did, that's a clear signal of perceived quality degradation by Google.

Monitor Core Web Vitals and technical performance. Massive AI content rollouts often come with technical negligence — heavier pages, unoptimized images. Google evaluates overall experience quality, not just text.

- Systematically edit all AI content before publishing — never raw output

- Fact-check every quantified data point, citation, and technical reference

- Add concrete examples, case studies, and original expertise

- Integrate authority signals: identifiable authors with complete bios

- Analyze depth vs competition for each targeted query

- Stagger publications to avoid suspicious volume spikes

- Verify internal linking consistency and overall structure coherence

- Monitor time spent, bounce rate, and featured snippet placements

❓ Frequently Asked Questions

Does Google automatically penalize AI-generated content?

Can you publish AI content without human editing?

Are YMYL sectors treated differently for AI content?

How does Google detect that content is AI-generated?

What is the difference between acceptable AI content and penalized AI content?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 01/11/2023
