Official statement

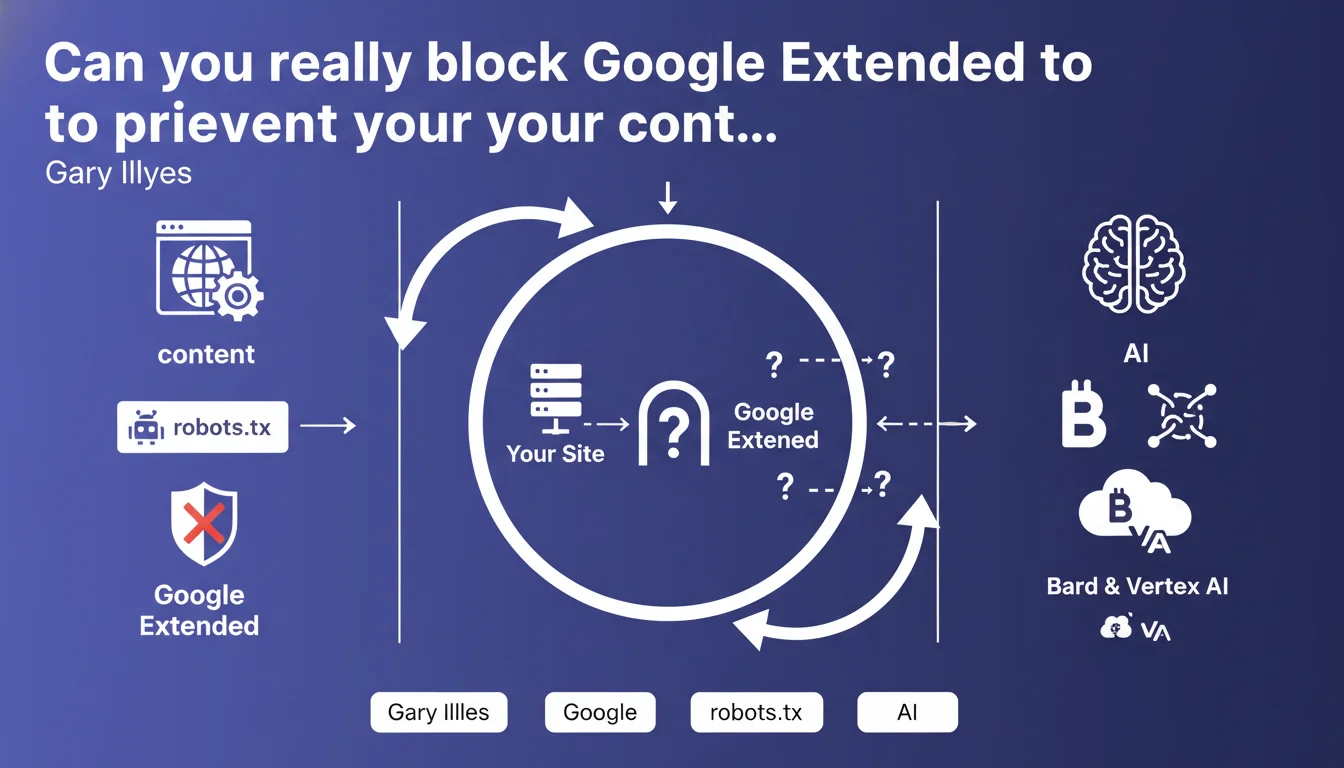

Google has introduced a dedicated robots.txt user agent token, Google-Extended, which web publishers can block to prevent their content from feeding Bard and Vertex AI. This mechanism offers granular control distinct from classic Googlebot, allowing you to refuse AI model training without affecting your organic search rankings.

What you need to understand

What exactly is Google Extended?

Google-Extended is a user agent token that Google introduced so publishers can control whether content crawled from their sites is used to improve its generative AI products, primarily Bard (conversational) and Vertex AI (enterprise platform). Unlike the traditional Googlebot, which crawls and indexes pages for Search, Google-Extended is not a separate crawler with its own HTTP user agent string: Google's crawler documentation describes it as a robots.txt control token, with fetching done by Google's existing crawlers.

Concretely, this means Google now separates two distinct uses of crawled content: classic Search indexing and AI training. A site can therefore appear in search results while refusing to let its content train generative models.

Why is this separation strategically important for Google?

The distinction between Googlebot and Google-Extended responds to growing pressure from publishers and content creators. Many fear that their content, the result of substantial editorial investment, will be used to train AIs that then compete directly with the very sources they learned from.

By offering this control lever, Google attempts to defuse criticism while maintaining access to data for its AI products. It's a delicate balance: giving the impression of choice without drying up training sources too much.

How do you block Google Extended in robots.txt?

The syntax is standard. Simply add a specific directive to your robots.txt file:

    User-agent: Google-Extended
    Disallow: /

This instruction blocks the entire site. You can also allow certain sections while blocking others, exactly like with any user agent. Granularity remains complete.
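Section-level rules like these can be sanity-checked offline before deployment. The following is a minimal sketch using Python's standard `urllib.robotparser`; the `/premium/` path, the example.com URLs, and the helper name `is_allowed` are illustrative assumptions, not something taken from Google's announcement.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep Google-Extended out of /premium/ only,
# and leave every other user agent unrestricted.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /premium/

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if user_agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    checks = [
        ("Google-Extended", "https://example.com/premium/report"),
        ("Google-Extended", "https://example.com/blog/post"),
        ("Googlebot", "https://example.com/premium/report"),
    ]
    for agent, url in checks:
        print(f"{agent:16} {url:40} allowed={is_allowed(ROBOTS_TXT, agent, url)}")
```

One caveat: Python's parser applies the first matching rule in file order, whereas Google's documentation describes picking the most specific matching rule. For files that mix overlapping Allow and Disallow lines, the two can disagree, so treat this as a sanity check rather than a replica of Google's behavior.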

- Google-Extended is a robots.txt user agent token distinct from Googlebot, dedicated to generative AI

- Blocking Google Extended does not impact classic indexing in Google Search

- Control is exercised via robots.txt, using standard syntax

- This separation aims to ease tensions between publishers and AI giants

SEO Expert opinion

Does this announcement really change the game for publishers?

Let's be honest: the gesture is largely symbolic, and its effectiveness remains limited. Blocking Google-Extended does prevent future training of Google models, but it changes nothing about data already collected. The models behind Bard and Vertex AI had already ingested massive amounts of content before this blocking option became available.

Furthermore, this directive only concerns Google. OpenAI, Anthropic, Meta and other AI players have their own user agents (GPTBot, ClaudeBot, etc.). Managing all of these crawlers requires constant monitoring and regular robots.txt updates — a non-negligible operational burden.

Should you systematically block Google Extended?

Not necessarily. It all depends on your business model and visibility strategy. A paid media outlet or proprietary database has every incentive to block and preserve the value of its exclusive content. Conversely, a site betting on visibility and brand awareness may consider appearing in Bard's responses as a form of complementary distribution.

[To verify] Google has provided no data on the volume of Google Extended crawls, nor on the actual impact of blocking on model performance. It's therefore impossible to precisely quantify the consequences of refusal.

What are the hidden risks of this strategy?

The main risk is information asymmetry. Google knows perfectly well which sites block Google Extended and could theoretically adjust its ranking algorithms accordingly — though nothing officially indicates such a mechanism. But the precedent of "helpful content" reminds us that Google knows how to create unexpected correlations.

Another point to watch: blocking Google Extended means giving up any future analysis of the value brought by these crawls. If Bard becomes a significant acquisition channel in two years, reverting will be technically easy, but the accumulated delay will be hard to make up.

Practical impact and recommendations

What concrete steps should you take if you want to block Google Extended?

First step: audit your robots.txt to verify its compliance and accessibility. A poorly formatted or inaccessible file renders any directive moot. Then add the blocking directive while respecting exact syntax. Test with Google Search Console's robots.txt testing tool to confirm proper recognition.
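The audit step can be partly automated. Below is a rough sketch of a robots.txt syntax check, assuming the file's text has already been retrieved; the function name `lint_robots` and the set of accepted fields are assumptions made for illustration, and any other field (even a legitimate one) would simply be flagged for manual review.

```python
# Fields this sketch accepts; anything else is flagged for a human to review.
# (Assumption: limited to the four fields most commonly documented.)
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap"}


def lint_robots(text: str) -> list[str]:
    """Return a list of human-readable problems found in robots.txt text."""
    problems = []
    seen_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        field, sep, value = line.partition(":")
        field = field.strip().lower()
        if not sep:
            problems.append(f"line {number}: missing ':' separator")
            continue
        if field not in KNOWN_FIELDS:
            problems.append(f"line {number}: field '{field}' not in checked set")
        elif field == "user-agent":
            seen_agent = True
            if not value.strip():
                problems.append(f"line {number}: empty User-agent value")
        elif field in {"allow", "disallow"} and not seen_agent:
            problems.append(f"line {number}: rule appears before any User-agent line")
    return problems


if __name__ == "__main__":
    sample = "User-agent Google-Extended\nDisallow: /\n"  # colon missing on line 1
    for problem in lint_robots(sample):
        print(problem)
```

A check like this only catches formatting slips such as a missing colon or an orphaned rule; it says nothing about whether the file is reachable or how a given crawler interprets it.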

Second step: document this decision internally. Blocking an AI crawler is a strategic decision that should have a clear owner and be reviewed periodically. Set up a quarterly review to reassess whether blocking still makes sense in light of how the AI landscape and your business objectives have evolved.

What mistakes should you avoid when managing Google Extended?

Classic mistake: blocking Google Extended by default, without prior analysis of your content. Not all page types have the same strategic value. A corporate blog can accept AI training to gain visibility, while a premium section must remain protected.

Another trap: forgetting to monitor other AI user agents. Google-Extended is just one token among many: GPTBot (OpenAI), CCBot (Common Crawl, whose data many labs use), Anthropic-AI, Meta-ExternalAgent, and others. The list grows every quarter. Coherent management requires mapping all these crawlers and defining a global policy.
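One way to keep such a policy coherent is to generate the robots.txt groups from a single mapping instead of editing them by hand. The sketch below assumes a hypothetical `policy` dictionary of user agent token to blocked paths; the tokens are the ones named in this article, and each vendor's documentation should be checked for the current spelling and list.

```python
def build_ai_rules(policy: dict[str, list[str]]) -> str:
    """Render one robots.txt group per AI user agent token.

    policy maps a token to the paths to disallow; an empty list
    produces an empty Disallow line, which blocks nothing.
    """
    groups = []
    for agent, paths in policy.items():
        lines = [f"User-agent: {agent}"]
        if paths:
            lines.extend(f"Disallow: {path}" for path in paths)
        else:
            lines.append("Disallow:")  # empty value: nothing is blocked
        groups.append("\n".join(lines))
    return "\n\n".join(groups) + "\n"


if __name__ == "__main__":
    # Illustrative policy only; the paths are assumptions.
    policy = {
        "Google-Extended": ["/"],
        "GPTBot": ["/premium/"],
        "CCBot": ["/premium/", "/data/"],
        "Meta-ExternalAgent": [],
    }
    print(build_ai_rules(policy))
```

Keeping the mapping in version control also gives the quarterly review a single place to look when deciding whether a token should be added, relaxed, or removed.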

How do you verify that blocking actually works?

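Unfortunately, Google provides no reporting on Google-Extended activity in Search Console. Unlike classic Googlebot, you won't have dedicated crawl statistics. Server logs will not help for this token either: per Google's crawler documentation, Google-Extended has no separate HTTP user agent string, and fetching is done by Google's existing crawlers. The practical verification is therefore to test the robots.txt rules themselves. Log analysis remains useful for AI crawlers that do identify themselves in the user agent header, provided you match them correctly in your log files.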
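For crawlers that announce themselves in the User-Agent header, a log scan can be sketched as follows. This assumes access logs in the common "combined" format; the token list, the regular expression, and the sample lines are illustrative assumptions. As far as Google's crawler documentation describes it, Google-Extended has no distinct user agent string in requests, so it is deliberately absent from the list.

```python
import re
from collections import Counter

# Tokens of crawlers that send their own User-Agent header (assumed list).
AI_TOKENS = ("GPTBot", "CCBot", "ClaudeBot", "anthropic-ai", "Meta-ExternalAgent")

# Combined log format ends with: "request" status size "referer" "user-agent"
UA_PATTERN = re.compile(r'"[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"\s*$')


def count_ai_hits(log_lines) -> Counter:
    """Count requests per AI crawler token found in the User-Agent field."""
    hits = Counter()
    for line in log_lines:
        match = UA_PATTERN.search(line)
        if not match:
            continue  # line is not in the expected format
        user_agent = match.group("ua").lower()
        for token in AI_TOKENS:
            if token.lower() in user_agent:
                hits[token] += 1
    return hits


# Made-up sample lines for demonstration; real user agent strings differ.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Nov/2023:10:00:00 +0000] "GET /premium/report HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [01/Nov/2023:10:00:02 +0000] "GET / HTTP/1.1" 200 812 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    '192.0.2.9 - - [01/Nov/2023:10:00:05 +0000] "GET /blog/post HTTP/1.1" 200 2048 "-" "CCBot/2.0"',
]

if __name__ == "__main__":
    for token, count in count_ai_hits(SAMPLE_LOG).items():
        print(token, count)
```

User agent strings can be spoofed, so a count like this indicates who claims to be crawling, not who actually is. Confirming identity would need a further step, such as checking source IPs against ranges the vendor publishes.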

For rigorous tracking, set up an automated alert on robots.txt modifications. An undocumented change could inadvertently reauthorize Google Extended access. Some CMS or SEO plugins sometimes modify this file without warning.
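An alert of this kind can be approximated by comparing a hash of the live file against a documented baseline. The following is a minimal sketch that assumes the robots.txt text is fetched elsewhere (for example by a scheduled job); `fingerprint`, `check_robots`, and the alert messages are hypothetical names and wording.

```python
import hashlib


def fingerprint(robots_txt: str) -> str:
    """Return a SHA-256 hex digest of the robots.txt text."""
    return hashlib.sha256(robots_txt.encode("utf-8")).hexdigest()


def check_robots(current: str, baseline_hash: str,
                 required_agent: str = "Google-Extended") -> list[str]:
    """Return alerts if the file changed or the required group disappeared."""
    alerts = []
    if fingerprint(current) != baseline_hash:
        alerts.append("robots.txt differs from the documented baseline")
    # Collect every user agent token declared in the file.
    agents = [
        line.split(":", 1)[1].strip().lower()
        for line in current.splitlines()
        if line.strip().lower().startswith("user-agent:")
    ]
    if required_agent.lower() not in agents:
        alerts.append(f"no group for {required_agent} found")
    return alerts


if __name__ == "__main__":
    baseline = "User-agent: Google-Extended\nDisallow: /\n"
    overwritten = "User-agent: *\nAllow: /\n"  # e.g. rewritten by a plugin
    print(check_robots(baseline, fingerprint(baseline)))
    print(check_robots(overwritten, fingerprint(baseline)))
```

The second check only confirms that a group for the token still exists, not that its rules are unchanged; the hash comparison is what catches edits within the group.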

- Audit and validate robots.txt file syntax before any modification

- Add the `User-agent: Google-Extended` directive followed by `Disallow: /` or specific paths

- Test the directive with Search Console's robots.txt tool

- Document the decision and plan quarterly reviews

- Map and manage all AI user agents (GPTBot, CCBot, Anthropic-AI…)

- Set up server log monitoring to detect any unauthorized crawls

- Create alerts on undocumented robots.txt modifications

❓ Frequently Asked Questions

Does blocking Google-Extended affect my ranking in Google Search?

Does blocking Google-Extended prevent Bard from citing my site?

Should I block all AI user agents or only Google-Extended?

How can I tell whether Google-Extended is currently crawling my site?

Can I allow Google-Extended on certain sections only?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 01/11/2023
