Official statement

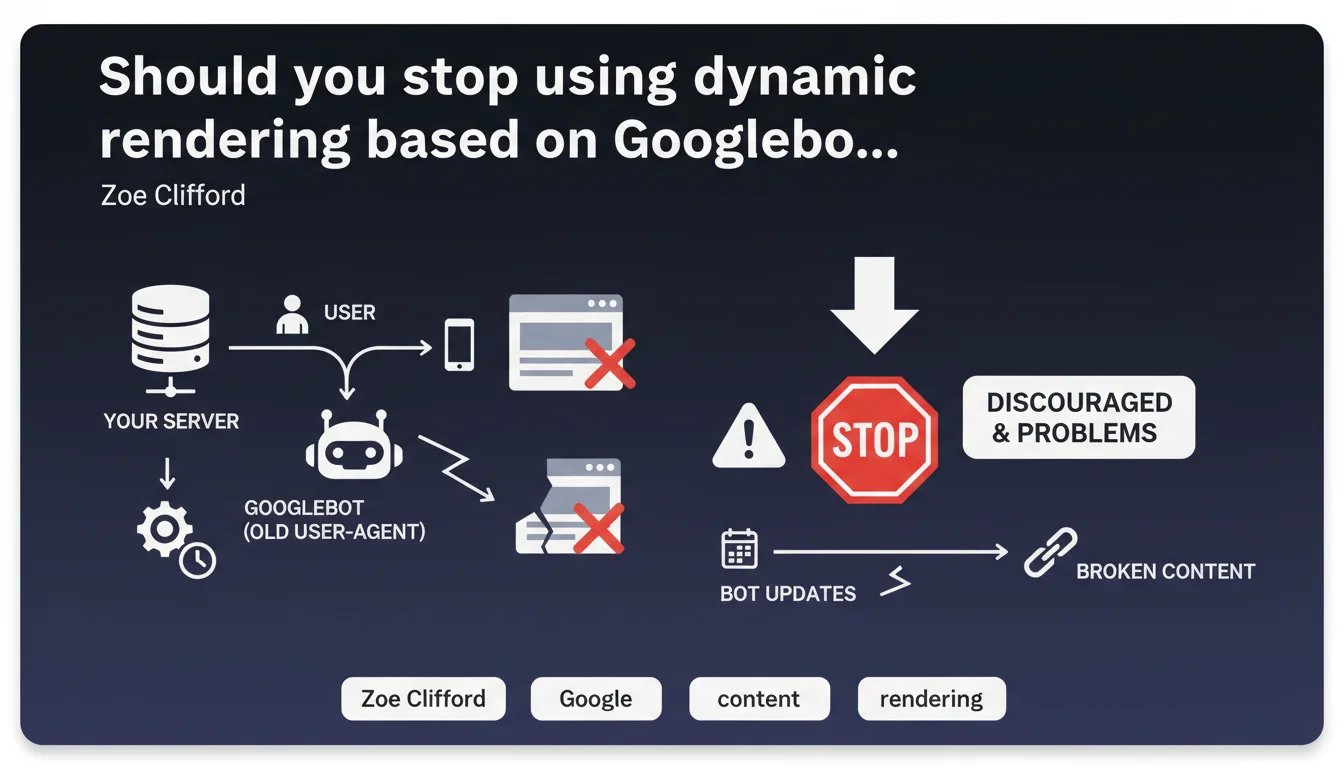

Google officially discourages serving different content to Googlebot based on its user-agent. Such implementations become outdated as the bot is updated, and they risk serving broken content to the bot alone. The approach creates maintenance problems and inconsistencies between what Googlebot sees and what real users experience.

What you need to understand

Why is Google taking a stance against user-agent-based dynamic rendering?

Dynamic rendering involves serving different versions of a page depending on the visitor — typically a pre-rendered HTML version for bots and client-side JavaScript for users. For years, Google tolerated this practice, particularly to work around the limitations of its JavaScript rendering engine.

Today, the position is changing. Google indicates that specifically targeting Googlebot via its user-agent poses risks: these implementations age poorly, break when the bot is updated, and create divergences between what the crawler sees and what users actually see.

How does this differ from cloaking?

Cloaking involves serving deceptive content to search engines — a clear violation of guidelines. Dynamic rendering, on the other hand, aims to facilitate crawling and indexing without manipulating search results.

But the line is thin. If the version served to Googlebot diverges too much from the one intended for users, Google may treat it as unintentional cloaking. The current statement pushes sites to converge on a single version for everyone.

What are the concrete consequences of an obsolete implementation?

Googlebot evolves regularly: new JavaScript capabilities, user-agent changes, Chromium updates. If your dynamic rendering relies on strict user-agent detection, an update can break your detection logic.

Result: Googlebot receives incomplete or broken content, or even a 500 error. Indexing degrades with no obvious cause, especially if you never test the version served to the bot.
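To make the failure mode concrete, here is a minimal sketch in Python. It assumes two sample user-agent strings modeled on the general shape of Googlebot's user-agent; the Chrome version numbers are illustrative, and the function names `strict_match` and `token_match` are hypothetical. It shows how an exact-match rule stops recognizing the bot after a version bump, while a check on the product token keeps working.

```python
import re

# Illustrative user-agent strings (assumption: the Chrome versions are made up).
UA_BEFORE_UPDATE = (
    "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; "
    "Googlebot/2.1; +http://www.google.com/bot.html) "
    "Chrome/120.0.0.0 Safari/537.36"
)
UA_AFTER_UPDATE = UA_BEFORE_UPDATE.replace("Chrome/120.0.0.0", "Chrome/121.0.0.0")


def strict_match(user_agent: str) -> bool:
    """Brittle rule: exact comparison against one frozen string."""
    return user_agent == UA_BEFORE_UPDATE


# Tolerant rule: look only for the "Googlebot/<major>.<minor>" product token.
GOOGLEBOT_TOKEN = re.compile(r"\bGooglebot/\d+\.\d+\b", re.IGNORECASE)


def token_match(user_agent: str) -> bool:
    """Less brittle rule: ignores everything except the product token."""
    return GOOGLEBOT_TOKEN.search(user_agent) is not None


if __name__ == "__main__":
    for label, ua in (("before", UA_BEFORE_UPDATE), ("after", UA_AFTER_UPDATE)):
        print(label, "strict:", strict_match(ua), "token:", token_match(ua))
```

Even the tolerant rule only reads a header that any client can send, so it identifies a claimed Googlebot rather than a verified one. It reduces breakage; it does not remove the divergence problem described above.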

- Google now discourages serving different content to Googlebot based solely on user-agent

- These implementations age poorly and can break during bot updates

- Main risk: displaying incomplete or broken content only to Googlebot without realizing it

- The boundary between legitimate dynamic rendering and unintentional cloaking becomes blurred

- Google is pushing toward convergence: a single version for bots and users

SEO Expert opinion

Does this statement really mark a turning point?

Let's be honest: for years, Google has recommended avoiding dynamic rendering where possible. This statement hardens the tone, but it doesn't come out of nowhere. What's new is the explicit assertion that targeting Googlebot via user-agent is a problem.

In practice, many sites still use this method, notably e-commerce platforms and complex SPAs. The question isn't whether Google likes the approach, but whether your implementation can withstand changes to the bot. [To verify]: Google doesn't specify how often these "problems" actually occur, or whether they affect ranking or only indexing.

In what cases doesn't this rule really apply?

Google doesn't ban dynamic rendering outright. If you serve a pre-rendered version to all bots (not just Googlebot), and that version is strictly equivalent to what a user sees, you stay within bounds.

The problem emerges when you create logic specific to Googlebot, for example serving a stripped-down version only to this bot, or adding content invisible to users. There, you enter a gray area that Google now explicitly discourages.
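The distinction can be sketched as follows. This is an illustration only: the token list and the names `is_crawler` and `choose_variant` are hypothetical, and a real deployment would rely on a maintained crawler list rather than four hard-coded entries. The point is that the decision is keyed on "any known crawler", with no branch reserved for Googlebot.

```python
# Hypothetical, deliberately short list of crawler tokens (lowercase).
CRAWLER_TOKENS = ("googlebot", "bingbot", "duckduckbot", "yandexbot")


def is_crawler(user_agent: str) -> bool:
    """True if the user-agent contains any known crawler token."""
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_variant(user_agent: str) -> str:
    """Return 'prerendered' for every known crawler, 'client' otherwise.

    There is intentionally no Googlebot-only branch: all crawlers get
    the same pre-rendered variant.
    """
    return "prerendered" if is_crawler(user_agent) else "client"


if __name__ == "__main__":
    samples = [
        "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
        "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36",
    ]
    for ua in samples:
        print(choose_variant(ua), "<-", ua[:60])
```

This only covers the routing decision. Whether the pre-rendered variant is actually equivalent to what users see still has to be checked separately.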

Do field observations confirm this position?

Yes and no. We do observe cases where Googlebot receives broken content because of poorly maintained dynamic rendering — notably after major Chromium updates. But quantifying the scope of the problem remains difficult.

What's certain: Google prefers you invest in server-side rendering (SSR) or static site generation (SSG) rather than bot detection. These approaches eliminate divergence at the source.

Practical impact and recommendations

What should you concretely do if you're using dynamic rendering?

First step: audit your implementation. Are you serving exactly the same content to Googlebot and users? Test with the URL inspection tool in Search Console — compare the rendering with what a standard browser displays.

If you detect Googlebot via user-agent, list all cases where the logic diverges. Ask yourself whether these divergences are justified or if they stem from convenience solutions that have aged.
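One way to start that list is to compare the visible text of the two variants. The sketch below assumes you have already saved the HTML served to the bot and the HTML a user ends up with; how you capture them is up to you. The names `visible_text` and `content_divergences` are hypothetical, and the comparison is deliberately crude: it looks at text nodes only, not layout, links, or metadata.

```python
import difflib
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # normalize whitespace
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def visible_text(html: str) -> list[str]:
    """Return the non-empty text nodes of an HTML document, in order."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.chunks


def content_divergences(bot_html: str, user_html: str) -> list[str]:
    """List text that appears in only one of the two variants."""
    user_lines = visible_text(user_html)
    bot_lines = visible_text(bot_html)
    matcher = difflib.SequenceMatcher(a=user_lines, b=bot_lines, autojunk=False)
    report: list[str] = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue
        report += [f"only users see: {t}" for t in user_lines[i1:i2]]
        report += [f"only bot sees: {t}" for t in bot_lines[j1:j2]]
    return report
```

An empty list means the text matches, not that the two variants are equivalent. Each reported line is a candidate divergence to justify or remove.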

What mistakes should you absolutely avoid?

Never serve Googlebot content that users don't see, even if your intention is to facilitate indexing. Google may treat this as cloaking, intentional or not.

Also avoid freezing your list of user-agents. If you must detect bots, use an actively maintained library — and regularly test that detection works after each update.

How do you migrate to a more robust architecture?

Ideally: server-side rendering (SSR) or static site generation (SSG). With these approaches, everyone, bots and users alike, receives the same pre-rendered HTML. That removes the divergence, and with it the risk of breaking indexing.

If you can't migrate immediately, ensure at minimum that your JavaScript renders correctly client-side for all visitors. Test with standard browsers, without dynamic rendering — if it works, disable Googlebot detection.

- Test Googlebot rendering via the URL inspection tool in Search Console

- Compare pixel by pixel with what a standard browser displays for a real user

- List all divergences between the bot version and the user version

- If you use a user-agent list, verify that it's actively maintained

- Prioritize SSR or SSG to eliminate any divergence at the source

- If you keep dynamic rendering, serve the same version to all bots — not just Googlebot

- Set up automatic alerts if Googlebot rendering breaks (Search Console API monitoring)
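For the alerting item, the check itself can be very simple. The sketch below is an assumption-laden illustration: `RenderSnapshot` is a hypothetical record of one render captured by your own monitoring, and the markers and the 200-character threshold are arbitrary examples. It does not call the Search Console API; it only evaluates a status code and an HTML string that you supply.

```python
import re
from dataclasses import dataclass


@dataclass
class RenderSnapshot:
    """Hypothetical record of one rendered page captured by your monitoring."""

    status_code: int
    html: str


def rendering_problems(
    snapshot: RenderSnapshot,
    required_markers: list[str],
    min_text_chars: int = 200,  # arbitrary threshold, tune per template
) -> list[str]:
    """Return human-readable problems; an empty list means no alert."""
    problems: list[str] = []

    if snapshot.status_code != 200:
        problems.append(f"unexpected status {snapshot.status_code}")

    # Rough text estimate: drop script/style blocks, then drop remaining tags.
    text = re.sub(
        r"<(script|style)\b.*?</\1>", " ", snapshot.html,
        flags=re.DOTALL | re.IGNORECASE,
    )
    text = " ".join(re.sub(r"<[^>]+>", " ", text).split())
    if len(text) < min_text_chars:
        problems.append(f"only {len(text)} characters of text rendered")

    for marker in required_markers:
        if marker not in snapshot.html:
            problems.append(f"missing marker: {marker}")

    return problems
```

Run against each monitored template on a schedule, a non-empty result is what would trigger the alert. An empty app shell, for example, fails both the text-length check and any marker check.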

❓ Frequently Asked Questions

Is dynamic rendering completely prohibited by Google?

How do I know whether my dynamic rendering is causing problems?

What is the best alternative to user-agent-based dynamic rendering?

If I disable my dynamic rendering, will Googlebot be able to index my JavaScript?

Does this statement affect ranking or only indexing?

Source: Google Search Central video, published on 11/07/2024