Official statement

Other statements from this video 12 ▾

- □ La balise meta robots noindex suffit-elle vraiment à empêcher l'indexation d'une page ?

- □ Peut-on vraiment piloter Googlebot News et Googlebot Search avec des balises meta robots distinctes ?

- □ Peut-on vraiment empiler plusieurs directives meta robots dans une seule balise ?

- □ L'en-tête HTTP X-Robots peut-il remplacer la balise meta robots ?

- □ Où faut-il vraiment placer le fichier robots.txt pour qu'il soit pris en compte ?

- □ Faut-il gérer un robots.txt distinct pour chaque sous-domaine ?

- □ Le fichier robots.txt est-il vraiment respecté par tous les moteurs de recherche ?

- □ Faut-il utiliser les wildcards dans robots.txt pour mieux contrôler son crawl ?

- □ Faut-il vraiment déclarer son sitemap XML dans le fichier robots.txt ?

- □ Pourquoi robots.txt empêche-t-il Google de désindexer vos pages ?

- □ Robots.txt bloque-t-il vraiment l'indexation de vos pages ?

- □ Le rapport robots.txt de Google Search Console change-t-il vraiment la donne pour le crawl ?

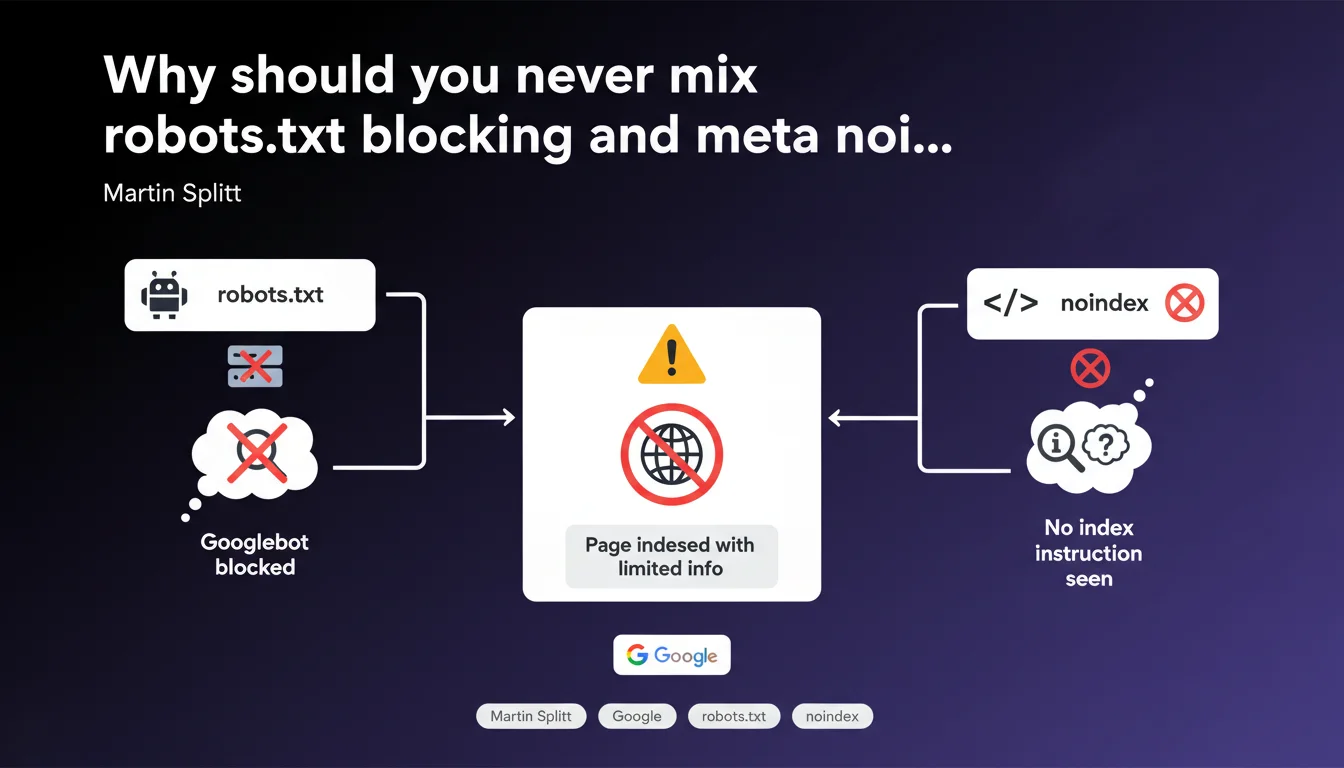

Blocking a page in robots.txt while placing a meta robots noindex tag on it creates a paradox: Googlebot cannot access the page to read the noindex directive, which can paradoxically result in its indexation with limited information. This is the opposite of the intended effect.

What you need to understand

What exactly is this directive conflict about?

The problem is based on a simple sequential logic: robots.txt acts upstream of the crawl, while the meta noindex tag is only read once the bot is on the page. If you block access in robots.txt, Googlebot will never fetch the HTML — so it will never see your noindex instruction.

Result? Google knows the page exists (via backlinks, sitemaps, or internal links), but cannot explore its content to understand that it should not be indexed. It may then decide to index it anyway, with a generic title and no description.

Why would Google index a page it cannot crawl?

Let's be honest: it seems counter-intuitive. But Google sometimes indexes URLs blocked in robots.txt if it detects enough external signals (link anchors, mentions, etc.). The URL appears in search results with the note "No information available about this page".

This is exactly what you wanted to avoid with your noindex — except you made the tag inaccessible. A classic case of shooting yourself in the foot.

What is the technical logic behind this behavior?

The robots.txt file works as a crawl filter, not an indexation directive. Google respects it scrupulously: no access = no crawl. Period.

The meta noindex, on the other hand, requires a crawl to be detected. It says "you can come see, but don't index me". If you combine the two, you create a contradictory instruction that Google resolves in its own way — generally not the one you hoped for.

- robots.txt blocks access before any exploration

- meta noindex requires a crawl to be read

- Combining the two prevents Google from seeing the noindex directive

- Google can still index the URL with limited info if it is referenced elsewhere

- The solution: choose one or the other, never both simultaneously

SEO Expert opinion

Does this statement truly reflect observed real-world behavior?

Absolutely. We regularly observe this scenario: pages blocked in robots.txt that still appear in the index with the famous "No information available" notice. It's frustrating, but consistent with the mechanics explained by Splitt.

What's missing — and it's a shame — is clarification on the frequency of this phenomenon. Not all blocked pages end up indexed. It depends on the volume of backlinks, domain authority, possible social signals. [To verify]: Is there a quantifiable threshold of external links beyond which Google forces indexation despite robots.txt?

In what cases should this rule be nuanced?

There are situations where you have no choice — at least temporarily. Imagine a site migration: you want to deindex the old one while blocking its crawl to concentrate the budget on the new one. Blocking in robots.txt + noindex may seem logical.

But be careful: if the old site still has active backlinks, you risk the opposite effect. It's better to use only noindex with crawl allowed, even if you manage crawl budget differently (server speed, pagination, etc.).

What is the true best practice according to advanced SEO observations?

If you want to deindex a page, use meta noindex (or X-Robots-Tag) and allow Googlebot to access it. Once deindexed, you can then block in robots.txt to save crawl budget — but only after confirming removal from the index.

If you want to prevent crawling without risk of partial indexation, use robots.txt alone and remove all internal links + backlinks to this URL. No signals = no phantom indexation. But this is rarely 100% achievable.

Practical impact and recommendations

What should you concretely do if you're in this situation?

First step: audit your site to identify pages blocked in robots.txt that still contain a noindex tag. Screaming Frog can do this, but be careful — it respects robots.txt by default. Configure it to ignore this file during the audit.

Then, decide which directive to keep. You want to prevent indexation? Remove the robots.txt block and let noindex do its job. You want to block crawling? Delete the noindex tag and make sure no internal links or active backlinks point to this page.

How do you verify that your configuration is consistent?

Use Google Search Console, Coverage tab. Pages blocked by robots.txt but indexed often appear under "Excluded" with the status "Blocked by robots.txt file". If they're still in the index, you'll see a conflict.

Also test with the URL inspection tool. If a page is blocked in robots.txt, GSC will tell you clearly before even testing the render. You'll immediately know if your noindex is inaccessible.

- Crawl your site ignoring robots.txt to detect duplicate directives

- Identify pages blocked in robots.txt that still have active backlinks

- Choose: noindex (with crawl allowed) OR robots.txt (without incoming links)

- Use GSC to confirm that noindex pages are properly crawled then deindexed

- Only block in robots.txt after confirming deindexation if you want to save crawl budget

- Remove internal links to any page blocked in robots.txt to prevent phantom indexation

❓ Frequently Asked Questions

Peut-on utiliser X-Robots-Tag au lieu de meta noindex si la page est bloquée dans robots.txt ?

Que se passe-t-il si une page bloquée dans robots.txt reçoit beaucoup de backlinks ?

Faut-il d'abord retirer le blocage robots.txt ou d'abord ajouter le noindex ?

Est-ce que Google Search Console signale ce type de conflit ?

Peut-on utiliser robots.txt pour bloquer temporairement une page en cours de développement ?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 04/12/2024

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.