Official statement

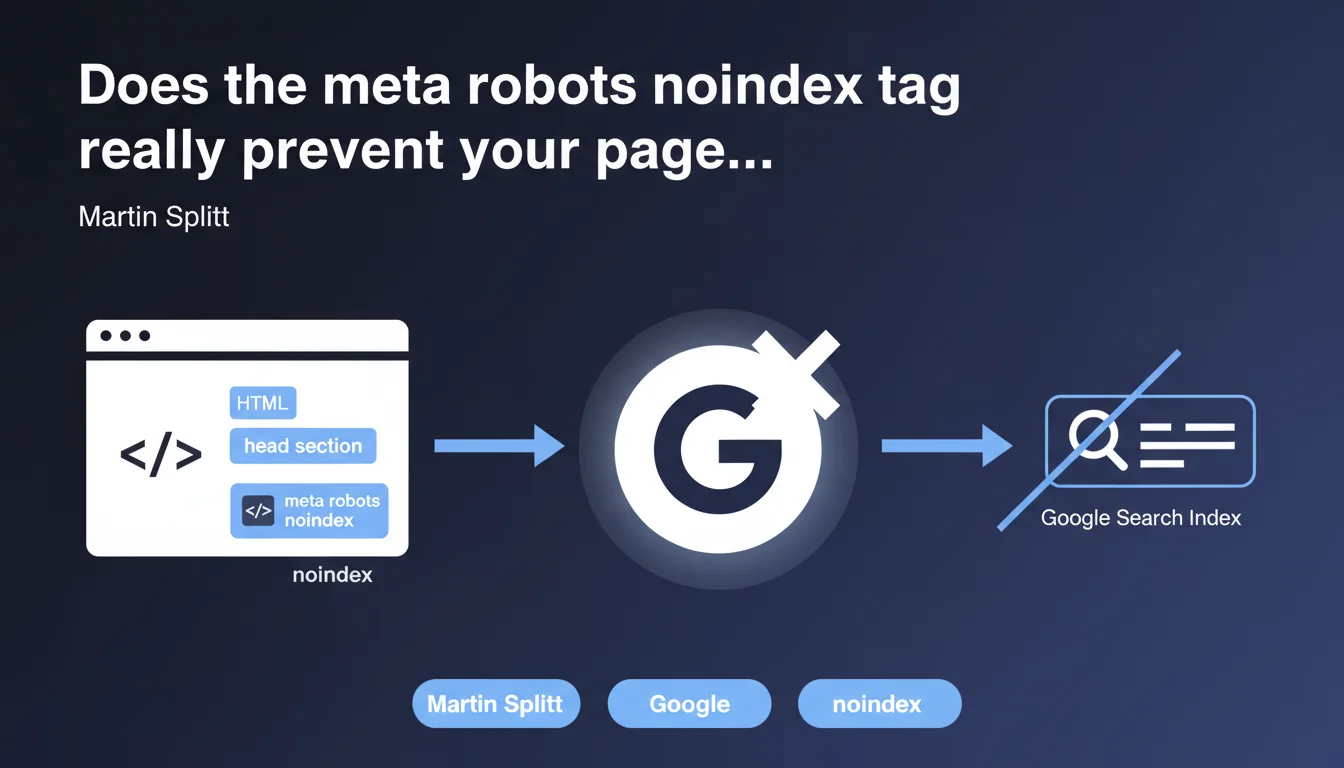

Google confirms that the meta robots noindex tag placed in the <head> of an HTML page prevents its indexing in search results. This directive remains the preferred method for excluding pages from the index, provided that Google can crawl the page to read this instruction.

What you need to understand

Why does this tag remain the standard for deindexation?

The meta robots noindex tag functions as an explicit instruction that search engines read while crawling. Unlike robots.txt, which blocks access upstream, this directive lets Google fetch the content while asking it not to index it.

This approach offers a decisive advantage: Google can follow the outgoing links from the page and transmit PageRank, which is not possible with a robots.txt block. The page remains in the link graph but disappears from the SERPs.

What conditions must be met for this tag to work?

Google must be able to crawl the page to read the tag. If you block the URL in robots.txt, the bot will never see the noindex instruction and the page could remain indexed with a generic snippet.
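One way to check this condition is to test a URL against your robots.txt rules before relying on noindex. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content, the example.com URLs, and the helper name `can_googlebot_fetch` are illustrative assumptions. The standard-library parser does not reproduce every detail of Google's own matching (wildcards in particular), so treat the result as a first-pass check only.

```python
from urllib.robotparser import RobotFileParser


def can_googlebot_fetch(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt text lets Googlebot fetch the URL."""
    parser = RobotFileParser()
    # parse() accepts the file content as an iterable of lines,
    # so no network request is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


# Hypothetical robots.txt used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

# A noindex tag on this page would never be read: crawling is blocked.
blocked = can_googlebot_fetch(ROBOTS_TXT, "https://example.com/private/page.html")

# This page is crawlable, so a noindex tag on it can be seen.
allowed = can_googlebot_fetch(ROBOTS_TXT, "https://example.com/blog/post.html")

print(blocked, allowed)
```

If the function returns False for a URL that carries a noindex tag, the directive cannot be read and the page may stay in the index.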

The tag must be placed in the <head> section of the HTML, before any JavaScript that could delay its interpretation. Google typically detects it on the first crawl, but removal from the index can take several days.

Does this method cover all scenarios?

No. The meta robots tag does not protect against indexing if other sites build a large number of backlinks to the URL before Google crawls it. In this case, the URL can appear in the index without a description, with a note such as "No information is available for this page".

For sites generated client-side in JavaScript, verify that Google actually executes the JS and sees the tag. Use the URL inspection tool in Search Console to confirm that the final rendering includes the noindex.

- The meta robots noindex tag prevents indexing if Google can crawl the page

- Never block a noindexed URL in robots.txt, otherwise Google won't read the directive

- Removal from the index takes several days after the first crawl with the tag

- External backlinks can force partial indexing before crawling

- Check JavaScript rendering for dynamic sites

SEO Expert opinion

Is this statement aligned with real-world observations?

Yes, in 95% of cases. The noindex tag works reliably when properly implemented. Failures usually come from configuration errors: simultaneous robots.txt blocking, a tag placed in the <body> instead of the <head>, or JavaScript that injects it too late.

A frequent pitfall: sites that switch a page from "index" to "noindex" and then block it in robots.txt before Google has recrawled it. Result: the URL remains in the index indefinitely because Google can no longer read the deindexing instruction.

What nuances does Martin Splitt not mention here?

He doesn't discuss the propagation delay. Between the moment you add the tag and the moment the page is actually removed from the index, several weeks can elapse for rarely crawled pages. Search Console remains vague on this timing.

Another point: Google respects noindex, but other engines such as Bing or Yandex may apply it with different delays. If your issue spans multiple engines, test each one separately. This point remains to be verified against Bing Webmaster Tools feedback.

In what cases is this rule insufficient?

For emergencies. If a confidential or duplicate page appears in the index and you need to remove it immediately, the noindex tag isn't enough, because it only takes effect after a recrawl. In this case, use the Removals tool in Search Console for a temporary removal within 24-48 hours.

For e-commerce facets or paginated archives, combining noindex with canonical can create conflicting signals. Google typically follows the canonical as a priority, so if you canonicalize to an indexable page, the noindex may be ignored.
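To spot this kind of conflict at scale, you can scan a page's HTML for both signals. The sketch below is a minimal illustration built on Python's standard `html.parser`. The class name `SignalCollector`, the function `has_conflicting_signals`, and the example.com URLs are assumptions made for the example. It only flags pages that are noindexed and also canonicalize to a different URL. It does not predict which signal Google will follow.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class SignalCollector(HTMLParser):
    """Collect the meta robots noindex and rel=canonical signals of a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() == "robots":
            directives = {d.strip().lower() for d in attr.get("content", "").split(",")}
            if "noindex" in directives:
                self.noindex = True
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def has_conflicting_signals(page_url: str, html: str) -> bool:
    """True if the page is noindexed AND canonicalizes to a different URL."""
    collector = SignalCollector()
    collector.feed(html)
    if not collector.noindex or collector.canonical is None:
        return False
    # Resolve relative canonical hrefs against the page URL before comparing.
    return urljoin(page_url, collector.canonical) != page_url


# Hypothetical faceted URL that is noindexed but points to the main category.
facet_html = """
<html><head>
<meta name="robots" content="noindex">
<link rel="canonical" href="https://example.com/shoes">
</head><body></body></html>
"""
conflict = has_conflicting_signals("https://example.com/shoes?color=red", facet_html)
print(conflict)
```

The comparison is a plain string match after resolving relative URLs, so trailing slashes or parameter order would need normalizing in a real audit.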

Practical impact and recommendations

What should you concretely do to properly implement noindex?

Place the tag <meta name="robots" content="noindex"> in the <head> of every page you want to exclude. Verify that it appears in the raw source code rather than being injected late by JavaScript.
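The raw-source check can be automated. The following sketch uses Python's standard `html.parser` to report whether a noindex directive sits in the <head>, outside it, or is absent. The names `RobotsMetaFinder` and `locate_noindex` and the sample HTML strings are hypothetical. The parser only looks at `name="robots"` and at the raw HTML you pass in, so it says nothing about tags added later by JavaScript.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Record where a <meta name="robots" content="...noindex..."> tag appears."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.location = "absent"  # "head", "outside_head", or "absent"

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
            return
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() != "robots":
            return
        directives = [d.strip().lower() for d in attr.get("content", "").split(",")]
        if "noindex" in directives and self.location != "head":
            self.location = "head" if self.in_head else "outside_head"

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def locate_noindex(html: str) -> str:
    """Return "head", "outside_head", or "absent" for the given raw HTML."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return finder.location


# Sample documents, invented for illustration.
correct = '<html><head><meta name="robots" content="noindex"></head><body></body></html>'
misplaced = '<html><head></head><body><meta name="robots" content="noindex"></body></html>'
missing = '<html><head><meta name="robots" content="index, follow"></head><body></body></html>'

print(locate_noindex(correct), locate_noindex(misplaced), locate_noindex(missing))
```

Feed it the response body returned by your server, not the DOM copied from browser developer tools, since the latter already includes JavaScript changes.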

Test with the URL inspection tool in Search Console. If the tag doesn't appear in the final HTML rendering, Google won't see it. Fix your JS implementation or switch to server-side rendering.

Never combine noindex with robots.txt blocking on the same URL. If you absolutely must block crawling (to save crawl budget), accept that the page may remain in the index with an empty snippet.

What errors should you avoid in noindex management?

Don't remove the tag too quickly. If you deindex a page then reindex it 48 hours later, Google may consider it unstable and slow down its crawl. Wait at least 2 weeks before changing your mind.

Avoid cascading noindex across entire sections without a clear strategy. Each noindexed page loses its ability to rank but continues to consume crawl budget. It is often better to delete such pages permanently and return a 404 or 410 response.

Watch out for CMS platforms that apply noindex by default to certain templates (author pages, tags, archives). Check systematically after a migration or theme change.

How do you audit and fix a site with indexation issues?

- Crawl your site with Screaming Frog or Sitebulb to list all pages with meta robots noindex

- Cross-reference this list with indexed URLs in Search Console (Page indexing report, previously named Coverage)

- Identify inconsistencies: noindex pages still in the index, or strategic pages mistakenly noindexed

- Check JavaScript rendering for each critical page type (product detail pages, categories, articles)

- Verify that robots.txt doesn't interfere with noindexed URLs

- Track the evolution of indexed page count week after week to detect anomalies
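The cross-referencing step in this checklist comes down to set operations on two URL lists. Here is a minimal sketch, assuming you have already exported the noindexed URLs from your crawler and the indexed URLs from Search Console as plain lists. The function name `find_inconsistencies`, the dictionary keys, and the sample URLs are all hypothetical.

```python
def find_inconsistencies(noindex_urls, indexed_urls, strategic_urls):
    """Compare crawl and index exports and return the two kinds of mismatch."""
    noindex = set(noindex_urls)
    indexed = set(indexed_urls)
    strategic = set(strategic_urls)
    return {
        # Pages carrying noindex that still show up as indexed.
        "noindex_but_still_indexed": sorted(noindex & indexed),
        # Pages you want to rank that carry noindex by mistake.
        "strategic_but_noindexed": sorted(strategic & noindex),
    }


# Invented sample data standing in for crawler and Search Console exports.
report = find_inconsistencies(
    noindex_urls=[
        "https://example.com/tag/red",
        "https://example.com/products/best-seller",
    ],
    indexed_urls=[
        "https://example.com/",
        "https://example.com/tag/red",
    ],
    strategic_urls=[
        "https://example.com/",
        "https://example.com/products/best-seller",
    ],
)

for issue, urls in report.items():
    print(issue, urls)
```

Both exports need the same URL format (protocol, trailing slash, casing) for the intersections to be meaningful.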

❓ Frequently Asked Questions

How long does it take for a noindexed page to disappear from Google's index?

Can you use noindex and canonical on the same page?

Does noindex prevent Google from following outgoing links?

Should noindexed pages be removed from the XML sitemap?

Does noindex work in HTTP headers or only in HTML?

Source: Google Search Central video · published on 04/12/2024