Official statement

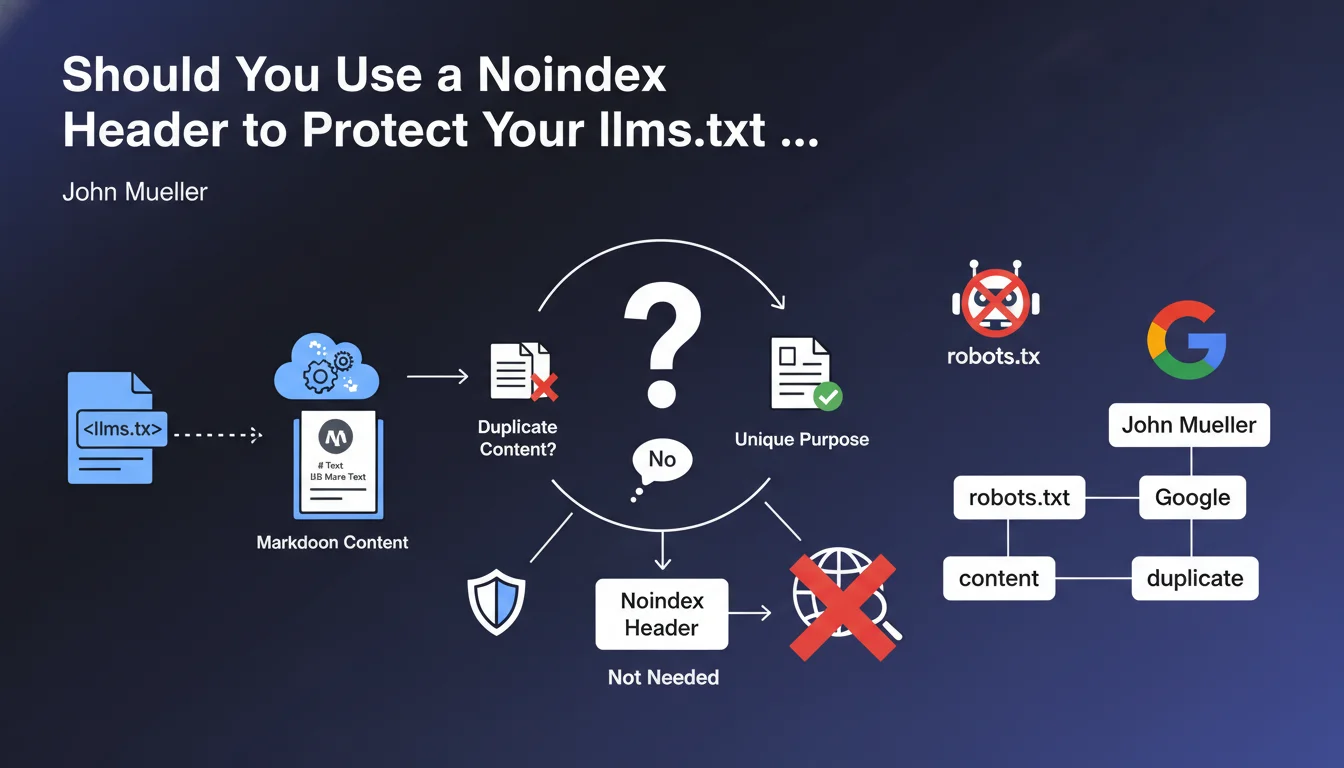

However, Mueller recommends serving these files with a noindex header. His justification is purely practical: external sites could link to an llms.txt file, which could lead Google to index it and create a "strange" experience for users who come across it in search results. A noindex header keeps the file out of Google's index. Blocking it via robots.txt does not: it only prevents Google from crawling the file, and therefore from ever seeing the noindex directive.

What you need to understand

llms.txt files are an emerging practice in the SEO and artificial intelligence ecosystem. They give large language models (LLMs) a structured Markdown version of a website's main content.

Unlike robots.txt files that control crawling, llms.txt files serve a completely different purpose: they facilitate content understanding by AI. They are placed at the domain root and contain a formatted representation of editorial content.

This naturally raises the question of duplicate content. The official answer is reassuring: an llms.txt file would only be considered duplicate content if it were strictly identical to an HTML page, which would make little practical sense.

Key points to remember:

- llms.txt files do not create duplicate content issues by nature

- They use a Markdown format distinct from classic HTML pages

- They can be linked from external sites, which poses an indexation risk

- Using a noindex header is recommended to prevent their appearance in SERPs

- You should not block these files via robots.txt as this would prevent Google from seeing the noindex directive
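To make the noindex header from the key points above concrete, here is a minimal sketch of a response that carries it. It uses a small Python WSGI application purely for illustration. The `LLMS_TXT` content and the `app` function name are assumptions, not part of any official recommendation, and most real sites would set the same header in their web server or CDN configuration instead.

```python
# Hypothetical file content; a real llms.txt would hold the site's own Markdown.
LLMS_TXT = b"# Example Site\n\n> Short summary of the site for language models.\n"


def app(environ, start_response):
    """Minimal WSGI app that serves /llms.txt with a noindex header."""
    if environ.get("PATH_INFO") == "/llms.txt":
        headers = [
            ("Content-Type", "text/plain; charset=utf-8"),
            ("Content-Length", str(len(LLMS_TXT))),
            # Asks crawlers that fetch the file not to add it to their index.
            ("X-Robots-Tag", "noindex"),
        ]
        start_response("200 OK", headers)
        return [LLMS_TXT]

    body = b"Not found\n"
    headers = [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ]
    start_response("404 Not Found", headers)
    return [body]
```

For a local trial, the app can be passed to `wsgiref.simple_server.make_server("127.0.0.1", 8000, app)` from the standard library; the host and port here are arbitrary.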

SEO Expert opinion

This recommendation is perfectly consistent with SEO best practices regarding indexation management. Using a noindex header rather than robots.txt blocking demonstrates a nuanced understanding of the difference between crawling and indexation.

The logic is sound: if you block the llms.txt file in robots.txt, Googlebot will never be able to crawl it and will therefore never see the noindex directive. The URL could then still be indexed based on external signals (inbound links, mentions) without Google knowing its actual content. This is exactly the scenario to avoid.
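The crawl-versus-index distinction above can be checked mechanically. The sketch below uses Python's standard `urllib.robotparser` to test whether a given robots.txt would stop a crawler from fetching /llms.txt. The helper name `is_crawlable` and the two sample rule sets are illustrative assumptions, and the standard-library parser does not replicate every detail of Google's own matching rules, so treat the result as a first check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, path="/llms.txt", user_agent="Googlebot"):
    """Return True if the given robots.txt text allows user_agent to fetch path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, path)


# Hypothetical rule sets for illustration only.
blocking_rules = "User-agent: *\nDisallow: /llms.txt\n"
permissive_rules = "User-agent: *\nDisallow: /admin/\n"

# With blocking_rules the crawler cannot fetch the file, so it would never
# see an X-Robots-Tag: noindex header sent with it.
print(is_crawlable(blocking_rules))
print(is_crawlable(permissive_rules))
```

If the function returns False for your live robots.txt, the noindex header on llms.txt is effectively invisible to that crawler.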

The user experience argument is also relevant. A user who encounters an llms.txt file in search results would be faced with raw Markdown content that is difficult to read and out of context. This poor experience could hurt how users perceive the quality of your site.

Practical impact and recommendations

Following this official clarification, here are the concrete steps for managing your llms.txt files properly:

- Create or update your llms.txt file with a structured Markdown version of your main content

- Serve your llms.txt file with a noindex HTTP response header (X-Robots-Tag: noindex)

- Verify that the llms.txt file is not blocked in robots.txt to allow crawling and reading of the noindex directive

- Test the header with tools like curl or your browser's DevTools to confirm its presence

- Monitor Search Console to confirm that the file does not appear among indexed pages

- Document this configuration in your technical SEO documentation for future updates

- Avoid creating internal links to this file from your classic HTML pages

- If you use a CDN or cache, ensure that the X-Robots-Tag header is passed through to the final response
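The header test in the checklist above can also be scripted. The following is a minimal sketch, assuming Python's standard library only; the function names `has_noindex` and `fetch_x_robots` are hypothetical. As a simplification, it treats a directive scoped to a specific crawler (for example `googlebot: noindex`) as a match regardless of which crawler is named.

```python
from urllib.request import Request, urlopen


def has_noindex(x_robots_values):
    """Return True if any X-Robots-Tag value contains a noindex directive."""
    for value in x_robots_values:
        for part in value.split(","):
            # Drop an optional "user-agent:" prefix such as "googlebot: noindex".
            directive = part.rsplit(":", 1)[-1].strip().lower()
            # "none" is shorthand for "noindex, nofollow".
            if directive in ("noindex", "none"):
                return True
    return False


def fetch_x_robots(url):
    """Send a HEAD request and return all X-Robots-Tag header values."""
    request = Request(url, method="HEAD")
    with urlopen(request, timeout=10) as response:
        return response.headers.get_all("X-Robots-Tag") or []
```

A typical call would be `has_noindex(fetch_x_robots("https://example.com/llms.txt"))`, where the URL is a placeholder for your own domain. Running it against the public URL rather than the origin server helps confirm that a CDN or cache has not dropped the header.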

Managing llms.txt files represents a new technical dimension of modern SEO, at the intersection of traditional optimization and the artificial intelligence era.

Proper implementation of HTTP headers, nuanced understanding of indexation directives, and the balance between accessibility for AI and protection against inappropriate indexation require in-depth technical expertise.

For high-volume sites or complex architectures, these optimizations can prove tricky to orchestrate without risk. Support from a specialized SEO agency allows you to benefit from a personalized analysis of your context, secure implementation, and impact monitoring, thus ensuring that your AI optimization strategy doesn't compromise your classic organic visibility.
