Official statement

Other statements from this video (15)

- Does hreflang really boost rankings in a targeted country?

- Should you really reduce the number of pages to optimize your international SEO?

- How does Google really determine the language of a multilingual page?

- Why does Google ignore your page titles if their language doesn't match the content?

- Does Google really use domain authority to rank sites?

- Why won't Googlebot click on your buttons?

- Are JavaScript interstitials really risk-free for SEO?

- Can technical problems really trigger a drop during a Core Update?

- Does Google penalize translated content?

- Can poor-quality machine translations really sabotage your international SEO?

- Should you really use the Indexing API for all your content?

- Can Googlebot access your .htaccess file?

- Does Google really favor its own platforms in search results?

- Does the meta description really influence rankings in Google?

- Should you really choose your structured data based on the rich results you're targeting?

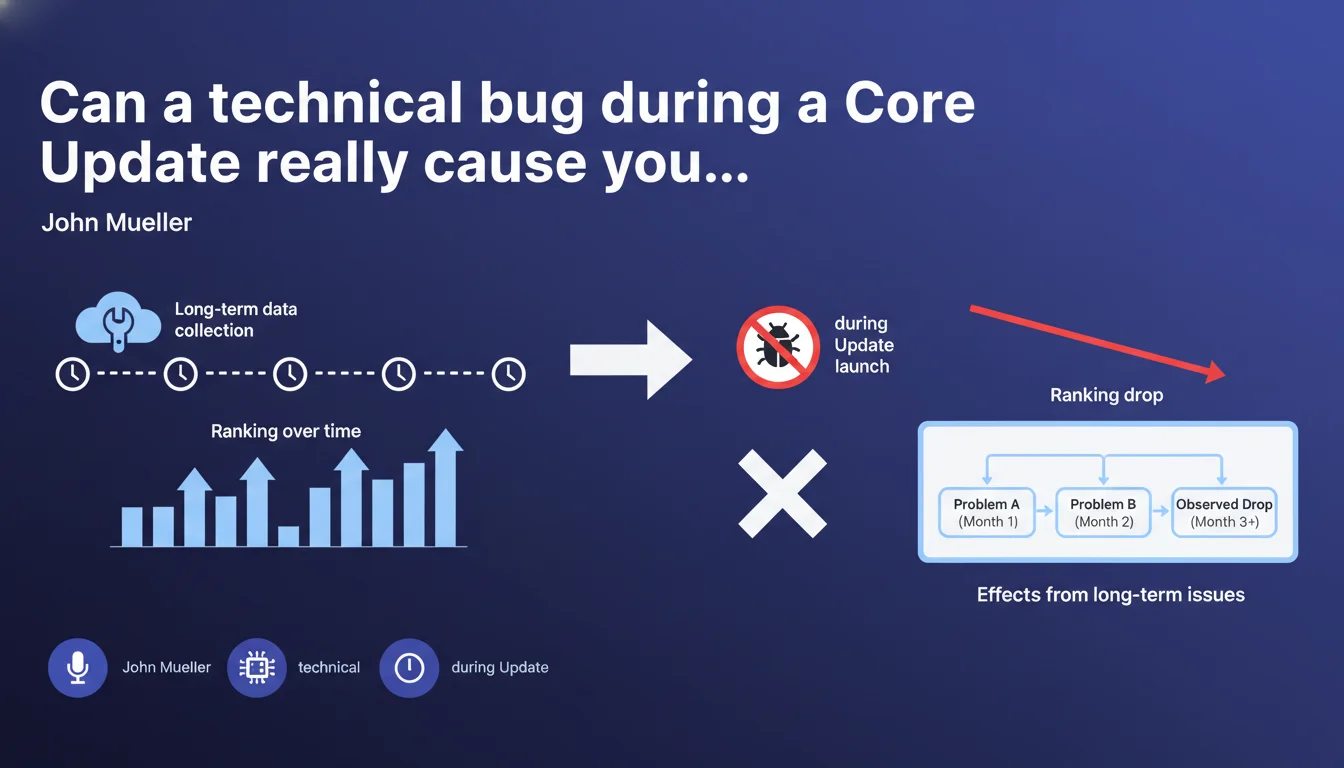

Core Updates are based on data collected over the long term. A technical problem occurring right at the moment of an update deployment will not cause a sudden drop. The drops observed reflect problems accumulated over several months, not a one-off incident.

What you need to understand

Why this clarification about Core Update timing?

Mueller is responding here to a persistent belief in the SEO community: the idea that a technical bug occurring at the wrong time — during the rollout of a Core Update — could trigger a visibility disaster.

That belief is false. Core Updates are not real-time analyses: Google compiles data over weeks or even months, and when an update rolls out, it relies on this consolidated history rather than on an instantaneous snapshot of your site.

What does this change for analyzing drops?

If your site drops during a Core Update, it's not because your server crashed on the Tuesday Google hit the button. It's because quality signals have degraded progressively, often without you noticing.

This challenges the "firefighter" approach where you frantically search for what broke the day before the update. The problem goes back further.

How does Google collect this data over the long term?

Google observes continuously: user behavior, content quality, E-E-A-T signals, editorial consistency. These metrics are aggregated, smoothed, and compared across sites in the same sector.

When a Core Update rolls out, it applies new weights to these already-collected signals. Your position results from a global reevaluation, not from a one-off technical audit.

- Core Updates are based on historical data, not the instantaneous state of your site

- A technical bug occurring during the update will not cause a sudden drop directly linked to that update

- Drops reflect progressive degradation of quality signals over several weeks or months

- The reactive approach (finding the Day One bug) is less relevant than analyzing long-term trends

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, and it explains why some sites whose technical metrics are "in the green" still crash. I've analyzed dozens of cases where Core Web Vitals were perfect, crawling was flawless and Search Console showed zero errors, yet organic traffic fell by 40%.

The problem? Diluted content, weak engagement, declining authority relative to competitors. These quality signals degrade slowly and stay invisible in GSC until the update strikes.

What nuances should we add to this rule?

Careful: Mueller says that a bug occurring just as the update rolls out doesn't cause the drop. But if that bug had been lingering for weeks before the update, it could absolutely have affected the data Google collected.

Concrete example: your XML sitemap breaks in early January and Google loses track of 30% of your pages. When a Core Update rolls out two months later, in March, the damage is already done. What matters is not the timing but the duration of exposure.
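To make the "duration of exposure" point concrete, here is a toy Python model. It assumes, purely hypothetically, that a quality signal is averaged over a trailing 90-day window of daily scores; Google publishes neither the window length nor the aggregation method, so this is an illustration of the argument's shape, not of Google's algorithm. A one-day incident barely moves the average, while a two-month one drags it down sharply.

```python
# Toy model only: NOT Google's algorithm. Assumes (hypothetically) that a
# quality signal is averaged over a trailing window of daily scores.
WINDOW_DAYS = 90  # hypothetical collection window; Google gives no figure


def windowed_score(daily_scores: list[float], window: int = WINDOW_DAYS) -> float:
    """Mean of the last `window` daily scores (1.0 = healthy, 0.0 = broken)."""
    recent = daily_scores[-window:]
    return sum(recent) / len(recent)


def simulate(days_broken: int, total_days: int = 180) -> float:
    """Site is healthy every day except the final `days_broken` days."""
    scores = [1.0] * (total_days - days_broken) + [0.0] * days_broken
    return windowed_score(scores)


one_day_bug = simulate(days_broken=1)     # bug on the day the update ships
two_month_bug = simulate(days_broken=60)  # e.g. sitemap broken since early January

print(f"1-day incident:  {one_day_bug:.3f}")    # 1-day incident:  0.989
print(f"60-day incident: {two_month_bug:.3f}")  # 60-day incident: 0.333
```

The 90-day window and the binary healthy/broken score are arbitrary simplifications; changing them changes the numbers but not the contrast between a one-off incident and a lingering one.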

[To verify] Mueller's phrasing remains vague about the exact collection window. Is it 3 weeks? 3 months? 6 months? Google never gives a precise figure, which complicates retrospective analysis.

In what cases does this rule not apply?

If your site suffers a critical indexing problem (an accidental noindex across the entire site, a robots.txt file blocking Googlebot), that's a different situation. A Core Update is no longer what's at play; it's plain deindexation.

Similarly, a manual spam action or a targeted algorithmic penalty (Spam Update, Link Spam Update) can strike independently of the Core Update cycle. Don't conflate the two.

Practical impact and recommendations

What should you do concretely before and after a Core Update?

Stop obsessively monitoring your logs the day before an announced Core Update. Instead, set up a continuous monitoring routine covering editorial quality, engagement metrics (time on page, scroll depth) and thematic authority.
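A continuous routine can be as simple as a weekly check of each qualitative metric against its own recent history. The sketch below is a hypothetical example: it assumes you export one value per week (for instance average scroll depth) and flags the latest week when it falls more than 15% below the median of the previous 12 weeks. The function name `check_metric` and both thresholds are illustrative choices, not recommendations from Google.

```python
from statistics import median


def check_metric(weekly_values: list[float], baseline_weeks: int = 12,
                 drop_threshold: float = 0.15) -> tuple[bool, float]:
    """Return (alert, relative_change) of the latest week vs the baseline median."""
    if len(weekly_values) < baseline_weeks + 1:
        raise ValueError("not enough history for a baseline")
    baseline = median(weekly_values[-baseline_weeks - 1:-1])  # previous N weeks
    if baseline == 0:
        raise ValueError("baseline is zero; relative change is undefined")
    latest = weekly_values[-1]
    change = (latest - baseline) / baseline
    return change < -drop_threshold, change


# Hypothetical weekly average scroll depth (%), oldest first.
history = [62, 61, 63, 60, 62, 61, 59, 60, 58, 57, 56, 55, 50]
alert, change = check_metric(history)
print(f"alert={alert} change={change:.1%}")  # alert=True change=-16.7%
```

Using the median rather than the mean keeps a single unusual week in the baseline from masking or triggering an alert. A slow drift also drags a 12-week baseline down with it, so this check complements, rather than replaces, the 3-to-6-month comparisons recommended later in this article.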

Before the update, conduct a qualitative content audit: thin pages, outdated content, poorly covered topics. That's where you'll find real vulnerabilities, not in a Lighthouse test.

What mistakes to avoid if your rankings drop post-update?

Don't panic and don't overhaul your entire site in 48 hours. Google needs time to stabilize positions after a Core Update (often 2-3 weeks). A hasty reaction can make things worse.

Also avoid focusing solely on technical aspects. If your competitors are producing more in-depth, better-sourced content with real expertise, that's the problem, not your page load time.

How do you identify signals that have degraded over the long term?

Compare your metrics over 3 to 6 month rolling periods: bounce rate, pages per session, social shares, backlinks gained vs lost. Watch whether your flagship pages are gradually losing positions, even outside Core Update periods.
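The rolling-period comparison can be sketched in Python. This assumes a daily export of (date, page, average position) rows, such as one you might assemble from Search Console performance data; the function name `flag_gradual_losses`, the 90-day period and the 2-position threshold are hypothetical choices. Lower position numbers are better, so a positive difference means the page is slipping.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import mean


def flag_gradual_losses(rows, today: date, period_days: int = 90,
                        min_loss: float = 2.0) -> dict[str, float]:
    """Flag pages whose mean position worsened between two consecutive periods.

    `rows` is an iterable of (day, page, position) tuples; lower is better.
    """
    recent_start = today - timedelta(days=period_days)
    prior_start = recent_start - timedelta(days=period_days)
    recent, prior = defaultdict(list), defaultdict(list)
    for day, page, position in rows:
        if recent_start <= day < today:
            recent[page].append(position)
        elif prior_start <= day < recent_start:
            prior[page].append(position)
    flagged = {}
    for page in recent.keys() & prior.keys():  # pages seen in both periods
        loss = mean(recent[page]) - mean(prior[page])  # positive = slipping
        if loss >= min_loss:
            flagged[page] = round(loss, 2)
    return flagged


# Hypothetical data: one page sliding slowly, one stable page.
today = date(2022, 3, 1)
rows = []
for i in range(180):
    day = today - timedelta(days=180 - i)
    rows.append((day, "/guide", 3 + 5 * i / 179))  # slow slide from 3 to 8
    rows.append((day, "/pricing", 4.0))            # stable at 4
print(flag_gradual_losses(rows, today))
```

No single day in the "/guide" series looks alarming, which is exactly the kind of gradual slide a day-by-day check misses and a period-over-period comparison surfaces.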

Use tools like Google Trends to see if your topic is declining in interest, or if new players are emerging. Sometimes the "drop" is just that the market evolved and you didn't follow.

- Set up continuous monitoring of qualitative signals (engagement, authority) and not just technical ones

- Audit your content every quarter to identify thin, outdated, or poorly positioned pages

- Don't make massive changes to your site within 48 hours of a drop; let the update stabilize

- Analyze your competitors: which content performs better than yours and why?

- Monitor your sector's evolution via Google Trends and semantic analysis of SERPs

- Document changes to your site with precise timestamps to correlate or rule out technical causes
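A timestamped change log only pays off if you can query it against an update date. This minimal sketch assumes a hypothetical `Change` record, a hypothetical rollout date and an arbitrary 120-day lookback, since Google does not say how far back its data goes. Changes inside the window are candidates worth investigating; changes made after the rollout began can usually be ruled out, in line with Mueller's statement.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Change:
    when: datetime      # precise timestamp of the deploy or content change
    description: str


def changes_before_update(log: list[Change], update_start: datetime,
                          lookback_days: int = 120) -> list[Change]:
    """Changes made in the `lookback_days` before the update began, oldest first."""
    window_start = update_start - timedelta(days=lookback_days)
    hits = [c for c in log if window_start <= c.when < update_start]
    return sorted(hits, key=lambda c: c.when)


log = [
    Change(datetime(2021, 10, 5, 9, 30), "Redesigned category templates"),
    Change(datetime(2022, 1, 4, 14, 0), "XML sitemap generator upgraded"),
    Change(datetime(2022, 3, 20, 8, 15), "CDN configuration changed"),
]
update_start = datetime(2022, 3, 1)  # hypothetical rollout start
for c in changes_before_update(log, update_start):
    print(c.when.isoformat(), c.description)
# 2022-01-04T14:00:00 XML sitemap generator upgraded
```

Here the October redesign falls outside the assumed window and the CDN change happened after the rollout started, so only the sitemap change remains a plausible long-exposure cause.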

❓ Frequently Asked Questions

If a technical bug occurs during a Core Update, can my site still drop because of that bug?

How long before a Core Update does Google collect the data it uses?

My site dropped during a Core Update with no visible technical bug: what should I do?

A competitor rose during the update despite having lots of technical errors: how is that possible?

Do you have to wait for the next Core Update to recover after a drop?


Other SEO insights extracted from this same Google Search Central video · published on 29/04/2022
