Official statement


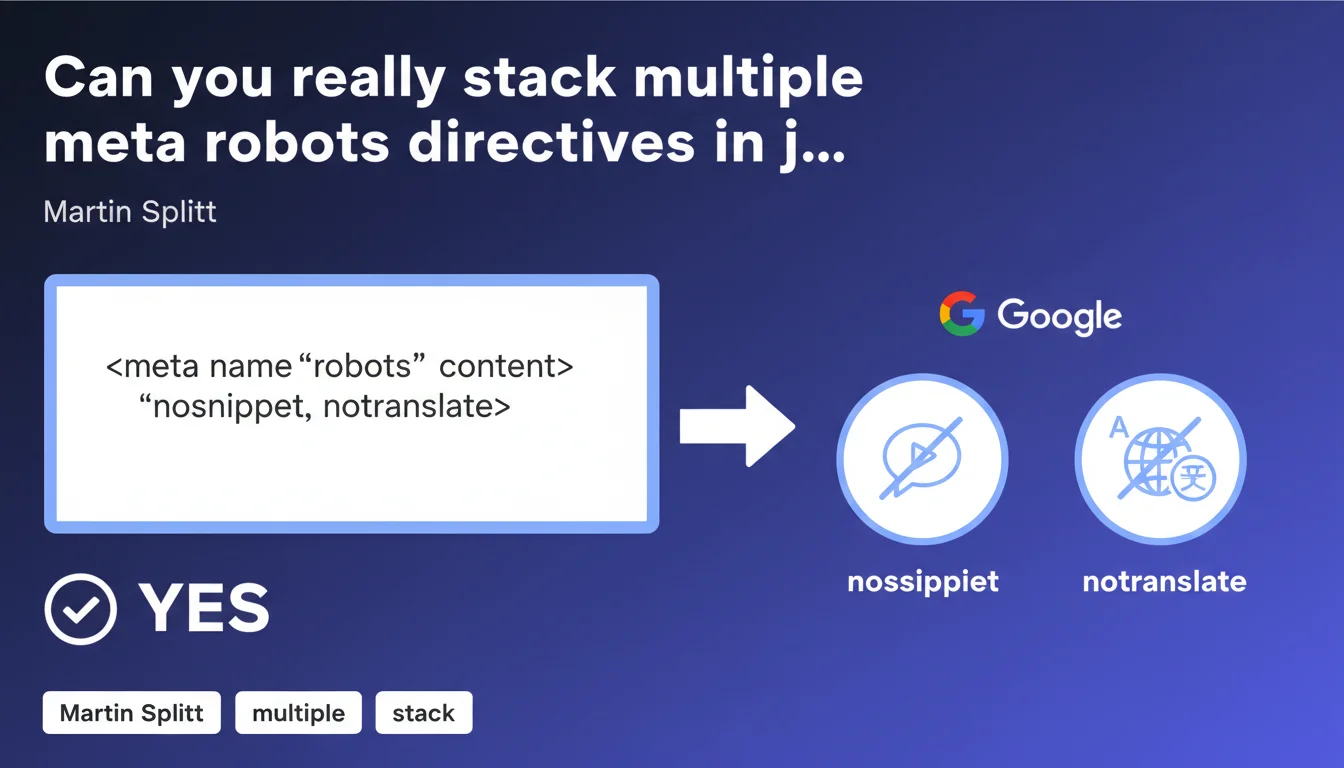

Google confirms you can combine multiple directives in the same meta robots tag — for example nosnippet AND notranslate simultaneously. This practice eliminates the need for multiple tags and simplifies your HTML code. Concretely, it's a win for technical cleanliness with no negative impact on crawling.

What you need to understand

Why is Google clarifying this now?

The question keeps coming up: should you create multiple meta robots tags for each directive, or can you group them together? Martin Splitt puts the debate to rest by explicitly validating the combination in a single tag. This approach has actually been recommended in official documentation for a long time, but many SEOs were still hesitant.

The doubt mostly came from contradictory field reports — some observing strange behaviors with complex combinations. Google clarifies things: technically, it works perfectly. The engine parses the tag and applies each listed directive without hierarchy or conflict.

What directives can you actually combine?

All standard meta robots directives are compatible: noindex, nofollow, nosnippet, notranslate, noarchive, noimageindex, max-snippet, max-image-preview, max-video-preview. You can write <meta name="robots" content="noindex, nofollow, nosnippet"> without risk.

However, watch out for contradictory directives. Combining index AND noindex in the same tag makes no sense — Google will apply the most restrictive directive. Similarly, max-snippet:0 is equivalent to nosnippet, so there's no point stacking them.
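As a rough illustration of the "most restrictive wins" behavior described above, here is a minimal Python sketch. It is a simplified model, not Google's actual parser; the `RESTRICTIVE_OVER` mapping and the `resolve_conflicts` helper are hypothetical names introduced for this example.

```python
# Hypothetical mapping: each permissive directive and its restrictive counterpart.
RESTRICTIVE_OVER = {"index": "noindex", "follow": "nofollow"}


def resolve_conflicts(content: str) -> list:
    """Return the directives of a content value with contradictions removed."""
    tokens = []
    for raw in content.split(","):
        token = raw.strip().lower()
        if token and token not in tokens:
            tokens.append(token)  # drop exact duplicates, keep original order

    # Most restrictive wins: drop "index" when "noindex" is also present, etc.
    for permissive, restrictive in RESTRICTIVE_OVER.items():
        if permissive in tokens and restrictive in tokens:
            tokens.remove(permissive)

    # max-snippet:0 is equivalent to nosnippet, so keep only nosnippet.
    if "nosnippet" in tokens and "max-snippet:0" in tokens:
        tokens.remove("max-snippet:0")
    return tokens


print(resolve_conflicts("index, noindex, nosnippet, max-snippet:0"))
# ['noindex', 'nosnippet']
```

A helper like this could run in a template or build step to flag tags that contain contradictions before they reach production.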

What's the impact on crawling and indexing?

No measurable negative impact. Googlebot processes the meta robots tag like any other HTML element: it reads the tag, splits the content on commas, and applies each directive. Whether you use one tag or several has no influence on crawl budget or indexing speed.

- A single meta robots tag is enough for all your directives — no need to multiply them.

- Directives are separated by commas and processed independently by Googlebot.

- No hierarchy between directives: they all apply simultaneously.

- Avoid duplicates or contradictions in the same tag (e.g., index, noindex).

- This syntax works equally well with name="robots" as with name="googlebot" to target Google specifically.
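The points above can be sketched in a few lines of Python. This is an illustrative model of comma-separated parsing, not Googlebot's implementation; `parse_robots_content` is a hypothetical helper name. It tolerates optional spaces after commas and keeps the value of directives such as max-image-preview.

```python
from typing import Optional


def parse_robots_content(content: str) -> dict:
    """Split a meta robots content value into {directive: value or None}."""
    directives: dict = {}
    for raw in content.split(","):
        token = raw.strip().lower()
        if not token:
            continue  # skip empty entries such as a trailing comma
        # Split on the first colon only, so "max-snippet:150" keeps its value.
        name, sep, value = token.partition(":")
        parsed_value: Optional[str] = value.strip() if sep else None
        directives[name.strip()] = parsed_value
    return directives


parsed = parse_robots_content("nosnippet, notranslate,max-image-preview:large")
print(parsed)
# {'nosnippet': None, 'notranslate': None, 'max-image-preview': 'large'}
```

Because each token is stripped before use, "noindex, nofollow" and "noindex,nofollow" produce the same result in this sketch.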

SEO Expert opinion

Does this statement really change anything in the field?

Let's be honest: no. Most experienced SEOs were already stacking multiple directives in a single meta robots tag without asking questions. Martin Splitt's confirmation is more of an official validation than a technical revelation. It reassures those who were still in doubt, nothing more.

The real value is in clarifying edge cases. Some CMS platforms or WordPress plugins were generating multiple tags by default — creating redundant code. Now we can state without equivocation that a single tag grouping all directives is strictly equivalent and even preferable for HTML cleanliness.

Are there cases where this rule might fail?

Yes, in differentiated targeting scenarios. If you want to apply specific directives to Googlebot and others to Bingbot, you'll need to create two separate tags: name="googlebot" and name="bingbot". It's impossible to group everything in a single name="robots" tag if you're targeting different behaviors per search engine.
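To make the per-crawler case concrete, here is a small Python sketch. It assumes that a generic name="robots" tag applies to every crawler and that a crawler-specific tag adds its directives on top, which matches how Google documents the behavior for Googlebot; other engines may behave differently. The `effective_directives` function and the sample tags are hypothetical.

```python
def effective_directives(tags: list, crawler: str) -> set:
    """Collect the directives that apply to one crawler.

    tags is a list of (name, content) pairs taken from <meta> elements;
    crawler is a user-agent token such as "googlebot" or "bingbot".
    """
    applied = set()
    for name, content in tags:
        # Assumption: "robots" targets all crawlers, a named tag only its own.
        if name.lower() in ("robots", crawler.lower()):
            applied.update(
                token.strip().lower()
                for token in content.split(",")
                if token.strip()
            )
    return applied


page_tags = [
    ("robots", "nosnippet"),
    ("googlebot", "noindex"),
    ("bingbot", "noarchive"),
]
print(sorted(effective_directives(page_tags, "googlebot")))
# ['noindex', 'nosnippet']
print(sorted(effective_directives(page_tags, "bingbot")))
# ['noarchive', 'nosnippet']
```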

Another nuance: some directives like max-snippet or max-image-preview accept values. If you combine max-snippet:150, max-image-preview:large, make sure your CMS or framework isn't adding unwanted spaces after commas, since some poorly coded parsers might misinterpret them. Verify this on your specific environments, especially if you're using JavaScript to generate tags client-side.

Should you audit your site to fix multiple tags?

Not a critical priority. If your site outputs several successive meta robots tags for different directives, Google will merge them and apply the entire set. There is no measurable negative impact, just messier code.

That said, during a redesign or technical optimization pass, take the opportunity to consolidate your tags. Fewer HTML lines mean better readability for audits, easier debugging, and clearer CMS templates. It's a developer convenience thing, not an SEO emergency.

Practical impact and recommendations

What should you actually do on your site?

Audit your page templates to identify multiple meta robots tags. If you find several per page for different directives, combine them into a single tag separated by commas. Example: instead of <meta name="robots" content="noindex"><meta name="robots" content="nofollow">, write <meta name="robots" content="noindex, nofollow">.
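One possible way to automate that consolidation is sketched below with Python's standard `html.parser` module. The `MetaRobotsCollector` class and `consolidated_tag` function are hypothetical names, and the sketch only handles name="robots" tags, not crawler-specific ones.

```python
from html.parser import HTMLParser


class MetaRobotsCollector(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() != "robots":
            return
        for raw in (attr.get("content") or "").split(","):
            token = raw.strip().lower()
            if token and token not in self.directives:
                self.directives.append(token)  # de-duplicate, keep order


def consolidated_tag(html: str) -> str:
    """Build a single meta robots tag from all the tags found in html."""
    collector = MetaRobotsCollector()
    collector.feed(html)
    return '<meta name="robots" content="{}">'.format(
        ", ".join(collector.directives)
    )


page = (
    '<head><meta name="robots" content="noindex">'
    '<meta name="robots" content="nofollow"></head>'
)
print(consolidated_tag(page))
# <meta name="robots" content="noindex, nofollow">
```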

On WordPress, Yoast SEO and Rank Math already handle this syntax correctly, but some third-party plugins or custom code can generate duplicates. Check the HTML source of your critical pages (homepage, categories, product pages) to detect anomalies.

What errors should you absolutely avoid?

Never combine contradictory directives in the same tag. Writing content="index, noindex" makes no sense — Google will apply the most restrictive directive (noindex). Same logic applies to follow/nofollow.

Also avoid stray spaces after commas if your technical environment is sensitive. Google handles both noindex, nofollow and noindex,nofollow, but some custom parsers might break. Test on your internal tools.

How do you verify everything is working correctly?

Use the URL inspection tool in Google Search Console. Paste the URL in question, click "Test live URL", then open "View tested page" and check the "HTML" tab. The rendered HTML shows the meta robots tag as Googlebot received it, so you can confirm that all your combined directives are present.

Complement this with a Screaming Frog or Sitebulb crawl to scan your entire site. Filter pages with multiple meta robots tags, then check if they carry identical directives (unnecessary redundancy) or different directives (needs consolidation).
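If you prefer to script that check on exported HTML, the classification can be sketched as follows. This is a minimal example using Python's standard library, not a replacement for a crawler; `MetaRobotsFinder`, `audit_page`, and the three status labels are hypothetical.

```python
from html.parser import HTMLParser


class MetaRobotsFinder(HTMLParser):
    """Record the content value of each <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.contents = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.contents.append(attr.get("content") or "")


def audit_page(html: str) -> str:
    """Classify a page as 'ok', 'redundant' or 'consolidate'."""
    finder = MetaRobotsFinder()
    finder.feed(html)
    directive_sets = [
        frozenset(t.strip().lower() for t in content.split(",") if t.strip())
        for content in finder.contents
    ]
    if len(directive_sets) <= 1:
        return "ok"  # zero or one tag: nothing to fix
    if len(set(directive_sets)) == 1:
        return "redundant"  # several tags carrying identical directives
    return "consolidate"  # several tags carrying different directives


print(audit_page('<meta name="robots" content="noindex, nofollow">'))
# ok
```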

- Group all meta robots directives in a single tag per page

- Separate directives by commas without contradiction (no index+noindex)

- Verify syntax in Google Search Console's URL inspection tool

- Crawl the site with Screaming Frog to detect multiple or duplicate tags

- Test critical pages under real conditions after modification (CDN cache, client-side JavaScript)

- Document your directive choices in an internal guide to prevent future errors

❓ Frequently Asked Questions

Can you combine noindex and nofollow in the same meta robots tag?

If I have two successive meta robots tags with different directives, what happens?

Do X-Robots-Tag HTTP header directives take precedence over meta robots?

Should you put spaces after the commas in content="noindex, nofollow"?

Can you combine max-snippet:150 and nosnippet in the same tag?

Source: Google Search Central video, published on 04/12/2024.
