Official statement

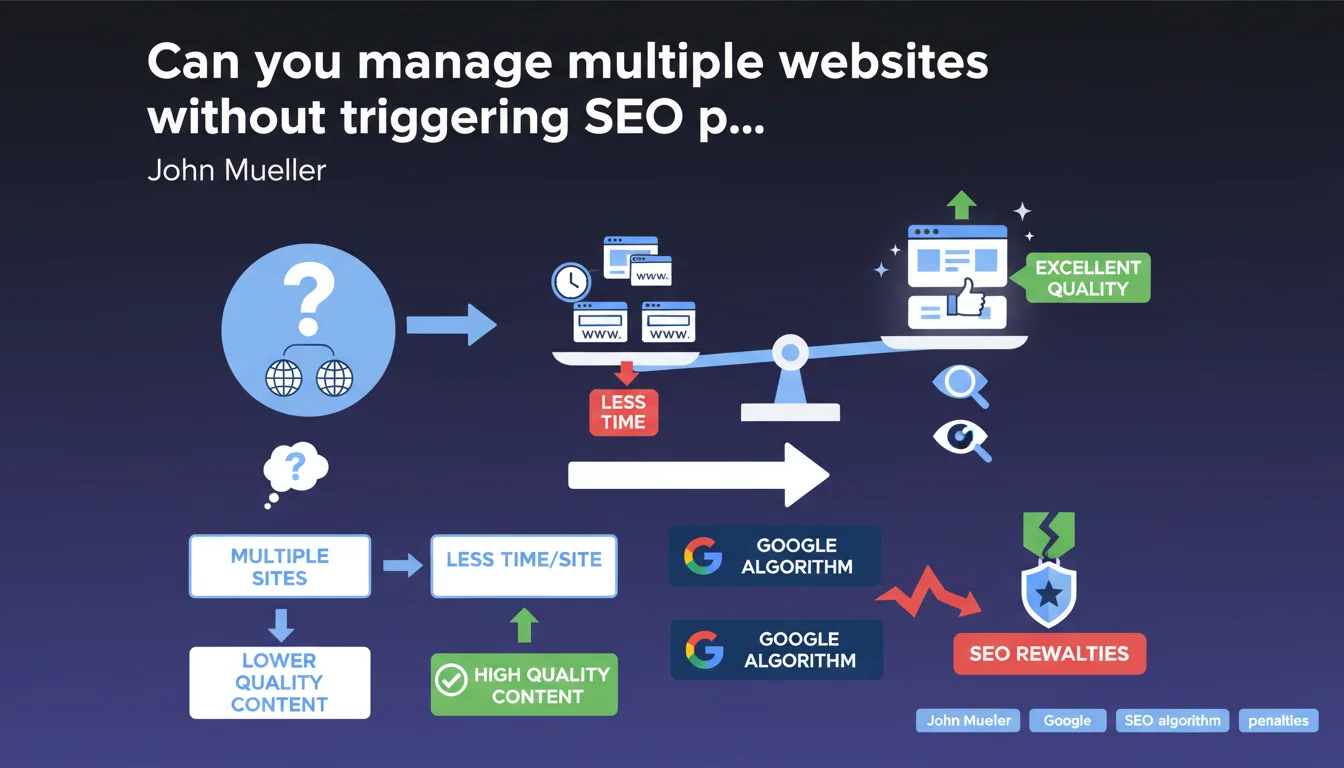

Google states that owning multiple websites is not penalized in itself. The real issue? Spreading your time and resources across multiple projects tends to dilute quality, and it's this decline in quality that Google's algorithms detect and demote.

What you need to understand

Does Google penalize owning multiple websites?

No. Owning 2, 10, or 50 websites doesn't trigger any automatic algorithmic penalty. Google doesn't count your domains and won't apply a demotion just because you're diversifying your online presence.

The nuance — and it's crucial — is that multiplying sites mechanically dilutes your capacity to produce quality content, maintain solid technical infrastructure, manage backlinks, and optimize UX. It's this decline in quality that Google detects.

What does Google mean by "lower quality"?

When Mueller talks about quality that algorithms can detect, several dimensions are likely in play: duplicate or paraphrased content across your sites, thin pages with no real added value, neglected internal linking, slow loading, and weak engagement signals.

E-E-A-T indicators (Experience, Expertise, Authoritativeness, Trustworthiness) also play a role: a single well-maintained site projects more credibility than a galaxy of microsites abandoned after a few months.

In what cases does this multiplication truly become a problem?

Typically with Private Blog Networks (PBNs) created to manipulate backlinks, automated content farms, or cloned microsites in similar niches. Google recognizes these patterns, especially when sites share the same servers, templates, or fictitious authors.

Conversely, managing multiple distinct brands, each with its own audience and original content, poses no issues as long as each site meets an acceptable quality threshold.

- No automatic penalty for multi-site ownership

- The real risk: time and resources spread thin, lowering quality on each site

- Google detects quality through content, technical structure, and UX signals

- Manipulative site networks (PBNs) remain clearly in the crosshairs

SEO Expert opinion

Is this position consistent with field observations?

Broadly, yes. We observe that media groups or multinational brands manage dozens of sites without penalty, as long as each property maintains solid editorial standards. The Washington Post, Condé Nast, Le Groupe Marie Claire — no issues whatsoever.

Where it breaks down, and Mueller hints at this without naming it, is when SEO professionals create low-cost satellite sites to funnel traffic to a main site or monetize long-tail niches. Google doesn't penalize the multi-site model; it penalizes mediocrity at scale.

What nuances should be added to this statement?

Mueller remains intentionally vague about the acceptable quality threshold. It is still unverified at what point dispersion becomes algorithmically detectable: Google publishes no official metrics, so we're working blind.

Another blind spot: the treatment of affiliate or comparison sites. Many players manage multiple thematic domains (finance, tech, health) with variable content quality. Google claims to detect quality — yet the SERPs are still full of mediocre microsites that rank reasonably well.

Finally, the question of shared footprints (same Analytics, Search Console, servers, templates) isn't addressed. Can Google link your sites together? Officially, no — but observations suggest recurring patterns (structure, content, links) can signal a network.

In what cases doesn't this rule really apply?

If you have the resources — a substantial editorial team, per-site SEO budget, technical expertise — managing 10 or 20 quality sites is perfectly viable. Mueller's advice is aimed mainly at solopreneurs or small structures spreading themselves too thin.

Another exception: event or seasonal sites. Creating a dedicated domain for a trade show or a time-limited campaign and then no longer updating it poses no issue, as long as the content stays online and accurate rather than decaying into a digital graveyard.

Practical impact and recommendations

What should you do if you're already managing multiple sites?

Audit each property individually as if it were your only site. Ask yourself: does this site provide unique value, or is it a clone of another domain with a few permuted keywords?
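One way to put a number on the "clone" question is to compare pages from two of your sites for near-duplicate wording. The sketch below uses word shingles and Jaccard similarity, a common text-overlap technique; it is not a description of how Google measures duplication, and the shingle size `k` and any threshold you apply to the score are assumptions to tune on your own content.

```python
import re


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles (overlapping word windows) in text."""
    words = re.findall(r"\w+", text.lower())
    if not words:
        return set()
    if len(words) < k:
        # Text shorter than one window: treat the whole text as one shingle.
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical page texts taken from two sites in the same portfolio.
page_a = "the quick brown fox"
page_b = "the quick brown dog"
score = jaccard(page_a, page_b)
```

A score close to 1.0 means the two pages share almost all of their phrasing, which is the situation the audit question is meant to catch; a score near 0.0 means the wording is largely independent.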

If some sites are languishing (stagnant traffic, outdated content, neglected maintenance), consolidate. Redirect them to your main domain via clean 301s, or shut them down entirely if they carry no meaningful traffic or link equity. Fewer, better-maintained sites generally beat a galaxy of zombie projects.
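For the consolidation step, a redirect map is easier to review before it goes live if it is generated rather than typed by hand. The following is a minimal sketch that maps each URL on a retired domain to the same path on the main domain, optionally under a path prefix. The host names and the `prefix` parameter are hypothetical, and the path-preserving mapping is an assumption: in practice each old URL should point to its closest real equivalent, which may require a manual mapping.

```python
from urllib.parse import urlsplit, urlunsplit


def redirect_target(old_url: str, main_host: str, prefix: str = "") -> str:
    """Map a URL on a retired domain to the same path on the main domain."""
    parts = urlsplit(old_url)
    # Keep the original path and query string; force HTTPS; drop any fragment.
    path = prefix.rstrip("/") + (parts.path or "/")
    return urlunsplit(("https", main_host, path, parts.query, ""))


# Hypothetical URLs from a site being folded into the main domain.
old_urls = [
    "http://old-site.example/guide/seo?page=2",
    "http://old-site.example",
]
redirect_map = {u: redirect_target(u, "main-site.example", "/archive") for u in old_urls}
```

The resulting dictionary can then be turned into whatever rule format your server or CDN expects, with each rule returning a 301 status.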

What mistakes must you absolutely avoid?

Don't create artificial link networks between your sites. Google can detect unnatural linking patterns, especially if your domains have no editorial reason to cite each other.
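A rough self-check for cross-linking is to measure what share of a site's outbound links point to your own other domains. The sketch below assumes you already have a list of outbound link URLs from a crawl; the function name and example domains are hypothetical, and Google publishes no threshold for this ratio, so treat the result as a prompt for manual review rather than a pass or fail score.

```python
from urllib.parse import urlsplit


def portfolio_link_share(outbound_links, own_domains) -> float:
    """Fraction of outbound links that point to your own other domains."""
    own = {d.lower() for d in own_domains}
    hosts = []
    for url in outbound_links:
        host = (urlsplit(url).hostname or "").lower().removeprefix("www.")
        if host:
            hosts.append(host)
    if not hosts:
        return 0.0
    return sum(1 for h in hosts if h in own) / len(hosts)


# Hypothetical outbound links found on one site of the portfolio.
links = [
    "https://www.site-b.example/a",
    "https://site-c.example/",
    "https://news.example.org/x",
    "https://other.example.net/",
]
share = portfolio_link_share(links, {"site-b.example", "site-c.example"})
```

A high share on a site whose topic gives it no editorial reason to cite your other properties is the pattern worth investigating.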

Avoid duplicate or near-duplicate content across your properties. If you republish an article from site A on site B, add a rel="canonical" link on the site B copy that points to the original URL on site A, and clearly credit the original source.
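To confirm that a republished copy actually declares its original, you can extract the canonical URL from the copy's HTML and compare it with the source URL. This is a minimal sketch using Python's standard `html.parser`; the URLs are placeholders, and it only reads the first `<link rel="canonical">` in the markup, ignoring canonicals sent through HTTP headers.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        # rel can hold several space-separated tokens.
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels:
            self.canonical = attr.get("href")


def canonical_of(html: str):
    """Return the canonical URL declared in the HTML, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical markup of the copy published on site B.
copy_html = (
    '<html><head><link rel="canonical" '
    'href="https://site-a.example/original-article"></head><body></body></html>'
)
points_to_original = canonical_of(copy_html) == "https://site-a.example/original-article"
```

If `canonical_of` returns `None` or a URL on the copy's own domain, the republished page is presenting itself as the original.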

Don't share the same templates or cloned structures without customization. Google recognizes visual and technical fingerprints — a network of 15 sites with the same poorly customized WordPress theme sends a negative signal.

How do you verify that your multi-site strategy remains healthy?

- Each site has its own editorial identity, unique angle, distinct audience

- Content on each domain is original and kept up to date — no copy-pasting between sites

- Each site has dedicated resources (time, budget, team) to guarantee consistent quality

- Your sites' backlink profiles are natural and independent — no artificial cross-link schemes

- You can justify the existence of each domain with clear business or editorial logic — not just SEO traffic capture

- Your sites don't share duplicate content or identical technical structures without reason

Managing multiple sites is not a problem if each property meets a solid quality threshold. The key? Don't disperse your resources to the point of lowering overall standards. One excellent site beats five mediocre ones.

If you notice that certain domains are stagnating or no longer receiving necessary attention, consolidate. Cleanly redirect to your main site or shut down zombie projects. Quality always trumps quantity.

These trade-offs (quality audits, domain consolidation, multi-site architecture optimization) demand specialized expertise and time. If you manage a complex portfolio, support from a specialized SEO agency can help you structure a coherent strategy, avoid technical pitfalls, and maximize ROI from each property.

❓ Frequently Asked Questions

How many sites can you manage without SEO risk?

Can Google detect that several sites belong to the same owner?

Should I avoid links between my different sites?

Is it better to shut down an underperforming site or leave it online?

Are PBNs (private blog networks) always detected by Google?

Other SEO insights extracted from this same Google Search Central video · published on 18/04/2024
