Official statement

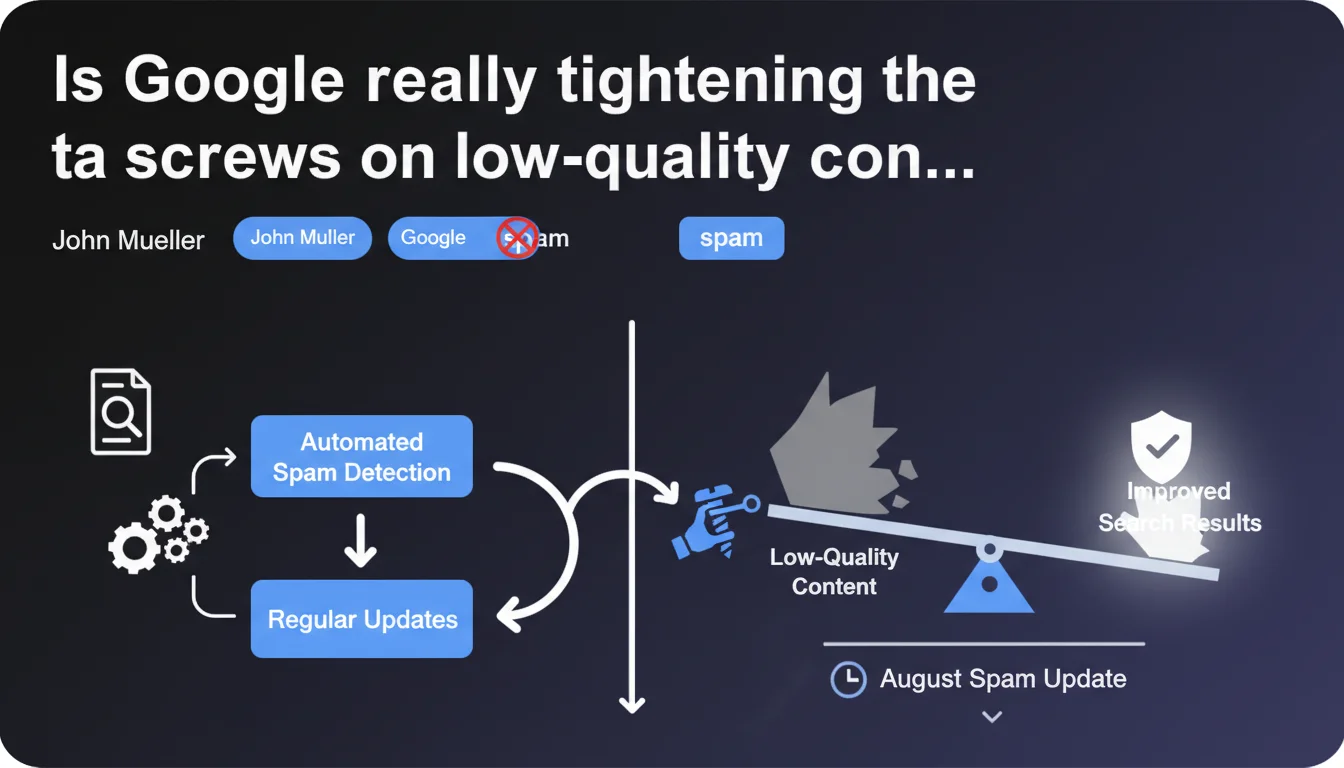

Google announces yet another update to its anti-spam systems in August. The stated goal: improve content quality in the SERPs. In practice, sites that push the boundaries of Google's guidelines risk getting hit hard, or not at all, depending on how mature the deployed algorithms really are.

What you need to understand

Why is Google communicating so sparingly about this update?

John Mueller's statement is deliberately minimal. Google reveals neither the spam techniques being targeted, nor the scale of deployment, nor priority sectors.

This restraint isn't accidental. By staying vague, Google avoids giving spammers a roadmap to circumvent the new filters. It's a classic strategy: announce the action without revealing the mechanics.

What does "improving content quality" really mean in practical terms?

Google never precisely defines what "quality content" is. In this context, understand that automated systems will likely target repetitive patterns, content farms, satellite site networks, and link manipulation schemes.

But be careful: "improving quality" doesn't mean all mediocre content will plummet. Google is primarily seeking to eliminate flagrant abuse, not to rewrite its entire index.

Is this a manual update or entirely automated?

The phrasing "automated systems" is clear: this is an algorithmic intervention, not a manual action. Google's teams won't review your site individually.

This also means false positives are possible. Legitimate sites can be impacted if their signals resemble, in the algorithm's eyes, those of a spam site.

- Automated update: no human intervention, so no direct recourse

- Vague communication: Google won't reveal its criteria to avoid helping spammers

- Focus on abuse: mediocre but honest content is probably not the priority target

- False positive risk: clean sites can be hit by mistake

SEO Expert opinion

Is this statement consistent with what we're seeing in the real world?

Let's be honest: Google has been announcing "regular updates" for years. Yet we still see garbage sites occupying the first page on certain competitive queries.

That doesn't mean Google is doing nothing, but the effectiveness of these systems varies enormously depending on the vertical. In certain sectors (health, finance), filters are draconian. In others (local niches, long tail), you still find well-ranked content farms.

What types of spam might this update target?

If we go by typical patterns, Google will probably crack down on unedited AI-generated content produced at scale, poorly disguised PBNs (private blog networks), and sites that recycle third-party content without adding value.

The August timing is interesting. Historically, Google often rolls out updates before the year-end e-commerce peak season. The idea: clean up the SERPs before the rush of transactional searches.

Should we expect brutal traffic swings?

Probably not for most sites. Spam updates aren't core updates: they target identifiable abuse cases, not global relevance criteria.

If you've never played fast and loose with the guidelines, you shouldn't see anything move. If you've taken some liberties... it's time to check your analytics every morning.

Practical impact and recommendations

What should you concretely do if you think you're in the gray zone?

First step: audit your backlink sources. Use Search Console, Ahrefs, or Majestic to identify suspicious referring domains. If you've bought links or participated in site networks, now's the time to disavow.

Second step: review your content production. If you're publishing 50 ChatGPT-generated articles per week with zero human editing, you're probably in the crosshairs. The solution? Slow down the pace, add value, personalize.
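For the backlink audit in the first step, the list of suspicious referring domains can be turned into a disavow file. The sketch below assumes you have already collected those domains by hand or from a tool export. The function name `build_disavow_file` and the example domains are hypothetical. The output follows the plain-text format Google's disavow tool accepts: one `domain:` rule per line, with `#` marking comment lines.

```python
def build_disavow_file(suspicious_domains, note=""):
    """Return disavow-file text with one `domain:` rule per unique domain.

    `suspicious_domains` may contain bare domains or full URLs.
    Stripping a leading "www." is a simplifying assumption of this sketch.
    """
    lines = []
    if note:
        lines.append(f"# {note}")  # lines starting with "#" are comments

    seen = set()
    for raw in suspicious_domains:
        domain = raw.strip().lower()
        # Drop the scheme, then anything after the host name.
        for prefix in ("https://", "http://"):
            if domain.startswith(prefix):
                domain = domain[len(prefix):]
        domain = domain.split("/")[0]
        if domain.startswith("www."):
            domain = domain[4:]
        # Skip blanks and duplicates so each domain is listed once.
        if domain and domain not in seen:
            seen.add(domain)
            lines.append(f"domain:{domain}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical domains, for illustration only.
    text = build_disavow_file(
        ["https://www.spam-links.example/page", "pbn.example"],
        note="Links bought through a site network",
    )
    with open("disavow.txt", "w", encoding="utf-8") as handle:
        handle.write(text)
```

Review the generated file by hand before uploading it: disavowing legitimate referring domains can hurt more than it helps.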

How can I verify if my site has been impacted?

Monitor your impressions and clicks in Search Console on a weekly basis. A sudden drop after mid-August should alert you.
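The weekly check can be automated once clicks are aggregated per week, for example from a Search Console performance export. This is a minimal sketch: the function name `flag_weekly_drops`, the 30% threshold, and the sample figures are all assumptions made for illustration, not values Google publishes.

```python
def flag_weekly_drops(weekly_clicks, threshold=0.30):
    """Return (week, relative_change) pairs for sharp week-over-week drops.

    `weekly_clicks` is a chronologically ordered list of (week_label, clicks).
    A week is flagged when clicks fall by at least `threshold` (0.30 = 30%)
    compared with the previous week.
    """
    flagged = []
    for (_, previous), (week, current) in zip(weekly_clicks, weekly_clicks[1:]):
        if previous == 0:
            continue  # no baseline to compare against
        change = (current - previous) / previous
        if change <= -threshold:
            flagged.append((week, round(change, 2)))
    return flagged


if __name__ == "__main__":
    # Made-up numbers, for illustration only.
    sample = [("week 1", 1000), ("week 2", 980), ("week 3", 600), ("week 4", 590)]
    for week, change in flag_weekly_drops(sample):
        print(f"{week}: clicks changed by {change:.0%} vs the previous week")
```

The same function works for impressions. Seasonal sites may need a year-over-year comparison instead, since a week-over-week drop can simply reflect normal demand cycles.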

Also check your rankings on strategic keywords. If you drop from page 1 to page 3 overnight without changing anything, it's probably the algorithm.
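The "page 1 to page 3" check can be expressed as a comparison between two ranking snapshots. The sketch below assumes 10 organic results per page and uses hypothetical names (`results_page`, `page_drops`) and made-up keywords. The snapshots would come from whatever rank tracking tool you already use.

```python
def results_page(position, per_page=10):
    """Map a 1-based ranking position to its results page (assumes 10 per page)."""
    return (position - 1) // per_page + 1


def page_drops(before, after, min_pages=2):
    """Return {keyword: (old_page, new_page)} for keywords that fell by
    at least `min_pages` result pages between two ranking snapshots.

    `before` and `after` map keyword -> ranking position (1 = top result).
    Keywords missing from `after` are skipped rather than guessed at.
    """
    drops = {}
    for keyword, old_position in before.items():
        new_position = after.get(keyword)
        if new_position is None:
            continue
        old_page = results_page(old_position)
        new_page = results_page(new_position)
        if new_page - old_page >= min_pages:
            drops[keyword] = (old_page, new_page)
    return drops


if __name__ == "__main__":
    # Made-up snapshots, for illustration only.
    before = {"blue widgets": 4, "widget repair": 8, "buy widgets": 12}
    after = {"blue widgets": 27, "widget repair": 11, "buy widgets": 14}
    print(page_drops(before, after))
```

A drop flagged this way is only a prompt to investigate. It does not by itself prove that the spam update is the cause.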

- Audit suspect backlinks and disavow if necessary

- Reduce unedited AI-generated content production

- Check Search Console metrics every week

- Track rankings on key queries with a rank tracking tool

- Avoid any new artificial link schemes for at least 3 months

- Document observed changes to identify patterns

Should you completely overhaul your SEO strategy?

No. If your strategy is built on useful content, natural backlinks, and real user value, you don't need to change anything.

However, if you've been navigating murky waters for months (site networks, spun content, link schemes), this update is a signal. Either adjust now, or wait for the next wave and risk losing everything at once.

Source: Google Search Central video, published on 17/11/2025.
