Official statement

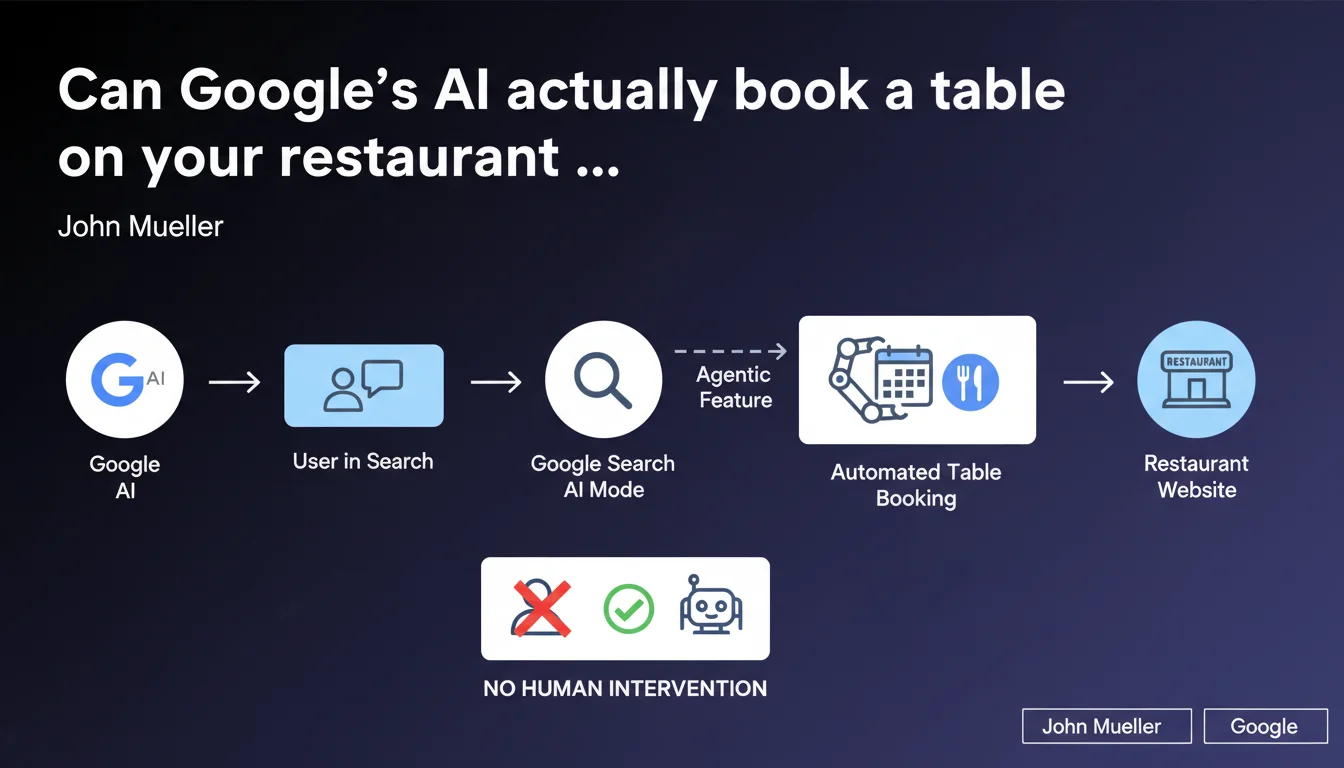

Google is testing an agentic AI Mode capable of completing tasks directly from search — for example, booking a table on a restaurant site without the user having to navigate manually. This shift transforms Search from a discovery interface into an action interface. For transactional sites, it means rethinking technical architecture to be 'actionable' by an AI agent.

What you need to understand

How does this agentic mode concretely change search?

Google is no longer just directing users to pages. With this experimental mode, the AI can interpret complex requests ("find me an Italian restaurant tonight in Paris and book a table for two"), identify the relevant site, navigate through its pages, fill out a reservation form, and complete the action.

The user stays within the Google ecosystem. Your site becomes a service provider working behind the scenes, not a visible destination. This fundamentally changes the classic user journey, and with it the traffic you measure in Analytics.

Why is Google pushing in this direction now?

Two reasons. First, competition from ChatGPT and conversational assistants that promise to solve end-to-end tasks. Second, monetization: a user completing an action within Search stays captive longer, and Google can position itself as a transaction intermediary.

It's also a response to evolving user behavior: people want actionable answers, not lists of blue links. "Zero-click search" becomes "zero-navigation action."

What are the technical implications for affected sites?

For an AI agent to act on your site, you need an accessible, structured architecture. That means clear structured data (schema.org), identifiable forms, and logical user journeys without unnecessary barriers such as overly aggressive captchas or obfuscated JS.

Google also needs to "understand" your business intents: does a reservation require validation? Immediate payment? These nuances must be explicitly marked up.

- AI becomes an autonomous user that navigates without asking your permission

- Your site must be technically "readable" by an agent, not just by classic Googlebot

- The traffic visible in your stats may decrease even if conversion volume remains stable or increases

- Sites unable to support this interaction risk visibility loss if Google favors those who "play along"

SEO Expert opinion

Is this claim consistent with what we're seeing in the field?

Yes and no. Google has been testing these kinds of features for years via Google Assistant and Actions on Google — but with very mixed results. The idea of automating transactional tasks isn't new. What's changing here is the direct integration into main Search, with LLMs capable of navigating much more fluidly.

Let's be honest: we haven't seen a massive rollout yet. The feature is currently limited to experimental trials. But the direction is clear, and underestimating this trend would be a mistake.

What are the risks for sites dependent on organic traffic?

The first risk is complete disintermediation. You lose direct contact with the user. They never see your brand, your design, your unique value proposition. Google becomes the touchpoint; you're just a backend.

Second problem: data capture. If Google orchestrates the transaction, it collects behavioral data you'll never see. You lose analytical capability, remarketing potential, and deep audience understanding.

In which cases won't this agentic functionality work?

Not all sites are equally affected. Simple, standardized tasks (reservations, appointment booking, basic orders) are ideal candidates. But anything requiring rich interaction, personalized advice, or complex configuration will likely remain out of reach for AI agents, at least for the coming years.

Regulated sectors (healthcare, finance, legal) also pose challenges. Google won't risk automatically managing sensitive actions without explicit human validation. And that's where it breaks: if the user still has to manually validate, the agent's value proposition collapses.

Practical impact and recommendations

What should you concretely do to prepare your site?

First, structure your data. If you offer transactional services (reservations, appointments, purchases), make sure your pages use the appropriate schema.org types: Reservation, Event, Product, or Service, as needed.
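As an illustration of the structured data step, here is a minimal Python sketch that builds a JSON-LD description for a restaurant that takes reservations. `Restaurant`, `acceptsReservations`, `ReserveAction`, `EntryPoint` and `FoodEstablishmentReservation` are existing schema.org terms, but the helper names (`build_restaurant_jsonld`, `to_script_tag`) and the example URLs are hypothetical. Whether Google's agentic mode actually reads this markup is an assumption, not something the source confirms.

```python
import json


def build_restaurant_jsonld(name: str, url: str, booking_url: str) -> dict:
    """Return a schema.org Restaurant description exposing a reservation action.

    Hypothetical helper: the choice of properties is an illustrative assumption.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Restaurant",
        "name": name,
        "url": url,
        # acceptsReservations is a schema.org property of FoodEstablishment.
        "acceptsReservations": True,
        "potentialAction": {
            "@type": "ReserveAction",
            "target": {
                "@type": "EntryPoint",
                # Where the reservation journey starts on the site.
                "urlTemplate": booking_url,
            },
            "result": {"@type": "FoodEstablishmentReservation"},
        },
    }


def to_script_tag(data: dict) -> str:
    """Serialize the data as a JSON-LD <script> block ready to embed in a page."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a string value can never close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    markup = build_restaurant_jsonld(
        "Trattoria Example",
        "https://example.com",
        "https://example.com/reservations",
    )
    print(to_script_tag(markup))
```

Generating the markup from the same data that drives the booking page keeps the two from drifting apart.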

Next, simplify your user journeys. A form with 15 mandatory fields and three validation steps is unlikely to be navigable by an AI agent. Think fluidity and minimalism. Fields must have clear labels and correct semantic HTML attributes (autocomplete, required, type).
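The form checks above can be partly automated. The sketch below uses Python's standard `html.parser` module to flag fields that have no associated label or no `autocomplete` attribute. The class and function names (`FormFieldAudit`, `audit_form`) are hypothetical, and the two rules are a simplified assumption about what makes a form easier for an agent to fill in, not a documented Google requirement.

```python
from html.parser import HTMLParser


class FormFieldAudit(HTMLParser):
    """Collect form fields and the ids referenced by <label for="...">."""

    def __init__(self) -> None:
        super().__init__()
        self.fields: list[dict] = []
        self.labelled_ids: set[str] = set()

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "label" and attr.get("for"):
            self.labelled_ids.add(attr["for"])
        elif tag in ("input", "select", "textarea"):
            # Hidden fields and buttons are not filled in by the visitor.
            if attr.get("type") in ("hidden", "submit", "button"):
                return
            self.fields.append(attr)


def audit_form(html: str) -> list[str]:
    """Return human-readable problems found in the form fields of `html`.

    Limitation: a field wrapped inside its <label> (no "for" attribute)
    is reported as unlabelled by this simplified check.
    """
    parser = FormFieldAudit()
    parser.feed(html)
    problems = []
    for field in parser.fields:
        name = field.get("name") or field.get("id") or "<unnamed>"
        has_label = field.get("id") in parser.labelled_ids or "aria-label" in field
        if not has_label:
            problems.append(f"{name}: no associated label")
        if "autocomplete" not in field:
            problems.append(f"{name}: missing autocomplete attribute")
    return problems


if __name__ == "__main__":
    sample = (
        '<form><label for="email">Email</label>'
        '<input id="email" name="email" type="email" autocomplete="email" required>'
        '<input name="guests" type="number"></form>'
    )
    for problem in audit_form(sample):
        print(problem)
```

Running it on the sample form reports the `guests` field twice: once for the missing label and once for the missing `autocomplete` attribute.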

Finally, test technical compatibility. Does your site work without heavy client-side JavaScript? Are critical actions accessible via standard HTTP requests? Don't assume an AI agent will render and drive your pages the way a human user's browser does.
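One rough way to approximate the "works without heavy client-side JavaScript" test is to look at the raw HTML the server returns, before any script runs, and check that the critical form is already there. The sketch below does this with the standard library only. `FormFinder`, `static_forms` and `works_without_js` are hypothetical names, and treating "a form with an explicit action exists in the raw HTML" as the success criterion is a simplifying assumption.

```python
from html.parser import HTMLParser


class FormFinder(HTMLParser):
    """Record the action and method of every <form> found in the markup."""

    def __init__(self) -> None:
        super().__init__()
        self.forms: list[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "form":
            attr = dict(attrs)
            self.forms.append(
                {
                    "action": attr.get("action") or "",
                    # HTML forms default to GET when no method is given.
                    "method": (attr.get("method") or "get").lower(),
                }
            )


def static_forms(raw_html: str) -> list[dict]:
    """Return the forms present in the server-rendered HTML."""
    finder = FormFinder()
    finder.feed(raw_html)
    return finder.forms


def works_without_js(raw_html: str) -> bool:
    """True if at least one form with an explicit action is in the raw HTML."""
    return any(form["action"] for form in static_forms(raw_html))


if __name__ == "__main__":
    server_rendered = '<form action="/reserve" method="POST"><input name="date"></form>'
    client_rendered = '<div id="root"></div><script src="/app.js"></script>'
    print(works_without_js(server_rendered))
    print(works_without_js(client_rendered))
```

In practice the raw HTML would come from a plain HTTP fetch of the reservation page (for example with `urllib.request.urlopen`), which never executes JavaScript.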

What mistakes should you absolutely avoid?

Don't block access to your transactional pages behind systematic captchas or mandatory login walls. If Google can't "see" your reservation form without creating an account, the agent won't be able to act. This doesn't mean opening everything to the public. It means providing progressive access: let visitors reach the form first, and ask for authentication only when the action requires it.

Another trap: ignoring this trend because you think it doesn't affect you. Even if you're not a transactional site today, Google may decide tomorrow that actionable sites deserve preferential treatment in results. Anticipating gives you room to maneuver.

- Implement relevant schema.org structured data for your services

- Simplify forms: fewer fields, clear labels, accessible validation

- Verify critical journeys work without heavy client-side JS

- Test accessibility of transactional pages without aggressive captcha

- Track evolution of Google guidelines on "actionable results"

- Anticipate potential visible traffic drop while monitoring actual conversions
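The last point, watching conversions rather than sessions alone, can be sketched as a small period-over-period comparison. The `Period` dataclass, the `diagnose` function and the 5% tolerance are all hypothetical choices for illustration, and the figures in the usage example are made up.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """Aggregated analytics figures for one reporting period."""
    sessions: int
    conversions: int


def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("cannot compute a change from a zero baseline")
    return (after - before) / before * 100


def diagnose(before: Period, after: Period, tolerance: float = 5.0) -> str:
    """Classify a period-over-period change in sessions and conversions.

    `tolerance` is the percentage drop treated as noise (assumed value).
    """
    sessions_delta = pct_change(before.sessions, after.sessions)
    conversions_delta = pct_change(before.conversions, after.conversions)
    if sessions_delta < -tolerance and conversions_delta >= -tolerance:
        return "traffic down, conversions holding"
    if sessions_delta < -tolerance and conversions_delta < -tolerance:
        return "traffic and conversions both down"
    return "no significant traffic drop"


if __name__ == "__main__":
    last_quarter = Period(sessions=10_000, conversions=200)
    this_quarter = Period(sessions=7_000, conversions=210)
    print(diagnose(last_quarter, this_quarter))
```

With these made-up numbers, sessions fall by 30% while conversions rise by 5%, which is the pattern the article describes: less visible traffic with stable or growing conversions.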

How can you ensure your site stays competitive in this new paradigm?

The key is not to be passive about this evolution but to treat it as an opportunity. If your site becomes "actionable," you can potentially gain conversions even if apparent traffic drops. But this often requires a complex technical and strategic overhaul.

For many sites, this transition requires cross-functional expertise: advanced technical SEO, API-oriented development, structured data strategy, and a deep understanding of automated user journeys. Few internal teams master this full skill set.

Other SEO insights extracted from this same Google Search Central video · published on 17/11/2025
