Official statement

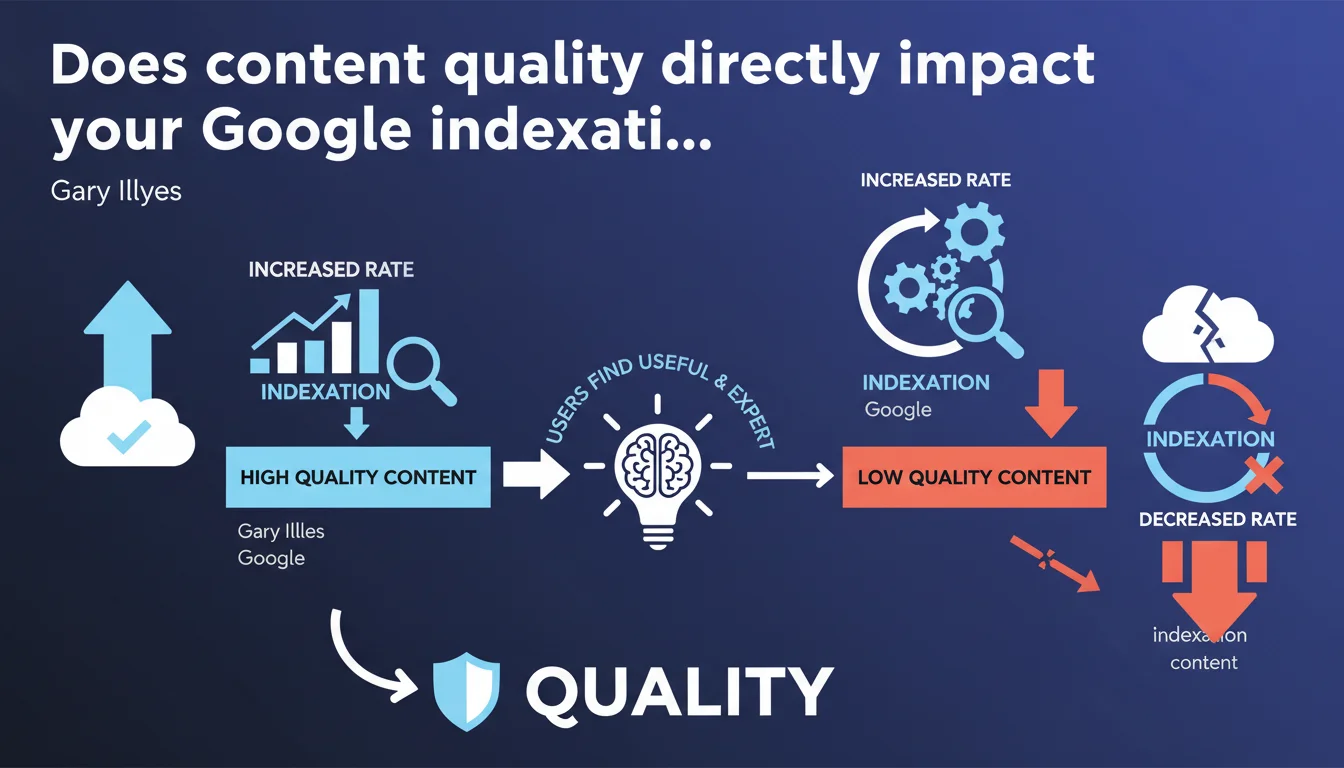

Google states that indexation rate depends directly on the quality and usefulness of content for users. Publishing low-utility or low-expertise content leads to a drop in indexation rates. A volume-without-quality strategy therefore becomes counterproductive for organic visibility.

What you need to understand

What does Google really mean by "indexation rate"?

The indexation rate refers to the proportion of pages on a site that Google actually chooses to add to its index among all those it has crawled. It's not simply a crawl issue — Googlebot can visit a page without ever indexing it.
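As a rough illustration of that definition, the ratio can be computed from two counts. This is a minimal sketch: the function name `indexation_rate` and the example figures are hypothetical, and Google does not publish an official formula.

```python
def indexation_rate(indexed_pages: int, crawled_pages: int) -> float:
    """Return the share of crawled pages that were indexed (0.0 to 1.0)."""
    if crawled_pages <= 0:
        raise ValueError("crawled_pages must be a positive number")
    if not 0 <= indexed_pages <= crawled_pages:
        raise ValueError("indexed_pages must be between 0 and crawled_pages")
    return indexed_pages / crawled_pages


# Hypothetical counts for illustration only.
rate = indexation_rate(indexed_pages=1200, crawled_pages=2000)
print(f"Indexation rate: {rate:.0%}")  # Indexation rate: 60%
```

Tracked over time, a falling ratio with a stable crawl count points to the selectivity problem rather than a crawl problem.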

This distinction is fundamental. Many sites suffer from insufficient crawl budget, but even more suffer from a selectivity problem: Google crawls, but refuses to index. And according to this statement, it's the perceived quality of content that determines this decision.

Why does Google tie indexation to content usefulness?

Google optimizes its resources. Indexing billions of pages costs storage and computing power. If content brings nothing to users, indexing it is wasteful, and Google now actively avoids doing so.

This logic follows from the Helpful Content Updates. The algorithm no longer just ranks: it filters upstream. Sites that multiply weak pages see their indexation rate drop, even if technically everything is crawlable.

What does "content created with expertise" mean in this context?

Expertise here doesn't just come down to E-E-A-T. Google looks for concrete signals: depth of treatment, original data, first-hand experience, thematic consistency.

"Expert" content answers user intent comprehensively and credibly. It's not about length — short but dense pages can outperform generic walls of text.

- Indexation rate becomes a quality indicator, not just a technical one

- Google actively filters pages deemed unhelpful before indexation

- Expertise and added value are now indexation criteria, not just ranking factors

- Volume-without-quality strategies are penalized at the indexation stage

- A site can be technically perfect but under-indexed if content is weak

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. Since late 2022, we've been seeing massive indexation drops on sites that were churning out generic or automated content in bulk. Thousands of pages disappear from the index with no apparent technical error.

In Search Console, the number of pages reported as "Crawled - currently not indexed" is exploding. The pattern is clear: Google crawls, evaluates, then rejects. It's no longer a robots.txt or sitemap issue; it's an automated editorial judgment.

What nuances should we add to this claim?

Let's be honest: "useful" remains vague. Google publishes no quantifiable threshold. The same content can be indexed on an authoritative site and rejected on a new domain — domain authority still matters.

And that's where it gets tricky. This statement suggests that quality alone is enough, but we know that technical structure, trust signals, and domain history weigh heavily in the indexation decision. A new site with excellent content can struggle for months.

Does this approach really benefit the end user?

In theory yes, in practice it's debatable. Google reduces noise, which is positive. But the algorithm sometimes confuses "niche" with "unhelpful". Specialized content with a real but limited audience can be excluded from the index.

In reality? Ultra-technical B2B sites, academic resources, and expert blogs on niche topics lose visibility. Google favors the mainstream, at the risk of narrowing the diversity of information in its index.

Practical impact and recommendations

What should you audit first on your site?

Start with the "Pages" report in Search Console. Filter for "Crawled - currently not indexed" and "Discovered - currently not indexed." These pages are your top priority.

Analyze their profile: are they truly useful? Do they bring something unique? If yes, you need to strengthen their quality signals. If not, it's better to merge or delete them — polluting the index with weak content affects your entire site.
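To see where the problem concentrates, it can help to group the not-indexed URLs by site section. The sketch below assumes you have already exported the report as (URL, status) pairs; the sample rows, the `example.com` URLs, and the helper names `section_of` and `not_indexed_by_section` are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

# Status labels to audit, as named in the Search Console report.
NOT_INDEXED = {
    "Crawled - currently not indexed",
    "Discovered - currently not indexed",
}


def section_of(url: str) -> str:
    """Return the first path segment of a URL, e.g. '/blog'."""
    path = urlparse(url).path.strip("/")
    return "/" + path.split("/")[0] if path else "/"


def not_indexed_by_section(rows):
    """Count not-indexed URLs per (section, status) pair."""
    counts = Counter()
    for url, status in rows:
        if status in NOT_INDEXED:
            counts[(section_of(url), status)] += 1
    return counts


# Hypothetical export rows for illustration only.
rows = [
    ("https://example.com/blog/post-1", "Crawled - currently not indexed"),
    ("https://example.com/blog/post-2", "Crawled - currently not indexed"),
    ("https://example.com/tags/seo", "Discovered - currently not indexed"),
    ("https://example.com/guides/indexing", "Indexed"),
]

for (section, status), count in not_indexed_by_section(rows).most_common():
    print(f"{section:10} {status:40} {count}")
```

A section that dominates this list is usually the best place to start the merge-or-delete review.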

What mistakes must you absolutely avoid?

First mistake: thinking increased volume will compensate for the indexation drop. It's the opposite. Publishing more mediocre content accelerates indexation rate degradation.

Second mistake: neglecting updates to existing content. Google re-evaluates usefulness continuously. Pages indexed for years can shift to "not indexed" if they become obsolete or are outperformed by competitors.

Third mistake: underestimating engagement signals. Google observes user behavior after the click. Content no one reads, even if indexed, eventually disappears.

How do you adapt your content strategy concretely?

Shift from a volume logic to a density logic. Better to have 10 exceptional pages than 100 adequate ones. Concentrate your resources on content that demonstrates true expertise.

Integrate original data, case studies, hands-on experience. These are the usefulness markers Google values. Generic reformulated content has no place anymore — even if well-written.

- Audit "Crawled - currently not indexed" pages in Search Console

- Honestly evaluate the real usefulness of each page: does it bring something unique?

- Merge or delete weak content rather than let it pollute your site

- Reduce publication volume and increase depth of treatment

- Enrich existing content with original data and first-hand experience

- Regularly update old pages to maintain their relevance

- Monitor engagement signals (time spent, scroll, interactions) as usefulness indicators

- Abandon "content farm" or automated production strategies
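The checklist above can be turned into a simple triage pass over a page inventory. This is only a sketch under stated assumptions: the `Page` fields, the `recommend` function, and the 365-day staleness threshold are hypothetical, and the "brings something unique" flag is a human editorial judgment, not something the code can infer.

```python
from dataclasses import dataclass
from datetime import date

STALE_AFTER_DAYS = 365  # assumption: an arbitrary threshold, tune it per site


@dataclass
class Page:
    url: str
    indexed: bool
    brings_something_unique: bool  # filled in by a human reviewer
    last_updated: date


def recommend(page: Page, today: date) -> str:
    """Map a page to one of the actions from the checklist."""
    if not page.brings_something_unique:
        return "merge or delete"
    if not page.indexed:
        return "strengthen quality signals"
    if (today - page.last_updated).days > STALE_AFTER_DAYS:
        return "update"
    return "keep"


# Hypothetical inventory for illustration only.
today = date(2025, 12, 18)
pages = [
    Page("https://example.com/blog/thin-post", False, False, date(2024, 1, 10)),
    Page("https://example.com/guides/case-study", False, True, date(2025, 6, 1)),
    Page("https://example.com/guides/old-guide", True, True, date(2023, 3, 15)),
]

for page in pages:
    print(page.url, "->", recommend(page, today))
```

The order of the checks mirrors the priorities in the text: usefulness first, then indexation status, then freshness.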

Other SEO insights extracted from this same Google Search Central video · published on 18/12/2025
