Official statement

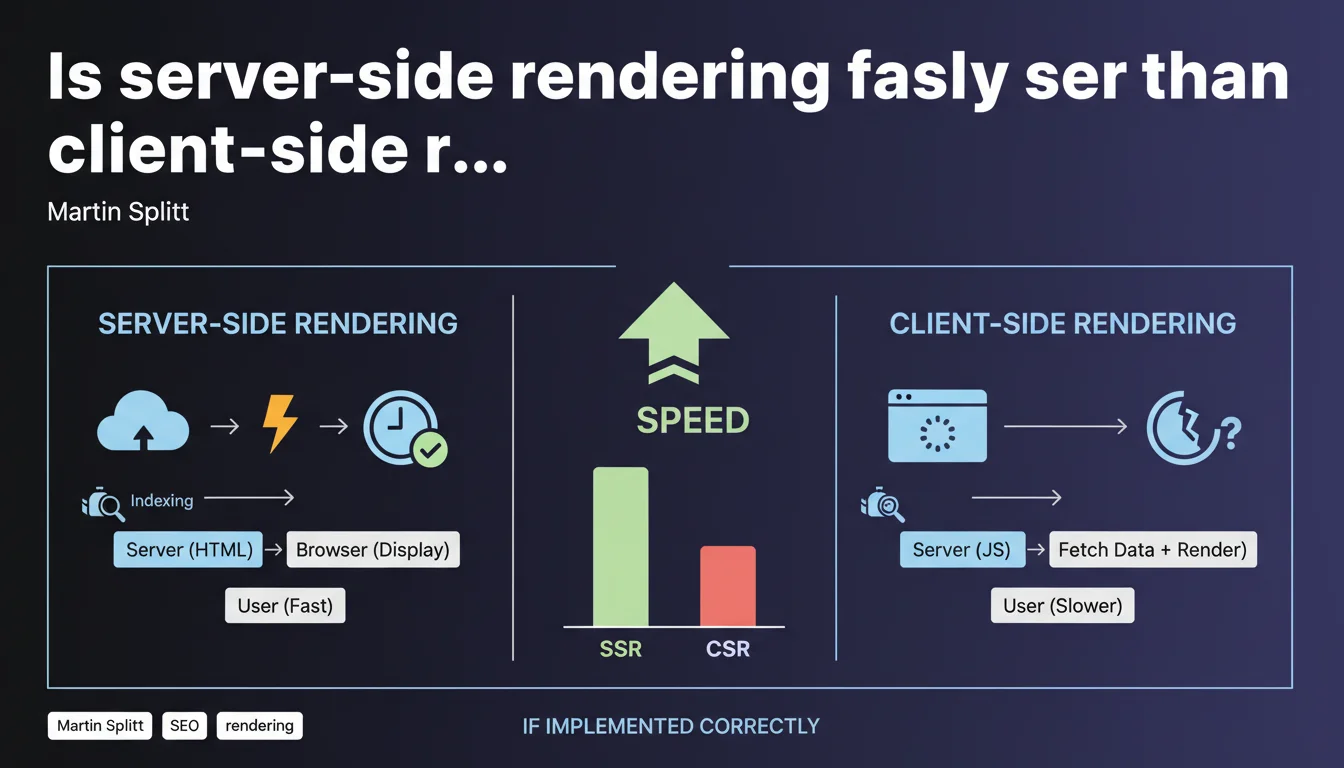

Google confirms that SSR (Server-Side Rendering) generally delivers better user-perceived performance than CSR (Client-Side Rendering), on one crucial condition: the implementation must be correct. The nuance matters, because a poorly configured SSR setup can degrade the experience rather than improve it.

What you need to understand

Martin Splitt, Developer Advocate at Google, reminds us of a point that is often glossed over: server-side rendering is not an automatic guarantee of speed. Many developers adopt SSR expecting it to solve their performance problems, without understanding the implementation pitfalls.

This statement is part of the ongoing debate between SSR, CSR, and hybrid strategies like progressive hydration. Google doesn't pick one side outright; it puts the choice in context.

Why does Google insist on "correct implementation"?

Because poorly configured SSR can produce very slow server response times. If your server takes 2 seconds to generate the HTML before sending it, you lose most of the advantage of server-side rendering.

The classic problem: frameworks like Next.js or Nuxt.js make SSR accessible, but create a false impression of simplicity. Add poor caching, cascading blocking API requests, or an undersized server, and your TTFB (Time to First Byte) balloons.

Is client-side rendering doomed then?

No. Google is not saying that client-side rendering is inherently slow. It's saying that SSR is "generally" faster for the end user because the browser receives already-built HTML.

But if your CSR application uses aggressive prefetching, intelligent code-splitting, and a well-configured CDN, the gap can be minimal. The real issue remains First Contentful Paint (FCP) and Largest Contentful Paint (LCP), two metrics where SSR often has the edge.

What does this change for Googlebot?

Googlebot can now execute JavaScript, but that doesn't mean it waits indefinitely. With SSR, content is immediately crawlable without depending on a JavaScript rendering budget.

CSR requires double work: Googlebot must download a nearly empty HTML shell, load the JS, execute it, and only then index the rendered content. This extra step can weigh on your crawl budget and delay indexing of new pages.
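To make the "empty HTML" point concrete, here is a minimal sketch of a check you could run against the raw response body, before any JavaScript executes. The helper name `hasServerRenderedText` and the sample markup are hypothetical, and the regex-based tag stripping is a rough heuristic, not a real HTML parser.

```typescript
// Hypothetical helper: checks whether the raw HTML (before any JavaScript runs)
// already contains the text you expect crawlers to see.
function hasServerRenderedText(rawHtml: string, expectedText: string): boolean {
  const visibleText = rawHtml
    // Drop script and style blocks: their contents are not page content.
    .replace(/<script\b[^>]*>[\s\S]*?<\/script>/gi, " ")
    .replace(/<style\b[^>]*>[\s\S]*?<\/style>/gi, " ")
    // Drop the remaining tags, keeping only text nodes.
    .replace(/<[^>]+>/g, " ")
    // Collapse whitespace so line breaks in the markup do not matter.
    .replace(/\s+/g, " ")
    .trim()
    .toLowerCase();
  return visibleText.includes(expectedText.trim().toLowerCase());
}

// Illustrative CSR shell: the content only exists inside the JS bundle.
const csrShell =
  '<html><body><div id="root"></div><script src="/app.js"></script></body></html>';

// Illustrative SSR response: the content is already in the markup.
const ssrPage =
  '<html><body><div id="root"><h1>Blue running shoes</h1></div>' +
  '<script src="/app.js"></script></body></html>';

console.log(hasServerRenderedText(csrShell, "Blue running shoes")); // false
console.log(hasServerRenderedText(ssrPage, "Blue running shoes")); // true
```

In practice you would feed this the body of a plain HTTP request to your page, the same thing a crawler receives on the first fetch.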

- Well-implemented SSR = complete HTML from the first request, reduced processing time for Googlebot

- CSR = dependency on JavaScript rendering budget, risk of invisible content if JS fails

- Hybrid approach (initial SSR + hydration) = optimal compromise for most modern projects

- TTFB is critical: SSR with TTFB > 1s cancels out its own benefits

- Core Web Vitals: LCP naturally favors SSR if the server responds quickly

SEO Expert opinion

Is this statement really surprising?

Frankly, no. Google has been repeating for years that user-perceived speed is paramount. What's interesting is the caution in the wording: "generally" and "correctly implemented".

Splitt deliberately avoids demonizing CSR or glorifying SSR. Why? Because Google knows that giants like Gmail, Google Maps, or Twitter (before its redesign) run on intensive CSR with excellent performance.

Where does this rule not really apply?

For complex web applications (dashboards, SaaS, business tools), SSR can be counterproductive. If your interface depends on real-time interactions and frequent updates, CSR with optimized state management will often beat SSR.

SSR shines especially for content sites (e-commerce, media, blogs, marketing websites), where the goal is to quickly deliver static or semi-static content.

What nuances are missing from this statement?

Google doesn't talk about streaming SSR (often paired with React Server Components, for example) or Islands Architecture (Astro, Fresh). These hybrid approaches render only the critical parts on the server while keeping CSR for interactivity.

[To verify]: Google doesn't specify how Googlebot handles SSR errors. If your server returns a 500 during rendering, what happens? Fallback to a cached version? Delayed recrawl? This area remains unclear in the official documentation.

Practical impact and recommendations

What should you do concretely if you're on pure CSR?

Don't panic. If your Core Web Vitals are in the green and Google is correctly indexing your content, migrating to SSR out of SEO dogmatism is probably unnecessary.

However, if your LCP exceeds 2.5s or if certain pages struggle to get indexed despite clean sitemaps, SSR becomes a serious option. But be careful not to confuse SSR with static pre-rendering (SSG): SSG builds the HTML once at build time, whereas SSR generates it on each request.

How to verify that your SSR is "correctly implemented"?

Three key indicators: TTFB, FCP, and LCP. If your TTFB exceeds 600-800ms, your SSR is probably misconfigured: the server is taking too long to generate the HTML.
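As a rough illustration, the three indicators can be turned into a small triage function. The TTFB band (600-800 ms) and the LCP limit (2.5 s) come from this article; the 1.8 s FCP limit is an assumption based on the commonly cited "good" threshold, since the article gives no FCP figure. All names (`PageTimings`, `assessSsrTimings`) are hypothetical.

```typescript
interface PageTimings {
  ttfbMs: number;
  fcpMs: number;
  lcpMs: number;
}

interface Finding {
  severity: "warning" | "problem";
  message: string;
}

type Verdict = "ok" | "warning" | "problem";

// Thresholds from the article.
const TTFB_WARNING_MS = 600;
const TTFB_PROBLEM_MS = 800;
const LCP_LIMIT_MS = 2500;
// Assumption: not stated in the article.
const FCP_LIMIT_MS = 1800;

function assessSsrTimings(t: PageTimings): { verdict: Verdict; findings: Finding[] } {
  const findings: Finding[] = [];

  if (t.ttfbMs > TTFB_PROBLEM_MS) {
    findings.push({ severity: "problem", message: `TTFB ${t.ttfbMs} ms: server-side generation is too slow` });
  } else if (t.ttfbMs > TTFB_WARNING_MS) {
    findings.push({ severity: "warning", message: `TTFB ${t.ttfbMs} ms: inside the 600-800 ms warning band` });
  }
  if (t.fcpMs > FCP_LIMIT_MS) {
    findings.push({ severity: "warning", message: `FCP ${t.fcpMs} ms: first paint is late` });
  }
  if (t.lcpMs > LCP_LIMIT_MS) {
    findings.push({ severity: "problem", message: `LCP ${t.lcpMs} ms: above the 2.5 s limit` });
  }

  // The overall verdict is the worst severity found.
  const verdict: Verdict = findings.some((f) => f.severity === "problem")
    ? "problem"
    : findings.length > 0
      ? "warning"
      : "ok";
  return { verdict, findings };
}

console.log(assessSsrTimings({ ttfbMs: 700, fcpMs: 1200, lcpMs: 2000 }).verdict); // "warning"
```

Feed it field data measured in real-world conditions rather than local numbers, which tend to flatter the server.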

Use a tool like WebPageTest and its waterfall view to identify bottlenecks. Often, the problem comes from sequential API requests at render time: each call blocks HTML generation until it returns.
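The waterfall problem described above can be sketched as follows. `fakeApiCall` is a hypothetical stand-in for real API requests, and the 50 ms delays are illustrative, not measurements. The point is only that independent calls awaited one by one cost roughly the sum of their latencies, while `Promise.all` costs roughly the slowest one.

```typescript
// Hypothetical stand-in for an API request: resolves with `value` after `delayMs`.
function fakeApiCall<T>(value: T, delayMs: number): Promise<T> {
  return new Promise((resolve) => setTimeout(() => resolve(value), delayMs));
}

interface ProductPageData {
  product: string;
  reviews: string[];
  stock: number;
}

// Waterfall: each call waits for the previous one (about 150 ms in total here).
async function loadSequentially(): Promise<ProductPageData> {
  const product = await fakeApiCall("Blue running shoes", 50);
  const reviews = await fakeApiCall(["Great fit"], 50);
  const stock = await fakeApiCall(12, 50);
  return { product, reviews, stock };
}

// Parallel: the three independent calls start together (about 50 ms in total here).
async function loadInParallel(): Promise<ProductPageData> {
  const [product, reviews, stock] = await Promise.all([
    fakeApiCall("Blue running shoes", 50),
    fakeApiCall(["Great fit"], 50),
    fakeApiCall(12, 50),
  ]);
  return { product, reviews, stock };
}

// Small timing helper to compare the two strategies.
async function timeIt<T>(label: string, load: () => Promise<T>): Promise<number> {
  const start = Date.now();
  await load();
  const elapsedMs = Date.now() - start;
  console.log(`${label}: ${elapsedMs} ms`);
  return elapsedMs;
}
```

Calling `timeIt("sequential", loadSequentially)` and then `timeIt("parallel", loadInParallel)` should show the gap. Parallelizing only works for calls that do not depend on each other's results; a request that needs the product ID from a previous response still has to wait for it.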

- Measure TTFB in real-world conditions (not just locally) — target < 600ms

- Enable a server cache for semi-static pages (Redis, Varnish, CDN edge caching)

- Use server-side data prefetching to avoid API waterfall issues

- Test rendering with the URL Inspection tool in Search Console (Google has since retired the standalone Mobile-Friendly Test) and verify that the source HTML contains the content

- Compare Core Web Vitals before/after SSR migration on a sample of pages

- Monitor 500 errors in production — SSR that crashes often returns empty HTML

- Assess infrastructure cost: SSR = more CPU/RAM load than a simple static file server
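For the server cache item in the checklist above, here is a minimal sketch of the idea: keep the rendered HTML for a short time so that repeated requests skip the expensive render. This in-memory `HtmlCache` class is hypothetical and only illustrates the principle; it is not a substitute for Redis, Varnish, or CDN edge caching, and it assumes a synchronous render function for brevity.

```typescript
interface CacheEntry {
  html: string;
  expiresAt: number;
}

// Hypothetical in-memory cache for rendered HTML, keyed by URL path.
class HtmlCache {
  private readonly entries = new Map<string, CacheEntry>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  // `now` is injectable so the expiry logic can be exercised without waiting.
  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Returns the cached HTML while it is fresh; otherwise renders and stores it.
  getOrRender(path: string, render: () => string): string {
    const cached = this.entries.get(path);
    if (cached !== undefined && cached.expiresAt > this.now()) {
      return cached.html;
    }
    const html = render();
    this.entries.set(path, { html, expiresAt: this.now() + this.ttlMs });
    return html;
  }
}

// Usage: cache a semi-static page for 60 seconds.
const pageCache = new HtmlCache(60_000);
const firstHit = pageCache.getOrRender("/blog/ssr-vs-csr", () => "<h1>SSR vs CSR</h1>");
const secondHit = pageCache.getOrRender("/blog/ssr-vs-csr", () => "<h1>never rendered</h1>");
console.log(firstHit === secondHit); // true
```

The TTL is the main design decision: too short and the server still renders most requests, too long and visitors (and Googlebot) see stale content.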

What mistakes should you absolutely avoid?

The first is undersizing your server. By this article's estimate, Node.js-based SSR can consume 10 to 50 times more resources than a static site. If your traffic spikes, plan for horizontal scaling or serverless (Vercel, Netlify Functions).

Also avoid the "SSR everywhere" trap. Some interactive pages (shopping cart, user account, dashboards) have no SEO value and can remain pure CSR. The hybrid approach is often the most pragmatic.
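One way to picture the hybrid approach is a simple routing rule that decides the rendering mode per path. The prefixes and the function name `renderingModeFor` below are hypothetical; real frameworks expose this choice through their own per-route configuration.

```typescript
type RenderingMode = "ssr" | "csr";

// Hypothetical list of interactive areas with no SEO value.
const CSR_ONLY_PREFIXES = ["/cart", "/account", "/dashboard"];

function renderingModeFor(path: string): RenderingMode {
  // Match the prefix itself or anything nested under it, but not lookalikes
  // such as "/cartoons".
  const isPrivateUi = CSR_ONLY_PREFIXES.some(
    (prefix) => path === prefix || path.startsWith(`${prefix}/`),
  );
  return isPrivateUi ? "csr" : "ssr";
}

console.log(renderingModeFor("/products/blue-running-shoes")); // "ssr"
console.log(renderingModeFor("/account/orders")); // "csr"
```

Defaulting to SSR and listing the exceptions keeps new content pages crawlable without extra configuration.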

❓ Frequently Asked Questions

Does SSR automatically improve search rankings?

Can you mix SSR and CSR on the same site?

What is the difference between SSR and SSG?

Does Google penalize pure CSR sites?

Can a bad SSR setup be worse than a good CSR one?

Source: Google Search Central video, published on 05/10/2022.