Official statement


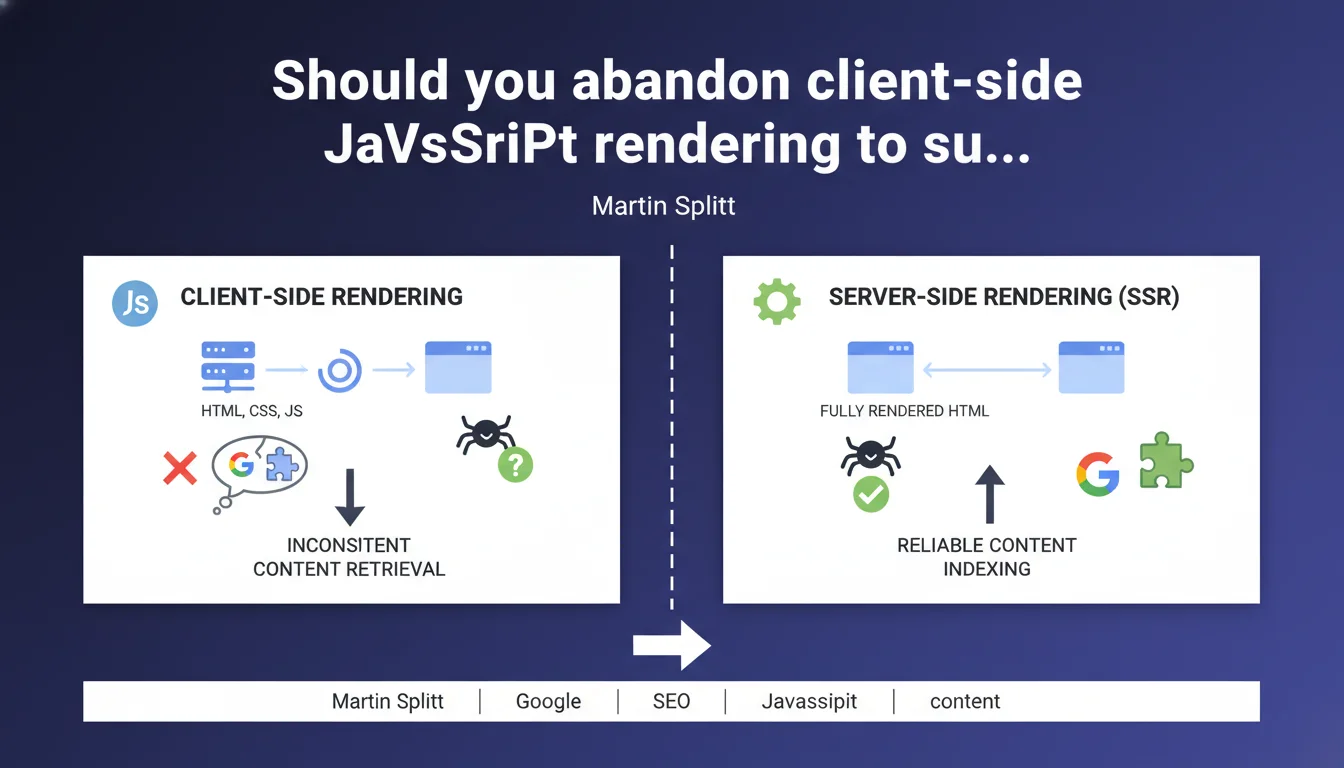

Google states that server-side rendering (SSR) remains the best SEO practice because the search engine cannot always properly retrieve content generated by client-side JavaScript. In other words, despite Googlebot's improvements with JS, indexation is still more reliable when content is rendered on the server. In concrete terms: if your critical content depends on client-side JavaScript, you're taking a measurable risk.

What you need to understand

Why does Google prefer server-side rendering over client-side rendering?

The distinction is straightforward: server-side rendering (SSR) sends complete HTML directly to the browser, while client-side rendering (CSR) delivers an empty shell and lets JavaScript build the content in the browser.

For Googlebot, SSR means everything is immediately readable on first access. With CSR, Google must first download the HTML, identify scripts, execute them, wait for API calls, then retrieve the final content. It is a multi-step process in which any link in the chain can break.
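To make the difference tangible, here is a minimal Python sketch of what a client that does not execute JavaScript can read from each kind of response. The two HTML payloads and the `visible_text` helper are invented for illustration; this is not a description of how Googlebot works internally.

```python
from html.parser import HTMLParser

# Tags whose text is not part of the page body a reader would see.
SKIPPED_TAGS = ("script", "style", "title")


class TextExtractor(HTMLParser):
    """Collects body text, ignoring <script>, <style> and <title> contents."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the text available without running any JavaScript."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical SSR response: the content is already in the HTML.
SSR_HTML = """<html><head><title>Blue running shoes</title></head>
<body><h1>Blue running shoes</h1><p>Lightweight shoes for road running.</p></body></html>"""

# Hypothetical CSR response: an empty shell that a script fills in later.
CSR_HTML = """<html><head><title>Shop</title></head>
<body><div id="root"></div><script src="/bundle.js"></script></body></html>"""

print(repr(visible_text(SSR_HTML)))
print(repr(visible_text(CSR_HTML)))
```

The SSR payload yields its heading and paragraph straight away, while the CSR shell yields an empty string until a rendering step has run the script.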

What does it practically mean that Google "cannot always correctly retrieve" JS-generated content?

Google uses careful wording that masks a rougher on-the-ground reality. In some cases, Googlebot simply fails to execute JavaScript: timeouts, code errors, resources blocked by robots.txt, exceeded rendering budgets.
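One of these failure modes, resources blocked by robots.txt, is easy to check yourself. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and the resource URLs are made up for the example, and the standard-library parser does not replicate every detail of Google's own matching rules (wildcards in particular), so treat the result as a first-pass signal.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt: str, resource_urls: list[str],
                      user_agent: str = "Googlebot") -> list[str]:
    """Return the resource URLs that robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls
            if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that blocks the JavaScript bundle directory.
ROBOTS_TXT = """User-agent: *
Disallow: /static/js/
"""

# Hypothetical resources that the page needs in order to render.
RESOURCES = [
    "https://example.com/static/js/app.bundle.js",
    "https://example.com/static/css/site.css",
]

print(blocked_resources(ROBOTS_TXT, RESOURCES))
```

If the bundle that builds your content shows up in that list, the rendering step cannot fetch it, however capable the rendering engine is.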

In other cases, execution works but arrives too late in the crawl process. Content rendered by JS then goes into a deferred rendering queue, which can delay indexation by days or even weeks for some sites.

What are the concrete risks of a full client-side JavaScript site?

- Partial or missing indexation of pages whose content depends entirely on JS

- Significantly longer indexation delays compared to static HTML

- Content loss if JavaScript generates critical elements (titles, descriptions, internal links)

- Increased crawl budget consumption because Google must revisit the same URL multiple times

- Inconsistencies between what Googlebot sees and what the end user sees

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. For years, audits have revealed that sites built with React, Vue or Angular without SSR encounter recurring indexation problems. Even as Google improves its JavaScript rendering engine, the pattern holds: an SSR site tends to be indexed faster and more completely.

The most frequent problematic cases? E-commerce sites with pure JS filters and pagination, Single Page Applications (SPAs) that load content via asynchronous API calls, and sites whose meta tags and structured data are injected by JavaScript. On sites in that last group, we regularly observe missing or incorrect rich snippets.

In which cases does client-side rendering remain acceptable?

Let's be honest: not everything is black and white. If your critical content (titles, descriptions, main body text, internal linking) is present in the initial HTML, you can afford to enrich the user experience with client-side JS for secondary elements.

Areas where CSR poses fewer problems: interactive user interfaces (accordions, tabs), post-load functionality (comments, recommended content), purely UX elements with no SEO value (animations, transitions). But once an element impacts SEO, caution dictates rendering it server-side.

What if migrating to SSR isn't feasible in the short term?

Pre-rendering or dynamic rendering can serve as transitional solutions. Pre-rendering generates static HTML versions of your pages during build, while dynamic rendering detects bots and serves them complete HTML while keeping CSR for users.
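As a rough illustration of the dynamic rendering idea, the Python sketch below picks a response based on the User-Agent header. The marker list and function names are hypothetical, and a production setup would typically also verify that the request really comes from the crawler, since a User-Agent string is trivial to spoof.

```python
# Illustrative, non-exhaustive substrings found in crawler User-Agent headers.
BOT_MARKERS = ("googlebot", "bingbot")


def is_known_bot(user_agent: str) -> bool:
    """Naive check: does the User-Agent contain a known crawler marker?"""
    ua = user_agent.lower()
    return any(marker in ua for marker in BOT_MARKERS)


def choose_response(user_agent: str, prerendered_html: str, csr_shell: str) -> str:
    """Serve complete HTML to crawlers and the CSR shell to everyone else."""
    return prerendered_html if is_known_bot(user_agent) else csr_shell


PRERENDERED = "<html><body><h1>Blue running shoes</h1></body></html>"
SHELL = '<html><body><div id="root"></div><script src="/bundle.js"></script></body></html>'

googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
browser_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"

print(choose_response(googlebot_ua, PRERENDERED, SHELL) == PRERENDERED)
print(choose_response(browser_ua, PRERENDERED, SHELL) == SHELL)
```

Both versions must carry the same content; the branch only changes who does the rendering work.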

[To verify]: Google says it does not treat dynamic rendering as cloaking as long as bots and users receive essentially the same content, but this tolerance remains loosely defined and may evolve. The risk exists, even if it's low for purely technical use without ranking manipulation.

Practical impact and recommendations

What should you concretely do on an existing CSR site?

First step: audit what Googlebot actually sees. Use the URL inspection tool in Google Search Console, compare raw HTML (Ctrl+U in Chrome) with the rendered DOM, and verify that all your critical elements appear in the "crawled" version.

If you spot significant gaps — missing content, absent links, empty meta tags — you have a JS rendering problem that directly impacts your indexation. This is where it gets tricky: your SEO rests on a fragile process that Google doesn't guarantee.
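The raw-versus-rendered comparison can be partly automated. The Python sketch below assumes you have saved both versions as strings (for example the view-source output and the rendered HTML copied from the inspection tool) and lists the links that exist only after JavaScript has run. The sample markup and helper names are invented for the example.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.add(href)


def links_in(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.hrefs


def js_only_links(raw_html: str, rendered_html: str) -> list[str]:
    """Links present in the rendered DOM but absent from the raw HTML."""
    return sorted(links_in(rendered_html) - links_in(raw_html))


# Hypothetical saved copies of the same page.
RAW = '<a href="/">Home</a><div id="root"></div>'
RENDERED = ('<a href="/">Home</a><div id="root">'
            '<a href="/category/shoes">Shoes</a>'
            '<a href="/category/bags">Bags</a></div>')

print(js_only_links(RAW, RENDERED))
```

Every URL in that list is an internal link whose discovery depends on the rendering step succeeding.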

Which architectures should you prioritize for new projects?

For a brochure website or blog, favor static site generators (Gatsby, Next.js in static export mode, Hugo, Eleventy) that produce HTML at build time: strong performance, content that crawlers can read without executing JavaScript, and simple, inexpensive hosting.

For a complex web application or e-commerce site, opt for SSR with hydration: Next.js, Nuxt.js, SvelteKit. You keep JavaScript interactivity on the client side while serving complete initial HTML. The best of both worlds, even if the infrastructure is heavier.

How do you verify your SSR implementation works correctly?

- Disable JavaScript in your browser and verify that main content remains visible

- Test your URLs with the URL Inspection tool in Google Search Console

- Compare source code (view-source:) with what the browser displays

- Verify that your meta tags, titles and structured data appear in initial HTML

- Check server response times — SSR can slow TTFB if poorly optimized

- Monitor your indexation progress in Search Console after migration
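Part of this checklist can be scripted. The following Python sketch parses the initial HTML of a page (without executing JavaScript) and reports which critical elements are missing. The class name, the set of checks and the sample pages are assumptions made for illustration; adapt them to what matters on your own templates.

```python
from html.parser import HTMLParser


class SeoElementAudit(HTMLParser):
    """Records whether key SEO elements appear in the initial HTML."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""
        self.meta_description = ""
        self.has_json_ld = False
        self.h1_count = 0

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = (attrs.get("content") or "").strip()
        elif tag == "script" and (attrs.get("type") or "").lower() == "application/ld+json":
            self.has_json_ld = True
        elif tag == "h1":
            self.h1_count += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data.strip()


def missing_elements(html: str) -> list[str]:
    """Return the names of critical elements absent from the initial HTML."""
    audit = SeoElementAudit()
    audit.feed(html)
    audit.close()
    missing = []
    if not audit.title:
        missing.append("title")
    if not audit.meta_description:
        missing.append("meta description")
    if not audit.has_json_ld:
        missing.append("structured data (JSON-LD)")
    if audit.h1_count == 0:
        missing.append("h1")
    return missing


# Hypothetical initial HTML for an SSR page and for a CSR shell.
SSR_PAGE = ('<html><head><title>Blue running shoes</title>'
            '<meta name="description" content="Lightweight road shoes.">'
            '<script type="application/ld+json">{"@type": "Product"}</script>'
            '</head><body><h1>Blue running shoes</h1></body></html>')
CSR_SHELL = ('<html><head><title>Shop</title></head>'
             '<body><div id="app"></div><script src="/app.js"></script></body></html>')

print(missing_elements(SSR_PAGE))
print(missing_elements(CSR_SHELL))
```

An empty list for the SSR page and a list of gaps for the CSR shell is the pattern to look for; run the same check on the HTML your server actually returns after a migration.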

❓ Frequently Asked Questions

Should client-side rendering be ruled out entirely for SEO?

Does Googlebot really execute JavaScript in 2025?

What is the difference between SSR, SSG and pre-rendering?

Is dynamic rendering (cloaking for bots) allowed by Google?

Can I use a modern framework like React and still do SEO?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 05/10/2022
