Official statement

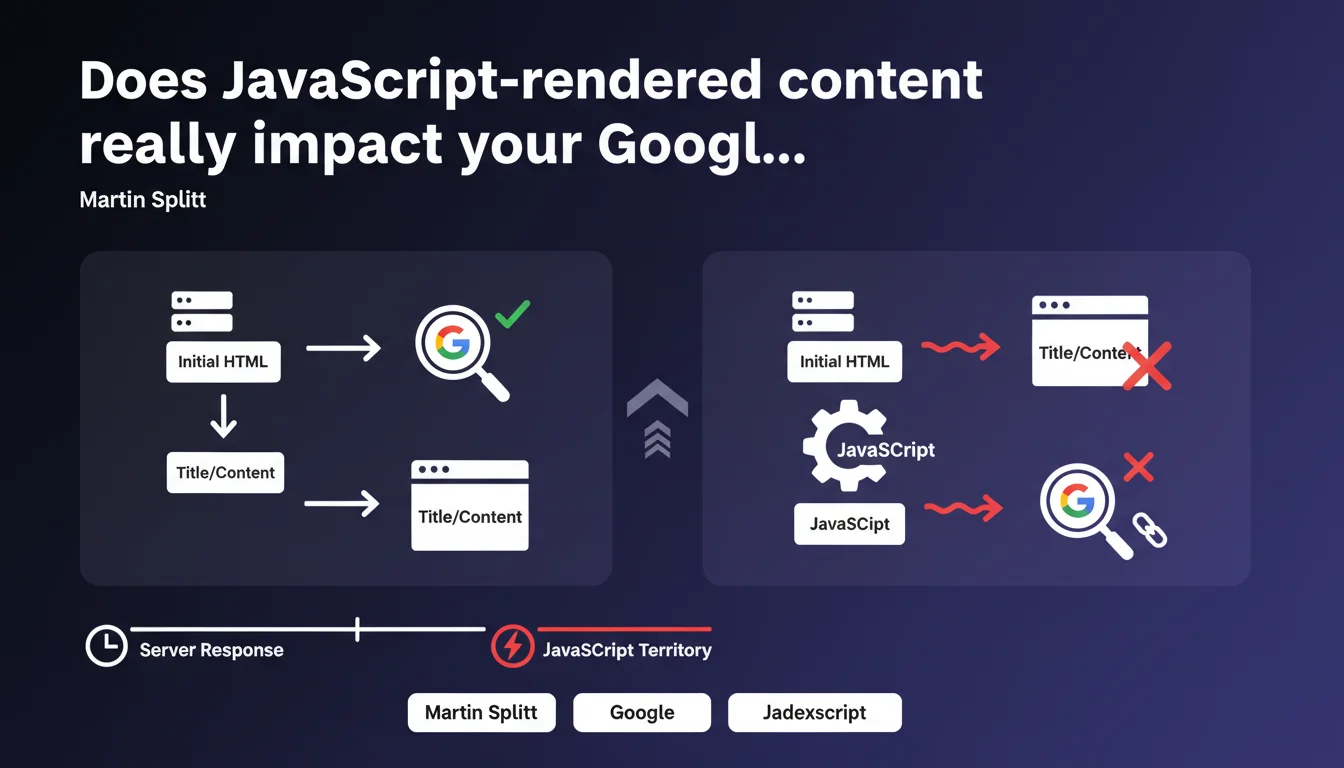

Google treats everything that arrives after the server sends the initial HTML as JavaScript territory. If your title or content changes after this critical moment, you risk indexation issues. Martin Splitt draws a clear line between what's immediately crawlable and what requires additional JS processing.

What you need to understand

Where exactly does the boundary between static HTML and JavaScript lie?

Splitt's statement establishes a precise temporal demarcation: the moment when the server sends its initial HTML response. Everything that exists in this response is considered static content, directly accessible to the crawler.

Everything that gets added, modified, or loaded afterward — via fetch(), DOM manipulation, React hydration, etc. — belongs to JavaScript territory. This distinction isn't trivial for Google's indexation process, which treats these two types of content in fundamentally different ways.

Why is the timing of content modifications so critical?

The problem emerges when elements critical for SEO — title, meta description, main content — aren't present in the initial HTML but are added by JavaScript. Google must then make two passes: a first read of the raw HTML, then a JavaScript render.

Between these two passes, there can be a significant time lag. Rendering draws on crawl budget differently than a plain HTML fetch, and nothing guarantees that the JavaScript render will run on every page or that its output will be indexed with the same priority. This is where indexation problems begin.

What types of modifications pose the greatest risks?

Martin Splitt specifically mentions title and content. These aren't random examples: they're the strongest signals for indexation. A title modified by JavaScript can create an inconsistency between what Google sees on the initial crawl and what appears after rendering.

- Initial HTML: directly crawlable content, immediate and reliable indexation

- JavaScript modifications: require additional rendering, potentially delayed or incomplete

- Critical elements involved: title tags, H1, main text content, structured data

- Primary risk: desynchronization between what Google crawls and what it ultimately indexes

- Practical consequence: partial, inconsistent, or absent indexation of content that's otherwise visible to users

SEO Expert opinion

Does this position really reflect how Googlebot actually works?

Yes, but with important nuances. In practice, we observe that Google does execute JavaScript on the majority of pages. However, this execution is neither instantaneous nor guaranteed. It depends on crawl budget, site complexity, and factors Google doesn't publicly document.

Splitt's statement confirms what we suspected: there's a clear hierarchy in how content is treated. Initial HTML takes priority. JavaScript comes after, sometimes well after. This timing may seem like a technical detail, but it has direct consequences on ranking.

Do modern frameworks make this rule obsolete?

No, and that's precisely the trap. Next.js, Nuxt, and other frameworks offer Server-Side Rendering (SSR) specifically to work around this limitation. If you're using pure Client-Side Rendering (CSR), you fall exactly into the scenario Splitt describes.

Even with SSR, watch out for hydration that modifies the initial content. A component that displays a placeholder and then loads the real content via JavaScript remains problematic. One caveat: Google doesn't clearly document how it handles differences between the SSR HTML and the post-hydration state, so this remains a gray area.

Can you really avoid JavaScript entirely for critical content?

Let's be honest: not always. Modern websites have functional needs that require JavaScript. The issue isn't eliminating it, but ensuring that SEO-critical content exists in the initial HTML.

User personalization, A/B testing, location-based dynamic content — all scenarios where JavaScript is unavoidable. In these cases, you must accept a compromise: either serve generic content in the initial HTML and personalize afterward, or handle personalization server-side before HTML is sent.
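As a rough sketch of the second option (resolving personalization on the server before the HTML is sent), the function below assembles the whole page server-side. The name `render_product_page`, the "Example Shop" title suffix, and the delivery banner are hypothetical and chosen only for illustration; a real site would normally do this inside its framework's SSR layer.

```python
from html import escape
from typing import Optional


def render_product_page(name: str, description: str, city: Optional[str] = None) -> str:
    """Build the complete initial HTML on the server (illustrative only).

    Title, meta description, H1, and body copy are the same for every
    visitor. Only the optional delivery banner is personalized, and it is
    resolved here, before the response is sent, not patched in by JavaScript.
    """
    banner = ""
    if city:
        banner = '<p class="delivery">Delivery available in ' + escape(city) + "</p>"
    return (
        "<!doctype html>\n"
        "<html><head>"
        f"<title>{escape(name)} | Example Shop</title>"
        f'<meta name="description" content="{escape(description)}">'
        "</head><body>"
        f"<h1>{escape(name)}</h1>"
        f"<p>{escape(description)}</p>"
        f"{banner}"
        "</body></html>"
    )


# Generic visitor and located visitor receive the same title and H1.
generic_page = render_product_page("Blue Widget", "A sturdy widget.")
located_page = render_product_page("Blue Widget", "A sturdy widget.", city="Lyon")
```

Because the SEO-critical elements never depend on the visitor, a crawler reading only the raw response sees the same title and H1 as any user.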

Practical impact and recommendations

How do you check if your site falls into this trap?

First step: disable JavaScript in your browser and visit your key pages. Anything that disappears or changes isn't in the initial HTML. It's a brutal test, but devastatingly effective.

Second approach: use the URL Inspection tool in Google Search Console or the Rich Results Test. Compare the rendered HTML and screenshot with your View Source. Differences show you exactly where JavaScript modifications occur. If your H1 or title doesn't appear in View Source, you have a problem.
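The comparison between View Source and the rendered page can also be scripted. The sketch below assumes you already have both versions as strings (for example, View Source for the first and rendered HTML copied from a testing tool or a headless browser for the second); the helper names are hypothetical. It lists text that exists only after rendering, using Python's standard `html.parser`.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes, skipping the bodies of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # normalize whitespace
        if text and not self._skip_depth:
            self.chunks.append(text)


def text_chunks(html):
    """Return the text nodes of an HTML string, in document order."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return parser.chunks


def added_by_javascript(initial_html, rendered_html):
    """Text present in the rendered DOM but missing from the initial HTML."""
    initial = set(text_chunks(initial_html))
    return [chunk for chunk in text_chunks(rendered_html) if chunk not in initial]
```

If the returned list contains your title or H1 text, those elements depend on JavaScript. This is an exact-match comparison on text nodes, so it will also flag text that merely changed whitespace-independent wording between the two versions.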

What technical changes should you prioritize?

Focus on elements critical for ranking: title, meta description, H1, main text content, structured data. These elements absolutely must be present in the initial HTML sent by the server.
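To make that check concrete, here is a minimal sketch that pulls these elements out of a raw HTML string without executing any JavaScript. It relies only on Python's standard `html.parser`; the class and function names are hypothetical, and counting JSON-LD `<script>` blocks is a simplifying assumption about how the structured data is embedded.

```python
from html.parser import HTMLParser


class SeoSignalParser(HTMLParser):
    """Collects title, meta description, H1 text, and JSON-LD block count."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.signals = {"title": "", "meta_description": "", "h1": [], "json_ld_blocks": 0}
        self._current = None  # tag whose text is currently being captured
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1"):
            self._current = tag
            self._buffer = []
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.signals["meta_description"] = (attrs.get("content") or "").strip()
        elif tag == "script" and (attrs.get("type") or "").lower() == "application/ld+json":
            self.signals["json_ld_blocks"] += 1

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._current and tag == self._current:
            text = " ".join("".join(self._buffer).split())
            if tag == "title":
                self.signals["title"] = text
            else:
                self.signals["h1"].append(text)
            self._current = None


def extract_seo_signals(html):
    """Return the SEO-critical elements found in the given HTML string."""
    parser = SeoSignalParser()
    parser.feed(html)
    parser.close()
    return parser.signals
```

Run on the initial server response, an empty `h1` list or a placeholder title such as "Loading..." indicates that the real values only arrive through JavaScript.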

If you're using a JavaScript framework, migrate to SSR or Static Site Generation (SSG) for your strategic pages. Next.js, Nuxt, SvelteKit: they all offer these options. The technical overhead is more than offset by more reliable indexation.

For secondary elements — interactive features, below-the-fold content, purely UX elements — JavaScript remains acceptable. But draw a clear line: SEO critical = initial HTML. Everything else = JavaScript allowed.

Are there cases where this rule can be relaxed?

Yes, in some very specific configurations. If you have an established site, excellent crawl budget, and Google consistently renders your JavaScript (verifiable in Search Console), the risk decreases. But even then, there's no absolute guarantee.

News sites, high-frequency e-commerce, or platforms with daily crawls have more flexibility. Google invests more resources in their rendering. But this tolerance is never officially documented, so you're flying blind.

- Systematically audit the View Source of your strategic pages

- Verify that title, H1, and main content are present before any JavaScript

- Regularly compare initial HTML with final render in Search Console

- Migrate to SSR/SSG for SEO-critical pages if using a JS framework

- Test your pages with JavaScript disabled to identify problematic content

- Monitor coverage reports in Search Console to detect indexation issues

- Clearly document which elements of your site depend on JavaScript and which are static
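Part of this checklist can be automated. The sketch below fetches each page's raw server response (the equivalent of View Source, with no JavaScript executed) and reports which expected markers are missing. The function names, the user-agent string, and the substring-based marker check are illustrative assumptions; the check only confirms that a tag is present, not that it has meaningful content.

```python
import urllib.request

# Markers expected in the raw response (an illustrative choice, not a standard).
REQUIRED_MARKERS = ("<title", "<h1")


def fetch_initial_html(url, timeout=10.0):
    """Return the server's HTML response as text; no JavaScript is executed."""
    request = urllib.request.Request(url, headers={"User-Agent": "initial-html-audit/0.1"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def audit_urls(urls, markers=REQUIRED_MARKERS):
    """Map each URL to the list of markers absent from its initial HTML."""
    report = {}
    for url in urls:
        html = fetch_initial_html(url).lower()
        report[url] = [marker for marker in markers if marker not in html]
    return report
```

Calling `audit_urls` on a list of strategic page URLs returns an empty list for each page that passes; any non-empty list names the elements to investigate.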

❓ Frequently Asked Questions

Is JavaScript rendering by Google guaranteed on all my pages?

If I use Next.js with SSR, am I completely protected?

How can I tell whether my indexation problems come from JavaScript?

Can I use lazy loading for my main content?

Are frameworks like Angular or Vue incompatible with this rule?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 05/10/2022
