Official statement

Other statements from this video 9 ▾

- □ Pourquoi Google remplace-t-il vos balises title par des H1 ?

- □ Google indexe-t-il vraiment les titres modifiés par JavaScript côté client ?

- □ Faut-il abandonner le rendu JavaScript côté client pour réussir son SEO ?

- □ L'outil d'inspection d'URL montre-t-il vraiment ce que Google voit lors du rendu JavaScript ?

- □ Le contenu modifié après le HTML initial pose-t-il vraiment problème pour l'indexation Google ?

- □ Le rendu côté serveur est-il vraiment plus rapide que le rendu côté client pour le SEO ?

- □ Google maîtrise-t-il vraiment le JavaScript ou reste-t-il des pièges à éviter ?

- □ Lighthouse peut-il vraiment diagnostiquer vos problèmes de rendu critique pour Google ?

- □ Faut-il vraiment crawler son site tous les trois mois pour éviter les problèmes techniques ?

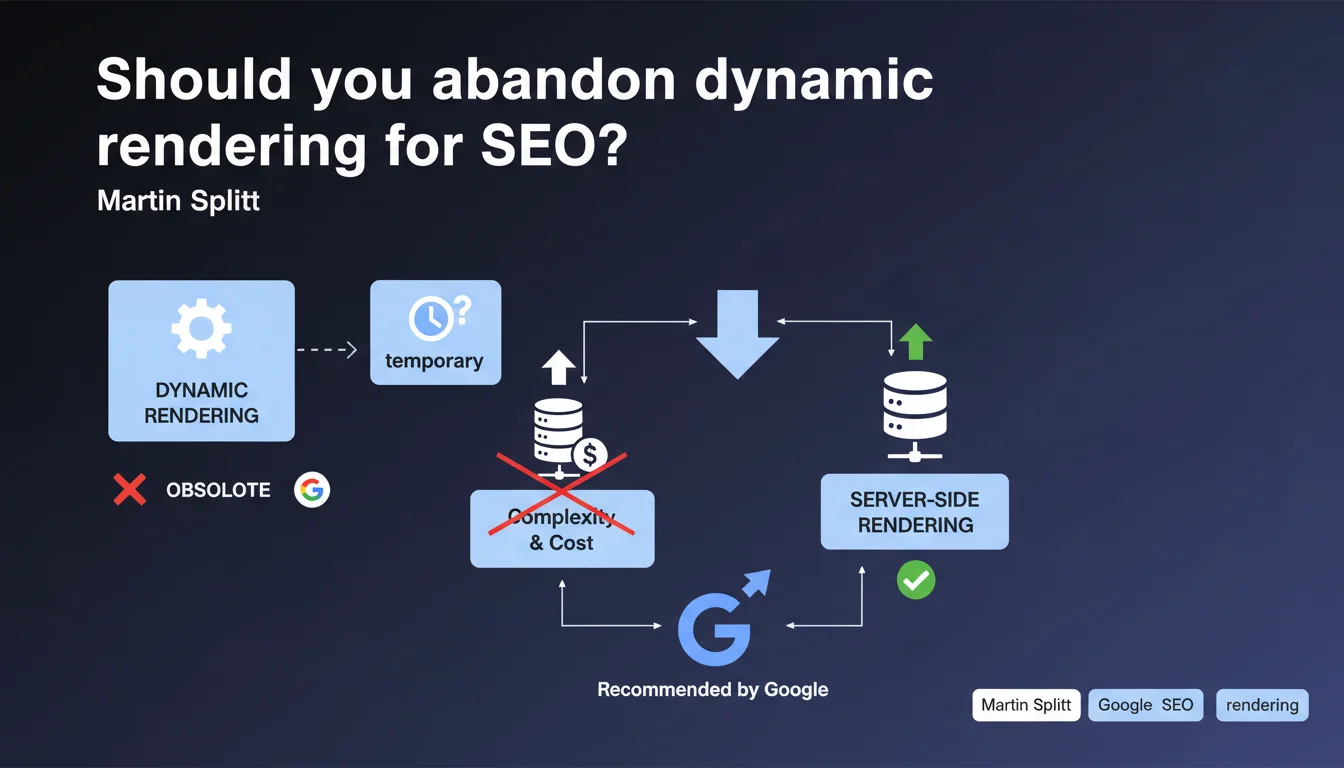

Google is officially burying dynamic rendering, relegating it to the status of an obsolete interim solution. Martin Splitt recommends server-side rendering, highlighting the complexity and infrastructure costs of dynamic rendering. Only those who technically cannot do otherwise should still use it.

What you need to understand

Why is Google now discouraging dynamic rendering?

Dynamic rendering involves serving a pre-rendered version of your pages to bots while keeping a JavaScript version for users. This approach was tolerated for years as a workaround for JS-heavy sites that Googlebot struggled to crawl effectively.
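To make the bot/user fork concrete, here is a minimal sketch of the decision a dynamic rendering layer has to make on every request. The crawler list, the function names and the variant labels are all hypothetical, chosen for illustration; a real setup usually delegates this to a prerender service or CDN rule.

```python
import re

# Hypothetical, deliberately short list of crawler tokens; real setups
# maintain a longer list and have to keep it up to date.
BOT_PATTERN = re.compile(r"googlebot|bingbot|duckduckbot|baiduspider", re.IGNORECASE)


def is_crawler(user_agent: str) -> bool:
    """Return True when the User-Agent header contains a known crawler token."""
    return bool(BOT_PATTERN.search(user_agent or ""))


def choose_variant(user_agent: str) -> str:
    """Pick which pipeline answers the request under dynamic rendering."""
    # Crawlers get static HTML from a prerender service or cache;
    # everyone else gets the JavaScript app shell.
    return "prerendered-html" if is_crawler(user_agent) else "client-side-app"


googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
chrome_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 Chrome/120.0 Safari/537.36"

print(choose_variant(googlebot_ua))
print(choose_variant(chrome_ua))
```

Every request passes through this branch, so a stale crawler list or a cache that ignores the User-Agent header is enough to serve the wrong version to the wrong audience.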

Except here's the thing: Googlebot's JavaScript rendering has improved, making this crutch less necessary. Martin Splitt drives the point home: dynamic rendering generates too much technical complexity and costs too much in infrastructure for a benefit that no longer justifies the investment.

What does Google recommend instead?

The official line: server-side rendering (SSR), static site generation (SSG), or hybrid setups that combine the two. These approaches serve pre-rendered HTML directly, without branching between bot and user. Less technical gymnastics, fewer points of failure.

Google is clearly pushing toward an architecture where what the crawler sees is what the user sees. Dynamic rendering breaks this principle — two versions, two pipelines, two potential sources of bugs.

When is dynamic rendering still acceptable?

The nuance: Google doesn't formally forbid it. If your technical stack makes SSR impossible in the short term (legacy code, organizational constraints, redesign outside budget), dynamic rendering remains tolerated as a temporary solution.

But "temporary" is the key word. Google is clearly signaling that this tolerance has an expiration date. No official timeline, but the message is unequivocal: prepare your migration.

- Dynamic rendering = deprecated solution, not forbidden but discouraged

- Google favors SSR/SSG to align what bots and users see

- Complexity and infrastructure costs cited as major obstacles

- Tolerance maintained only for those technically blocked in the short term

SEO Expert opinion

Is Google's position consistent with what we observe in the field?

Yes and no. On paper, Google is right: dynamic rendering adds a layer of complexity. Any expert who has debugged a site with broken user-agent detection or misconfigured CDN caching knows that every technical fork is a risk.

But in real life? Some JS-heavy sites continue to index better with dynamic rendering than by letting Googlebot fend for itself. [To be verified] Google claims its JavaScript rendering is solid, but field tests still show latencies, timeouts, and blocked resources that break rendering.

The major problem: Google doesn't publish transparent benchmarks. It's hard to know if your specific site would actually benefit from switching to SSR or if you're just trading one set of problems for another.

What nuances should be added to this statement?

Be careful about dogmatism. SSR isn't free either — it requires server expertise, adapted infrastructure, development time that not all teams have. Migrating a massive React SPA to Next.js with SSR is months of work.

Dynamic rendering can remain relevant in certain contexts: e-commerce sites with thousands of SKUs rendered client-side, platforms with highly personalized content, applications where SSR would break the user experience. Google says "temporary," but temporary could mean 18 months if your technical roadmap is full.

In what cases does this recommendation not fully apply?

Sites with ultra-dynamic content (real-time news feeds, personalized dashboards) where SSR provides no real SEO benefit. If your content changes every 30 seconds and doesn't need fine-grained indexing, this battle isn't about you.

SaaS applications behind login where SEO only applies to static marketing pages. There, a split is possible: SSR for public landing pages, classic SPA for the app itself. No need for dynamic rendering at all.

Practical impact and recommendations

What should you do concretely if you're using dynamic rendering?

First, audit your current situation. Is dynamic rendering still solving a real problem, or has it become technical debt you're dragging along by inertia? Test your site by temporarily disabling dynamic rendering on a few non-critical pages, then use the URL Inspection tool in Google Search Console (Google has since retired the standalone Mobile-Friendly Test) to see whether Googlebot copes well.

If the results are acceptable without dynamic rendering, plan a gradual migration. If Googlebot still struggles (missing content, JavaScript errors), you have some time — but still prepare an SSR/SSG roadmap for the medium term.

What mistakes should you avoid during this transition?

Don't switch everything at once. A sharp cutover from dynamic rendering to SSR on a large site = risk of massive traffic drops if something goes wrong. Test by segments: one category of pages, a subdomain, a percentage of traffic.
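One way to test by segments is to decide per URL, not per request, which pipeline answers. The sketch below assumes a hypothetical `use_ssr` helper sitting in front of both pipelines; the prefixes and percentage are placeholders to adapt.

```python
import hashlib


def rollout_bucket(path: str) -> int:
    """Map a URL path to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(path.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def use_ssr(path: str, ssr_prefixes: tuple[str, ...], percent: int) -> bool:
    """Decide whether a path is served by the new SSR pipeline."""
    # Segment rule: whole sections that have already been migrated.
    if path.startswith(ssr_prefixes):
        return True
    # Percentage rule: a stable share of the remaining URLs.
    return rollout_bucket(path) < percent


# Hypothetical rollout: the blog is fully migrated, plus 10% of other URLs.
for path in ("/blog/ssr-migration", "/products/red-shoes", "/products/blue-hat"):
    print(path, use_ssr(path, ("/blog/",), 10))
```

Hashing the path keeps the choice stable across requests, so a given URL always returns the same variant to Googlebot and its indexation can be compared before and after.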

Also avoid assuming SSR = automatically better. Some poorly configured SSR setups render so slowly on the server, or ship HTML so heavy, that TTFB and load times explode. An optimized dynamic rendering setup can beat a sloppy SSR. It's not magic.

And most importantly: don't drop your user-agent detection logs too quickly. Keep a period of dual monitoring (dynamic rendering + SSR) to compare indexation metrics, crawl patterns, rankings. Factual data matters more than Google's statements.
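For the dual-monitoring period, even a small log tally gives you a baseline to compare against. This sketch assumes access logs in the common "combined" format and uses made-up sample lines; the function name is hypothetical.

```python
import re
from collections import Counter

# Assumes the "combined" access log format; adjust the pattern to your server.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count response status codes for requests whose User-Agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        # The User-Agent string can be spoofed; this is a rough filter only.
        if match and "googlebot" in match.group("ua").lower():
            counts[match.group("status")] += 1
    return counts


# Made-up sample lines for illustration.
sample = [
    '66.249.66.1 - - [10/May/2022:10:00:00 +0000] "GET /products/1 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/May/2022:10:00:05 +0000] "GET /products/2 HTTP/1.1" 500 312 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/May/2022:10:00:09 +0000] "GET /products/1 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]
print(googlebot_status_counts(sample))
```

Run it on logs from before and after each migrated segment: a rise in 5xx responses or a drop in crawled URLs is a signal to pause the rollout.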

How can you verify that your implementation meets Google's expectations?

Use the URL Inspection Tool in Search Console. Compare the render "as seen by Google" with what a real user sees. If both versions are identical (same content, same structure), you're aligned with what Google recommends.
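The comparison can be partly automated once you have both HTML snapshots in hand. The sketch below assumes you have copied the rendered HTML from the URL Inspection tool and the DOM from a browser into two strings; the helper names are hypothetical, and the check only covers visible text, not structure.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            # Normalize whitespace so formatting differences do not count.
            self.chunks.append(" ".join(data.split()))


def visible_text(html: str) -> list[str]:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return parser.chunks


def missing_for_crawler(user_html: str, crawler_html: str) -> list[str]:
    """Return text chunks a user sees that are absent from the crawler's render."""
    seen = set(visible_text(crawler_html))
    return [chunk for chunk in visible_text(user_html) if chunk not in seen]


# Made-up snapshots for illustration.
user_html = "<h1>Red shoes</h1><p>In stock</p><script>var x = 1;</script>"
crawler_html = "<h1>Red shoes</h1><div id='app'></div>"
print(missing_for_crawler(user_html, crawler_html))
```

An empty result does not prove parity on its own, but any non-empty result points to content Googlebot is not seeing.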

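Monitor your Core Web Vitals after migration, along with lab metrics such as Total Blocking Time (TBT). Poorly optimized SSR can degrade LCP through slow server responses, and heavy hydration can inflate TBT. Google wants SSR, sure, but not at the cost of user experience, so the balance remains delicate.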
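A simple before/after comparison is enough to catch a regression early. Core Web Vitals are assessed at the 75th percentile, with 2.5 seconds as the "good" threshold for LCP; the sketch below assumes you already collect LCP samples in milliseconds (from real-user monitoring, for example), and the sample values are made up.

```python
import math

LCP_GOOD_MS = 2500  # Google's published "good" threshold for LCP


def percentile_75(samples):
    """Nearest-rank 75th percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def lcp_report(before_ms, after_ms):
    """Compare p75 LCP before and after a migration."""
    p75_before = percentile_75(before_ms)
    p75_after = percentile_75(after_ms)
    return {
        "p75_before": p75_before,
        "p75_after": p75_after,
        "regressed": p75_after > p75_before,
        "good_after": p75_after <= LCP_GOOD_MS,
    }


# Made-up samples for illustration.
report = lcp_report([1800, 2100, 2400, 3000], [1500, 1700, 1900, 2600])
print(report)
```

Running the same report per migrated segment ties a performance change to a specific rollout step.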

- Audit the real effectiveness of your current dynamic rendering

- Test native Googlebot rendering on non-critical pages before full migration

- Plan a phased SSR/SSG roadmap, by site segments

- Compare indexation/crawl performance before/after via Search Console

- Monitor Core Web Vitals during and after transition

- Keep logs and metrics from the old system during transition period

- Train technical teams on SSR specifics (caching, TTFB, hydration)

Source: Google Search Central video, published on 05/10/2022.
