Official statement

Other statements from this video 9 ▾

- □ Search Console est-elle vraiment LA référence pour mesurer le trafic organique Google ?

- □ Search Console ne mesure-t-elle vraiment que les données avant l'arrivée sur le site ?

- □ Pourquoi les clics Search Console et les sessions Analytics ne correspondent-ils jamais ?

- □ Pourquoi Search Console et Google Analytics affichent-ils des données contradictoires ?

- □ Pourquoi Search Console et Analytics affichent-ils des écarts de trafic sur vos contenus non-HTML ?

- □ Pourquoi les données de trafic diffèrent-elles entre Search Console et Analytics ?

- □ Pourquoi Search Console et Google Analytics affichent-ils des chiffres de trafic différents ?

- □ Faut-il s'inquiéter des écarts entre Search Console et Google Analytics ?

- □ Faut-il vraiment croiser les données de Search Console et Google Analytics pour optimiser son SEO ?

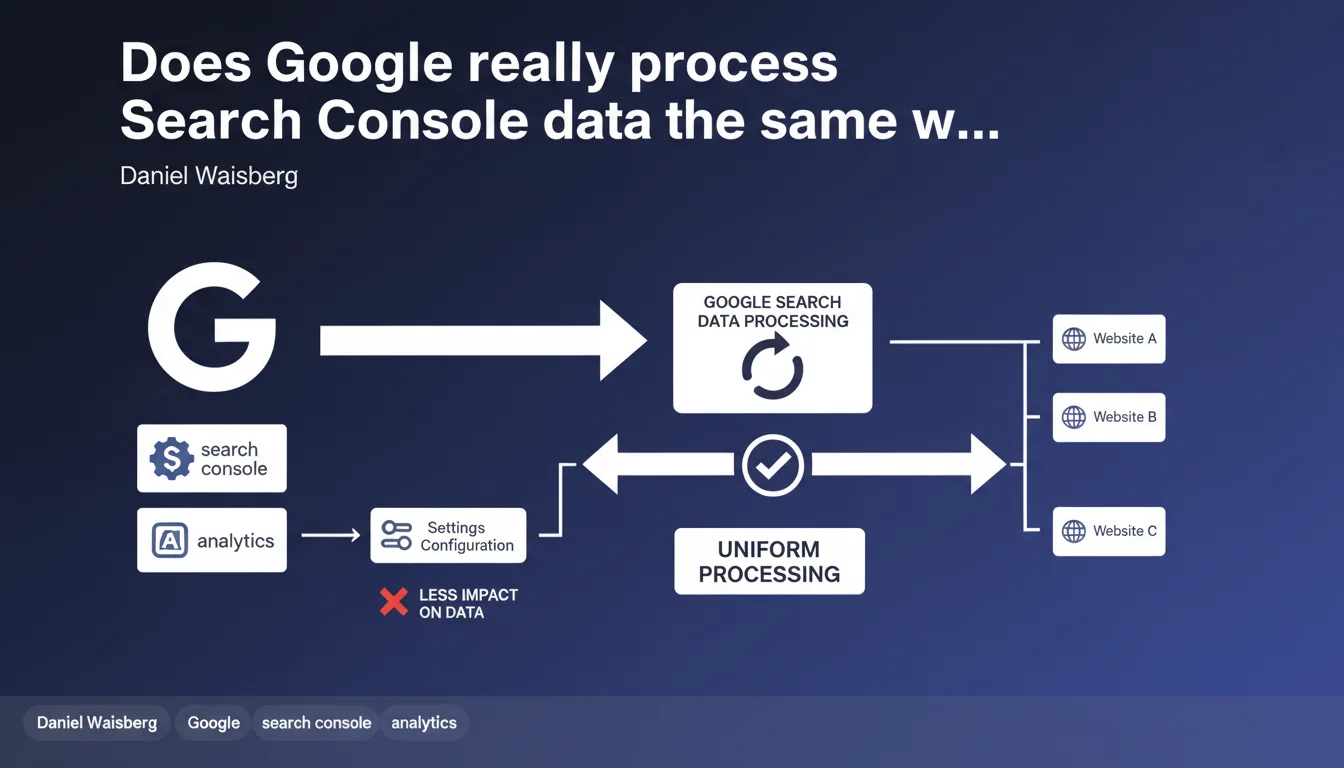

Google claims that Search Console applies uniform data treatment across all properties, unlike Google Analytics where configuration has a greater influence on metrics. The way you set up your Search Console property would therefore have limited impact on the data returned.

What you need to understand

Why does Google emphasize this uniform treatment?

Daniel Waisberg's statement aims to reassure Search Console users about the reliability of collected data. Unlike Google Analytics where tags, filters, and tracking parameters can substantially modify displayed metrics, Search Console relies directly on Google's server logs.

Concretely, regardless of how you configure your property — domain versus URL prefix, segmentation by subfolder — the raw data collected by Google remains identical. What changes is only how it's presented to you in the interface.

What does this mean for SEO reporting?

This uniformity means that the same aggregation rules apply to every site, so crawl statistics, detected errors, and Core Web Vitals signals are computed the same way from one property to the next. The discrepancies you observe between two sites are therefore more likely to reflect real performance differences than configuration artifacts.

It's an argument in favor of Search Console as a source of truth for organic visibility analysis, where Analytics can present biases depending on tracking code implementation or IP exclusion rules.

What are the limitations of this uniformity?

The statement remains vague about what "uniform treatment" exactly means. It most plausibly refers to the way metrics are aggregated and calculated, not to collection itself, which depends on crawling, and crawling varies enormously between sites.

- The data displayed in Search Console comes from Google search logs, so it's not influenced by your local technical configuration

- The granularity of data (grouping by page, query, country) remains identical regardless of the property type chosen

- Anonymization thresholds are applied uniformly: below a certain volume, Google masks data to protect privacy

- The data reporting latency (typically 24-48 hours) is standard for everyone

- Export limitations (maximum 1,000 rows in the interface) affect everyone equally

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. The uniformity of Google's processing is technically credible: the data does come from the same log pipelines. But saying that "configuration has less impact" remains a half-truth.

In practice, choosing between a domain property and a URL-prefix property radically changes what you see. A domain property aggregates all subdomains, while a URL-prefix property isolates a single segment. The raw data may be the same on Google's servers, but its usability for you differs completely.
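To make the difference concrete, here is a minimal Python sketch of which URLs each property type would cover. The function name `url_in_property` and the example URLs are hypothetical, and the prefix rule is simplified to a plain string comparison; the `sc-domain:` notation is the one Search Console uses to identify domain properties.

```python
from urllib.parse import urlsplit


def url_in_property(url: str, prop: str) -> bool:
    """Return True if `url` falls within the Search Console property `prop`.

    `prop` is either a domain property ('sc-domain:example.com') or a
    URL-prefix property ('https://www.example.com/blog/').
    Simplified illustration, not Google's actual matching logic.
    """
    if prop.startswith("sc-domain:"):
        domain = prop[len("sc-domain:"):].lower()
        host = (urlsplit(url).hostname or "").lower()
        # A domain property covers the bare domain and every subdomain,
        # whatever the protocol.
        return host == domain or host.endswith("." + domain)
    # A URL-prefix property only covers URLs starting with the exact prefix,
    # protocol and subdomain included.
    return url.startswith(prop)


# Hypothetical URLs for illustration only.
urls = [
    "https://www.example.com/blog/post-1",
    "https://shop.example.com/cart",
    "http://example.com/",
]

domain_view = [u for u in urls if url_in_property(u, "sc-domain:example.com")]
prefix_view = [u for u in urls if url_in_property(u, "https://www.example.com/blog/")]

print(len(domain_view), "URLs in the domain property")
print(len(prefix_view), "URL in the prefix property")
```

The same three URLs produce two very different reports: the underlying data is unchanged, but the property decides which slice of it you get to read.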

What nuances should we add?

The statement obscures a crucial point: Search Console applies anonymization thresholds so that certain queries or pages never appear in your reports if the volume is too low. Two sites can therefore have "uniformly treated" data but radically different visibility depending on their traffic.

Another blind spot: Core Web Vitals (CWV) data relies on the Chrome User Experience Report. A site with few Chrome visitors will have partial, or even absent, metrics. The treatment is uniform, certainly, but the results it produces are highly heterogeneous. [To verify] whether Google applies weighting or smoothing for small sites; nothing is publicly documented.

In what cases does this rule cause problems?

For multilingual or multi-regional sites, uniform treatment can work against you. If Google groups certain language versions under a single entity in its logs, you lose granularity, and no setting in Search Console will change that.

Practical impact and recommendations

What should you do concretely with this information?

First direct consequence: there's no point in multiplying Search Console properties hoping to get different data. Metrics will remain the same, only the presentation changes. Focus on a clear property structure that reflects your analysis needs (global domain versus separate subdomains).

Second point: don't try to "optimize" your Search Console data the way you would with Analytics. There are no filters to configure and no custom segments that modify the figures. What is reported is what Google recorded, which makes it a reliable source for auditing the reality of crawling and indexation.

How do you avoid misinterpretation errors?

The trap would be believing that "uniform treatment" means "exhaustive data". Search Console only shows you a sample of actual queries, especially for low-volume, long-tail searches. If a query doesn't appear, that doesn't mean it generates no traffic; it may simply fall below the anonymization threshold.
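One way to gauge how much traffic sits below that threshold is to compare the total clicks reported for a property with the sum of clicks across the queries that are actually listed. The sketch below assumes you have already retrieved both figures; the function name `hidden_click_share` and all numbers are hypothetical.

```python
def hidden_click_share(total_clicks: int, query_rows: list) -> float:
    """Estimate the share of clicks not attributed to any visible query.

    `total_clicks` is the property-level total; `query_rows` holds the
    per-query rows, each a dict with a 'clicks' key.
    """
    if total_clicks <= 0:
        return 0.0
    visible = sum(row["clicks"] for row in query_rows)
    # Clamp at zero in case the visible rows exceed the reported total.
    hidden = max(total_clicks - visible, 0)
    return hidden / total_clicks


# Hypothetical figures for illustration only.
rows = [
    {"query": "brand name", "clicks": 120},
    {"query": "product guide", "clicks": 45},
    {"query": "how to install", "clicks": 15},
]
share = hidden_click_share(300, rows)
print(f"{share:.0%} of clicks are not tied to a visible query")
```

A high share is not an error: it suggests that a large part of the traffic comes from queries too rare to be displayed.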

Another common mistake: comparing Search Console and Analytics directly and being alarmed by the gaps. The counting methodologies differ (clicks vs. sessions, source attribution, processing delays). "Uniform treatment" only concerns Search Console itself, not consistency across tools.
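Since the two tools count different things, a more useful check than expecting equal numbers is to watch whether the ratio between them stays stable over time. The following sketch assumes weekly totals exported from both tools; the figures, the 20% tolerance, and the function name `click_to_session_ratio` are all hypothetical.

```python
def click_to_session_ratio(clicks: int, sessions: int):
    """Sessions recorded per Search Console click, or None without clicks."""
    return sessions / clicks if clicks else None


# Hypothetical weekly totals for illustration only.
weekly = [
    {"week": "W1", "clicks": 1000, "sessions": 900},
    {"week": "W2", "clicks": 1100, "sessions": 990},
    {"week": "W3", "clicks": 1050, "sessions": 630},
]

ratios = [click_to_session_ratio(w["clicks"], w["sessions"]) for w in weekly]
baseline = ratios[0]  # first week taken as the reference ratio
TOLERANCE = 0.20  # arbitrary threshold: flag drifts above 20% of baseline

flagged = [
    w["week"]
    for w, r in zip(weekly, ratios)
    if r is not None and abs(r - baseline) / baseline > TOLERANCE
]
print("Weeks worth investigating:", flagged)
```

A constant gap is expected and harmless; a sudden change in the ratio is what may point to a tracking problem on the Analytics side.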

What strategy should you adopt to exploit this data?

Use Search Console as a technical health barometer: crawl errors, indexation coverage, CWV signals. These metrics reflect what Google actually sees, without configuration bias. For audience and conversion analysis, cross-reference with Analytics — the two tools are complementary, not competitors.

- Verify that your Search Console property properly covers all relevant subdomains if you use a domain property

- Don't create redundant properties — one per canonical version is enough (HTTPS preferred)

- Regularly export data via the API to bypass the 1,000-row limit and maintain clean historical records

- Monitor alert messages in Search Console: manual actions (penalties), indexation errors, security issues

- Cross-reference Search Console data with your server logs to identify crawled but non-indexed pages
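The API export recommended in the list above comes down to paginating through results. Here is a minimal sketch of that loop, assuming a `fetch_page(start_row, row_limit)` callable that returns a list of rows. With the Search Analytics API, this callable would wrap a query whose request body carries the `startRow` and `rowLimit` fields; here it is replaced by a hypothetical in-memory stand-in so the example runs without credentials.

```python
from typing import Callable, Dict, List

# Assumed per-request cap; check the current API documentation for the exact limit.
PAGE_SIZE = 25000


def fetch_all_rows(
    fetch_page: Callable[[int, int], List[Dict]],
    page_size: int = PAGE_SIZE,
) -> List[Dict]:
    """Collect every row by requesting successive pages until one comes back short."""
    rows: List[Dict] = []
    start_row = 0
    while True:
        page = fetch_page(start_row, page_size)
        rows.extend(page)
        # A page shorter than requested (or empty) means there is nothing left.
        if len(page) < page_size:
            break
        start_row += page_size
    return rows


# Hypothetical stand-in for the real API call, for illustration only.
FAKE_DATA = [{"keys": [f"query {i}"], "clicks": i} for i in range(2500)]


def fake_fetch_page(start_row: int, row_limit: int) -> List[Dict]:
    return FAKE_DATA[start_row:start_row + row_limit]


all_rows = fetch_all_rows(fake_fetch_page, page_size=1000)
print(len(all_rows), "rows retrieved")
```

Passing the fetch function as a parameter keeps the pagination logic separate from authentication and makes it easy to test before plugging in a real client.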

Source: Google Search Central video, published on 29/01/2025
