Official statement

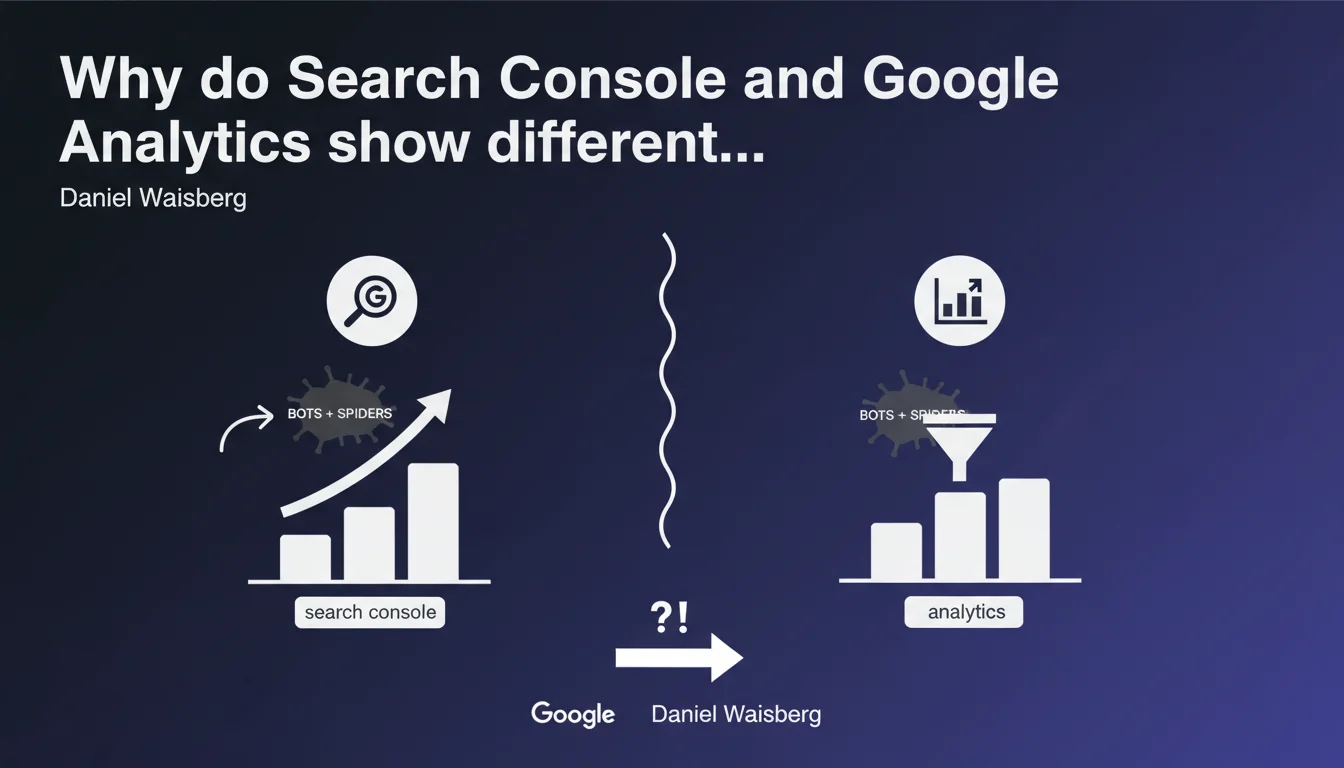

Google Analytics automatically filters out traffic from known bots and spiders, whereas Search Console doesn't systematically do so. This methodological difference is one reason the two tools report different traffic volumes, and it means your Search Console data may include non-human visits.

What you need to understand

What is the fundamental difference between the two tools?

Google Analytics applies an automatic filter to exclude traffic identified as coming from bots, crawlers and other non-human agents. This approach aims to provide a view of actual user behavior.

Search Console, on the other hand, doesn't necessarily filter out these visits. The tool aggregates organic performance data as Google sees it — impressions, clicks, positions — without systematically distinguishing between human and automated traffic.

Why does this distinction pose a problem for SEOs?

Because we end up with two sources of truth that don't tell the same story. When a client compares GA sessions and GSC clicks, the gap can reach 20-30%, or even more on certain sites.

The problem isn't that one tool is wrong. It's that their data collection methodologies differ fundamentally — GA measures what happens on the site, GSC measures what Google sees in its SERPs.
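To make the comparison concrete, the gap can be expressed as a share of GSC clicks. The following is a minimal sketch, assuming you have already exported total GSC clicks and GA organic sessions for the same period. The function name `relative_gap` and the figures are illustrative and do not come from either tool.

```python
def relative_gap(gsc_clicks: int, ga_sessions: int) -> float:
    """Return the GSC/GA gap as a fraction of GSC clicks.

    Positive: GSC reports more than GA.
    Negative: GA reports more than GSC.
    """
    if gsc_clicks <= 0:
        raise ValueError("gsc_clicks must be positive")
    return (gsc_clicks - ga_sessions) / gsc_clicks


# Illustrative figures only (hypothetical export for one month).
gap = relative_gap(gsc_clicks=10_000, ga_sessions=7_500)
print(f"{gap:.0%}")  # 25%
```

Keeping the sign matters: a positive value and a negative value call for different investigations.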

In which cases does this gap become significant?

On sites heavily crawled by third-party bots (price aggregators, SEO tools, scraping), the gap widens. News sites, e-commerce and directories are particularly affected.

Another common case: sites with heavy JavaScript. If the GA tag doesn't load properly, some real clicks aren't tracked, whereas GSC still counts them. The gap then widens for the opposite reason: GA undercounts real visits rather than GSC including non-human ones.

- GA automatically filters known bots, GSC doesn't do so systematically

- The gap between the two tools can reach 20-30% depending on site types

- Sites heavily crawled or with heavy JS show increased divergences

- Neither of the two tools is "wrong" — they simply measure different things

SEO Expert opinion

Is this statement consistent with field observations?

Yes, absolutely. For years, practitioners have observed these systematic gaps between GA and GSC without always understanding why. Waisberg's statement finally clarifies this point.

But let's be honest: this explanation remains incomplete. Google doesn't specify which types of bots are filtered in GA, or according to which criteria. We know that "legitimate" crawlers (Googlebot, Bingbot) are normally excluded, but what about aggressive scrapers or third-party SEO tools? [To be verified]

What nuances should be added to this assertion?

First point: the GA/GSC gap isn't explained solely by bots. Other factors come into play — tracking latency, GA filters (geographical, internal IPs), poorly configured UTM parameters, redirects that break tracking.

Second point: saying that GSC "doesn't necessarily filter" is ambiguous. In practice, Google does filter certain types of suspicious traffic in GSC (detected fraudulent clicks, for example), but not as systematically as GA does. This gray area creates methodological confusion.

In which cases does this rule change the game?

For SEO audits, this distinction becomes critical. If you notice abnormally high GSC traffic without equivalent conversion in GA, the problem may not be a tracking issue — it may be bot traffic.

Conversely, GA organic traffic that exceeds GSC clicks may point to incomplete Search Console data rather than a GA problem: improperly configured canonical tags, a GSC property that doesn't cover the whole site, or domain filters that hide part of the clicks.

Practical impact and recommendations

What should you concretely do to correctly interpret this data?

First step: never directly compare the raw figures from GA and GSC. These tools measure different things with different methodologies. Accept a 10-20% gap as normal.

Second step: identify the gap patterns. If the gap widens suddenly, that's a signal. Check server logs to detect increased bot activity or a recent GA tracking issue.
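One way to spot a sudden widening is to compare each day's gap with a trailing baseline. This is a rough sketch, assuming a list of daily gap fractions you computed yourself. The function name, the 7-day window, the threshold of 3 standard deviations and the `min_sigma` floor are all arbitrary illustrative choices, not values recommended by Google.

```python
from statistics import mean, stdev


def flag_gap_spikes(daily_gaps, window=7, threshold=3.0, min_sigma=0.01):
    """Return indices of days whose gap deviates sharply from the trailing window.

    daily_gaps: list of floats, e.g. 0.15 for a 15% gap on that day.
    min_sigma: floor on the standard deviation so a flat baseline
               neither divides by zero nor flags tiny fluctuations.
    """
    flagged = []
    for i in range(window, len(daily_gaps)):
        baseline = daily_gaps[i - window:i]
        mu = mean(baseline)
        sigma = max(stdev(baseline), min_sigma)
        if abs(daily_gaps[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical series: a stable gap around 15%, with one jump to 35%.
gaps = [0.15, 0.14, 0.16, 0.15, 0.17, 0.14, 0.15, 0.16, 0.35, 0.15]
print(flag_gap_spikes(gaps))  # [8]
```

A flagged day is only a prompt to look at the server logs and the GA tag. It says nothing about the cause.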

What errors should you avoid when analyzing these metrics?

Classic mistake: using GSC as the absolute source of truth for SEO traffic. GSC measures the clicks Google records, which is not necessarily what actually arrives on your server.

Another pitfall: ignoring legitimate bots in your server load analyses. If GSC shows a high click volume but GA remains low, your servers are still under that load — even if it's bot traffic.

How do you reconcile these two data sources?

Use server logs as the arbiter. They show exactly who's knocking on the door — humans, Googlebot, scrapers. Compare with GSC and GA to identify divergences.
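As a starting point for that log review, requests can be bucketed by user agent. This is a simplified sketch, assuming your server writes the common "combined" access log format. The `BOT_MARKERS` list and the category names are hypothetical choices for illustration. User-agent strings can be spoofed, so a match on "googlebot" is only a hint: confirming a real Googlebot hit requires a further check such as a reverse DNS lookup.

```python
import re
from collections import Counter

# In the combined log format, the user agent is the last quoted field.
LOG_LINE = re.compile(
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"$'
)

# Illustrative substrings only; a real list would be longer and maintained.
BOT_MARKERS = ("bot", "crawler", "spider", "scrapy", "python-requests", "curl")


def classify(line: str) -> str:
    """Bucket one access-log line by its declared user agent."""
    match = LOG_LINE.search(line.strip())
    if match is None:
        return "unparsed"
    agent = match.group("agent").lower()
    if "googlebot" in agent:
        return "googlebot"  # declared, not verified
    if any(marker in agent for marker in BOT_MARKERS):
        return "other_bot"
    return "likely_human"


# Hypothetical log lines using documentation-reserved IP ranges.
sample = [
    '203.0.113.5 - - [29/Jan/2025:10:00:00 +0000] "GET /page HTTP/1.1" 200 5123 '
    '"https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) '
    'AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"',
    '192.0.2.10 - - [29/Jan/2025:10:00:01 +0000] "GET /page HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '198.51.100.7 - - [29/Jan/2025:10:00:02 +0000] "GET /page HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)"',
]
counts = Counter(classify(line) for line in sample)
print(dict(counts))
```

Substring matching only catches bots that identify themselves, so the "likely_human" bucket is an upper bound on human traffic.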

Set up a unified dashboard that cross-references GSC (organic performance), GA4 (user behavior) and server logs (technical reality). This is the only way to get a complete picture.
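The data behind such a dashboard can be as simple as one row per day joining the three sources. This is a bare-bones sketch, assuming each source has already been exported into a dictionary keyed by ISO date. The `DailyRow` structure, the field names and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DailyRow:
    date: str
    gsc_clicks: int      # from a Search Console export
    ga_sessions: int     # from a GA4 organic sessions export
    log_human_hits: int  # from your own log classification
    log_bot_hits: int


def build_rows(gsc, ga, logs):
    """Join the three sources on date, keeping only dates present in all of them."""
    shared_dates = sorted(set(gsc) & set(ga) & set(logs))
    return [
        DailyRow(
            date=d,
            gsc_clicks=gsc[d],
            ga_sessions=ga[d],
            log_human_hits=logs[d]["human"],
            log_bot_hits=logs[d]["bot"],
        )
        for d in shared_dates
    ]


# Hypothetical exports; GA has one extra day that the join drops.
gsc = {"2025-01-27": 1200, "2025-01-28": 1150}
ga = {"2025-01-27": 1010, "2025-01-28": 980, "2025-01-29": 995}
logs = {
    "2025-01-27": {"human": 1100, "bot": 400},
    "2025-01-28": {"human": 1060, "bot": 950},
}
for row in build_rows(gsc, ga, logs):
    print(row)
```

Restricting the join to shared dates avoids comparing a day where one source has not finished processing its data.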

- Accept a 10-20% gap between GA and GSC as normal

- Systematically cross-reference GSC, GA4 and server logs for critical analyses

- Monitor sudden variations in the gap — signal of a technical issue or bot activity

- Never use GSC alone to calculate ROI or justify a budget

- Set up automatic alerts for abnormal gaps between the two tools

- Document your analysis methodology for stakeholders (clients, management)

❓ Frequently Asked Questions

What gap between GA and GSC is considered normal?

Do SEO bots such as Ahrefs or Semrush distort GSC data?

Why does GA4 sometimes show more traffic than GSC?

Should bots be filtered out of server logs for SEO analysis?

Can Google improve the consistency between GA and GSC?

Other SEO insights extracted from this same Google Search Central video · published on 29/01/2025
