Official statement

Other statements from this video (8)

- Does Google really support JavaScript for SEO, or is it an illusion?

- Does JavaScript really slow down the indexing of your site?

- Why is your site's JavaScript configuration a critical checkpoint for Google?

- Should you really choose SSR or CSR depending on the type of site?

- Do you really need to master Chrome DevTools to do technical SEO?

- Do you really need to master how browsers work to do technical SEO?

- Should you really rely solely on Google's official documentation?

- Why should traffic never be your only SEO metric?

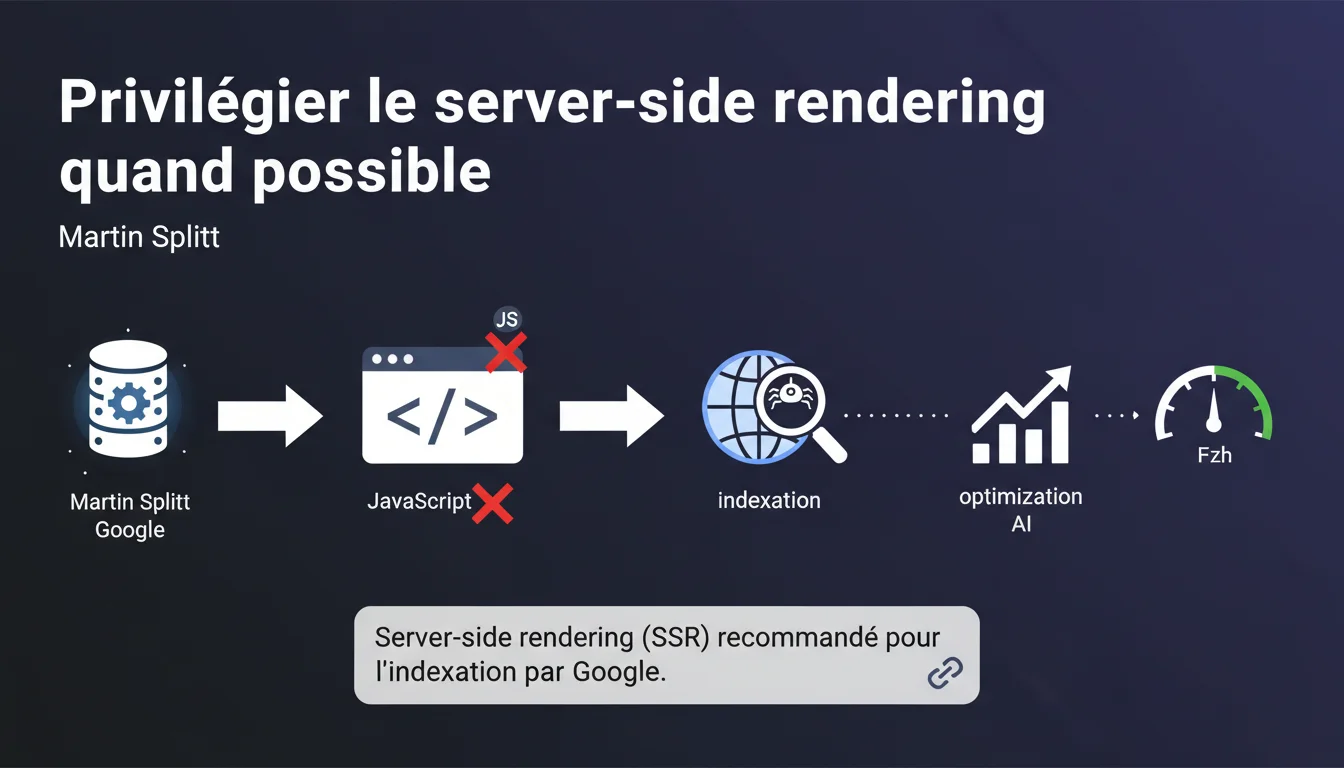

Google officially recommends server-side rendering (SSR) over client-side JavaScript rendering to make indexing easier. Martin Splitt is clear: if a site can be built without JavaScript, that is the way to go. This stance runs counter to the current trend toward JavaScript-heavy modern frameworks.

What you need to understand

Why does Google put so much emphasis on SSR?

Martin Splitt's statement reveals a strong preference for server-generated content. Googlebot can execute JavaScript, but that capability has limits: rendering consumes computing resources, adds processing delay, and can fail. SSR removes this friction by delivering HTML that is usable as-is.

In practice, an SSR site returns its complete content in the response to the first HTTP request. Nobody has to wait for the browser to download, parse, and execute JavaScript before text, links, or meta tags appear. The crawler can index what it sees immediately.
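The TypeScript sketch below makes the difference concrete. It is illustrative only: the `Article` type and both render functions are hypothetical. The first function returns a server-rendered page whose title, meta description, text, and link are all in the initial response. The second returns a typical client-side shell whose content only exists after `/bundle.js` runs.

```typescript
// Hypothetical example contrasting what a crawler receives in the first
// HTTP response from a server-rendered page versus a client-rendered shell.

interface Article {
  title: string;
  body: string;
  relatedUrl: string;
}

// Escape text before interpolating it into HTML (text nodes and attributes).
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// SSR: title, meta description, text and links are in the first response.
function renderServerSide(article: Article): string {
  return `<!doctype html>
<html>
<head>
  <title>${escapeHtml(article.title)}</title>
  <meta name="description" content="${escapeHtml(article.body.slice(0, 150))}">
</head>
<body>
  <h1>${escapeHtml(article.title)}</h1>
  <p>${escapeHtml(article.body)}</p>
  <a href="${escapeHtml(article.relatedUrl)}">Related article</a>
</body>
</html>`;
}

// CSR: the first response is an empty shell. The content only appears after
// /bundle.js is downloaded and executed by a browser or a rendering service.
function renderClientShell(): string {
  return `<!doctype html>
<html>
<head><title>Loading...</title></head>
<body>
  <div id="root"></div>
  <script src="/bundle.js"></script>
</body>
</html>`;
}
```

If you requested each version with `curl`, the first response would contain the article text and link. The second would contain only an empty `div` and a script tag.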

Does this recommendation apply to all types of sites?

Google remains pragmatic: “if a site can be built without JavaScript”. This phrasing leaves room for complex web applications (dashboards, SaaS, interactive tools) where JS is structurally necessary.

For a blog, a brochure site, or a classic e-commerce site, however, SSR becomes the expected norm. The problem is that many JavaScript frameworks and site builders default to client-side rendering (CSR) in a single-page application (SPA) architecture, which conflicts with this recommendation.

What are the real risks of client-side JavaScript?

- Indexing delays: content may take longer to be rendered and indexed, sometimes several days or even weeks

- Wasted crawl budget: Googlebot consumes additional resources to render JS, to the detriment of other pages

- Silent errors: a script that fails in production can render content invisible to the bot without your knowledge

- Cache issues: CDNs and proxies may serve non-rendered versions to bots

- No fallback: if JS doesn't execute (timeout, error), the page remains blank for the crawler

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes. Tests run by SEO practitioners show that SSR can significantly speed up indexing, sometimes cutting the delay from several days to a few hours. Sites migrated from CSR to SSR often report improvements in crawling and content freshness.

However, Google remains vague on one point: the correlation between SSR and ranking. Splitt talks about indexing, not positioning. [To be verified]: does SSR directly impact rankings or only the speed of indexing? Public data is lacking on this precise point.

In what cases does this rule not strictly apply?

Interactive web applications (Gmail, Google Docs, SaaS tools) cannot reasonably function without client-side JavaScript. Google knows this and does not expect them to implement pure SSR.

The trap: many sites mentally place themselves in this category when they are simply content or e-commerce sites. Dynamic product filtering or a contact form does not justify a full SPA. Let's be honest: by our estimate, around 80% of websites have no technical reason to rely on CSR. It is a stack choice, not a functional necessity.
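Here is one way dynamic filtering can work without client-side rendering. This is a TypeScript sketch in which the catalog, the `/shoes` route, and the `color`/`maxPrice` parameters are all hypothetical. The server reads the filters from the query string and returns finished HTML. A plain GET form submits them, so every filtered view is an ordinary, crawlable URL.

```typescript
// Hypothetical catalog; in a real site this would come from a database.
interface Product {
  name: string;
  color: string;
  price: number;
}

const catalog: Product[] = [
  { name: "Trail shoe", color: "blue", price: 90 },
  { name: "Road shoe", color: "red", price: 120 },
  { name: "Sandal", color: "blue", price: 40 },
];

// Apply filters from a query string such as "?color=blue&maxPrice=100".
// Empty or missing parameters mean "no filter".
function filterProducts(products: Product[], query: string): Product[] {
  const params = new URLSearchParams(query); // a leading "?" is ignored
  const color = params.get("color") || null;
  const raw = params.get("maxPrice");
  const maxPrice =
    raw !== null && raw.trim() !== "" && Number.isFinite(Number(raw)) ? Number(raw) : Infinity;
  return products.filter((p) => (color === null || p.color === color) && p.price <= maxPrice);
}

// Server-rendered listing: the form works with JavaScript disabled.
// (Product names are trusted constants here, so they are not escaped.)
function renderListing(query: string): string {
  const items = filterProducts(catalog, query)
    .map((p) => `<li>${p.name} (${p.color}, ${p.price})</li>`)
    .join("\n");
  return `<form method="get" action="/shoes">
  <select name="color">
    <option value="">Any</option>
    <option value="blue">Blue</option>
    <option value="red">Red</option>
  </select>
  <input name="maxPrice" type="number">
  <button type="submit">Filter</button>
</form>
<ul>
${items}
</ul>`;
}
```

Client-side JavaScript can still be layered on top, for example to update results without a full reload. The baseline HTML, however, no longer depends on it.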

What nuance should be added to the SSR recommendation?

SSR is not binary. There is a spectrum: pure SSR, partial hydration, static rendering, pre-rendering, and ISR (Incremental Static Regeneration). All these models deliver HTML generated on the server, either at request time or at build time, but with different trade-offs in performance and freshness.
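ISR sits between static rendering and per-request SSR. The sketch below is a simplified model in TypeScript, not Next.js's actual implementation, and `createIsrCache` and its parameters are hypothetical. Each path is served from a stored HTML snapshot. Once the snapshot is older than `revalidateMs`, the current request still gets the stale copy while the snapshot is rebuilt for later requests.

```typescript
// Simplified model of Incremental Static Regeneration (hypothetical names).
type Renderer = (path: string) => string;

interface Snapshot {
  html: string;
  generatedAt: number; // timestamp in milliseconds
}

function createIsrCache(
  render: Renderer,
  revalidateMs: number,
  now: () => number = Date.now,
): (path: string) => string {
  const snapshots = new Map<string, Snapshot>();

  return (path: string): string => {
    const t = now();
    const existing = snapshots.get(path);
    if (!existing) {
      // First request for this path: render once and store the result.
      const html = render(path);
      snapshots.set(path, { html, generatedAt: t });
      return html;
    }
    if (t - existing.generatedAt >= revalidateMs) {
      // Stale: this request still receives the old HTML, and the snapshot is
      // rebuilt for subsequent requests. A production server would do this
      // in the background rather than on the request path.
      snapshots.set(path, { html: render(path), generatedAt: t });
    }
    return existing.html;
  };
}
```

The trade-off is visible in the model. Most requests cost no rendering work, but visitors and crawlers can see content up to one revalidation window old.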

Practical impact and recommendations

What specific actions should you take to transition to SSR?

The answer depends on your current stack. A WordPress or Drupal site already renders on the server by default. Problems usually come from plugins or themes that inject critical content with JavaScript. Audit what loads after the initial HTML.
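A first audit can be automated. The TypeScript sketch below (the helper names are hypothetical) takes the raw HTML returned by the server before any JavaScript runs, together with a list of phrases that must be indexable, and reports which phrases are missing. The regex-based tag stripping is deliberately crude and does not decode HTML entities, so treat it as a quick check rather than an HTML parser.

```typescript
// Crude text extraction: drop scripts, styles and tags, collapse whitespace.
function visibleText(html: string): string {
  return html
    .replace(/<script[\s\S]*?<\/script>/gi, " ")
    .replace(/<style[\s\S]*?<\/style>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// Return the critical phrases that are NOT present in the server's raw HTML,
// i.e. content that can only appear after client-side JavaScript runs.
function missingFromInitialHtml(rawHtml: string, criticalPhrases: string[]): string[] {
  const text = visibleText(rawHtml).toLowerCase();
  return criticalPhrases.filter((phrase) => !text.includes(phrase.toLowerCase()));
}

// Usage sketch (Node 18+ has a built-in fetch; URL and phrases are placeholders):
//   const raw = await (await fetch("https://www.example.com/product")).text();
//   console.log(missingFromInitialHtml(raw, ["Product name", "Add to cart"]));
```

Any phrase this reports is being injected after the initial HTML. That usually points to the plugin, theme, or widget responsible.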

For sites built with React, Vue, or Angular, Next.js, Nuxt.js, and Angular Universal enable SSR without a complete rewrite. Be careful, though: client-side hydration remains costly if it ships very large JavaScript bundles. SSR does not solve everything if Time to Interactive skyrockets.
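Hydration carries a cost even when the HTML is complete. The framework-agnostic TypeScript sketch below shows what a typical SSR-plus-hydration response contains. The `window.__INITIAL_STATE__` global and the `/app.js` bundle are hypothetical names, not any specific framework's API. The page holds the rendered markup that crawlers index, plus the same data serialized a second time so the client bundle can attach to it. Both the payload and the bundle add weight.

```typescript
interface PageData {
  title: string;
  items: string[];
}

function escapeHtml(text: string): string {
  return text.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Serialize state for an inline <script>. Escaping "<" as \u003c keeps a value
// like "</script>" from closing the script tag early; JSON.parse and
// JavaScript both read \u003c back as "<".
function serializeState(data: PageData): string {
  return JSON.stringify(data).replace(/</g, "\\u003c");
}

function renderWithHydration(data: PageData): string {
  const list = data.items.map((item) => `<li>${escapeHtml(item)}</li>`).join("");
  return `<!doctype html>
<html>
<head><title>${escapeHtml(data.title)}</title></head>
<body>
  <div id="root"><h1>${escapeHtml(data.title)}</h1><ul>${list}</ul></div>
  <script>window.__INITIAL_STATE__ = ${serializeState(data)};</script>
  <script src="/app.js" defer></script>
</body>
</html>`;
}
```

Crawlers get everything from the `#root` markup. The inline state and `/app.js` only matter for interactivity, which is why keeping them small protects responsiveness without affecting what gets indexed.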

What mistakes should be avoided when migrating to SSR?

First mistake: confusing SSR with performance. A poorly implemented SSR setup (slow server, high TTFB) can perform worse than a well-optimized CSR site. SSR shifts the rendering work from the browser to the server, so the server must keep up.

Second trap: forgetting about caching. SSR without a caching strategy (CDN, edge rendering, application cache) will saturate your servers as traffic grows. Static rendering or ISR can be more robust alternatives for semi-dynamic content.
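One common caching layer for server-rendered HTML is a CDN driven by `Cache-Control` headers. The TypeScript sketch below maps page types to headers. `s-maxage` applies only to shared caches such as CDNs, and `stale-while-revalidate` allows a cache to serve a stale copy while it refetches. CDN support for these directives varies, and the page types and durations shown are illustrative assumptions, not recommendations.

```typescript
// Hypothetical page categories for an SSR site.
type PageType = "article" | "category" | "product" | "account";

// Build a Cache-Control header so the CDN, not the origin, absorbs most traffic.
function cacheControlFor(page: PageType): string {
  switch (page) {
    case "article":
      // Rarely changes: keep it at the edge for a day (86400 s).
      return "public, max-age=0, s-maxage=86400, stale-while-revalidate=3600";
    case "category":
    case "product":
      // Prices and stock change: short edge cache, refreshed in the background.
      return "public, max-age=0, s-maxage=300, stale-while-revalidate=60";
    case "account":
      // Personalized HTML must never be stored by shared caches.
      return "private, no-store";
  }
}
```

With headers like these, the origin renders each cacheable page roughly once per edge cache window rather than once per visit. That is the load reduction the paragraph above is about.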

How can I check if my site is correctly rendered server-side?

- Disable JavaScript in Chrome DevTools and browse your site: all critical content should still be visible

- View the page source (Ctrl+U): content should be present in the initial HTML, not injected afterwards

- Use the “URL Inspection” tool in Search Console to see what Googlebot really receives

- Compare "View Page Source" with "Inspect Element": if they differ massively, your content is rendered client-side (CSR)

- Test with curl or wget: if the content doesn’t appear in the raw HTTP response, it’s client-side JS

- Check server-side metrics: SSR generates CPU load on the server for each uncached request, while CSR mostly serves static files
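The "View Page Source vs Inspect Element" comparison can also be quantified. In the TypeScript sketch below (the helper names are hypothetical), you supply the raw HTML, from View Page Source or `curl`, and the rendered DOM, from DevTools via "Copy outerHTML". It computes the share of distinct rendered words that are absent from the raw response. Text extraction is regex-based and crude, and any threshold you apply to the ratio is your own judgment call.

```typescript
// Extract a set of lowercase words from HTML (scripts, styles and tags removed).
function words(html: string): Set<string> {
  const text = html
    .replace(/<script[\s\S]*?<\/script>/gi, " ")
    .replace(/<style[\s\S]*?<\/style>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .toLowerCase();
  return new Set(text.split(/\W+/).filter((w) => w.length > 0));
}

// Share (0..1) of distinct words in the rendered DOM that do not appear in
// the raw server response, i.e. text that only exists after client-side JS.
function clientOnlyRatio(rawHtml: string, renderedHtml: string): number {
  const raw = words(rawHtml);
  const rendered = words(renderedHtml);
  if (rendered.size === 0) return 0;
  let missing = 0;
  rendered.forEach((w) => {
    if (!raw.has(w)) missing += 1;
  });
  return missing / rendered.size;
}
```

A ratio near 0 suggests the visible text is server-rendered. A high ratio (for example above 0.5, an arbitrary cut-off) means much of the page is injected client-side and is worth investigating.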

Google's message is clear: SSR should be the norm, not the exception. If your site loads critical content via client-side JavaScript, you are taking an unnecessary indexing risk.

The transition to SSR can be technically complex depending on your current stack: it often involves architectural choices, infrastructure adjustments, and rigorous validation. If these challenges exceed your internal resources, working with a specialized SEO agency can accelerate the transition while avoiding classic technical pitfalls. The goal remains the same: to make your content immediately accessible to crawlers, without compromise.

❓ Frequently Asked Questions

Is SSR mandatory to be indexed by Google?

Does a Next.js or Nuxt.js site automatically use SSR?

Is dynamic rendering an acceptable alternative to SSR?

How can I test whether my content is actually server-rendered?

Does SSR directly affect ranking in Google?


Other SEO insights extracted from this same Google Search Central video · published on 29/12/2021
