Official statement


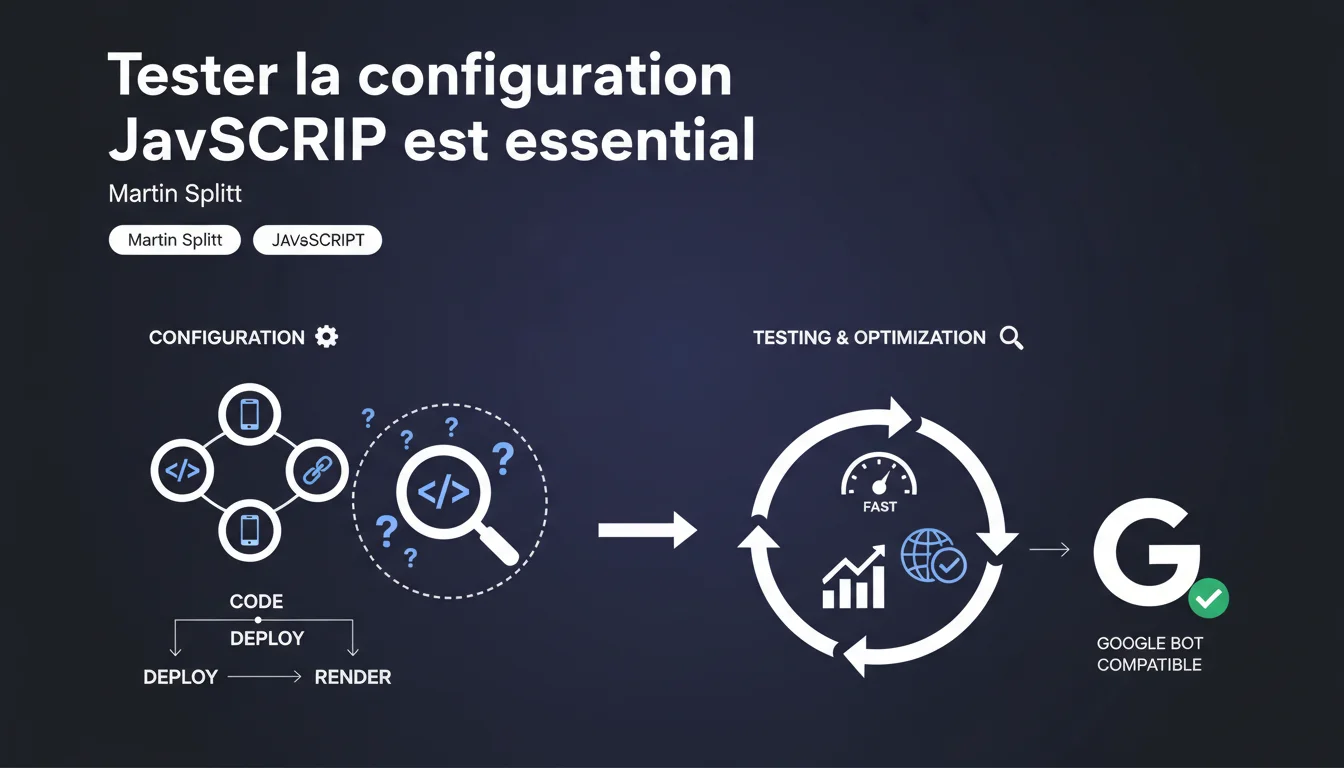

Google emphasizes that JavaScript sites need to follow strict technical best practices and undergo extensive testing to be properly crawled and indexed. Without rigorous validation of your setup, you risk indexing losses that are invisible at first glance.

What you need to understand

Why does Google place such importance on testing JavaScript configuration?

Crawling and indexing JavaScript content remains fundamentally different from processing traditional HTML. Google must execute the code, wait for resources to load, and build the DOM, a process that multiplies the potential points of failure.

A configuration error (blocked resources, timeouts, missing dependencies) can make your content invisible to Googlebot while everything appears to work for users. This is why testing gets so much emphasis: what works in a regular browser session can fail silently during crawling.

What technical best practices are being discussed?

Google deliberately stays vague on the details, but these practices are generally understood to cover server-side rendering or pre-generation (SSR/SSG), optimization of critical resources, state management, and proper DOM hydration.

The issue? Each framework (React, Vue, Angular, Next, Nuxt…) has its own requirements and pitfalls. A "best practice" for Next.js does not necessarily apply as-is to a vanilla SPA.

How can you identify if your site is problematic for Google?

The official tools (Search Console, the Mobile-Friendly Test, URL Inspection) show the final rendering seen by Googlebot. They do not catch everything, however: intermittent timeouts, non-blocking JS errors, and late-loaded content can slip through.

You need to cross-reference multiple sources: server logs, analysis of actual crawls, a comparison of the source DOM with the rendered DOM, and monitoring of Core Web Vitals under real-world conditions.
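The source-versus-rendered comparison mentioned above can be approximated with a short script. This is a minimal sketch, assuming you already hold two HTML snapshots of the same URL: the raw server response and the rendered DOM (for example, exported from a headless browser or copied from the URL Inspection tool). The function names and the list of "essential" phrases are hypothetical; the script only reports which phrases exist after rendering but are absent from the initial HTML.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes, ignoring the contents of non-rendered elements."""

    SKIP_TAGS = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # collapse runs of whitespace
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def normalize(text: str) -> str:
    return " ".join(text.split()).lower()


def visible_text(html: str) -> str:
    """Normalized, lower-cased visible text of an HTML document."""
    extractor = VisibleTextExtractor()
    extractor.feed(html)
    extractor.close()
    return normalize(" ".join(extractor.chunks))


def rendered_only(source_html: str, rendered_html: str, essential: list[str]) -> list[str]:
    """Essential phrases that appear after rendering but not in the raw HTML."""
    source_text = visible_text(source_html)
    rendered_text = visible_text(rendered_html)
    return [
        phrase
        for phrase in essential
        if normalize(phrase) in rendered_text and normalize(phrase) not in source_text
    ]


if __name__ == "__main__":
    raw = '<div id="root"></div><script src="/app.js"></script>'
    dom = '<div id="root"><h1>Blue Widget</h1><p>Price: 19 EUR</p></div>'
    print(rendered_only(raw, dom, ["Blue Widget", "Price: 19 EUR"]))
```

Any phrase returned here depends entirely on JavaScript execution, so it is a candidate for server-side rendering or an HTML fallback. Plain substring matching is deliberately crude; it will not catch content that is present but hidden with CSS.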

SEO Expert opinion

Does this statement align with what is observed in the field?

Absolutely. Poorly configured JavaScript sites consistently show massive discrepancies between crawled pages and indexed pages. We often see 30 to 60% of content lost, without the technical team initially understanding why.

The catch: Google provides no operational definition of what constitutes a "best practice" or a "thorough test." As a result, everyone applies their own criteria and often misses the essentials. [To be verified]: what timeout thresholds does Googlebot actually apply, and do they vary by site type?

What nuances should be added to this recommendation?

Not all JavaScript sites are created equal. A strictly server-side rendered site poses far fewer problems than a pure client-side SPA. Between the two lies a whole spectrum of hybrid configurations with varying levels of risk.

Let's be honest: Google's "best practices" often lag behind modern frameworks. Next.js 13+ with the App Router and React Server Components are a case in point, yet Google still communicates on foundations designed for Angular 1.x.

In what cases does this rule become secondary?

If your architecture relies on full SSG (100% static generation), you serve plain HTML to the crawler. JavaScript only comes into play afterwards, for interactivity, so Google sees the content immediately without waiting for script execution.

Another case: sites with a solid HTML fallback, where essential content is already present in the source HTML before any JS runs. There, the risks are minimal even if the JavaScript fails.

Practical impact and recommendations

What should be prioritized in an audit of a JavaScript site?

Start by making sure that all critical resources (JS, CSS, polyfills) are accessible to Googlebot: no robots.txt blocking and no 401/403 responses from CDNs. Then compare the source DOM with the DOM after hydration: essential content should already be present in the initial HTML.

Test with JavaScript disabled: what remains? If the answer is "nothing," you are in the red zone. Analyze server logs to spot Googlebot timeouts, intermittent 5xx errors, and API requests that fail on the crawl side.

What technical errors systematically block indexing?

Unmanaged timeouts: if your API calls take 8-10 seconds, Googlebot is likely to give up before rendering finishes. Critical external dependencies (Google Fonts, analytics) that delay rendering: if they are blocked, the whole render can collapse.

Poorly initialized JavaScript states: content that only appears on user-triggered events (scroll, click), infinite scroll without HTML pagination, lazy-loading without an SSR fallback. Google does not simulate these interactions.

How can you implement reliable monitoring of JavaScript rendering?

Deploy synthetic monitoring that crawls your site the way Googlebot does, under the same constraints (user-agent, timeout, no interactions). Always compare the results with Search Console data: any significant deviation is a warning sign.

Set up automatic alerts on critical metrics: the crawled-to-indexed page ratio, 5xx errors, API timeouts, and Core Web Vitals at P75. Above all, test after every major deployment, not just once a quarter.
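The robots.txt part of the first audit step can be partly automated. A minimal sketch, assuming you have the robots.txt content as a string and a list of the JS/CSS paths your pages load (the function name and inputs are hypothetical); it relies on Python's standard `urllib.robotparser` to list the resources disallowed for Googlebot.

```python
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt: str, site_root: str, resources: list[str],
                      user_agent: str = "Googlebot") -> list[str]:
    """Return the resource paths that robots.txt disallows for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from a string, no network access
    return [
        path
        for path in resources
        if not parser.can_fetch(user_agent, urljoin(site_root, path))
    ]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /static/js/\n"
    critical = ["/static/js/app.js", "/static/css/main.css", "/polyfills.js"]
    print(blocked_resources(robots, "https://www.example.com/", critical))
```

Treat a clean result as a first pass only. `urllib.robotparser` applies the first matching rule and does not support `*` or `$` wildcards inside paths, whereas Google applies the most specific matching rule and does support them. It also says nothing about 401/403 responses from a CDN, which you can only detect by actually requesting each file.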
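For the log analysis recommended above, a short script can surface the 5xx errors and slow responses that Googlebot actually received. This is a sketch under explicit assumptions: logs use the combined log format with the request duration in seconds appended as a last field (for example, nginx with `$request_time` added to a custom `log_format`), and the 5-second `slow_after_s` threshold is a hypothetical value, not a documented Googlebot limit.

```python
import re
from collections import Counter

# Combined log format, optionally followed by a request time in seconds
# (assumption: a custom log format that appends the duration).
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
    r'(?: (?P<seconds>\d+(?:\.\d+)?))?$'
)


def googlebot_problems(lines, slow_after_s: float = 5.0):
    """Count 5xx responses and slow responses served to Googlebot, per path."""
    errors: Counter = Counter()
    slow: Counter = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if match is None or "Googlebot" not in match["agent"]:
            continue  # unparsable line, or not a (claimed) Googlebot request
        if match["status"].startswith("5"):
            errors[match["path"]] += 1
        seconds = match["seconds"]
        if seconds is not None and float(seconds) >= slow_after_s:
            slow[match["path"]] += 1
    return errors, slow
```

Filtering on the user-agent string only catches requests that claim to be Googlebot. Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup, so a production version would add that check before trusting the counts.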
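The alerting rules described in the monitoring section can be expressed as simple threshold checks. A minimal sketch, assuming a hypothetical `CrawlSnapshot` assembled from your own sources (crawl counts, Search Console exports, field LCP samples). The index-share and 5xx-rate thresholds are illustrative assumptions; 2,500 ms is Google's published "good" limit for LCP at the 75th percentile.

```python
import math
from dataclasses import dataclass, field


@dataclass
class CrawlSnapshot:
    """Hypothetical periodic metrics gathered from logs, Search Console and RUM."""
    crawled_pages: int
    indexed_pages: int
    googlebot_requests: int
    googlebot_5xx: int
    lcp_ms: list[float] = field(default_factory=list)  # field LCP samples in ms


def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    if not samples:
        raise ValueError("p75() needs at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def check_alerts(snap: CrawlSnapshot,
                 min_indexed_share: float = 0.8,  # assumed threshold
                 max_5xx_rate: float = 0.01,      # assumed threshold
                 max_lcp_p75_ms: float = 2500.0   # "good" LCP limit at P75
                 ) -> list[str]:
    """Return the names of the metrics that crossed their threshold."""
    alerts = []
    if snap.crawled_pages and snap.indexed_pages / snap.crawled_pages < min_indexed_share:
        alerts.append("indexed_share")
    if snap.googlebot_requests and snap.googlebot_5xx / snap.googlebot_requests > max_5xx_rate:
        alerts.append("googlebot_5xx_rate")
    if snap.lcp_ms and p75(snap.lcp_ms) > max_lcp_p75_ms:
        alerts.append("lcp_p75")
    return alerts
```

Running such a check after each deployment, rather than on a fixed quarterly schedule, matches the recommendation above. API timeout counts could be added as a fourth rule in the same way.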

❓ Frequently Asked Questions

Are Google's tools (Search Console, the Mobile-Friendly Test) enough to validate my JavaScript configuration?

Does SSR (Server-Side Rendering) automatically solve every JavaScript indexing problem?

How often should a site's JavaScript configuration be tested?

Does Google penalize JavaScript sites compared to classic HTML sites?

Which JavaScript frameworks cause Google the fewest problems?


Source: Google Search Central video published on 29/12/2021.
