Official statement

Other statements from this video (8)

- Does Google really support JavaScript for SEO, or is it an illusion?

- Does JavaScript really slow down your site's indexing?

- Should you really drop JavaScript in favor of SSR for SEO?

- Why is your site's JavaScript configuration a critical checkpoint for Google?

- Do you really need to master Chrome DevTools to do technical SEO?

- Do you really need to master how browsers work to do technical SEO?

- Should you really rely solely on Google's official documentation?

- Why should traffic never be your only SEO metric?

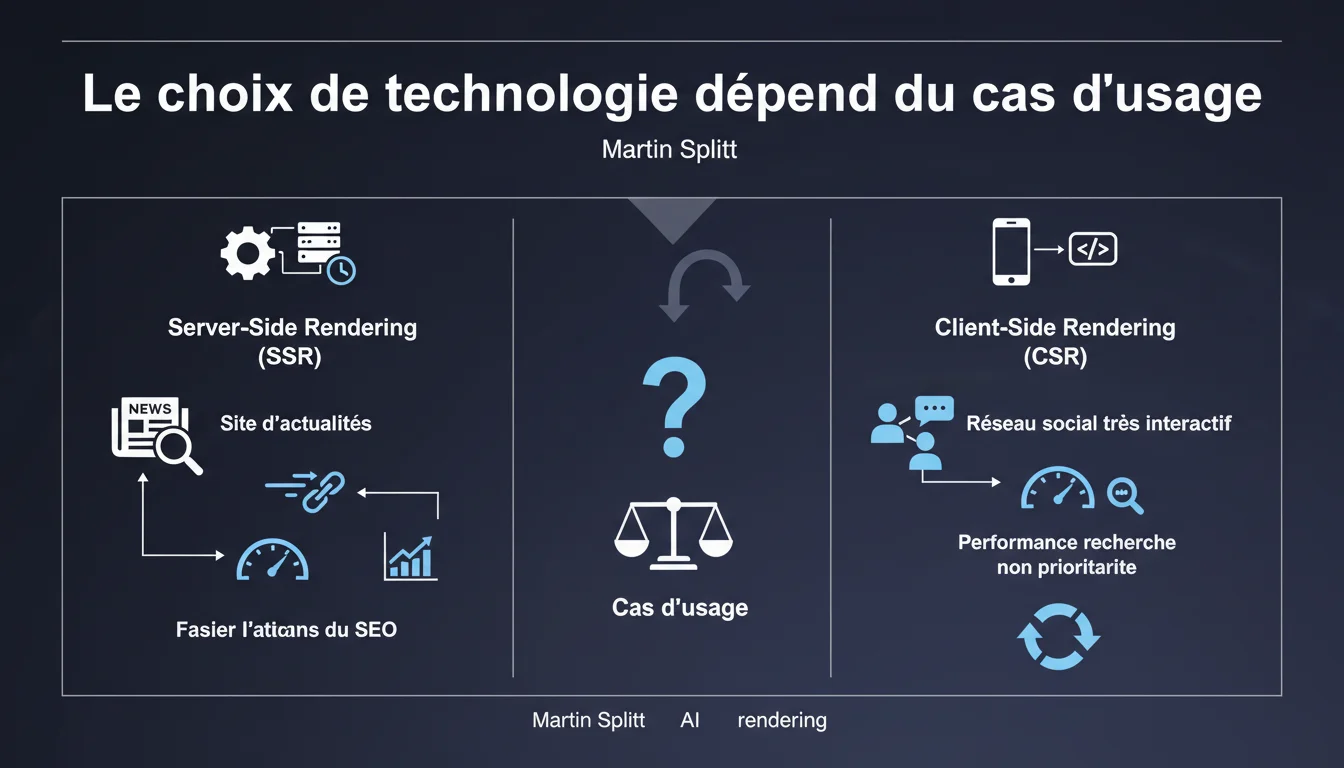

Martin Splitt argues that the choice between SSR and CSR should depend on the use case: SSR is recommended for news sites, while CSR is tolerated for social networks where SEO performance is not a priority. This position dangerously oversimplifies the technical reality and ignores the nuances of the modern JavaScript ecosystem.

What you need to understand

What exactly does Google say about the SSR vs CSR choice?

Martin Splitt proposes an apparently simple principle: adapt the rendering strategy according to the project's nature. For a news site that relies heavily on organic traffic, SSR (Server-Side Rendering) remains the official recommendation. For a social network where interaction is prioritized over discoverability, CSR (Client-Side Rendering) becomes acceptable.

This distinction is based on the idea that not all sites have the same business objectives. A media outlet lives off its SEO. A social network thrives on its captive user base — SEO plays a marginal role compared to the real-time experience.

Why does Google maintain this differentiation?

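Because Googlebot does execute JavaScript, but with limitations that have never been fully lifted. Content that only appears after JavaScript runs has to go through a separate rendering step before Google can index it, and crawl budget, rendering time, and dependence on external resources (scripts, API calls) add friction that penalizes pure CSR sites.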

By recommending SSR for critical content, Google indirectly admits that its infrastructure still favors static HTML: it is cheaper to crawl, faster to index, and more reliable at delivering content.
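The difference Google cares about is visible in the very first HTTP response. The minimal Python sketch below, which is not tied to any framework, contrasts a server-rendered page with a CSR shell and checks whether the article text is present before any JavaScript runs. The article data and the `render_ssr`, `render_csr_shell`, and `in_initial_html` names are hypothetical, and the substring check only approximates a crawler reading raw HTML; Googlebot does execute JavaScript, but only in a later rendering step.

```python
import html

# Hypothetical article; in a real site this would come from a CMS or database.
ARTICLE = {"title": "Election results", "body": "Turnout reached a record high."}


def render_ssr(article: dict) -> str:
    """Server-side rendering: the content is already in the HTML response."""
    title = html.escape(article["title"])
    body = html.escape(article["body"])
    return (
        f"<!doctype html><html><head><title>{title}</title></head><body>"
        f"<h1>{title}</h1><p>{body}</p>"
        '<script src="/hydrate.js"></script>'  # hypothetical hydration bundle
        "</body></html>"
    )


def render_csr_shell() -> str:
    """Client-side rendering: an empty shell that a script fills in later."""
    return (
        "<!doctype html><html><head><title>Loading</title></head><body>"
        '<div id="root"></div>'
        '<script src="/app.js"></script>'  # hypothetical bundle that fetches the article
        "</body></html>"
    )


def in_initial_html(response_html: str, phrase: str) -> bool:
    """Crude check: is the phrase present before any JavaScript executes?"""
    return phrase in response_html


for label, page in (("SSR", render_ssr(ARTICLE)), ("CSR", render_csr_shell())):
    print(label, in_initial_html(page, ARTICLE["body"]))
# SSR True
# CSR False
```

With CSR, the article only exists once `/app.js` has run, which is exactly the step Google has to queue and pay for.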

Does this approach truly reflect real-world usage?

Not entirely. The SSR/CSR dichotomy is outdated: it ignores the hybrid architectures that now dominate, such as initial SSR followed by client-side hydration, incremental static regeneration (ISR), and streaming SSR. Next.js, Nuxt, and SvelteKit have made this binary distinction obsolete.

A modern news site can perfectly well serve articles with SSR while keeping CSR for comment sections or personalized modules. Splitt's framing simplifies a technical landscape that has become significantly more complex.
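Of these hybrid modes, incremental static regeneration is the least intuitive. The Python class below is a simplified model of the idea, not Next.js's implementation: a page is generated once and served from cache, and once it is older than `revalidate_s` seconds the next request still receives the stale copy while a fresh version is built for later visitors. Real frameworks do that regeneration in the background; here it runs inline to keep the sketch short. The class name and its parameters are hypothetical.

```python
import time
from typing import Callable


class RevalidatingPageCache:
    """Simplified ISR model: serve cached pages, regenerate them once stale."""

    def __init__(self, render: Callable[[str], str], revalidate_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._render = render              # function that builds a page's HTML
        self._revalidate_s = revalidate_s  # max age before regeneration
        self._clock = clock                # injectable for testing
        self._pages: dict[str, tuple[float, str]] = {}

    def get(self, path: str) -> str:
        now = self._clock()
        cached = self._pages.get(path)
        if cached is None:
            # First request: generate the page on demand and cache it.
            html = self._render(path)
            self._pages[path] = (now, html)
            return html
        generated_at, html = cached
        if now - generated_at >= self._revalidate_s:
            # Stale: this visitor still gets the old copy, but the page is
            # rebuilt for the next ones (in the background, in real frameworks).
            self._pages[path] = (now, self._render(path))
        return html


# Usage with a fake clock so the timeline is deterministic.
fake_now = [0.0]
builds: list[str] = []


def render(path: str) -> str:
    builds.append(path)
    return f"<h1>{path} v{len(builds)}</h1>"


cache = RevalidatingPageCache(render, revalidate_s=60, clock=lambda: fake_now[0])
print(cache.get("/news/a"))  # <h1>/news/a v1</h1>  (generated)
fake_now[0] = 30
print(cache.get("/news/a"))  # <h1>/news/a v1</h1>  (fresh, from cache)
fake_now[0] = 90
print(cache.get("/news/a"))  # <h1>/news/a v1</h1>  (stale copy served, v2 built)
print(cache.get("/news/a"))  # <h1>/news/a v2</h1>
```

From a crawler's point of view every response is complete HTML, which is why ISR pages behave like static pages for indexing while staying reasonably fresh.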

- SSR recommended for critical indexable content (news, e-commerce, institutional)

- CSR tolerated for applications where SEO is not a primary driver (dashboards, closed social networks)

- Hybrid architectures not mentioned, even though they represent the majority of modern projects

- Crawl performance remains the decisive criterion for Google, even if Googlebot executes JavaScript

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Yes and no. Google's preference for SSR on content-driven sites is consistent with repeated tests: time-to-index is generally shorter when the content is already in the static HTML. No surprise there.

But — and this is where it gets tricky — claiming that a social network can afford pure CSR because "search performance is not a priority" reflects a binary view that fails to account for the actual SEO ambitions of these platforms. LinkedIn, Twitter (X), and Pinterest are heavily investing in SSR for their public pages. Why? Because organic traffic matters, even for them.

What nuances is Splitt purposely omitting?

First omission: the impact of Core Web Vitals. A poorly optimized CSR site tends to score badly on LCP, CLS, and INP, so the problem is no longer limited to crawling; it becomes a ranking problem as well. Splitt doesn't mention this.
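Google publishes fixed "good" and "poor" boundaries for each Core Web Vital, assessed at the 75th percentile of real-user page loads: LCP is good at or below 2.5 s and poor above 4 s, INP good at or below 200 ms and poor above 500 ms, CLS good at or below 0.1 and poor above 0.25. The sketch below rates a set of field samples against those boundaries. The sample values are invented for illustration, and the nearest-rank percentile is a simplification of how tools such as CrUX aggregate data.

```python
import math

# (good upper bound, poor lower bound) per metric.
# LCP and INP are in milliseconds; CLS is unitless.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, samples: list[float]) -> str:
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


field_samples = {  # invented real-user measurements for one URL
    "LCP": [1800, 2100, 2600, 3900, 4200, 5100, 2300, 2900],
    "INP": [120, 180, 90, 650, 240, 150, 170, 110],
    "CLS": [0.02, 0.31, 0.05, 0.12, 0.28, 0.4, 0.01, 0.3],
}
for metric, samples in field_samples.items():
    print(metric, p75(samples), rate(metric, samples))
# LCP 3900 needs improvement
# INP 180 good
# CLS 0.3 poor
```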

Second omission: the actual cost of server-side rendering at scale. SSR means extra CPU load, added response latency, and more complex infrastructure. For a small media outlet this is manageable; for a high-traffic site it becomes a major architectural challenge. [To be verified]: does Google factor the resulting server response times into its ranking signals?
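To see why SSR cost grows with traffic, a back-of-the-envelope estimate helps. The sketch assumes rendering is CPU-bound, that each uncached render occupies one core for `render_ms` milliseconds, and that you want cores to run at about 60% utilization; the function name, that default, and every number in the usage lines are hypothetical. A cache in front of the renderer (CDN, static generation, ISR) cuts the load in proportion to its hit ratio.

```python
import math


def ssr_cores_needed(peak_rps: float, render_ms: float, cache_hit_ratio: float,
                     target_utilization: float = 0.6) -> int:
    """Rough count of CPU cores needed to server-render uncached requests at peak."""
    uncached_rps = peak_rps * (1 - cache_hit_ratio)
    # Core-seconds of rendering work generated per second of traffic.
    busy_cores = uncached_rps * render_ms / 1000
    # Leave headroom so cores are not saturated at peak.
    return math.ceil(busy_cores / target_utilization)


print(ssr_cores_needed(20, 50, 0.0))    # 2    (small outlet, no cache)
print(ssr_cores_needed(2000, 50, 0.0))  # 167  (high traffic, no cache)
print(ssr_cores_needed(2000, 50, 0.9))  # 17   (high traffic, 90% cache hits)
```

The point is the scaling: cost grows linearly with uncached traffic, so for large sites caching strategy matters as much as the SSR/CSR choice itself.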

Third blind spot: modern stacks allow conditional SSR served only to crawlers such as Googlebot (what Google's documentation calls dynamic rendering) or static pre-rendering at build time. These approaches work around the problem, yet Splitt acts as if they did not exist.
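For reference, the crawler-only variant boils down to a user-agent switch. Google's documentation calls this dynamic rendering and describes it as a workaround rather than a long-term solution, recommending server-side rendering, static rendering, or hydration instead. The sketch below is hypothetical (function names, bot list, snapshot store); note that the User-Agent header is trivially spoofed, which is why Google documents verifying Googlebot through reverse DNS lookups.

```python
# Substrings identifying a few well-known crawlers in the User-Agent header.
# Illustrative list only: this header is easy to spoof, so real setups also
# verify the requesting IP (e.g. via reverse DNS for Googlebot).
BOT_MARKERS = ("googlebot", "bingbot")


def is_known_crawler(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(marker in ua for marker in BOT_MARKERS)


def choose_response(path: str, user_agent: str,
                    snapshots: dict[str, str], csr_shell: str) -> str:
    """Dynamic rendering: crawlers get a prerendered snapshot, users get the CSR app."""
    if is_known_crawler(user_agent) and path in snapshots:
        return snapshots[path]
    # Human visitors, and any path without a snapshot, fall back to the CSR shell.
    return csr_shell


SNAPSHOTS = {"/profile/ada": "<h1>Ada Lovelace</h1><p>Mathematician</p>"}
SHELL = '<div id="root"></div><script src="/app.js"></script>'
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"

print(choose_response("/profile/ada", GOOGLEBOT_UA, SNAPSHOTS, SHELL))  # snapshot HTML
print(choose_response("/profile/ada", BROWSER_UA, SNAPSHOTS, SHELL))    # CSR shell
```

Maintaining two rendering paths is the main cost of this pattern: the snapshot and the live app can drift apart.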

In what cases does this recommendation not apply?

Concretely? Whenever you step outside the caricatured "news site vs. social network" framing. A B2B SaaS with a strong SEO blog needs SSR for the blog but can keep CSR for the application itself. An e-commerce site may want SSR for product pages and CSR for the cart and checkout.

The real question is never "SSR or CSR?". It’s "which part of my site needs to be crawlable immediately?". Everything else is architecture.

Practical impact and recommendations

What should you actually do with this information?

Map your site into two categories: critical SEO content (product pages, articles, landing pages) and interactive features (filters, comparison tools, member areas). The former should be server-rendered or pre-rendered; the latter can remain in CSR.
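That two-way mapping can be written as a small decision function. The `Page` fields, and the rule that indexable pages updated less than once a day are prebuilt (SSG) while the rest are rendered per request (SSR), are assumptions for illustration rather than thresholds from Google; adjust them to your own site.

```python
from dataclasses import dataclass


@dataclass
class Page:
    path: str
    should_rank: bool        # meant to attract organic traffic?
    behind_login: bool       # e.g. member area, dashboard, checkout
    updates_per_day: float   # rough content update frequency


def rendering_mode(page: Page) -> str:
    # Interactive or private features: no SEO stake, CSR is acceptable.
    if page.behind_login or not page.should_rank:
        return "CSR"
    # Critical SEO content: prebuild what rarely changes (arbitrary threshold)...
    if page.updates_per_day < 1:
        return "SSG"
    # ...and render frequently changing content on each request.
    return "SSR"


pages = [
    Page("/blog/ssr-vs-csr", should_rank=True, behind_login=False, updates_per_day=0.1),
    Page("/news/live", should_rank=True, behind_login=False, updates_per_day=48),
    Page("/compare", should_rank=False, behind_login=False, updates_per_day=0),
    Page("/account", should_rank=False, behind_login=True, updates_per_day=0),
]
for p in pages:
    print(p.path, rendering_mode(p))
# /blog/ssr-vs-csr SSG
# /news/live SSR
# /compare CSR
# /account CSR
```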

If you use a modern framework (Next.js, Nuxt, Astro), render on the server by default (SSR or static generation) and switch to CSR only for components that justify it. This is the safest approach, and the one Google prefers without openly saying so.

What mistakes should you absolutely avoid?

Don’t tell yourself "we’re a social network, so CSR is fine". Unless you really are Facebook or LinkedIn, you need organic traffic, and that traffic arrives through publicly indexable pages, which should therefore be server-rendered.

Also, avoid reading this statement as a blanket endorsement of CSR. Splitt is describing specific use cases. He does not say "CSR works just as well as SSR for Google"; he says "in certain contexts, CSR is tolerated".

How can you verify that your rendering strategy is appropriate?

Test actual indexing. Compare the raw HTML your server sends (view-source or curl) with the rendered HTML Googlebot sees after executing JavaScript, which the URL Inspection tool in Search Console displays for the crawled page and in its live test. If there is a significant gap, you have a problem.
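Once you have both versions, a rough measure of the gap is the share of words in the rendered HTML that are missing from the raw response. The sketch below uses only Python's standard `html.parser`; the function names and sample pages are hypothetical, a word-set comparison is crude (it ignores word order and repetition), and what counts as a "significant" ratio is your judgment, not a Google threshold.

```python
from html.parser import HTMLParser


class _TextCollector(HTMLParser):
    """Collects visible words, ignoring <script>, <style> and <noscript> content."""
    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self._skipping = 0
        self.words: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skipping += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skipping:
            self._skipping -= 1

    def handle_data(self, data):
        if not self._skipping:
            self.words.update(w.lower() for w in data.split())


def words_in(html_doc: str) -> set[str]:
    collector = _TextCollector()
    collector.feed(html_doc)
    collector.close()
    return collector.words


def js_dependency_ratio(raw_html: str, rendered_html: str) -> float:
    """Share of rendered words that are absent from the raw server response."""
    rendered = words_in(rendered_html)
    if not rendered:
        return 0.0
    missing = rendered - words_in(raw_html)
    return len(missing) / len(rendered)


raw = ('<html><head><title>Shop</title></head><body>'
       '<div id="root"></div><script>boot()</script></body></html>')
rendered = ('<html><head><title>Shop</title></head><body><div id="root">'
            '<h1>Blue running shoes</h1><p>In stock</p></div></body></html>')
print(round(js_dependency_ratio(raw, rendered), 2))  # 0.83
```

A ratio close to 0 means the server already ships the content; a ratio close to 1, as here, means almost everything depends on JavaScript execution.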

Also monitor time-to-index for new pages. A well-configured SSR site often sees its pages indexed within hours, while a CSR site may wait several days; a persistent delay of that kind suggests Googlebot is struggling with your JavaScript.
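Time-to-index is easy to track yourself if you record, for each new URL, when it was published and when it was first confirmed as indexed (for example through your own periodic URL Inspection checks). The log entries below are invented; the sketch groups URLs by top-level section and reports the median delay in hours, which makes a slow CSR section stand out against server-rendered ones.

```python
from datetime import datetime
from statistics import median

# Hypothetical log: (URL path, published at, first seen indexed at), ISO 8601.
LOG = [
    ("/news/a", "2024-03-01T08:00", "2024-03-01T11:00"),
    ("/news/b", "2024-03-01T09:00", "2024-03-01T15:00"),
    ("/app/c", "2024-03-01T09:00", "2024-03-04T09:00"),
]


def hours_to_index(published: str, indexed: str) -> float:
    delta = datetime.fromisoformat(indexed) - datetime.fromisoformat(published)
    return delta.total_seconds() / 3600


def median_hours_by_section(log: list[tuple[str, str, str]]) -> dict[str, float]:
    by_section: dict[str, list[float]] = {}
    for path, published, indexed in log:
        section = "/" + path.strip("/").split("/")[0]  # "/news/a" -> "/news"
        by_section.setdefault(section, []).append(hours_to_index(published, indexed))
    return {section: median(hours) for section, hours in by_section.items()}


print(median_hours_by_section(LOG))  # {'/news': 4.5, '/app': 72.0}
```

The median is used rather than the mean so that a single outlier URL does not dominate the picture.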

- Identify pages with high SEO stakes and render them with SSR or SSG (Static Site Generation)

- Keep CSR for non-indexable functional areas (dashboards, advanced filters, private areas)

- Systematically test the indexing of new critical pages with the URL Inspection tool in Search Console

- Measure Core Web Vitals on real-user (field) data: a poorly optimized CSR setup harms LCP and INP

- If you use a hybrid framework, configure rendering rules by route (SSR for /blog, CSR for /app)

- Monitor crawl budget: a CSR site generally consumes more of Googlebot's resources than an equivalent SSR site
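Each framework has its own mechanism for per-route rendering rules (Nuxt 3, for instance, exposes a `routeRules` option), so rather than reproduce one framework's exact syntax, here is a framework-neutral sketch of the underlying logic: the longest matching path prefix decides the rendering mode. The rule table is hypothetical and mirrors the "SSR for /blog, CSR for /app" example; falling back to SSR for unmatched paths is an assumption.

```python
# Hypothetical rendering rules keyed by path prefix.
RULES = {
    "/": "SSR",
    "/blog": "SSR",
    "/products": "SSR",
    "/app": "CSR",
    "/checkout": "CSR",
}


def mode_for(path: str, rules: dict[str, str] = RULES) -> str:
    """Return the rendering mode of the longest prefix that matches the path."""
    best = None
    for prefix in rules:
        # Match whole path segments: "/app" covers "/app/settings" but not "/apple".
        if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return rules[best] if best is not None else "SSR"  # assumed default


for path in ("/blog/ssr-vs-csr", "/app/settings", "/apple", "/checkout"):
    print(path, mode_for(path))
# /blog/ssr-vs-csr SSR
# /app/settings CSR
# /apple SSR
# /checkout CSR
```

Longest-prefix matching keeps the table short: a broad default at "/" plus a few explicit exceptions for interactive areas.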

❓ Frequently Asked Questions

Does Googlebot index CSR sites as well as SSR sites?

Can an e-commerce site use CSR for its product pages?

Which frameworks make it easy to mix SSR and CSR?

Is static pre-rendering equivalent to SSR for Google?

How can I tell whether my CSR setup is causing indexing problems?


Other SEO insights extracted from this same Google Search Central video · published on 29/12/2021

