Official statement

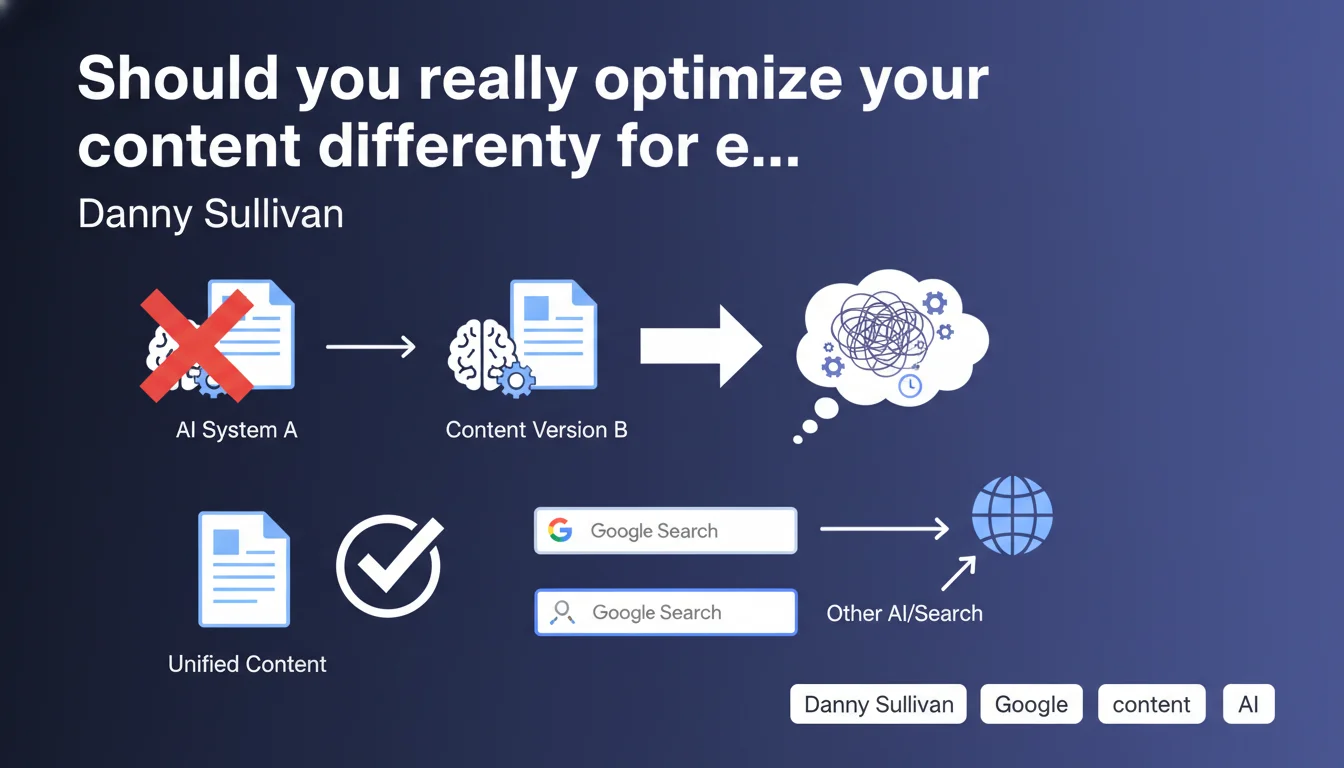

Google formally advises against creating multiple content versions tailored to different AI systems or platforms. This strategy unnecessarily complicates editorial management and becomes counterproductive as algorithms evolve. Focus on a single high-quality piece of content rather than technical variations.

What you need to understand

Why is Google taking a stand against multiple versions?

The proliferation of AI systems (ChatGPT, Perplexity, Gemini, Claude, not to mention Google Search variants) could tempt some teams to create versions optimized specifically for each platform: one page for featured snippets, another for AI Overviews (formerly SGE), yet another for voice assistants.

Google pushes back on this trend. The reason? Systems evolve constantly. What works today for a given model may be obsolete in three months, and you end up maintaining five versions of the same content, four of which are already outdated.

Does this directive really apply to all formats?

The nuance matters. Google isn't saying to ignore structured formats: schema.org structured data and properly marked-up FAQs remain relevant. What's targeted is the creation of distinct, redundant content meant to "speak" differently to each AI.

A long-form article remains a long-form article. Whether Googlebot, GPT-4, or another system crawls it, the substance doesn't change. Adaptation should happen at the technical level (markup, structure), not at the editorial level by duplicating content.
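As a rough illustration of adapting at the markup level rather than the editorial level, the sketch below builds a schema.org `FAQPage` JSON-LD block for a single page. The helper names `faq_jsonld` and `as_script_tag` are hypothetical, and the question and answer strings are placeholders; only the `FAQPage`, `Question`, and `Answer` types come from schema.org.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize the object into a JSON-LD script tag for the page HTML."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "<" so an answer containing "</script>" cannot close the tag early.
    payload = payload.replace("<", "\\u003c")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


if __name__ == "__main__":
    # Placeholder content, not taken from the article.
    faq = faq_jsonld([
        ("Should I publish one page per AI system?",
         "No. Keep one canonical page and describe it with markup."),
    ])
    print(as_script_tag(faq))
```

The same article body serves every crawler; only this markup layer is added on top of it.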

What are the concrete risks of this multi-version approach?

First pitfall: cannibalization. When multiple URLs carry nearly identical, slightly reformulated content, they compete with each other and your ranking signals are diluted. Duplicate content is still a problem.

Second problem: maintenance becomes a nightmare. Every update must be replicated across all versions. One mistake on any of them, and you have contradictory information indexed.

- Avoid multiplying URLs for the same topic under the guise of AI optimization

- Prioritize unique, structured content over technical variations

- Structured data is sufficient to make your content usable by different systems

- Maintenance effort grows with every additional version, since each update must be replicated everywhere

- Algorithms change too quickly for hyper-targeted optimizations to remain relevant

SEO Expert opinion

Is this position consistent with what we observe in practice?

Yes and no. Google is fundamentally right: multiplying versions is a dead end. But in practice, we observe that certain format adjustments do work well. A structured FAQ page performs differently from a long-form article, even if the topic is identical.

Sullivan's real message is: don't create five distinct URLs. But nothing prevents you from having multiple sections within a single page: a TL;DR for featured snippets, extended development for semantic depth, an FAQ for direct questions. All on a single canonical URL.

What nuances should be added to this directive?

The statement clearly targets over-optimization: sites that automatically generate content variants "for Gemini", "for ChatGPT", etc. That's spam in disguise, and Google hits hard there.

Conversely, adapting the internal structure of a page based on target queries remains relevant. An article can contain an executive summary, detailed sections, and comparison tables, each element serving a different intent without fragmenting the content.

In what cases might this rule have exceptions?

Multilingual sites, for starters. But that's about language versions, not "AI-optimized" versions. Each language gets its own URL and its own hreflang annotation, which is a different matter.
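For the multilingual case, a minimal sketch of generating hreflang annotations for one article might look like this. The function name `hreflang_links` and the `example.com` URLs are assumptions made for illustration; the `rel="alternate"` / `hreflang` attributes and the `x-default` value are the standard ones.

```python
from html import escape


def hreflang_links(alternates, x_default=None):
    """Return <link> tags for each language version of one article.

    alternates: dict mapping a language code (e.g. "en") to an absolute URL.
    x_default: optional fallback URL for users matching no listed language.
    """
    links = [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}" />'
        for lang, url in sorted(alternates.items())
    ]
    if x_default:
        links.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return links


if __name__ == "__main__":
    # Hypothetical URLs: one URL per language, not one URL per AI system.
    for tag in hreflang_links(
        {"en": "https://example.com/en/guide", "fr": "https://example.com/fr/guide"},
        x_default="https://example.com/en/guide",
    ):
        print(tag)
```

Every language version should emit the same full set of tags, including a reference to itself.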

Another edge case: radically different formats that deliver a distinct user experience. A mobile AMP version (even if less common today) or a PWA isn't a "version for AI". These are technical implementations, not editorial variations, and that distinction is critical.

Practical impact and recommendations

What should you concretely do with this directive?

Audit your existing content. If you've created separate pages explicitly targeting different AI systems, consolidate. One main URL, well-structured, with all semantic variations built into it.

Focus your efforts on structured markup rather than multiplying content. An article rich in schema.org, with clearly delimited sections (proper headers, lists, tables), will be usable by all systems — present and future.

What mistakes should you absolutely avoid?

Don't fall into the trap of "content generated for SGE" vs "content generated for classic Search". That's exactly what Google condemns here. Good content is good content, period. Systems adapt, not the other way around.

Also avoid creating satellite pages "optimized for AI" with slightly reformulated auto-generated content. Google detects these patterns and, let's be honest, they smell like spam from a mile away. You'll waste more time maintaining this mess than producing real in-depth content.

- Consolidate redundant content on a single strong canonical URL

- Enrich your pages with structured data (schema.org, FAQ, HowTo)

- Structure your content with clear sections addressing different intents

- Remove or redirect "optimized for X system" versions if they exist

- Prioritize semantic depth on a single page rather than fragmentation

- Maintain simple architecture: fewer URLs, more substance per page
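The consolidation step in the checklist above can be sketched as a redirect map from retired variants to their canonical page. This is only an illustration: `build_redirects` and the paths are hypothetical, and how the resulting map is turned into 301 rules depends on your server or CMS.

```python
def build_redirects(groups):
    """Map each retired variant path to its canonical path.

    groups: dict mapping a canonical path to the list of variant paths
    that should be redirected to it.
    Raises ValueError on conflicting targets or redirect chains.
    """
    redirects = {}
    for canonical, variants in groups.items():
        for variant in variants:
            if variant == canonical:
                continue  # never redirect a page to itself
            if redirects.get(variant, canonical) != canonical:
                raise ValueError(f"{variant} is assigned to two canonical pages")
            redirects[variant] = canonical

    # A target that is itself redirected would create a chain of hops.
    for source, target in redirects.items():
        if target in redirects:
            raise ValueError(
                f"redirect chain: {source} -> {target} -> {redirects[target]}"
            )
    return redirects


if __name__ == "__main__":
    # Hypothetical paths for "optimized for X system" variants.
    plan = build_redirects(
        {"/guide": ["/guide-for-chatgpt", "/guide-for-gemini"]}
    )
    for source, target in plan.items():
        print(f"301 {source} -> {target}")
```

Rejecting chains up front keeps every retired URL one hop away from the page that replaces it.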

How can you ensure your strategy is aligned?

Test the coherence of your site architecture. If you have multiple URLs covering the same topic with only slightly different angles, you're probably off track. The rule: one search intent = one primary URL.
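One way to check the "one search intent = one primary URL" rule is to list every intent served by more than one URL. The sketch assumes you already maintain a mapping of URLs to target intents (for example in a content inventory spreadsheet); the function name and the sample data are hypothetical.

```python
from collections import defaultdict


def intent_conflicts(pages):
    """Return intents that are targeted by more than one URL.

    pages: iterable of (url, intent) pairs.
    Intents are compared case-insensitively, ignoring surrounding spaces.
    """
    by_intent = defaultdict(set)
    for url, intent in pages:
        by_intent[intent.strip().lower()].add(url)
    return {
        intent: sorted(urls)
        for intent, urls in by_intent.items()
        if len(urls) > 1
    }


if __name__ == "__main__":
    inventory = [
        ("/guide", "how to structure content"),
        ("/guide-for-gemini", "How to structure content"),
        ("/pricing", "pricing"),
    ]
    for intent, urls in intent_conflicts(inventory).items():
        print(f"{intent}: {urls}")
```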

Use your crawl tools to identify clusters of similar content. If you see groups of pages with 80% semantic similarity, that's an alarm bell. Merge, enrich, redirect.
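If your crawl tool does not report similarity directly, a rough approximation can be scripted. The sketch below uses Jaccard similarity over word shingles, which measures lexical overlap and is only a proxy for the "semantic similarity" mentioned above; the 0.8 threshold mirrors the 80% figure, and all function names and URLs are hypothetical.

```python
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word sequences found in the text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Share of shingles common to both sets (0.0 when both are empty)."""
    if not (a or b):
        return 0.0
    return len(a & b) / len(a | b)


def similar_clusters(pages, threshold=0.8, k=3):
    """Group URLs whose texts overlap at or above the threshold.

    pages: dict mapping URL to extracted page text.
    Grouping is transitive: if A matches B and B matches C, all three
    land in the same cluster even if A and C fall below the threshold.
    """
    sets = {url: shingles(text, k) for url, text in pages.items()}
    parent = {url: url for url in pages}

    def find(url):
        # Follow parent links to the cluster root, compressing the path.
        while parent[url] != url:
            parent[url] = parent[parent[url]]
            url = parent[url]
        return url

    for a, b in combinations(pages, 2):
        if jaccard(sets[a], sets[b]) >= threshold:
            parent[find(a)] = find(b)

    clusters = {}
    for url in pages:
        clusters.setdefault(find(url), []).append(url)
    return sorted(sorted(c) for c in clusters.values() if len(c) > 1)
```

Comparing every pair of pages is quadratic, so this suits a few thousand URLs at most; larger sites would need an approximate method such as MinHash.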

In summary: Google confirms what experienced SEOs already know. Multiplying content versions looks appealing but backfires. Focus on unique, rich pages that are well-structured technically. AIs and search systems will evolve; your in-depth content will remain relevant.

Implementing this approach — consolidating content, redesigning architecture, enriching with structured semantics — can be complex on medium or large sites. These optimizations require a global strategic vision and specialized technical expertise to avoid redirect errors or ranking loss. In these cases, partnering with a specialized SEO agency helps secure the transition and optimize each decision based on your specific context.

Source: Google Search Central video, published on 17/12/2025