Official statement

Other statements from this video (12):

- Should we drop the AEO and GEO acronyms in favor of good old SEO?

- Should you really ignore AI Overviews in your SEO strategy?

- Should we still believe in the "content for humans" mantra in 2025?

- Should you stop optimizing for Google's AI Overviews?

- Is original, authentic content really your best weapon against AI?

- Has basic factual content become useless for SEO?

- Will first-hand content really become a dominant ranking factor?

- Is multimodal content really the key to multiplying your visibility in Google?

- Is structured data really useless for Google's AI?

- Should you stop measuring organic clicks and focus on qualitative conversions?

- Why doesn't your site appear in the AI Overview when it ranks well in classic results?

- Should you optimize your content differently for each AI and search system?

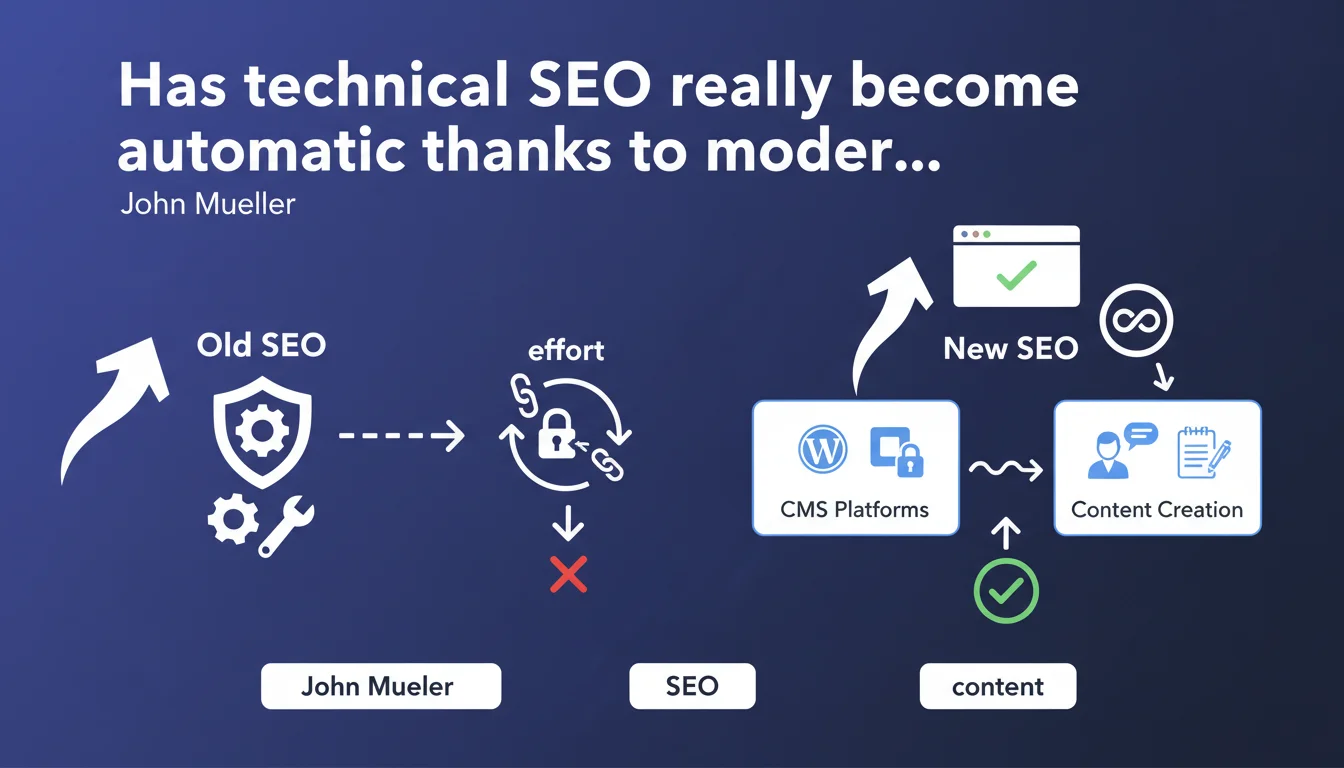

Google claims that popular CMS platforms now automate most technical SEO essentials, freeing creators to focus on content. According to John Mueller, WordPress, Wix and similar platforms handle optimizations that once demanded significant resources. It is a claim worth testing against real-world conditions.

What you need to understand

What exactly does Google say about technical SEO automation?

John Mueller makes a bold assertion: technical aspects of search engine optimization no longer require the time and resource investment they once demanded. According to him, mainstream CMS platforms like WordPress or Wix natively integrate these optimizations.

Concretely, meta tags, URL structure, XML sitemaps, the robots.txt file and basic structured data (everything that made up the technical foundation of a well-optimized site) would now be handled automatically. The stated objective: enable content creators to focus on their core business rather than on SEO plumbing.
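To make "handled automatically" concrete, here is a minimal Python sketch of one of these chores. It builds an XML sitemap in the sitemaps.org format from a list of page URLs, roughly what a CMS or SEO plugin does behind the scenes. The function name and URLs are hypothetical. A real plugin would also add fields such as `lastmod` and split the file once it exceeds the protocol's 50,000-URL limit.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap listing each URL once, in the given order."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    seen = set()
    for url in urls:
        if url in seen:  # skip duplicates so each page appears once
            continue
        seen.add(url)
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    pages = [
        "https://example.com/",
        "https://example.com/blog/first-post",
        "https://example.com/",  # duplicate, dropped
    ]
    print(build_sitemap(pages))
```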

Why is Google communicating about this topic now?

This statement fits into the broader effort to democratize the web that Google has been promoting for several years. By highlighting CMS platforms that simplify technical SEO, Mountain View indirectly encourages creators to get started without technical barriers.

There's also a quality dimension: if webmasters stop struggling with code to concentrate on content, Google bets that overall web quality improves. Fewer technically broken sites, more sites with actual content to index.

What are the direct implications for SEO professionals?

If this vision proves accurate, the profession necessarily evolves. Purely technical skills — those consisting of fixing poorly formatted title tags or manually optimizing crawl budget — would lose their differentiating value.

Conversely, expertise in content strategy, information architecture, user experience, and data analysis would increase in importance. Technical SEO doesn't disappear; it shifts toward more complex issues that CMS platforms cannot resolve alone.

- Modern CMS platforms effectively automate many basic technical aspects of search optimization

- Google pushes this vision to simplify web access and improve the overall quality of indexed content

- The SEO profession refocuses on less automatable expertise: strategy, complex architecture, advanced analysis

- This automation mainly concerns sites of small to medium scale, not complex platforms

SEO Expert opinion

Does this vision really match ground reality?

Let's be honest: for a standard WordPress blog or a typical Shopify store, Mueller isn't wrong. Technical fundamentals are indeed handled correctly by default, and that's considerable progress compared to ten years ago.

But, and here's where it gets tricky, this reality applies only to a fraction of websites. The moment you venture off the beaten path (complex architecture, advanced multilingual setups, heavy JavaScript, migrations, redesigns), automation shows its limits. Out of the box, a CMS doesn't handle site-specific edge cases, crawl budget issues at scale, or the subtleties of canonicalization on e-commerce platforms with faceted filters.
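The faceted-filter problem can be illustrated with a short Python sketch. It collapses filter and tracking variants of a URL onto one canonical form, which is the value you would then emit in `rel="canonical"`. The parameter set `NON_CANONICAL_PARAMS` is a hypothetical example. Deciding which facets deserve their own indexable URL is a per-site call that no CMS default can make for you.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical facet and tracking parameters; each catalog must define its own.
NON_CANONICAL_PARAMS = {"color", "size", "sort", "price_min", "price_max", "utm_source"}


def canonical_url(url: str, drop: set[str] = NON_CANONICAL_PARAMS) -> str:
    """Strip facet parameters and sort the rest so equivalent URLs collapse to one."""
    parts = urlsplit(url)
    kept = sorted(
        (k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k not in drop
    )
    # Lower-case scheme and host; drop the fragment, which crawlers ignore anyway.
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path, urlencode(kept), "")
    )
```

For example, `canonical_url("https://Shop.example.com/shoes?size=42&page=2&color=red")` returns `"https://shop.example.com/shoes?page=2"`. The `page` parameter survives because it is not in the drop set. Google advises against canonicalizing every paginated page to page 1.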

Which technical aspects remain absolutely critical despite this automation?

Even with the best CMS in the world, you'll still need to manually manage: actual loading speed (not just installing a caching plugin), crawl optimization on large sites, subtle duplicate content issues, silo architecture for large catalogs, or complex technical migrations.
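Crawl optimization and silo architecture on large catalogs often start with click depth: the number of clicks separating each page from the home page. The minimal Python sketch below assumes you already have the internal link graph as a dictionary, for example exported from a crawler. It uses breadth-first search to compute depths, then flags pages that are too deep or unreachable. The `max_depth=3` threshold is an illustrative assumption, not a Google rule.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the home page: depth = minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def deep_or_orphaned(links: dict[str, list[str]], home: str, max_depth: int = 3):
    """Pages deeper than max_depth, plus known pages unreachable from home (orphans)."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(p for p, d in depths.items() if d > max_depth)
    orphans = sorted(all_pages - set(depths))
    return too_deep, orphans
```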

And let's talk about Core Web Vitals: no CMS magically optimizes them. You can install WordPress, but it won't fix your Cumulative Layout Shift problems caused by your ad banners or poorly coded theme. Automation stops where personalization begins.
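To see why a theme or ad banner can hurt Core Web Vitals regardless of the CMS, it helps to know how Cumulative Layout Shift is aggregated. Google's web.dev definition works as follows:

- Each shift scores its impact fraction multiplied by its distance fraction.
- Shifts that occur shortly after user input are ignored.
- CLS is the largest "session window" of shifts, where consecutive shifts are less than 1 second apart and each window is capped at 5 seconds.

The Python sketch below reproduces that aggregation. The `LayoutShift` record is a hypothetical stand-in for the browser's Layout Instability API entries (`startTime`, `value`, `hadRecentInput`).

```python
from dataclasses import dataclass


@dataclass
class LayoutShift:
    start_time_ms: float            # when the shift happened
    value: float                    # impact fraction multiplied by distance fraction
    had_recent_input: bool = False  # shifts right after user input are excluded


def cumulative_layout_shift(shifts: list[LayoutShift]) -> float:
    """Largest session-window sum: gap < 1 s between shifts, window capped at 5 s."""
    best = current = 0.0
    window_start = prev_time = None
    for s in sorted(shifts, key=lambda s: s.start_time_ms):
        if s.had_recent_input:
            continue
        if (
            window_start is not None
            and s.start_time_ms - prev_time < 1000
            and s.start_time_ms - window_start < 5000
        ):
            current += s.value  # continue the current session window
        else:
            window_start, current = s.start_time_ms, s.value  # open a new window
        prev_time = s.start_time_ms
        best = max(best, current)
    return best
```

A late-loading banner that pushes content down in a few quick shifts adds them to the same session window, and that single burst can exceed Google's 0.1 "good" threshold.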

Is Google's communication hiding another message?

There may be subtext here: Google would rather not see valuable content held back by a mediocre technical foundation. By encouraging creators to delegate technical aspects to CMS platforms, Mountain View hopes to raise the average technical level of the web.

But this also means SEO becomes more competitive on other dimensions — those where human expertise remains irreplaceable. If everyone has a technically correct site by default, what makes the difference is strategy, competitive analysis, understanding search intent, and creating truly differentiated content.

Practical impact and recommendations

Should you abandon technical SEO audits to focus only on content?

No, obviously not. But you need to adjust priorities based on your context. If you're launching a blog on WordPress with properly configured Yoast SEO, then indeed concentrate on content and authority.

However, if you manage an e-commerce site with 50,000 products, a news site with continuous publication, or a complex multilingual platform, technical SEO remains your number one priority. CMS platforms automate the basics, not the optimizations that drive growth at scale.

What mistakes should you avoid in light of this Google statement?

The first mistake would be believing that a modern CMS dispenses with all technical verification. Even WordPress can generate canonicalization issues with certain plugins, or poorly configured XML sitemaps. Automation is never perfect.
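One verification a CMS rarely performs is cross-checking its own outputs. The minimal Python sketch below uses the standard library's `urllib.robotparser` to list sitemap URLs that robots.txt forbids Googlebot to crawl. Plugin combinations can produce exactly this kind of contradictory signal. Caveat: Python's parser applies rules in file order and does not implement Google's wildcard and longest-match semantics, so treat its verdict as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_sitemap_urls(robots_txt: str, sitemap_urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the sitemap URLs that robots.txt forbids the given crawler to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text already fetched, no network call
    return [url for url in sitemap_urls if not parser.can_fetch(agent, url)]
```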

Second pitfall: neglecting actual performance because "the CMS takes care of it." A WordPress theme can be technically correct on paper but load 2 MB of unnecessary JavaScript. Core Web Vitals don't fix themselves.

Third mistake: thinking this statement applies uniformly to all sectors. A 10-page brochure site and a marketplace with faceted filters have radically different technical requirements.

How can you concretely verify that your site really benefits from this automation?

Start with a standard technical audit, even a quick one. In Google Search Console, check that your pages are properly indexed, that there are no large-scale indexing errors, and that your sitemap has been submitted and processed without errors.

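Monitor the meta tags on your main pages: title, description, canonical, and hreflang if the site is multilingual. Check whether structured data is present and valid using Google's Rich Results Test. Most importantly, check your Core Web Vitals as real users experience them. PageSpeed Insights shows field data from the Chrome UX Report when the page has enough traffic, whereas Lighthouse only produces lab measurements from a simulated page load.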
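Part of this tag check can be automated. The Python sketch below uses the standard library's `html.parser` to extract the title, meta description, canonical and hreflang alternates from a page's HTML, then reports the most common problems. `HeadTagAudit` and `audit_head` are hypothetical helpers. Fetching the HTML and validating structured data are out of scope, and hreflang values are only collected for manual review.

```python
from html.parser import HTMLParser


class HeadTagAudit(HTMLParser):
    """Collect title, meta description, canonical and hreflang alternates from HTML."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self.canonicals = []
        self.hreflangs = {}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        rel = (a.get("rel") or "").lower().split()
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (a.get("name") or "").lower() == "description":
            self.description = a.get("content")
        elif tag == "link" and "canonical" in rel:
            self.canonicals.append(a.get("href"))
        elif tag == "link" and "alternate" in rel and a.get("hreflang"):
            self.hreflangs[a["hreflang"]] = a.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_head(html: str) -> list[str]:
    """Return human-readable problems found in the page's head tags."""
    p = HeadTagAudit()
    p.feed(html)
    problems = []
    if not p.title.strip():
        problems.append("missing <title>")
    if not p.description:
        problems.append("missing meta description")
    if len(p.canonicals) != 1:
        problems.append(f"expected 1 canonical, found {len(p.canonicals)}")
    return problems
```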

- Audit your Search Console to detect indexation errors that the CMS might have missed

- Manually verify essential tags on your main templates (title, meta description, canonical)

- Test your structured data with Google's rich results testing tool

- Measure your real Core Web Vitals with PageSpeed Insights, not just synthetic scores

- Check your robots.txt file and XML sitemap for potential configuration errors

- If you have a large site, analyze your crawl budget in server logs — no CMS does this automatically

- For complex sites, evaluate whether your internal link architecture is truly optimized or just functional
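For the crawl-budget point, here is a minimal Python sketch that counts Googlebot requests per path and per status code in access logs. It assumes the common Apache/Nginx "combined" log format; adapt `LOG_LINE` if your server logs differ. Filtering on the user-agent string alone is a simplification. User agents can be spoofed, and Google recommends verifying Googlebot with a reverse DNS lookup before trusting the numbers.

```python
import re
from collections import Counter

# Apache/Nginx "combined" log format (assumption: adjust to your server's format).
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines, top=10):
    """Count Googlebot hits per base path and per status, plus hits on parameterized URLs."""
    by_path, by_status = Counter(), Counter()
    param_hits = 0
    for line in lines:
        m = LOG_LINE.match(line)
        if not m or "Googlebot" not in m["agent"]:
            continue
        path, _, query = m["path"].partition("?")
        by_path[path] += 1  # parameter variants are grouped under their base path
        by_status[m["status"]] += 1
        if query:
            param_hits += 1  # crawl spent on parameterized (e.g. faceted) URLs
    return by_path.most_common(top), by_status, param_hits
```

Many hits on parameter variants of the same path, or a high share of 404s and redirects, are the kind of crawl waste worth investigating.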

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 17/12/2025
