Official statement

Other statements from this video (8)

- Why is Googlebot's 15 MB limit only being documented now?

- What are the only 3 absolute technical requirements for being indexed by Google?

- Should you really ignore what Google doesn't support?

- Why did Google split its guidelines into strict rules and mere recommendations?

- How should you prioritize your SEO actions according to Google's classification system?

- Is Googlebot accessibility really a binary condition for indexing?

- Does Google really distinguish documentation changes from algorithm changes?

- HTTPS and speed: can you really do without them and still rank on Google?

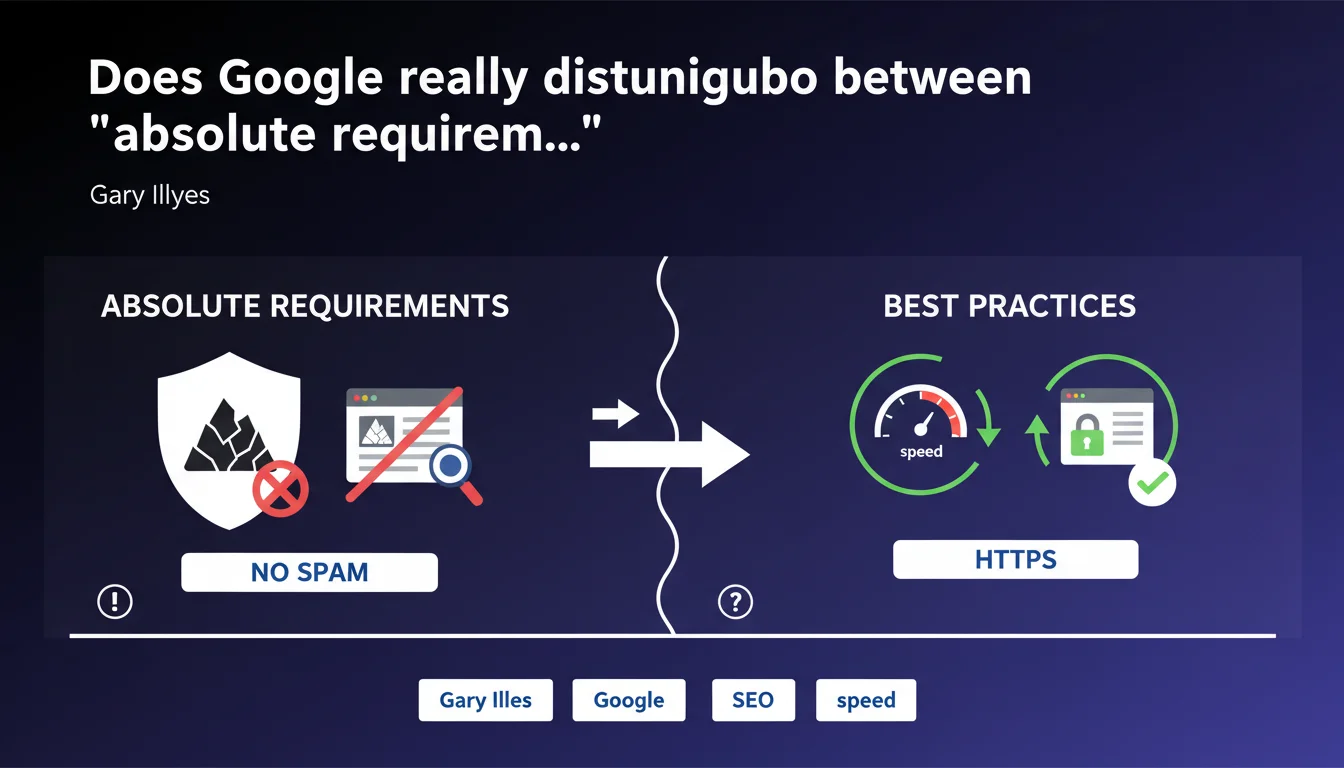

Google is now drawing a clear line: compliance with spam policies is an absolute requirement for staying indexed, unlike site speed or HTTPS, which remain ranking factors but do not disqualify a site from the index. This distinction between "hard requirements" and "best practices" changes how you should prioritize SEO initiatives: some points become non-negotiable.

What you need to understand

Why is Google formalizing this distinction now?

For years, Google mixed recommendations and requirements without clarifying which were "nice to have" and which were "must have". This statement marks a turning point: staying free of spam becomes a prerequisite for inclusion in the index, just like the technical accessibility of the site.

This shift in communication matters. It reflects a desire to hold publishers accountable and to justify more severe manual or algorithmic actions. By clearly separating spam policies from best practices, Google also gives itself a stronger legal framework for defending its decisions to exclude content.

What shifts into the "absolute requirement" category?

Specifically, everything covered by the spam policies: low-quality automatically generated content, cloaking, aggressive keyword stuffing, deceptive redirects, large-scale manipulative linking and mass scraping. If your site violates these rules, it can be partially or completely deindexed, regardless of its technical performance.

Speed, HTTPS, Core Web Vitals and mobile-friendliness, by contrast, remain ranking factors. A slow site served over plain HTTP can still be indexed and rank (poorly, admittedly, but it stays in the game). A site that spams is taken out of the game.

Does this hierarchy really change things for practitioners?

In practice, most serious SEO professionals were already staying clear of these red lines. But this formalization clarifies priorities, especially for clients who want to optimize everything at once without the budget to do so.

It also helps you justify certain recommendations: no, you cannot "test" mass-produced auto-generated content in the hope that it slips through. The risk is no longer just a drop in rankings; it's deindexing.

- Compliance with spam policies becomes a condition for indexation, not just a ranking factor

- Speed, HTTPS and CWV remain important but do not, on their own, cause deindexing

- This distinction clarifies prioritization of SEO initiatives in the face of limited budgets

- Google gives itself a firmer framework to justify manual or algorithmic penalties

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes and no. For years, we have observed spammy sites surviving in the index despite flagrant violations: mass scraping, link farms, auto-generated content. The gap between official doctrine and actual enforcement can still be stark.

In the short term, then, this statement changes little for those exploiting loopholes. It mainly sets an official framework for future action. It remains [to be verified] whether Google actually steps up automated detection or manual actions following this announcement.

What nuances should be added to this "absolute requirement"?

Let's be honest: Google cannot manually review the billions of pages in its index. The requirement is "absolute" in theory, but enforcement depends on detection, whether algorithmic or triggered by a spam report.

Some gray areas persist. For example, how far can AI assistance go in generating content before it counts as "spammy automatically generated content"? Google remains deliberately vague. Likewise, the boundary between natural linking and "link schemes" is still judged case by case.

In what cases does compliance offer no protection?

A site can scrupulously comply with all spam policies and never rank if it has neither authority, relevant content, nor positive user signals. The absence of spam is a necessary but not sufficient condition.

Conversely, sites that push the limits (borderline content, somewhat questionable backlinks) can continue to perform if they provide real value and generate engagement. Context and intent still matter, even if Google toughens its stance.

Practical impact and recommendations

What do you need to do concretely to stay on the right side of the line?

Before chasing Core Web Vitals or HTTPS, audit your compliance with spam policies. Review each section of the guidelines: automated content, cloaking, keyword stuffing, suspicious redirects, bulk-purchased or exchanged links.
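A first-pass self-audit for two of these items can be scripted. The Python sketch below rests on several assumptions. Keyword stuffing is approximated by the share of words a target keyword takes up. Cloaking is approximated by comparing the visible text served to a Googlebot user-agent string with the text served to a browser string. The thresholds (5% share, 0.9 similarity), the browser user-agent string and the helper names are hypothetical choices, not Google figures. The tag-stripping regex is deliberately crude. Spoofing the user agent also cannot reveal cloaking keyed on Google's IP addresses.

```python
import difflib
import re
import urllib.request

# One of the Googlebot user-agent strings documented by Google. Spoofing it
# only exposes user-agent-based cloaking, not cloaking keyed on Google's IPs.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Hypothetical ordinary-browser string used as the baseline.
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"


def visible_text(html: str) -> str:
    """Crudely strip <script>/<style> blocks and tags (regex, not a real parser)."""
    html = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    return re.sub(r"<[^>]+>", " ", html)


def keyword_share(text: str, keyword: str) -> float:
    """Fraction of the words in `text` covered by occurrences of `keyword`."""
    words = re.findall(r"\w+", text.lower())
    phrase = re.findall(r"\w+", keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return hits * n / len(words)


def similarity(a: str, b: str) -> float:
    """Similarity ratio between 0.0 and 1.0, via difflib.SequenceMatcher."""
    return difflib.SequenceMatcher(None, a, b).ratio()


def fetch_as(url: str, user_agent: str) -> str:
    """Download a page while sending the given User-Agent header."""
    req = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


def audit_page(url: str, keyword: str,
               max_share: float = 0.05, min_similarity: float = 0.9) -> list[str]:
    """Return warnings for one page; both thresholds are arbitrary placeholders."""
    as_bot = visible_text(fetch_as(url, GOOGLEBOT_UA))
    as_user = visible_text(fetch_as(url, BROWSER_UA))
    warnings = []
    share = keyword_share(as_user, keyword)
    if share > max_share:
        warnings.append(f"'{keyword}' covers {share:.1%} of the words")
    sim = similarity(as_bot, as_user)
    if sim < min_similarity:
        warnings.append(f"bot and browser versions differ (similarity {sim:.2f})")
    return warnings
```

For example, `audit_page("https://www.example.com/", "cheap shoes")` returns an empty list when neither heuristic fires. Dynamic elements such as rotating ads or timestamps lower the similarity score. Treat a warning as a prompt for manual inspection, not a verdict.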

If you use AI to produce content, make sure a human reviews, enriches and validates every piece before publication. Generated content must provide real value, not reworded duplicates of existing text. Document this process too: in the event of a manual review, you must be able to demonstrate your good faith.
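One lightweight way to keep that documentation is a log that is only ever appended to, with one entry per published piece. The sketch below assumes a JSON Lines file and a hypothetical `ReviewRecord` schema. The field names are illustrative, not a format Google asks for.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """One entry in a hypothetical editorial audit trail."""
    url: str
    ai_assisted: bool          # was an AI tool used to draft the piece?
    reviewer: str              # person accountable for the final text
    changes_summary: str       # what the human added, fixed or verified
    approved: bool
    # Defaults to the current UTC time in ISO 8601 format.
    reviewed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json_line(record: ReviewRecord) -> str:
    """Serialize one record as a single JSON Lines entry."""
    return json.dumps(asdict(record), ensure_ascii=False)


def append_record(path: str, record: ReviewRecord) -> None:
    """Append the record to a JSON Lines log file, one entry per line."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(to_json_line(record) + "\n")
```

Usage might look like `append_record("editorial_log.jsonl", ReviewRecord(url="https://www.example.com/guide", ai_assisted=True, reviewer="J. Doe", changes_summary="fact-checked figures, added first-hand examples", approved=True))`. A plain text log keeps the trail readable without special tooling if you ever need to show it.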

What mistakes should you avoid to not cross the red line?

Never rely on non-detection as a strategy. What slips through today can be caught by an algorithm tomorrow, and Google does not warn you before taking action. Avoid "borderline" tactics: discreet PBNs, spun content (even "improved" spun content), disguised link purchases.

Be especially careful with third-party content: comments, UGC, directories, forums. If your site hosts unmoderated spam, Google holds you responsible. Moderate strictly and apply noindex/nofollow to risky sections.
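Both safeguards can be applied at the application layer. Google documents the `X-Robots-Tag` HTTP header, which accepts the same rules as a robots meta tag, and the `rel="ugc"` link attribute for user-generated content. The Python sketch below assumes hypothetical section prefixes and a `moderated` flag supplied by your CMS. The link rewriter is a regex approximation that leaves any existing `rel` attribute untouched, so a proper HTML sanitizer is preferable in production.

```python
import re

# Hypothetical URL prefixes for sections that host third-party content.
RISKY_PREFIXES = ("/forum/", "/comments/", "/directory/")

# Matches an opening <a> tag that does not already carry a rel attribute.
_A_WITHOUT_REL = re.compile(r"<a\s(?![^>]*\brel\s*=)", re.IGNORECASE)


def robots_headers(path: str, moderated: bool) -> dict[str, str]:
    """Extra HTTP headers for a page: keep unmoderated UGC pages out of the index."""
    if path.startswith(RISKY_PREFIXES) and not moderated:
        return {"X-Robots-Tag": "noindex, nofollow"}
    return {}


def mark_ugc_links(html: str) -> str:
    """Add rel="ugc nofollow" to links in user-submitted HTML (regex sketch)."""
    return _A_WITHOUT_REL.sub('<a rel="ugc nofollow" ', html)
```

The design keeps the two concerns separate. Whole pages are kept out of the index until a moderator approves them. Individual links inside approved content are still flagged so they pass no endorsement.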

How can I verify that my site meets these absolute requirements?

Use Search Console to detect any manual actions already in place. Check the "Security & Manual Actions" section regularly: some penalties go unnoticed if you don't look.

Have a third party audit your link profile, especially if you inherited an opaque SEO history. Disavow what is clearly toxic, but without paranoia: an overly aggressive cleanup can also do harm.
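Once the clearly toxic links have been identified by hand, building the disavow file itself is simple. Google's disavow links tool expects a plain text file (UTF-8 or 7-bit ASCII) with one entry per line. Each entry is either a full URL or `domain:` followed by a domain to disavow a whole site. Lines starting with `#` are treated as comments. The sketch below only formats lists you have already vetted and does no toxicity scoring. The function name is hypothetical.

```python
def build_disavow(domains: list[str], urls: list[str], note: str = "") -> str:
    """Render a disavow file: an optional '#' comment, 'domain:' lines, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Normalize, deduplicate and sort so revisions produce clean diffs.
    clean_domains = sorted({d.strip().lower() for d in domains if d.strip()})
    lines += [f"domain:{d}" for d in clean_domains]
    lines += sorted({u.strip() for u in urls if u.strip()})
    return "\n".join(lines) + "\n"
```

You could write the result with `pathlib.Path("disavow.txt").write_text(build_disavow(domains, urls), encoding="utf-8")` and then upload it through the disavow links tool in Search Console. Keeping the input lists short and explicit is the code-level counterpart of "without paranoia".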

- Audit compliance of each section of the site with official spam policies

- Document editorial processes, especially if you use AI for production

- Implement strict moderation on all user-generated content

- Monitor Search Console to detect any manual action as soon as it appears

- Have your backlink profile audited by an external expert to identify risks

- Disavow clearly toxic links without overreacting

- Prioritize anti-spam compliance before technical optimization or speed

❓ Frequently Asked Questions

Can a slow site be deindexed by Google?

Is HTTPS mandatory to stay in Google's index?

Which spam policies, exactly, lead to deindexing when violated?

If my site was penalized for spam, can I get back into the index?

Does this distinction between requirements and best practices change overall SEO strategy?

Other SEO insights extracted from this same Google Search Central video · published on 22/12/2022
