Official statement

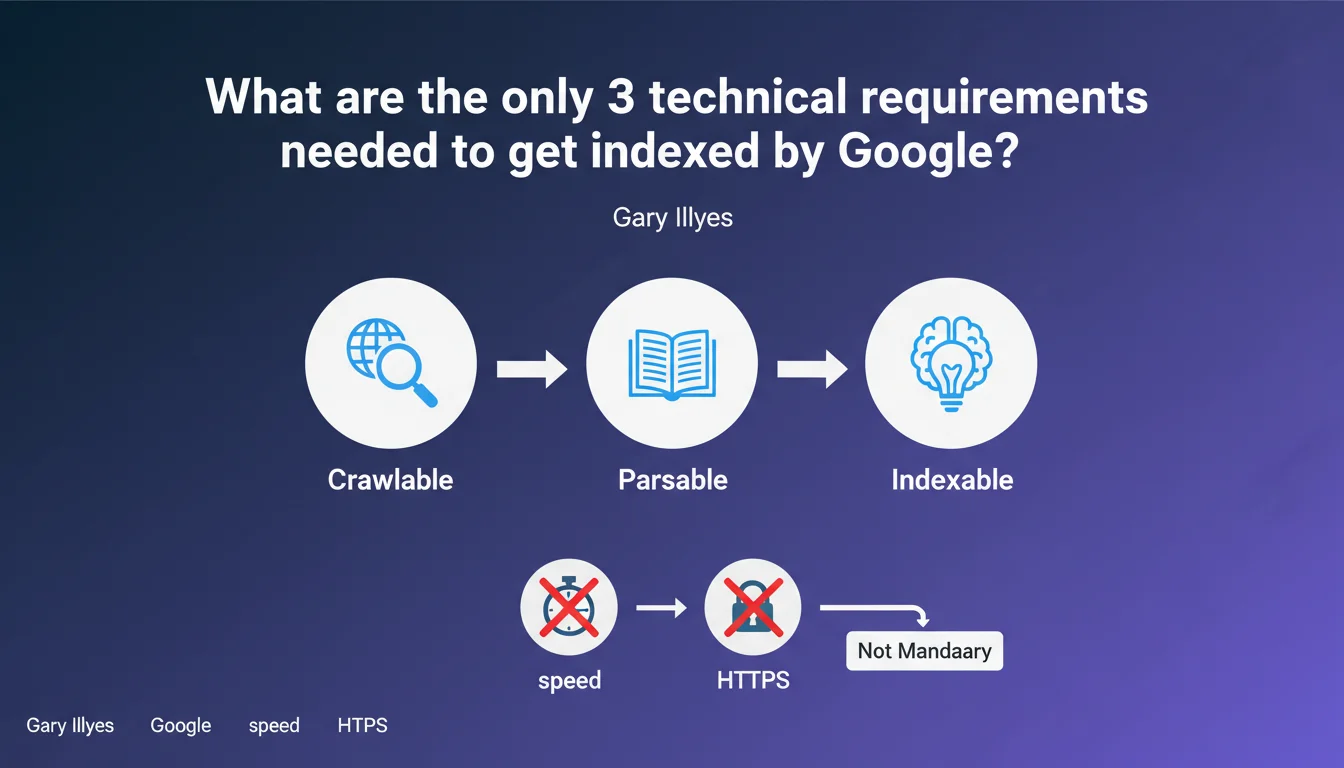

Google confirms there are only three strict technical prerequisites to appear in its search results. All other factors (speed, HTTPS, Core Web Vitals) remain important for ranking but do not block indexing. A site can technically be indexed without being fast or secure.

What you need to understand

Why does Google insist on these three requirements only?

The distinction is crucial: indexation vs ranking. Gary Illyes reminds us that for a page to exist in Google's index, it must meet three minimal technical conditions. Nothing more.

Other signals — performance, security, user experience — then come into play in the ranking equation. But technically, a slow HTTP-only site can be perfectly crawled and indexed.

What exactly are these three requirements?

Google doesn't detail them explicitly in this statement, and that's where it gets tricky. We can reasonably assume they are: crawlability (Googlebot can access the pages), usable content (readable HTML whose main content does not depend on JavaScript that fails to render), and the absence of exclusion directives (no noindex, no robots.txt rule blocking essential resources).

But this is an interpretation — Gary Illyes doesn't list these three points explicitly. [To verify]
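To make that interpretation concrete, here is a minimal Python sketch that treats the three assumed conditions as a simple gate. The `PageSnapshot` fields and the `failed_prerequisites` helper are hypothetical names chosen for illustration. They encode this article's reading of the statement, not a list published by Google.

```python
from dataclasses import dataclass


@dataclass
class PageSnapshot:
    """Hypothetical summary of what a crawler saw for one URL."""
    crawl_allowed: bool        # robots.txt does not block the URL
    status_code: int           # HTTP status returned to the crawler
    has_noindex: bool          # noindex found in a meta tag or HTTP header
    visible_text_length: int   # characters of text in the rendered HTML


def failed_prerequisites(page: PageSnapshot) -> list[str]:
    """Return the assumed prerequisites that the page does not meet."""
    failures = []
    # Assumption 1: Googlebot can reach the page and gets a success response.
    if not page.crawl_allowed or page.status_code != 200:
        failures.append("crawlability")
    # Assumption 2: the page exposes some readable content.
    if page.visible_text_length == 0:
        failures.append("usable content")
    # Assumption 3: nothing explicitly asks to be kept out of the index.
    if page.has_noindex:
        failures.append("no exclusion directive")
    return failures


if __name__ == "__main__":
    # A slow, HTTP-only page: speed and HTTPS are not part of the gate.
    slow_http_page = PageSnapshot(True, 200, False, 1800)
    print(failed_prerequisites(slow_http_page))
```

Speed and HTTPS are deliberately absent from the snapshot: under this reading they affect ranking, not eligibility for the index.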

What happens to other SEO factors in this logic?

They remain essential for performing, but are not technical locks. HTTPS improves trust and slightly boosts ranking. Core Web Vitals influence user experience and conversion rates. Speed impacts crawl budget on large sites.

But none of these elements technically prevents Google from indexing a page. The nuance is important: a technically mediocre site can be indexed, but it is unlikely to earn meaningful visibility.

- Indexing: gated by three minimal technical prerequisites that the statement does not spell out

- Ranking: the fruit of hundreds of signals (speed, HTTPS, content, backlinks, etc.)

- A site can be indexed without being performant, but it is unlikely to rank well

- The statement remains unclear about the exact nature of the three absolute requirements

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On substance, it's true: we regularly observe technically mediocre sites that are indexed. Slow pages, HTTP, poorly structured HTML — Google crawls them anyway.

But the wording is problematic. Saying there are only three absolute requirements without naming them precisely is frustrating. Gary Illyes could have been more explicit. SEO practitioners know that crawlability, the absence of noindex and usable content are the basics, but is that really what he's talking about? [To verify]

What risks are there in misinterpreting this statement?

The main danger: downplaying the importance of non-blocking factors. A site can be indexed without HTTPS, true, but it gives up a ranking signal, however lightweight. It can be slow, but Google may then lower its crawl rate, and the user experience will suffer.

Some might read this statement as a green light to neglect performance or security. Bad idea. Indexation does not mean visibility. An indexed but poorly ranked site is useless.

In what cases does this rule not apply?

Heavy JavaScript sites are a borderline case. Technically, Google can index them — but if rendering requires blocked resources or excessive execution time, indexation becomes partial or fails. The three absolute requirements then become insufficient in practice.

Another case: sites with chronic server problems (frequent timeouts, 5xx errors). Google can theoretically crawl them, but eventually drastically reduces crawl frequency. Result: sporadic, incomplete indexation. The theory of three requirements bumps up against operational reality.

Practical impact and recommendations

What should you do concretely following this statement?

First, don't change your technical priorities. The fact that a site can be indexed without HTTPS or optimal performance doesn't mean you should ease up on these aspects.

On the other hand, if you're managing a site undergoing redesign or a project with tight budget constraints, this statement confirms that it's better to prioritize indexing before optimization. Make sure Google can crawl your pages, no noindex directive is keeping them out of the index, and the content is technically usable. Only then work on speed and HTTPS.

How do I verify my site meets these three minimum requirements?

Start with Search Console: open the Page indexing report (formerly Coverage) and review the pages listed as not indexed. If pages are flagged as "Discovered - currently not indexed" or "Crawled - currently not indexed," it's often related to crawlability issues, weak content, or low priority.

Next, check your robots.txt: no Disallow directive should block critical resources (CSS, JavaScript needed for rendering). Test with Search Console's URL inspection tool to see how Googlebot interprets your pages.
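As a rough local complement to the URL inspection tool, the robots.txt check can be sketched with Python's standard `urllib.robotparser`. The `blocked_urls` helper, the sample rules and the example.com URLs are assumptions made for illustration. The standard-library parser also does not replicate every detail of Googlebot's matching (wildcard patterns in particular), so treat the result as a first pass only.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content disallows for user_agent."""
    parser = RobotFileParser()
    # parse() accepts the file content as a list of lines, so no network call is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that blocks a JavaScript directory needed for rendering.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /assets/js/
"""

# Hypothetical page and resource URLs to test against the rules above.
SAMPLE_URLS = [
    "https://example.com/",
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]

if __name__ == "__main__":
    for url in blocked_urls(SAMPLE_ROBOTS, SAMPLE_URLS):
        print("blocked for Googlebot:", url)
```

Feeding it the list of CSS and JavaScript files a template loads is a quick way to spot a Disallow rule that would hide rendering resources from the crawler.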

- Search Console audit: excluded pages, coverage errors, crawl warnings

- robots.txt check: no critical resources blocked

- Googlebot rendering test: verify main content is properly extracted

- Absence of unintended noindex tags (HTTP headers, meta tags)

- Server response time: avoid frequent timeouts

- URL accessibility: no infinite redirects, no massive 4xx/5xx errors
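The noindex item in the checklist above can be approximated with a short standard-library Python sketch. The `has_noindex` function and its inputs are hypothetical: it expects the HTML and response headers to have been fetched already, and it ignores user-agent-scoped header values such as `googlebot: noindex`, so it is a simplification and not a full implementation of the robots directives.

```python
from html.parser import HTMLParser

# "none" is treated as equivalent to "noindex, nofollow".
BLOCKING_DIRECTIVES = {"noindex", "none"}


def _split_directives(value: str) -> set[str]:
    """Split a comma-separated directive string into lowercase tokens."""
    return {part.strip().lower() for part in value.split(",") if part.strip()}


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key.lower(): (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives |= _split_directives(attr.get("content", ""))


def has_noindex(html: str, headers: dict[str, str]) -> bool:
    """Return True if a meta tag or the X-Robots-Tag header asks for no indexing."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = next(
        (value for key, value in headers.items() if key.lower() == "x-robots-tag"), ""
    )
    found = parser.directives | _split_directives(header_value)
    return bool(found & BLOCKING_DIRECTIVES)


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(has_noindex(page, {}))
```

Checking both the markup and the headers matters because an `X-Robots-Tag` set at server level is invisible in the page source.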

What mistakes should you avoid after reading this statement?

Don't fall into the trap of "as long as it's indexed, it's good". Indexation is a necessary but not sufficient condition. An indexed site with mediocre content, catastrophic performance and no HTTPS will never generate quality traffic.

Another common mistake: neglecting crawl budget on the grounds that Google indexes anyway. On large sites (e-commerce, media, directories), a poor crawl budget delays the pickup of updates and wastes crawling on low-value pages. Theoretical indexing doesn't compensate for a crawl prioritization problem.

In summary: this statement confirms you can technically be indexed with a bare minimum. But in reality, a site that settles for the bare technical minimum will never succeed. Continue to optimize performance, security, structure — and if the scope of technical work exceeds your internal resources, working with a specialized SEO agency can make the difference between an indexed site and one that actually performs.

❓ Frequently Asked Questions

What exactly are the three absolute technical requirements mentioned by Google?

Can an HTTP site be indexed by Google?

Does loading speed block indexing?

If my site meets these three requirements, why are some pages not indexed?

Should you stop optimizing performance and HTTPS after this statement?

Other SEO insights extracted from this same Google Search Central video · published on 22/12/2022
