Official statement

Other statements from this video (8):

- What are the only 3 absolute technical requirements for being indexed by Google?

- Should you really ignore what Google doesn't support?

- Why did Google split its guidelines into strict rules and mere recommendations?

- How should you prioritize your SEO actions according to Google's classification system?

- Is Googlebot accessibility really a binary condition for indexing?

- Does Google really distinguish "absolute requirements" from "best practices" in SEO?

- Does Google really distinguish documentation changes from algorithm changes?

- HTTPS and speed: can you really do without them and still rank on Google?

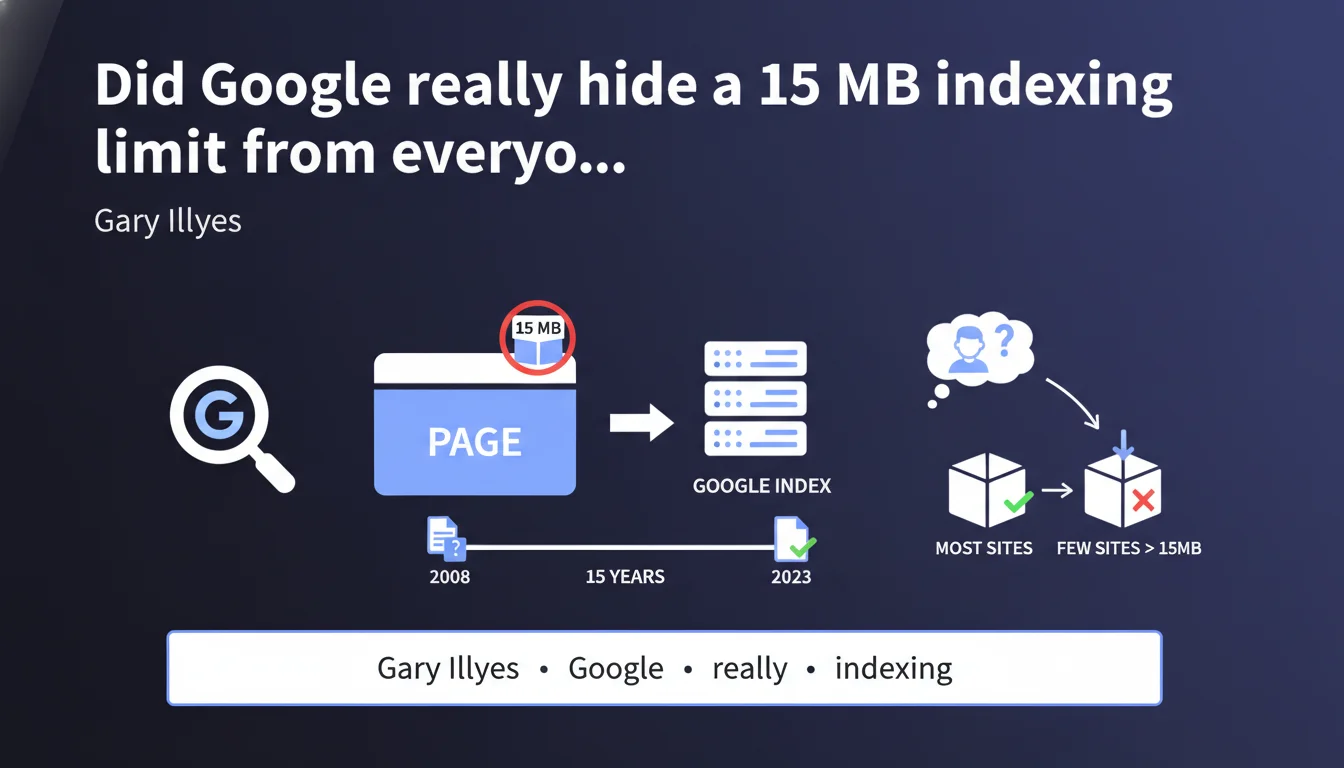

Googlebot only processes the first 15 MB of a page's HTML, and it has been doing so for about 15 years. Google has simply now documented a technical limit that already existed. According to Gary Illyes, very few sites are affected, but those that are risk partial or complete non-indexation of their heaviest content.

What you need to understand

Has this 15 MB limit really existed for 15 years?

Yes, and it's confirmed in black and white by Gary Illyes. The technical limit of 15 megabytes per page is not new — it was simply undocumented until recently.

In practice, if a page's HTML (including all its inlined resources) exceeds this threshold, Googlebot stops at the limit. Everything after that point is invisible to Google.
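To make the truncation concrete, here is a minimal Python sketch that simulates a fetcher keeping only the first N bytes of a page's HTML and checks whether a given snippet survives. It is not Googlebot's code: modelling "15 MB" as 15 × 1024 × 1024 bytes and the helper names are assumptions made for illustration.

```python
# Minimal sketch: simulate a fetcher that keeps only the first N bytes of a
# page's (uncompressed) HTML, then check whether a given snippet survives.
# Assumption: "15 MB" is modelled as 15 * 1024 * 1024 bytes.

FETCH_LIMIT_BYTES = 15 * 1024 * 1024


def truncate_like_googlebot(html: str, limit: int = FETCH_LIMIT_BYTES) -> str:
    """Return the part of the HTML that fits within `limit` UTF-8 bytes."""
    raw = html.encode("utf-8")
    # Cutting mid-character leaves invalid UTF-8; drop the partial character.
    return raw[:limit].decode("utf-8", errors="ignore")


def snippet_survives(html: str, snippet: str, limit: int = FETCH_LIMIT_BYTES) -> bool:
    """True if `snippet` appears entirely within the first `limit` bytes."""
    return snippet in truncate_like_googlebot(html, limit)


if __name__ == "__main__":
    # Toy page with a tiny limit so the example runs instantly.
    page = "<html><body>" + "x" * 100 + "<p>Key product text</p></body></html>"
    print(snippet_survives(page, "Key product text", limit=50))   # False
    print(snippet_survives(page, "Key product text", limit=500))  # True
```

With a saved copy of one of your own pages, calling `snippet_survives(html, "text near the footer")` with the default limit gives a quick yes/no on whether late content falls inside the window.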

Why is Google documenting this limit now?

Gary Illyes speaks of a "clarification" rather than a change in functionality: the official documentation had simply lagged behind the technical reality.

This formalization probably came about because edge cases emerged — sites with ultra-heavy JavaScript pages, massive dynamic content, or development errors creating monstrous pages. Documenting the limit avoids unnecessary support tickets.

How many sites are actually affected?

According to Illyes: very few. And that's probably true for the majority of standard editorial sites.

But be careful: "very few" doesn't mean "none". Sites with complex JavaScript rendering, infinite-scroll listing pages without proper pagination, or misconfigured CMS setups can exceed this ceiling without realizing it.

- The limit applies to the HTML file itself (including anything inlined in it), not to externally referenced resources (CSS, JS, images), which are fetched separately.

- 15 MB is enormous for pure text, but pages that heavy are more common than you'd think with dynamic or poorly optimized content.

- Googlebot won't warn you if you exceed the limit — it cuts off silently.

- This limit has existed for approximately 15 years, so it has survived several generations of Google algorithms.

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, broadly. We've known for a long time that Googlebot has crawl and resource limitations — the 15 MB limit fits into this logic.

What's more interesting is the 15 years of silence. It suggests Google chose not to document a significant technical constraint. Why? Probably because it affected so few sites that it didn't justify official communication, until problematic cases began to multiply.

What nuances need to be added?

Gary Illyes' wording remains vague on one critical point: what exactly happens to content beyond 15 MB? Is the whole page rejected, or does Google index the first 15 MB and abandon the rest?

Observations suggest the latter: Googlebot simply truncates after 15 MB, which is also what Google's Googlebot documentation describes (only the first 15 MB of the file are considered for indexing). If your strategic content sits at the bottom of the page, that's a problem. Beyond that, the exact behavior is not documented in detail, only observed case by case. [To be verified]

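Another point: this limit concerns the raw HTML file. External resources (images, CSS, JS referenced via tags) are fetched separately and don't count toward this quota, but inlined JavaScript and server-side generated content do. Google's documentation also specifies that the limit applies to uncompressed data, so gzip or Brotli compression on the wire doesn't buy any extra headroom.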
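Because the limit is measured on uncompressed bytes, a page can look light in transfer size while exceeding 15 MB once decompressed. This hypothetical Python sketch compares the two sizes for a repetitive listing page; the product markup and the reading of "15 MB" as 15 MiB are illustrative assumptions.

```python
import gzip

FETCH_LIMIT_BYTES = 15 * 1024 * 1024  # assumption: "15 MB" read as 15 MiB


def html_sizes(html: str) -> tuple[int, int]:
    """Return (uncompressed_bytes, gzip_bytes) for an HTML string."""
    raw = html.encode("utf-8")
    return len(raw), len(gzip.compress(raw))


if __name__ == "__main__":
    # Repetitive markup, typical of listing pages, compresses very well,
    # but the limit is checked against the uncompressed size.
    card = '<div class="product"><span class="price">19.99</span></div>\n'
    page = "<html><body>" + card * 300_000 + "</body></html>"
    raw, gz = html_sizes(page)
    print(f"uncompressed: {raw / 1e6:.1f} MB, gzip: {gz / 1e6:.2f} MB")
    print("over limit:", raw > FETCH_LIMIT_BYTES)
```

The transfer size reported by browser dev tools or logs is therefore a poor proxy; compare the decompressed size against the threshold.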

In which cases does this limit really cause problems?

Three main scenarios emerge from the field:

Sites with broken or missing pagination: e-commerce category pages that load hundreds of products via infinite scroll, without proper pagination, can exceed the limit if the generated HTML gets too heavy.

Poorly optimized JavaScript applications: some poorly configured front-end frameworks (React, Vue, Angular) generate massive initial HTML with inlined serialized state. Result: technically valid pages that are too heavy for Googlebot.
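To see how much of a page's weight comes from inlined state or scripts, you can measure the bytes inside inline `<script>` elements (those without a `src` attribute). This Python sketch uses the standard library's `html.parser`; it is a rough diagnostic under that assumption, not a reproduction of how any framework or Googlebot processes the page.

```python
from html.parser import HTMLParser


class InlineScriptMeter(HTMLParser):
    """Counts UTF-8 bytes inside inline <script> elements (no src attribute)."""

    def __init__(self) -> None:
        super().__init__()
        self.in_inline_script = False
        self.inline_script_bytes = 0

    def handle_starttag(self, tag, attrs):
        # Tag and attribute names arrive lowercased from HTMLParser.
        if tag == "script" and not any(name == "src" for name, _ in attrs):
            self.in_inline_script = True

    def handle_endtag(self, tag):
        if tag == "script":
            self.in_inline_script = False

    def handle_data(self, data):
        # Script content may arrive in several chunks; sum them all.
        if self.in_inline_script:
            self.inline_script_bytes += len(data.encode("utf-8"))


def inline_script_share(html: str) -> float:
    """Fraction of the document's bytes that sit inside inline scripts."""
    meter = InlineScriptMeter()
    meter.feed(html)
    meter.close()
    return meter.inline_script_bytes / max(len(html.encode("utf-8")), 1)
```

A share approaching 1, for instance when a framework serializes its entire store into a JSON `<script>` block, points to state that should be trimmed or loaded separately.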

Development errors: infinite loops in server-side rendering, accidental inclusion of entire databases in the DOM, or templating errors that duplicate content massively.
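Accidental duplication from a templating bug often shows up as the same long line repeated thousands of times. Here is a rough Python heuristic, assuming line-oriented (non-minified) HTML; the `min_length` and `min_count` thresholds are arbitrary illustrative defaults, not Google values.

```python
from collections import Counter


def repeated_lines(html: str, min_length: int = 40, min_count: int = 50):
    """Return (line, count) pairs for long lines repeated many times.

    Short lines (closing tags, etc.) are ignored because they repeat
    legitimately; thresholds are illustrative, not official values.
    """
    counts = Counter(
        line.strip()
        for line in html.splitlines()
        if len(line.strip()) >= min_length
    )
    # most_common() sorts by count, so the worst offenders come first.
    return [(line, n) for line, n in counts.most_common() if n >= min_count]
```

On minified HTML, where everything sits on one line, you would need a different unit, for example splitting on closing tags.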

Practical impact and recommendations

How do I check if my site exceeds this limit?

Three complementary methods to audit your pages:

Search Console → URL Inspection: inspect the URL and review the crawled HTML, checking that content near the end of the page is still present. If the page is over 15 MB, Search Console may show a warning or abnormal indexing behavior.

Crawl with Screaming Frog or Oncrawl: configure your crawler to measure the HTML size of each page, then filter the results to identify pages over 10 MB (a reasonable alert threshold).
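For a quick spot check outside a crawler, a few lines of standard-library Python can fetch raw HTML and flag pages above the 10 MB alert threshold. This is a minimal sketch: it measures the HTML as served (not the rendered DOM), requests an uncompressed response via `Accept-Encoding: identity`, and the user-agent string and function names are hypothetical.

```python
import urllib.request

ALERT_BYTES = 10 * 1024 * 1024  # alert threshold from the text (10 MB)


def fetch_html_size(url: str, timeout: float = 30.0) -> int:
    """Download a URL and return the size of its (uncompressed) body in bytes."""
    request = urllib.request.Request(
        url,
        headers={
            "User-Agent": "size-audit-sketch/0.1",  # hypothetical UA string
            "Accept-Encoding": "identity",          # ask for no compression
        },
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return len(response.read())


def flag_heavy_pages(sizes: dict[str, int], alert: int = ALERT_BYTES) -> list[str]:
    """Return URLs whose HTML size exceeds the alert threshold, heaviest first."""
    heavy = [url for url, size in sizes.items() if size > alert]
    return sorted(heavy, key=lambda url: sizes[url], reverse=True)
```

For example, `flag_heavy_pages({u: fetch_html_size(u) for u in urls})` returns the heaviest URLs first; a dedicated crawler remains the better tool at scale.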

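Server log analysis: check Apache/Nginx logs to identify Googlebot requests with abnormally heavy responses, keeping in mind that logged response sizes usually reflect bytes sent over the wire (after compression), whereas the limit applies to uncompressed HTML. A correlation between heavy responses and non-indexed pages is an alarm signal.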
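As a starting point for the log check, the sketch below parses lines in the widely used "combined" log format and keeps Googlebot hits whose logged response size exceeds a threshold. It assumes that log format, matches Googlebot by user-agent string only (which can be spoofed; reverse DNS verification is more reliable), and the 5 MB default is an arbitrary illustrative value, set low because logged sizes are often compressed.

```python
import re
from collections.abc import Iterable, Iterator

# Combined log format:
# ip ident user [time] "request" status bytes "referer" "user-agent"
COMBINED = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def heavy_googlebot_responses(
    lines: Iterable[str], min_bytes: int = 5 * 1024 * 1024
) -> Iterator[tuple[str, int, int]]:
    """Yield (path, status, bytes) for Googlebot hits with large responses."""
    for line in lines:
        match = COMBINED.match(line)
        # Skip unparseable lines and non-Googlebot user agents.
        if not match or "Googlebot" not in match["agent"]:
            continue
        if match["bytes"] == "-":
            continue
        size = int(match["bytes"])
        if size >= min_bytes:
            parts = match["request"].split()
            path = parts[1] if len(parts) > 1 else match["request"]
            yield path, int(match["status"]), size
```

Cross-reference the paths it yields with the pages reported as not indexed in Search Console.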

What should I do if a critical page exceeds 15 MB?

Priority optimization strategies:

- Implement proper pagination for listing pages — never load more than 50-100 items per page.

- Move non-critical JavaScript content to external files rather than inlining it in the HTML.

- If you're using SSR (server-side rendering), reduce the size of the serialized state used for hydration: avoid including redundant data in the initial HTML.

- Audit templates to detect accidental rendering loops or massive content inclusions.

- Split monolithic pages into multiple sub-pages with coherent internal linking.
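For the first of these strategies, the pagination logic itself is simple. Here is a minimal Python sketch, assuming a hypothetical `?page=N` URL scheme and a page size of 50 items, the low end of the 50-100 range recommended above.

```python
from math import ceil

PAGE_SIZE = 50  # low end of the 50-100 items per page range


def paginate(items: list, page: int, page_size: int = PAGE_SIZE):
    """Return (items_on_page, total_pages) for a 1-indexed page number."""
    total_pages = max(1, ceil(len(items) / page_size))
    if not 1 <= page <= total_pages:
        raise ValueError(f"page must be between 1 and {total_pages}")
    start = (page - 1) * page_size
    return items[start:start + page_size], total_pages


def page_url(base: str, page: int) -> str:
    """Hypothetical URL scheme: page 1 is the base URL, others use ?page=N."""
    return base if page == 1 else f"{base}?page={page}"
```

Whatever the scheme, link each page with ordinary `<a href>` links so Googlebot can discover the rest of the listing without relying on infinite scroll.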

Should I worry if I'm below 15 MB?

No, but keep a safety margin. If your pages weigh 12-13 MB, you're technically compliant but vulnerable to any change: content additions, CMS updates, new features.

Aim for a maximum of 5 MB per page for standard content. Beyond that, question the architecture and real utility of this weight.

The 15 MB limit has existed for a long time and affects few sites — but those exceeding it risk silent partial indexation. The issue is less the limit itself than the ability to detect problematic pages before Google truncates them.

These technical optimizations (crawling, render analysis, architecture redesign) can quickly become complex to implement alone, especially on sites with advanced JavaScript stacks or custom CMS systems. Working with an SEO-specialized agency provides an accurate diagnosis and a customized action plan, without the risk of breaking existing functionality through blind experimentation.

❓ Frequently Asked Questions

Does the 15 MB limit include images and CSS/JS files?

What exactly happens if a page exceeds 15 MB?

How can I tell whether my pages exceed this limit?

Are e-commerce sites with long product pages affected?

Will this limit change in the future?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 22/12/2022

