Official statement

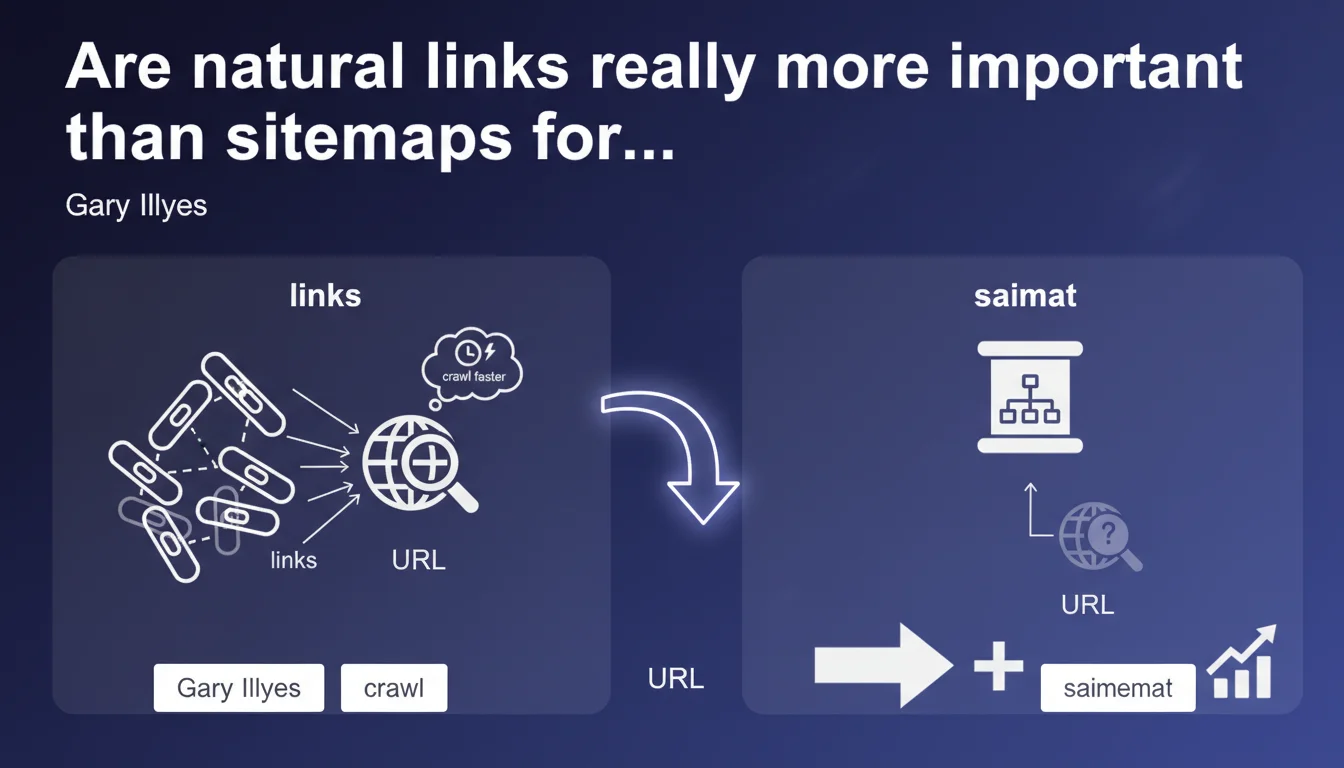

Google prioritizes natural links over sitemaps for discovering new URLs. A link provides context and crawl priority signals, whereas a sitemap merely lists pages. This hierarchy directly impacts crawl speed and indexation timing.

What you need to understand

Why does Google distinguish between discovery and indexation?

Discovery is the phase where Googlebot identifies that a URL exists. Indexation comes after — it's the processing and storage of content. Gary Illyes is specifically talking about discovery here, not guaranteed indexation.

An XML sitemap tells Google: "Here are my URLs". A natural link says: "This page is connected to my ecosystem, here's its semantic context through the anchor text and surrounding content". This nuance is critical.

What does a natural link provide that a sitemap doesn't?

Semantic context first. The link anchor, the paragraph surrounding it, and the source page all inform Googlebot about the topic of the target page before it even crawls that page.

Then, an implicit priority signal. If a page receives links from multiple already-crawled pages, Google understands it deserves attention. A sitemap carries no such hierarchy: the protocol has an optional priority field, but Google's documentation states that it is ignored.

Is the sitemap useless then?

No. It remains a safety net for orphaned URLs, sites with poor internal linking, or very large sites where certain deep pages might escape standard crawling.

But relying solely on the sitemap to discover strategic content? Bad idea. It's a passive tool, not a prioritization lever.

- Natural links provide context and crawl priority signals

- The sitemap is a passive list without hierarchy or semantic context

- Google crawls URLs discovered via internal or external links faster than URLs listed only in a sitemap

- Good internal linking remains the foundation of an effective discovery strategy

- The sitemap stays useful for URLs difficult to reach via links

SEO Expert opinion

Does this statement really reflect ground reality?

Yes, largely. We've observed for years that well-linked pages are crawled faster and more frequently than those listed only in a sitemap. Server logs confirm this consistently.
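
As a minimal sketch of that kind of log check, the snippet below counts Googlebot requests per path and compares the average for well-linked pages against sitemap-only pages. It assumes access logs in Combined Log Format; the sample lines and the two path groups are hypothetical. It also trusts the user-agent string, which can be spoofed, so a real audit would additionally verify the requesting IP.

```python
import re
from collections import Counter

# Combined Log Format (assumed):
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(log_lines):
    """Count requests per path whose user agent claims to be Googlebot."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


def average_hits(hits, paths):
    """Average Googlebot hits over a group of paths (0.0 for an empty group)."""
    paths = list(paths)
    if not paths:
        return 0.0
    return sum(hits[p] for p in paths) / len(paths)


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Hypothetical sample data, for illustration only.
sample_log = [
    f'66.249.66.1 - - [14/Mar/2024:10:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [14/Mar/2024:11:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [14/Mar/2024:12:00:00 +0000] "GET /orphan HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.5 - - [14/Mar/2024:12:30:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits = googlebot_hits(sample_log)
linked_avg = average_hits(hits, ["/guide"])                          # well-linked pages
sitemap_only_avg = average_hits(hits, ["/orphan", "/never-crawled"])  # sitemap-only pages
print(linked_avg, sitemap_only_avg)
```

Run over a few weeks of real logs, the same comparison shows whether the pattern holds on your own site.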

Where it gets unclear: Gary doesn't specify the threshold at which internal linking becomes sufficient. Three links from the homepage? Ten from deep pages? No concrete metrics. Verify this on your own site, since it depends on your industry and usual crawl frequency.

What are the limitations of this claim?

On a new or low-authority site, relying solely on internal linking to discover 10,000 pages can take weeks. The sitemap mechanically accelerates initial discovery here, even without priority.

Another case: JavaScript-heavy sites where internal links only appear after rendering and aren't accessible on the first crawl. The sitemap becomes the lifeline that keeps those URLs from going undiscovered.

Finally, Google says nothing about link quality. Does a link from a page crawled once monthly have the same weight as a link from the homepage crawled daily? Radio silence.

Should you overhaul your sitemap strategy?

No, don't throw away your sitemaps. But stop bloating them with non-strategic URLs or endless paginated pages. A 50,000-URL sitemap where 40,000 are rarely updated dilutes the signal.

Focus the sitemap on strategic editorial content, priority landing pages, deep pages difficult to reach. Anything accessible in 2-3 clicks from the homepage with good internal linking? No need to include it.

Practical impact and recommendations

How do you optimize internal linking for discovery?

Prioritize links from pages with high crawl frequency: homepage, main categories, recently updated articles. In our experience, a link from a page crawled daily gets its target pages discovered sooner.

Use descriptive anchor text that contextualizes target content. "Learn more" tells Googlebot nothing. "Complete guide to crawl budget optimization" informs about the topic before even clicking.
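
One way to audit anchors is to extract every link from a page and flag those whose text says nothing about the target. The sketch below uses only the Python standard library; the `GENERIC_ANCHORS` list and the sample HTML are hypothetical and should be adapted to your site.

```python
from html.parser import HTMLParser

# Hypothetical list of anchors considered too generic; adjust to your site.
GENERIC_ANCHORS = {"learn more", "click here", "read more", "here"}


class AnchorCollector(HTMLParser):
    """Collect (href, anchor text) pairs from an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text that sits inside an <a href="..."> element.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())  # normalize whitespace
            self.links.append((self._href, text))
            self._href = None


def generic_links(html):
    """Return the links whose anchor text is in GENERIC_ANCHORS."""
    collector = AnchorCollector()
    collector.feed(html)
    return [(href, text) for href, text in collector.links
            if text.lower() in GENERIC_ANCHORS]


sample_html = (
    '<p><a href="/crawl-budget">Complete guide to crawl budget optimization</a> '
    '<a href="/sitemaps">Learn more</a></p>'
)
flagged = generic_links(sample_html)
print(flagged)
```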

Avoid excessive click depth. A page accessible only after 5-6 clicks from the homepage will be discovered, sure, but with far lower priority than a page 2 clicks away.
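
Click depth from the homepage can be estimated with a breadth-first search over the internal link graph. The sketch below assumes you already have that graph as a dictionary (for example exported from a crawler); the `site_links` data and the depth threshold of 3 are hypothetical.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from `start` to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/category", "/blog"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/category/page-3"],
    "/category/page-3": ["/product-x"],
    "/blog": ["/guide"],
}

depths = click_depths(site_links, "/")
MAX_DEPTH = 3  # hypothetical threshold
too_deep = sorted(url for url, depth in depths.items() if depth > MAX_DEPTH)
print(too_deep)
```

Pages that never appear in `depths` are unreachable through internal links, which makes them candidates for new links rather than for the sitemap alone.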

What should you actually do with your sitemap?

Clean it up. Remove non-strategic URLs, purely technical pages, valueless filters. A lean, targeted sitemap is more effective than an exhaustive directory.

Segment if needed: one sitemap for editorial content, another for product sheets, another for resources. Google may then treat each content type differently, and you can compare indexing status per sitemap in Search Console.
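
A minimal sketch of that segmentation follows: URLs are grouped by path prefix, each group becomes its own `urlset`, and a sitemap index references the resulting files. The domain, the prefix rules, and the file names are hypothetical; only the XML namespace and element names come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def segment(urls, rules, default="other"):
    """Group URLs by the first matching (segment_name, path_prefix) rule."""
    groups = {}
    for url in urls:
        path = urlparse(url).path
        name = next((n for n, prefix in rules if path.startswith(prefix)), default)
        groups.setdefault(name, []).append(url)
    return groups


def build_xml(root_tag, child_tag, locations):
    """Serialize a <urlset> or <sitemapindex> holding one <loc> per entry."""
    root = ET.Element(root_tag, xmlns=SITEMAP_NS)
    for location in locations:
        ET.SubElement(ET.SubElement(root, child_tag), "loc").text = location
    return ET.tostring(root, encoding="unicode")


# Hypothetical URLs and segmentation rules.
urls = [
    "https://example.com/blog/crawl-budget",
    "https://example.com/products/widget",
    "https://example.com/blog/sitemaps",
]
rules = [("editorial", "/blog/"), ("products", "/products/")]

segments = segment(urls, rules)
sitemaps = {name: build_xml("urlset", "url", group) for name, group in segments.items()}
index = build_xml(
    "sitemapindex",
    "sitemap",
    [f"https://example.com/sitemap-{name}.xml" for name in sitemaps],
)
print(index)
```

The strings are returned without an XML declaration; add one when writing the files to disk.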

Monitor the page indexing report in Search Console. If URLs submitted in a sitemap remain "Discovered – currently not indexed" for months while also having internal links, the problem isn't discovery but quality or relevance.

What errors should you absolutely avoid?

- Never rely solely on the sitemap to discover strategic content

- Avoid giant unsegmented sitemaps: a single file is limited to 50,000 URLs or 50 MB uncompressed, so split larger sets and reference them from a sitemap index

- Don't leave sitemap URLs without any internal link: an orphaned URL in the sitemap sends a mixed signal, so link to it as well

- Don't overlook linking from recent pages to evergreen content you want to boost

- Stop submitting in sitemaps pages blocked by robots.txt or marked noindex

- Remember that discovery ≠ indexation — a link doesn't guarantee indexation, just a visit
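
Two of these checks are easy to automate. The sketch below flags sitemap URLs that robots.txt blocks for Googlebot and sitemap URLs that receive no internal link. The robots.txt content, the URL lists, and the `audit_sitemap` helper are hypothetical; detecting `noindex` is not covered because it requires fetching each page.

```python
from urllib.robotparser import RobotFileParser


def audit_sitemap(sitemap_urls, robots_txt, internally_linked, user_agent="Googlebot"):
    """Return (blocked, orphaned) lists for the given sitemap URLs."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in memory, no network access
    blocked = [u for u in sitemap_urls if not parser.can_fetch(user_agent, u)]
    orphaned = [u for u in sitemap_urls if u not in internally_linked]
    return blocked, orphaned


# Hypothetical inputs, for illustration only.
robots_txt = "User-agent: *\nDisallow: /cart/\n"
sitemap_urls = [
    "https://example.com/guide",
    "https://example.com/cart/checkout",
    "https://example.com/old-landing",
]
internally_linked = {
    "https://example.com/guide",
    "https://example.com/cart/checkout",
}

blocked, orphaned = audit_sitemap(sitemap_urls, robots_txt, internally_linked)
print(blocked, orphaned)
```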

❓ Frequently Asked Questions

Is an XML sitemap still useful in 2025?

How many internal links does a page need to be discovered quickly?

Do external links also count for discovery?

Should you remove well-linked URLs from your sitemap?

Why are some pages in the sitemap never crawled?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 14/03/2024
