Official statement

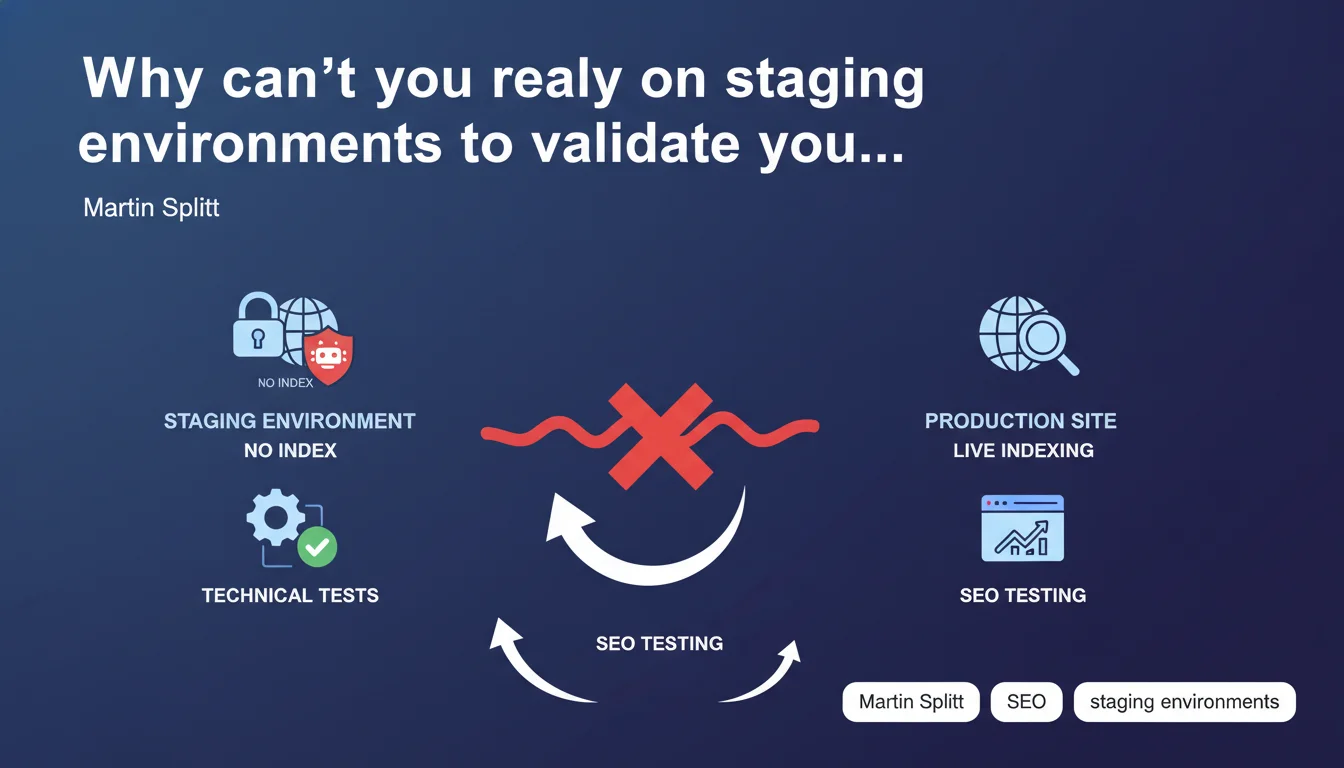

Martin Splitt argues that staging sites don't allow effective SEO testing because site owners intentionally block them from search engine indexing. Real SEO testing must happen in production, where Google can actually crawl and index your changes.

What you need to understand

Why do staging environments create problems for SEO?

A staging site is by definition isolated from search engines. A disallow-all robots.txt, meta noindex, HTTP authentication: several barriers are put in place to prevent accidental indexing. The problem? These same barriers prevent you from measuring the real impact of SEO changes.

Google cannot evaluate your content, your actual load times, your internal linking, or the quality of your tags if its crawler never has access to these pages. You're testing in a vacuum.
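To make the barriers above concrete, here is a minimal sketch that lists which of them apply to a given response. The function name `indexing_barriers`, the host `staging.example.com`, and the choice of inputs are assumptions made for illustration; the sketch works on data you have already fetched and does no network access. Note that Python's `urllib.robotparser` applies rules in file order, which can differ from Google's own rule matching on more complex files.

```python
import re
from urllib.robotparser import RobotFileParser


def indexing_barriers(robots_txt, url, status, headers, html, agent="Googlebot"):
    """Return the list of reasons a crawler could not crawl or index `url`.

    All arguments are assumed to be collected beforehand:
    the robots.txt body, the HTTP status, a dict of response headers
    (keyed as "X-Robots-Tag"), and the HTML of the page.
    """
    barriers = []

    # 1. robots.txt: is the user agent allowed to fetch this URL at all?
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    if not parser.can_fetch(agent, url):
        barriers.append("robots.txt disallow")

    # 2. HTTP authentication or a forbidden response.
    if status in (401, 403):
        barriers.append("HTTP authentication")

    # 3. noindex sent as an HTTP header.
    if "noindex" in headers.get("X-Robots-Tag", "").lower():
        barriers.append("X-Robots-Tag noindex")

    # 4. noindex in a <meta name="robots"> tag.
    meta = re.search(r'<meta[^>]+name=["\']robots["\'][^>]*>', html, re.I)
    if meta and "noindex" in meta.group(0).lower():
        barriers.append("meta noindex")

    return barriers


staging = indexing_barriers(
    robots_txt="User-agent: *\nDisallow: /\n",
    url="https://staging.example.com/page",
    status=401,
    headers={},
    html='<meta name="robots" content="noindex">',
)
print(staging)
```

An empty list means none of these barriers was detected, which is the state a page needs to be in before Google's behavior can be observed at all.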

What's the difference from standard technical tests?

Functional tests — form validation, user journeys, browser compatibility — work perfectly in staging. You control all the variables.

SEO is different. Indexation is an external process you don't fully control. You can't simulate how Google will interpret your modifications, what priority it will give them, or what impact they'll have on your rankings without real exposure to crawling.

How does Google justify this position?

The logic is straightforward: if you block access to robots, you're not testing anything on the SEO side. At best, you're verifying that your code doesn't crash, but not that your optimizations actually work.

To measure SEO impact — ranking evolution, crawler behavior, indexation speed — Google needs to access the site, crawl it regularly, and index the modifications. This only happens in production.

- Staging sites intentionally block search engine access

- It's impossible to measure the real impact of an optimization without crawling and indexation

- SEO testing requires actual exposure to Google's behavior

- Production is the only environment where SEO hypotheses can be validated

SEO Expert opinion

Is this statement really applicable to all cases?

In principle, Martin Splitt is right. But in reality, nobody deploys major SEO modifications without prior validation. You're not going to completely overhaul your URL structure or modify your Title tag templates directly in production without first checking that the code works.

What Splitt doesn't say is that you can — and should — test technically in staging (does the code generate the correct tags? Do redirects work?), then validate the SEO impact in production, ideally on a subset of pages or via a gradual rollout. These are two distinct steps.
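The first of these two steps, checking that the code generates the correct tags, can be automated without any help from Google. The sketch below is one possible approach using only Python's standard library; the class name `SeoTagParser`, the helper `extract_seo_tags`, and the sample URL are assumptions for illustration, not part of any tool named in this article.

```python
from html.parser import HTMLParser


class SeoTagParser(HTMLParser):
    """Collect the title, canonical URL and meta robots value of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.canonical = None
        self.robots = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.robots = attrs.get("content")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Only text inside <title> is accumulated.
        if self._in_title:
            self.title += data


def extract_seo_tags(html):
    """Return the SEO-relevant tags found in an HTML document."""
    parser = SeoTagParser()
    parser.feed(html)
    parser.close()
    return {
        "title": parser.title.strip(),
        "canonical": parser.canonical,
        "robots": parser.robots,
    }


page = (
    "<html><head><title> Blue widgets | Shop </title>"
    '<link rel="canonical" href="https://www.example.com/widgets/blue">'
    '<meta name="robots" content="index, follow">'
    "</head><body></body></html>"
)
tags = extract_seo_tags(page)
print(tags)
```

Comparing this dictionary against the values a template is expected to produce confirms the technical step only. Whether the new title or canonical actually helps rankings is the second step, and that still requires production.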

What concrete alternatives exist to limit risks?

Saying "test in prod" without nuance is risky for revenue-generating sites. Concretely, you can isolate a subset of pages to test modifications: a specific category, a language, a less critical template.

SEO A/B tests, segmented by URL (groups of comparable pages), let you measure real impact without exposing 100% of your traffic. Google has even validated this approach, as long as you're not cloaking, which rules out serving Googlebot different content based on its user-agent. [To verify]: Splitt's statement doesn't mention this possibility, even though it's used by many high-traffic sites.
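One common way to read the result of a URL-segmented test is to compare the change on the modified pages with the change on an untouched control group over the same period, so that seasonality and algorithm updates cancel out. The sketch below illustrates that arithmetic; the function names and the click figures are hypothetical, and it makes no claim about statistical significance.

```python
def relative_change(before, after):
    """Relative change between two totals, e.g. 0.10 for +10%."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def ab_uplift(control_before, control_after, variant_before, variant_after):
    """Variant change minus control change over the same period."""
    return relative_change(variant_before, variant_after) - relative_change(
        control_before, control_after
    )


# Hypothetical organic clicks: control grew 10%, variant grew 21%.
uplift = ab_uplift(1000, 1100, 1000, 1210)
print(f"{uplift:.2%}")
```

With these figures the variant outperforms control by roughly 11 percentage points. Without the control group, the whole 21% would have been wrongly attributed to the change.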

What does Google say about gradual rollouts?

Google regularly recommends testing modifications on a sample before a large-scale rollout. Let's be honest: this statement partially contradicts these recommendations, since it suggests you can only test in production.

In practice, high-traffic sites use hybrid strategies: technical validation in staging, gradual production deployment with tight monitoring. This is what works. Splitt's position remains theoretically correct, but operationally incomplete.

Practical impact and recommendations

What should you concretely do before deploying an SEO modification?

Validate in staging everything that doesn't require Google's intervention: correct tag generation, load times, HTML structure, redirects, internal linking. Use crawl tools like Screaming Frog or OnCrawl to simulate robot behavior.
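Redirects are a good example of something that can be fully validated before Google is involved. Assuming the redirect rules can be exported as a simple source-to-target mapping, a sketch like the following flags loops and overly long chains. The function name `resolve_redirect` and the limit of 5 hops are assumptions chosen for illustration, not a documented threshold.

```python
def resolve_redirect(start, redirects, max_hops=5):
    """Follow `start` through the redirect map and return (final_url, hops).

    Raises ValueError on a redirect loop or when the chain is longer
    than `max_hops`.
    """
    seen = [start]
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen:
            raise ValueError("redirect loop: " + " -> ".join(seen + [current]))
        seen.append(current)
        if len(seen) - 1 > max_hops:
            raise ValueError(f"chain longer than {max_hops} hops from {start}")
    return current, len(seen) - 1


# Hypothetical redirect map exported from the staging configuration.
redirects = {"/old": "/interim", "/interim": "/new"}
final_url, hops = resolve_redirect("/old", redirects)
print(final_url, hops)
```

A result with more than one hop, as in this example, is usually a candidate for pointing the old URL directly at the final target.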

Once this technical validation is complete, prepare a gradual production rollout. Start with a sample of pages — 5 to 10% of your site — and monitor metrics for 2 to 4 weeks before generalizing.
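Picking the sample can be done deterministically, so that a given URL stays in or out of the rollout across deployments. The sketch below hashes each URL into one of 100 buckets; the function name `in_rollout` and the `salt` value are hypothetical, and in practice you may prefer to sample by template or category instead.

```python
import hashlib


def in_rollout(url, percent, salt="seo-rollout-1"):
    """True when `url` falls in the first `percent` of 100 stable buckets."""
    digest = hashlib.sha256((salt + url).encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


# Hypothetical URL inventory.
urls = [f"/product/{i}" for i in range(10000)]
sample = [u for u in urls if in_rollout(u, 10)]
print(len(sample) / len(urls))
```

Because the bucket depends only on the salt and the URL, raising `percent` from 5 to 10 keeps every page that was already in the sample and adds new ones, which keeps the monitoring history of the first pages usable.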

What mistakes should you absolutely avoid?

Never deploy a complete redesign without a testing phase in production on a limited scope. SEO disasters often happen due to overconfidence in staging tests.

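Avoid blocking your staging completely with robots.txt if you occasionally want to check how a crawler fetches and renders a page. A crawler that is disallowed in robots.txt never requests the page, so it never sees its meta noindex either. Combining meta noindex with a targeted allow in robots.txt lets you finely control what is crawlable while keeping those pages out of the index.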

Don't confuse "technical test" with "SEO validation". The first is done in staging, the second requires real exposure to Google's crawler. These are two distinct phases of the same process.

How do you monitor the impact of a production deployment?

Check Google Search Console regularly, both its reports and its email notifications, to quickly detect any indexing drops or error spikes. Monitor your rankings on a sample of strategic keywords using a ranking tool.

Compare crawler behavior before/after: crawl frequency, pages crawled per day, crawl budget consumed. A sudden change may signal a problem.
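If you have access to server logs, crawl frequency can be approximated by counting requests whose user agent contains "Googlebot" per day. The sketch below assumes the common combined log format, where the user agent is the last quoted field; the function name and the sample lines are illustrative. User agents can be spoofed, so a real audit would also verify the requesting IP addresses.

```python
import re
from collections import Counter

# Captures the date part of the timestamp and the last quoted field (user agent).
LOG_LINE = re.compile(r'\[(\d{2}/\w{3}/\d{4}):[^\]]*\].*"([^"]*)"$')


def googlebot_hits_per_day(lines):
    """Count log lines per day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group(2):
            hits[match.group(1)] += 1
    return hits


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
log = [
    f'66.249.66.1 - - [10/Jul/2025:06:25:24 +0000] "GET /a HTTP/1.1" 200 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jul/2025:07:01:02 +0000] "GET /b HTTP/1.1" 200 734 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [10/Jul/2025:07:05:00 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0"',
    f'66.249.66.1 - - [11/Jul/2025:06:30:00 +0000] "GET /a HTTP/1.1" 301 0 "-" "{GOOGLEBOT}"',
]
hits = googlebot_hits_per_day(log)
print(dict(hits))
```

Running the same count over the weeks before and after a deployment gives the before/after comparison described above.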

- Technically validate all modifications in staging (tags, redirects, structure)

- Crawl staging with a tool to detect errors before deployment

- Deploy to production on a sample of pages (5-10% of your site)

- Monitor Search Console, rankings and crawler behavior for 2-4 weeks

- Generalize only if metrics remain stable or improve

- Prepare for quick rollback in case of unexpected drops

❓ Frequently Asked Questions

Can you still use a staging environment to validate certain SEO aspects?

How do you test SEO changes in production without risking a disaster?

Are SEO A/B tests a valid alternative to staging?

Which tools should you use to technically validate a staging site before deployment?

Should you abandon staging environments for SEO entirely?

Source: Google Search Central video · published on 10/07/2025
