Official statement

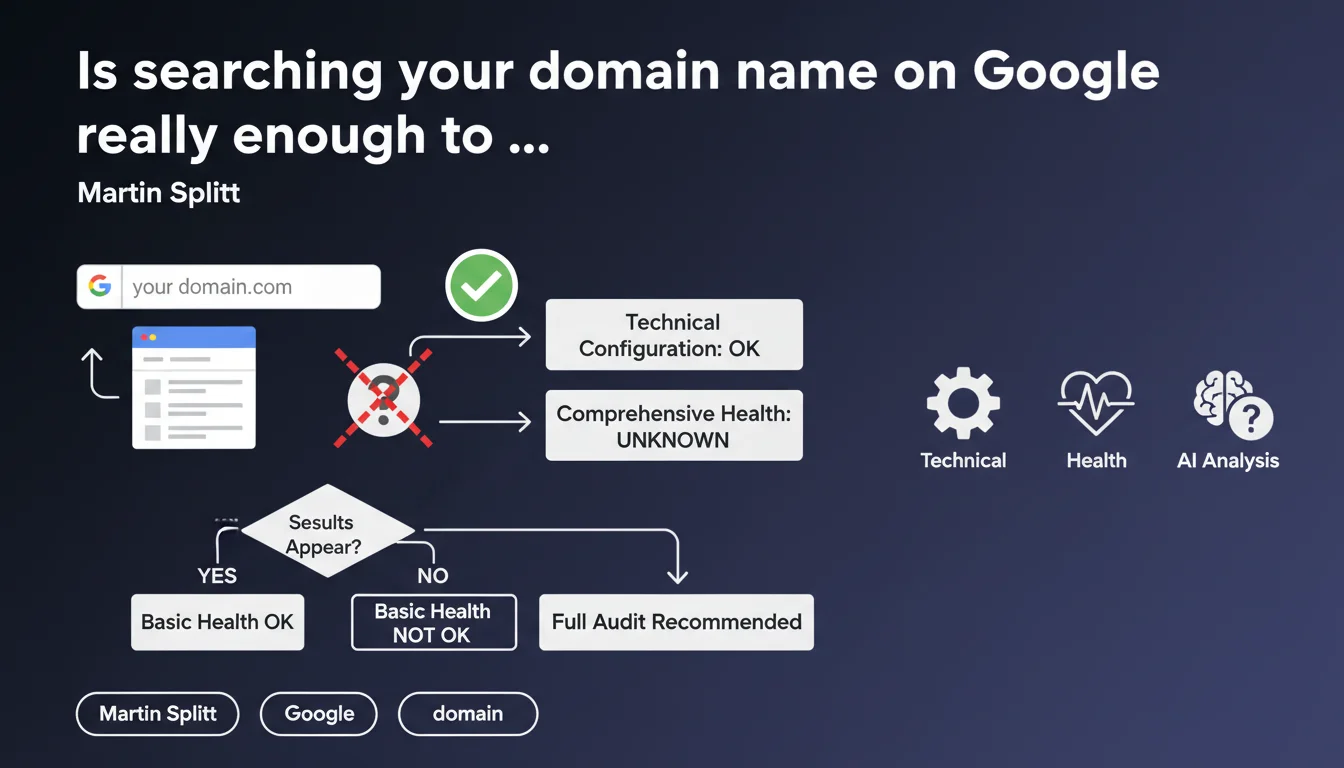

Martin Splitt suggests searching a site's name and domain on Google to verify that its technical configuration is correct. If results appear, indexation is probably working. A simple method, but one that deserves nuance for serious SEO audits.

What you need to understand

Is this verification method really reliable for a technical diagnosis?

Splitt's statement proposes a basic indexation test: type the site's name or domain into Google and see if anything comes up. The underlying idea? If Google displays pages, it means the engine can crawl, index, and return content.

This is an "entry-level" approach that makes it possible to detect major blockages — robots.txt forbidding everything, global noindex, inaccessible server. But it says nothing about indexation quality, the number of pages actually indexed as relevant, or finer structural issues.

What does "technically correct" mean in this context?

Splitt deliberately uses cautious wording: "probably acceptable". Not "perfect", not "optimal", just "acceptable". That means we're in a superficial validation, not a deep technical audit.

For a site that's just starting out or has just been launched, it's enough to confirm that Google isn't blocked. For an established site with SEO performance objectives, this method is no substitute for Search Console, log analysis, or simulated crawls.

What are the risks of relying solely on this test?

A site can appear in a domain name search and still have hundreds of non-indexed orphaned pages, canonicalization issues, duplicate content, or 4xx/5xx errors across entire sections. This search will detect none of these problems.

It can also create a false sense of security. Because the homepage or a few institutional pages come up, you might think everything is fine when strategic pages (categories, product sheets, blog articles) aren't indexed at all.

- Domain name search confirms only that Google can technically index the site

- It validates neither the quality of indexation, nor the coverage rate of important pages

- It does not replace analysis via Search Console (coverage report, sitemap, crawl errors)

- Useful as a quick first filter, insufficient for a complete diagnosis

SEO Expert opinion

Is this statement consistent with field practices?

Yes, provided you don't read more into it than it claims. Splitt proposes a common-sense test, not an audit method. We do observe that completely blocked sites (503 server error, overly restrictive robots.txt, global noindex) never show up in brand-name searches.

However, technically dysfunctional sites can appear in brand searches while having deep structural problems — broken pagination, poorly managed facets, JavaScript blocking content. The correlation "I see results = everything is fine" is misleading.

What nuances should be added to this recommendation?

First, this search in no way replaces the site: operator, which gives a rough estimate of how many pages are indexed. Second, it says nothing about the relevance of indexed pages or their rankings on strategic queries.

A concrete example? An e-commerce site with 10,000 product sheets can easily appear in brand-name search on its homepage and a few categories, while 95% of its products are never crawled due to failing internal linking or poorly distributed crawl budget. [To verify]: Splitt doesn't specify whether this search should show multiple pages or just one.

In what cases is this test insufficient or even misleading?

For sites with more than 100 pages, this method only serves to rule out obvious critical blockages. It detects neither crawl depth issues, nor soft 404 errors, nor orphaned pages, nor poorly indexed conversion funnels.
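To make "crawl depth" and "orphaned pages" concrete, here is a minimal Python sketch. It assumes you already have an internal link graph (for example, exported from a crawler) and a list of URLs you expect to exist (for example, from a sitemap). The function names, the `max_depth=3` threshold, and the input shapes are illustrative assumptions, not a standard.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search from the start page.

    `links` maps each URL to the list of URLs it links to.
    Returns {url: number of clicks needed to reach it from `start`}.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_structure(links, known_urls, start="/", max_depth=3):
    """Return (orphans, too_deep).

    orphans:  known URLs that no chain of internal links reaches from `start`.
    too_deep: reachable URLs more than `max_depth` clicks away
              (the threshold is an arbitrary assumption; tune it per site).
    """
    depths = click_depths(links, start)
    orphans = sorted(set(known_urls) - set(depths))
    too_deep = sorted(url for url, depth in depths.items() if depth > max_depth)
    return orphans, too_deep
```

A brand-name search would pass on a site where both lists are long, which is exactly the blind spot described above.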

It can also give a temporary false negative: a new site may take several days to appear in brand-name search, even if everything is technically correct. Conversely, a site under a manual action (a manual penalty) can still appear in brand searches while being invisible for every other query.

Practical impact and recommendations

What should you concretely do to verify indexation correctly?

Use the domain name search as a quick first filter, but don't stop there. If nothing comes up, immediately check the robots.txt file, the homepage's HTTP status code, and the presence of noindex tags in the <head>.
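The three checks above can be sketched in Python using only the standard library. This is an illustration under assumptions: the function `find_blockers` and its inputs are hypothetical, and it expects you to have already fetched the robots.txt content, the homepage status code, and the homepage HTML by whatever means you prefer. It only looks at meta tags, so a noindex sent through an HTTP header would not be caught here.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key.lower(): (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr.get("content", "").lower())


def find_blockers(url, status_code, robots_txt, html):
    """Return a list of reasons why Google may be unable to index `url`."""
    blockers = []

    # 1. robots.txt: is Googlebot allowed to fetch this URL?
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    if not rules.can_fetch("Googlebot", url):
        blockers.append("robots.txt disallows Googlebot")

    # 2. HTTP status code: anything other than 200 deserves a look.
    if status_code != 200:
        blockers.append(f"homepage returns HTTP {status_code}")

    # 3. noindex directive in a robots meta tag.
    parser = RobotsMetaParser()
    parser.feed(html)
    if any("noindex" in directive for directive in parser.directives):
        blockers.append("noindex meta tag present")

    return blockers
```

An empty list means none of these three blockers was found; it does not mean the site is well indexed.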

If results appear, move to finer analysis: site: operator to estimate the volume of indexed pages, coverage report in Search Console, server logs to see if Googlebot regularly visits strategic sections.

What mistakes should you avoid when applying this method?

Never conclude "the site is technically OK" simply because the homepage shows up in brand-name search. This test validates neither internal linking quality, nor URL structure, nor parameter management, nor crawl budget.

Another pitfall: confusing "indexed" with "well-ranked". A site can be perfectly indexed and never rank on its target queries because the content is weak, popularity signals are nonexistent, or competition is too strong. Indexation is a necessary but not sufficient condition.

How should you structure an efficient indexation verification workflow?

Start with the domain name search as a smoke test. If it passes, follow with a site:example.com search to see approximate indexed volume. Then cross-reference with Search Console data (coverage report, submitted vs. indexed sitemaps).
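The "submitted vs. indexed" cross-reference can be sketched as a simple set comparison. The example below assumes you have the sitemap XML as a string and a list of URLs known to be indexed (for instance, from a Search Console export; the export format is not modeled here). The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Extract the set of <loc> URLs from a standard XML sitemap string."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def indexation_gap(sitemap_xml, indexed_urls):
    """Return (coverage_rate, missing_urls).

    coverage_rate: share of submitted URLs that are in `indexed_urls`.
    missing_urls:  submitted URLs that are not indexed, sorted.
    """
    submitted = sitemap_urls(sitemap_xml)
    indexed = submitted & set(indexed_urls)
    missing = sorted(submitted - indexed)
    rate = len(indexed) / len(submitted) if submitted else 0.0
    return rate, missing
```

The list of missing URLs is usually more useful than the rate itself, since it shows which strategic pages to inspect individually.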

For complex sites, add a simulated crawl (Screaming Frog, Oncrawl, Botify) to detect orphaned pages, excessive depth, redirect chains, and duplicate content. Analyze server logs to see whether Googlebot crawls strategic sections, and how often.
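As a rough illustration of the log analysis step, the sketch below counts Googlebot requests per top-level section. It assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only. That string can be spoofed, so a serious audit would also verify the requesting IPs. The regular expression and function name are assumptions to adapt to your own server's log layout.

```python
import re
from collections import Counter

# Combined Log Format:
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(log_lines):
    """Count requests whose user-agent mentions Googlebot, grouped by first path segment."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # unparsable line or not Googlebot
        path = match["path"].split("?", 1)[0]  # drop the query string
        first_segment = path.strip("/").split("/", 1)[0]
        hits["/" + first_segment] += 1  # the homepage is counted under "/"
    return hits
```

Sections that matter commercially but show few or no hits over a representative period are the ones to investigate first.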

- Search for the domain name on Google: first filter, not a complete validation

- Use site:example.com to estimate the volume of indexed pages

- Consult the Search Console coverage report to detect errors and exclusions

- Verify that strategic pages (categories, flagship products) are individually indexed

- Crawl the site with a dedicated tool to identify structural issues invisible in manual search

- Analyze server logs to validate that Googlebot regularly accesses important sections

❓ Frequently Asked Questions

Is a domain name search enough to validate the indexation of an e-commerce site?

If my site doesn't appear in a domain name search, what should I check first?

How long does it take for a new site to appear in a domain name search?

Is the site: operator more reliable than a domain name search?

Can a site show up in a domain name search and still have serious indexation problems?

🎥 From the same video 16

Other SEO insights extracted from this same Google Search Central video · published on 10/07/2025
