Official statement

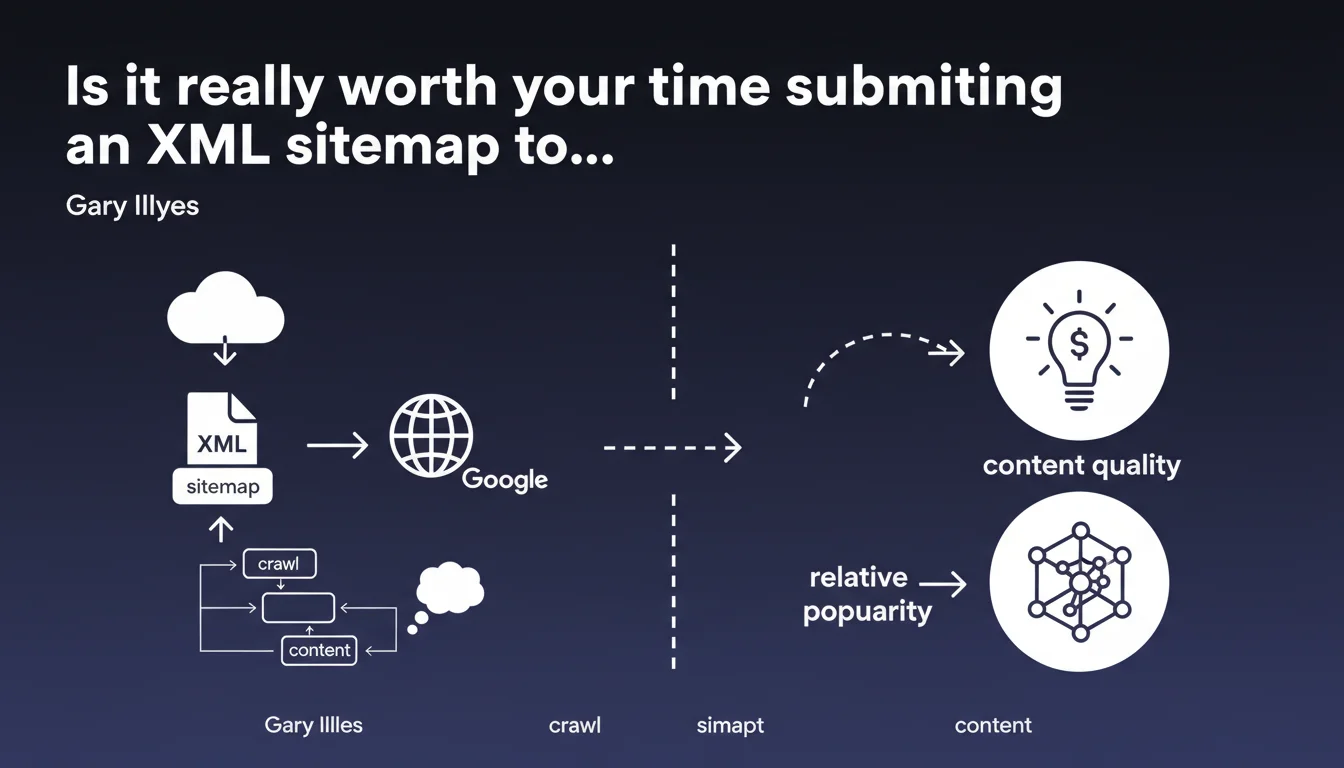

Submitting a sitemap guarantees absolutely nothing: neither crawl nor indexing. Google will decide based on content quality and relative popularity. The sitemap is merely a location indicator, not a guaranteed pass.

What you need to understand

What is the real purpose of a sitemap if Google makes no promises?

An XML sitemap essentially serves as a roadmap: it tells Googlebot where to find your URLs, especially those buried deep in your site structure or poorly linked internally. But it absolutely does not force the search engine to crawl or index them.

Google treats this file as one input among many (links discovered while crawling, crawl history, other internal signals). The sitemap does not bypass crawl budget limits, quality criteria, or popularity signals. It facilitates discovery, nothing more.
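To make the "location indicator" idea concrete, here is a minimal sketch that reads a sitemap and returns the URLs it lists. The sample document and the `example.com` URLs are illustrative assumptions; the only thing taken from the sitemap protocol is the namespace and the `<url>`/`<loc>` structure.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content, for illustration only.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/guides/deep-page</loc></url>
</urlset>"""


def extract_urls(sitemap_xml: str) -> list[str]:
    """Return the <loc> values listed in a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


if __name__ == "__main__":
    for url in extract_urls(SAMPLE_SITEMAP):
        print(url)
```

The file contains nothing but addresses: no instruction in it can oblige a crawler to fetch or index any of them.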

What truly determines crawl and indexing?

Two main factors: the intrinsic quality of your content and its relative popularity on the web. Concretely, Google evaluates relevance, originality, expertise, and compares your page to what already exists on the same topic.

Popularity — measured by backlinks, engagement signals, domain authority — also plays a key role. An orphaned URL with no incoming links and no quality signals can remain in the sitemap without ever being crawled.

Can a sitemap harm your site if misused?

Absolutely. Submitting thousands of low-quality URLs (duplicate content, thin pages, pages without value) pollutes the signal you send to Google. The search engine may interpret this as a lack of editorial judgment and reduce your site's overall crawl frequency.

A bloated sitemap generates noise. Better to have a file with 200 strategic URLs than 20,000 mediocre pages.

- The sitemap guarantees neither crawl nor indexing, only URL discovery

- Content quality and relative popularity determine Google's final decision

- A poorly designed sitemap can send negative signals and harm your crawl budget

- Submitting a sitemap remains useful for accelerating discovery, but it does not replace solid internal linking

SEO Expert opinion

Does this statement contradict what SEO professionals observe in the field?

Not really. Experienced SEOs have known for years that the sitemap is not magic. We regularly observe URLs present in the sitemap that are never crawled — or crawled only months later — if they lack backlinks or editorial depth.

However, Google remains vague about the quality and popularity thresholds that actually trigger crawling. No precise metrics are communicated. [To verify]: Does content freshness or update frequency influence crawl priority beyond popularity? No official data exists.

What nuances should be added to this statement?

Gary Illyes is intentionally oversimplifying. In practice, certain site types benefit more from a sitemap: news sites (with Google News sitemaps), e-commerce platforms with frequently updated catalogs, or sites with deep architecture where internal linking is complex.

For these sites, the sitemap genuinely does accelerate discovery — even though final indexing remains conditional on quality. So we shouldn't throw the baby out with the bathwater.

In what cases does this rule apply most severely?

On sites with low authority or new domains. A site without crawl history and without backlinks can submit a sitemap of 10,000 URLs and see only a few dozen crawled in the first months.

Conversely, an established domain with good authority will see its URLs discovered and crawled more quickly — with or without a sitemap. Relative popularity amplifies or diminishes the sitemap's effect.

Practical impact and recommendations

What should you actually do with your sitemap?

Adopt a selective approach: submit only quality URLs with original, useful content. Exclude duplicate pages, thin content, facet filters with no added value, pointless archives.

Keep <lastmod> accurate: Google uses it only when the value is consistently and verifiably correct. Do not spend time tuning <priority> or <changefreq>, because Google ignores both. Update your sitemap whenever important URLs are added or modified.
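As an illustration, a sitemap limited to `<loc>` and `<lastmod>` can be generated with the standard library. This is a sketch under assumptions: the `build_sitemap` helper and its input mapping (URL to last real modification date) are hypothetical and would come from your CMS or build pipeline.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, date]) -> str:
    """Serialize a URL -> last-modified mapping as a sitemap string.

    Only <loc> and <lastmod> are written; the date should reflect a real
    content change, not the time the file was generated.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, modified in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # W3C date format (YYYY-MM-DD), as accepted by the sitemap protocol.
        ET.SubElement(url, "lastmod").text = modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap({
        "https://example.com/blog/post-1": date(2023, 6, 1),
        "https://example.com/products/sku-9": date(2023, 5, 20),
    }))
```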

- Audit your current sitemap: remove all low-quality URLs or those without clear SEO objectives

- Segment your sitemaps by content type (blog, products, categories) to facilitate tracking

- Check in Search Console which sitemap URLs are crawled, indexed, or ignored

- Strengthen internal linking for strategic URLs: do not rely on the sitemap alone

- Avoid submitting thousands of automatically generated URLs without editorial value

- Monitor actual indexation rates and cross-reference with server logs to detect URLs crawled but not indexed
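The segmentation step in the checklist above could be sketched as follows. The path prefixes (`/blog/`, `/products/`, `/categories/`) are assumptions about the site's URL structure; adapt them to your own.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical mapping from path prefix to sitemap segment name.
SEGMENTS = {
    "/blog/": "blog",
    "/products/": "products",
    "/categories/": "categories",
}


def segment_urls(urls: list[str]) -> dict[str, list[str]]:
    """Group URLs by content type so each group can get its own sitemap file."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        path = urlparse(url).path
        name = next(
            (label for prefix, label in SEGMENTS.items() if path.startswith(prefix)),
            "other",  # anything that matches no known prefix
        )
        groups[name].append(url)
    return dict(groups)
```

With one file per segment, the Sitemaps report in Search Console shows submitted and indexed counts per content type, which makes a weak segment easier to spot.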

What critical mistakes should you absolutely avoid?

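Never submit a sitemap containing URLs with noindex tags, 404 errors, or 301 redirects. These entries contradict the purpose of the file: you ask Google to discover pages that you also exclude, that no longer exist, or that live elsewhere. They waste crawl requests, show up as errors or exclusions in Search Console, and may reduce how much Google relies on the rest of your file.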
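A minimal eligibility filter for this rule might look like the sketch below. It assumes you have already fetched each URL without following redirects and recorded the status code and robots directives; the `PageCheck` structure is hypothetical and no network call is made here.

```python
from dataclasses import dataclass


@dataclass
class PageCheck:
    """Result of fetching one URL (hypothetical structure for this sketch)."""
    url: str
    status: int                  # HTTP status, redirects not followed
    robots_directives: str = ""  # meta robots / X-Robots-Tag content, comma-separated


def sitemap_eligible(check: PageCheck) -> bool:
    """Keep only URLs that answer 200 and are not excluded from indexing."""
    if check.status != 200:  # drops 404s, 301/302 redirects, server errors
        return False
    directives = {d.strip().lower() for d in check.robots_directives.split(",")}
    # "none" is shorthand for "noindex, nofollow".
    return not directives & {"noindex", "none"}


def filter_sitemap(checks: list[PageCheck]) -> list[str]:
    """Return the URLs that can stay in the sitemap."""
    return [c.url for c in checks if sitemap_eligible(c)]
```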

Also avoid creating massive sitemaps (tens of thousands of URLs) if your crawl budget is limited. You risk diluting Googlebot's attention on secondary pages at the expense of strategic ones.

How do you verify that your sitemap strategy is effective?

Cross-reference Search Console data (Sitemaps section) with your server logs. Identify submitted URLs that were never crawled: this is a clear signal that they lack quality or popularity.
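The log side of this cross-check can be sketched as follows. The assumptions are stated loudly: access logs are in the common combined format, Googlebot is identified by its user-agent string only (which can be spoofed, so a production check would also verify the requester), and URLs are compared by path with query strings ignored.

```python
import re
from urllib.parse import urlparse

# Matches the request part of a combined-format log line, e.g. "GET /path HTTP/1.1".
REQUEST_RE = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*"')

# Hypothetical log excerpt, for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [07/Jun/2023:10:00:00 +0000] "GET /blog/a HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [07/Jun/2023:10:05:00 +0000] "GET /blog/b HTTP/1.1" 200 734 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]


def crawled_paths(log_lines: list[str]) -> set[str]:
    """Paths requested by a client whose user agent mentions Googlebot."""
    paths = set()
    for line in log_lines:
        if "Googlebot" not in line:
            continue
        match = REQUEST_RE.search(line)
        if match:
            paths.add(match.group("path").split("?", 1)[0])
    return paths


def never_crawled(sitemap_urls: list[str], log_lines: list[str]) -> list[str]:
    """Sitemap URLs with no Googlebot request in the given logs."""
    seen = crawled_paths(log_lines)
    return [u for u in sitemap_urls if (urlparse(u).path or "/") not in seen]
```

The result is only as complete as the log window you feed in, so a URL listed here may simply have been crawled before the oldest line.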

Also analyze the delay between submitting a new URL and its first crawl. If this delay is consistently long (several weeks), strengthen your internal linking and backlink strategy rather than relying on the sitemap.
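The delay analysis reduces to simple date arithmetic. In this sketch the submission dates and first-crawl dates are assumed to come from your own records and server logs, and the 21-day threshold is an arbitrary stand-in for "several weeks".

```python
from datetime import date
from typing import Optional


def discovery_delays(
    submitted: dict[str, date],
    first_crawled: dict[str, date],
) -> dict[str, Optional[int]]:
    """Days between sitemap submission and first crawl; None if never crawled."""
    delays: dict[str, Optional[int]] = {}
    for url, submitted_on in submitted.items():
        crawled_on = first_crawled.get(url)
        delays[url] = (crawled_on - submitted_on).days if crawled_on else None
    return delays


def slow_urls(delays: dict[str, Optional[int]], threshold_days: int = 21) -> list[str]:
    """URLs still uncrawled, or crawled later than the (assumed) threshold."""
    return [
        url for url, days in delays.items()
        if days is None or days > threshold_days
    ]
```

URLs returned by `slow_urls` are the candidates for stronger internal linking or link acquisition.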

❓ Frequently Asked Questions

Does a sitemap really speed up the indexing of new pages?

Should I remove non-indexed URLs from my sitemap?

Does the sitemap influence crawl budget?

Should you submit a sitemap for a small, well-linked site?

Does Google honor the priority and lastmod tags in the sitemap?

🎥 From the same video 18

Other SEO insights extracted from this same Google Search Central video · published on 07/06/2023
