Official statement

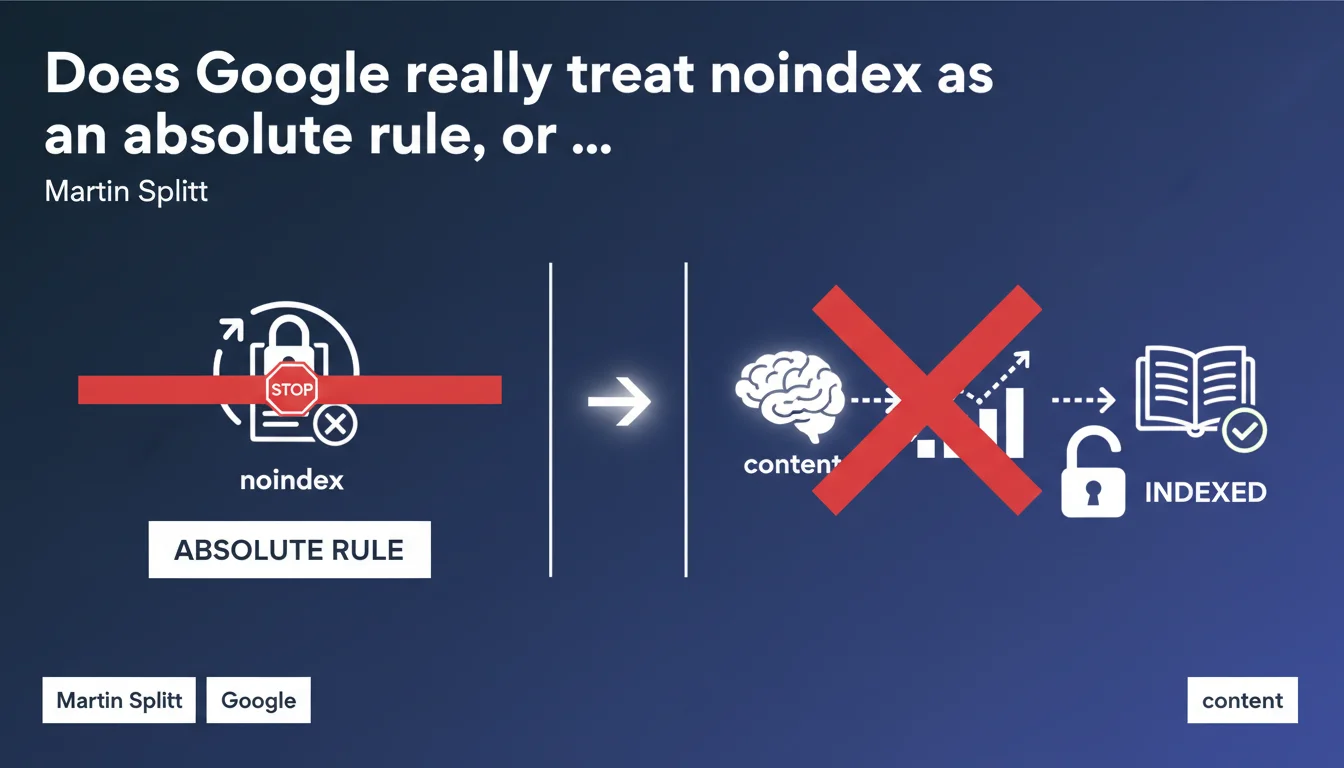

Google strictly respects the noindex tag without exception: a page with this directive will never be indexed, even if its content seems relevant or useful. This is a non-negotiable technical instruction, not a simple recommendation that the algorithm could bypass depending on context.

What you need to understand

Why does Google insist so much on the strict nature of noindex?

Martin Splitt's statement puts an end to a persistent belief: Google makes no exceptions to the noindex directive. Some SEOs thought that pages with exceptionally useful content could still be indexed — that's false.

This rule follows a logic of webmaster control. Noindex is an explicit instruction from the site owner, not a weak signal that the algorithm can ignore when convenient. Google treats it as binding, in the same way it honors a robots.txt rule that blocks crawling.

What's the difference between noindex and other indexation directives?

Unlike canonical or URL parameters that Google can sometimes interpret differently, noindex is not subject to negotiation. It's binary: present or absent.

Other signals like duplicate content or low quality can influence indexation, but remain algorithmic recommendations. Noindex is a direct command that bypasses the entire evaluation process.

In which cases does this rule change the game for practitioners?

For SEOs managing seasonal or temporary content with conditional noindex, this confirmation removes any gray area. There's no "maybe Google will index it anyway if it's really good."

Strategies for soft launches or A/B testing with noindex need to be rethought: a noindexed page is invisible, period. No algorithmic miracle will come to index it despite the directive.

- Noindex is an absolute technical instruction, not a recommendation

- Google makes no exceptions even for content deemed useful or high quality

- This directive bypasses any algorithmic evaluation of content

- No difference in treatment depending on site type or domain authority

- Noindex should be used carefully as it completely removes a page from the index

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, completely. In practice, we never observe cases where a page with correctly implemented noindex appears in search results. Zero documented exceptions.

What varies is how long detection takes. Google may need several weeks to notice a newly added noindex, especially on pages that are rarely crawled. Once Google has recrawled the page and seen the directive, the page drops out of the index.

What implementation errors create confusion?

The main pitfall is a noindex injected by asynchronously loaded JavaScript. If Googlebot cannot execute the script, or if the tag is only added after the initial HTML has been processed, Google may never see the directive.

Another source of confusion: noindex in an X-Robots-Tag HTTP header versus the meta tag. Both work, and if the two contradict each other, Google applies the more restrictive directive, so a noindex in either place is enough to keep the page out of the index. Some SEOs forget to check both locations during audits.
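To make the "check both locations" point concrete, here is a minimal sketch of an audit helper that looks for noindex in both the response headers and the HTML. The function and class names are hypothetical, the prefix handling only covers an optional `googlebot:` user-agent prefix, and it assumes a noindex found in either place is enough to block indexing.

```python
from html.parser import HTMLParser

BLOCKING = {"noindex", "none"}  # "none" is shorthand for "noindex, nofollow"


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def header_directives(headers):
    """Extract directives from X-Robots-Tag values given as (name, value) pairs."""
    found = []
    for name, value in headers:
        if name.lower() != "x-robots-tag":
            continue
        value = value.strip().lower()
        # Drop an optional "googlebot:" prefix; values aimed at other bots stay
        # prefixed and therefore never match a blocking directive below.
        if value.startswith("googlebot:"):
            value = value[len("googlebot:"):]
        found += [d.strip() for d in value.split(",")]
    return found


def is_noindexed(headers, html):
    """True if either the HTTP headers or the HTML carry a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    directives = set(parser.directives) | set(header_directives(headers))
    return bool(directives & BLOCKING)
```

Feeding it the headers and body of each crawled URL gives one answer per page regardless of where the directive lives, which avoids auditing the meta tag and forgetting the header.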

Redirects also add complexity. A page A with noindex that redirects to B doesn't prevent B from being indexed — obvious in theory, but a source of errors in practice.

Should you always trust official statements?

This one is among the most reliable because it concerns a simple, observable technical mechanism. No opaque algorithm, no mysterious weighting — just a binary instruction.

However, Google sometimes remains vague about edge cases. What happens if a noindex disappears and then reappears quickly? How long does a page remain cached after noindex removal? These nuances are not documented publicly, so verify them in your own tests if you manage complex implementations.

Practical impact and recommendations

What should you verify immediately on your site?

Start with a comprehensive audit of noindex tags via your preferred crawler — Screaming Frog, Oncrawl, or even Search Console if the volume is manageable. Identify any strategically important pages accidentally noindexed.

Also check X-Robots-Tag HTTP headers, often forgotten because they're invisible in HTML source code. A server directive can block indexation without anyone noticing for months.

Control paginated pages, filters, and facets: these areas are often noindexed by default in e-commerce CMS platforms. Make sure this configuration still matches your current strategy.

What errors should you absolutely avoid?

Never combine a noindex directive with a robots.txt disallow rule for the same URL. A disallowed URL is not crawled, so Google never sees the noindex, and the page can stay in the index with an empty description.
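A quick way to spot this conflict is to cross-check the list of noindexed URLs from a crawl against robots.txt. The sketch below uses Python's standard `urllib.robotparser`. The function name is hypothetical, and the standard-library parser only approximates Google's matching (it does not handle `*` or `$` wildcards in paths), so treat the result as a first pass rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def find_blocked_noindex(robots_txt, noindexed_urls, user_agent="Googlebot"):
    """Return noindexed URLs that robots.txt also blocks from crawling.

    For these URLs the crawler cannot fetch the page, so the noindex
    directive on it cannot be read.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in noindexed_urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /private/\n"
    urls = ["https://example.com/private/a", "https://example.com/public/b"]
    print(find_blocked_noindex(robots, urls))
```

Any URL this returns needs one of the two rules lifted: remove the disallow if the goal is deindexing, or drop the noindex if the goal is only to save crawl budget.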

Avoid conditional noindex based on fragile parameters (cookies, geolocation, device). If the condition changes between two crawls, Google may see noindex one time and nothing the next — a source of erratic behavior.

Don't rely solely on JavaScript to deploy a noindex. Put it in the initial HTML or via HTTP header to ensure Googlebot catches it on the first pass, before even executing the JS.
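As an illustration of the server-side approach, here is a minimal WSGI sketch that attaches `X-Robots-Tag: noindex` to selected paths before any HTML or JavaScript is delivered. The path list and the application itself are hypothetical; a real site would set the header in its framework or web server configuration instead.

```python
# Hypothetical set of paths that should stay out of the index.
NOINDEX_PATHS = {"/internal-search", "/cart"}


def app(environ, start_response):
    """Minimal WSGI app that sends the noindex directive as an HTTP header."""
    path = environ.get("PATH_INFO", "/")
    headers = [("Content-Type", "text/html; charset=utf-8")]
    if path in NOINDEX_PATHS:
        # The directive travels with the response itself, so it does not
        # depend on any script being executed by the crawler.
        headers.append(("X-Robots-Tag", "noindex"))
    start_response("200 OK", headers)
    return [b"<!doctype html><title>Example</title>"]


if __name__ == "__main__":
    from wsgiref.simple_server import make_server

    # Serves the sketch locally on port 8000 for manual inspection.
    make_server("127.0.0.1", 8000, app).serve_forever()
```

Because the header is part of the initial response, it is visible with a plain HTTP request, which also makes it easy to verify in an audit.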

How do you adjust your indexation strategy?

Rethink pages set to noindex "just to be safe". If a page has no SEO value but is still useful through other channels, noindex may be more drastic than necessary. Simply not linking to it internally may be enough.

For temporary or seasonal content, prefer reversible mechanisms such as removal from the sitemap or reduced internal linking, or return 410 Gone if the content is truly obsolete. A noindex keeps the page out of the index for as long as it remains in place.

Document your indexation choices in a centralized dashboard. When a page is intentionally noindexed, note why and who made the decision. This avoids "Why is this category no longer in Google?" six months later.

- Audit all noindex tags (meta + X-Robots-Tag HTTP)

- Eliminate any noindex + disallow robots.txt conflicts

- Verify that strategic pages are indexable

- Test implementation on both server and JavaScript side

- Document each noindex decision in a centralized registry

- Review "historical" noindex directives that may no longer have a reason to exist

- Set up alerts if important pages switch to noindex
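The registry and alert items in the checklist above can be combined in a very small script. The sketch below is one possible shape, with hypothetical names throughout: each intentional noindex is recorded with its reason and owner, and any noindexed URL found in a crawl that has no matching record is reported.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NoindexDecision:
    """One documented, intentional noindex (hypothetical registry entry)."""
    url: str
    reason: str
    owner: str


def unexpected_noindex(crawl, registry):
    """Return noindexed URLs that have no documented decision.

    `crawl` maps each URL to True when the crawler found a noindex on it.
    """
    documented = {decision.url for decision in registry}
    return sorted(
        url for url, noindexed in crawl.items()
        if noindexed and url not in documented
    )


if __name__ == "__main__":
    registry = [NoindexDecision("/cart", "no search value", "seo-team")]
    crawl = {"/cart": True, "/category/shoes": True, "/": False}
    for url in unexpected_noindex(crawl, registry):
        print(f"ALERT: undocumented noindex on {url}")
```

Running this after each scheduled crawl turns the registry into the alert source: a strategic page that silently switches to noindex shows up because nobody documented the change.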

❓ Frequently Asked Questions

Does noindex also prevent the page from being crawled?

Can you noindex a page while keeping it in the XML sitemap?

How long does it take for a noindexed page to disappear from the index?

If I remove the noindex, will the page be reindexed automatically?

Does noindex affect the PageRank passed through internal links?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 03/02/2022
