Official statement

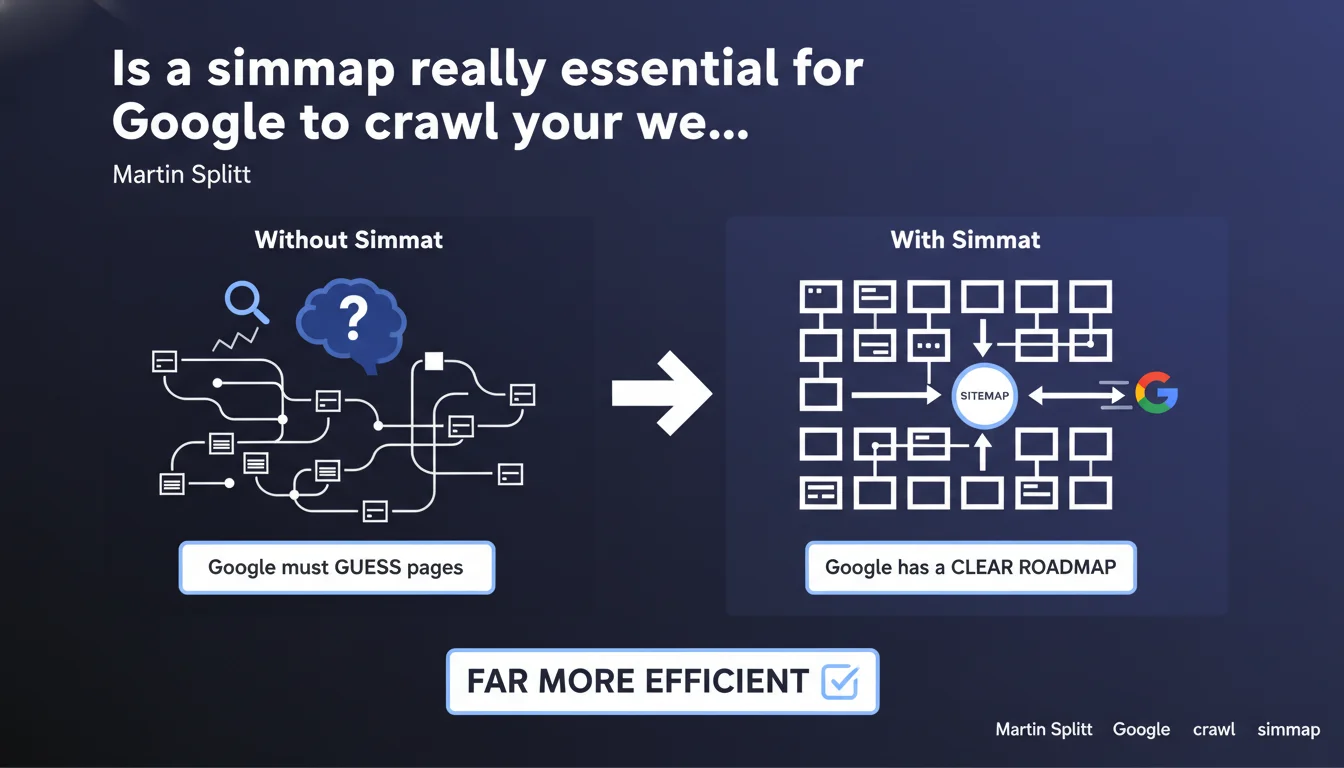

Google states that a sitemap is essential to prevent it from having to guess which pages exist on your site. Although its engine is capable of discovering pages on its own, providing a sitemap significantly improves crawl efficiency. Without a sitemap, you're leaving Google to navigate blindly.

What you need to understand

Why does Google insist so much on sitemaps?

Martin Splitt's statement repositions the sitemap as a crawl control tool, not just a "nice to have". Google is clear: without a sitemap, its engine proceeds by deduction and progressive exploration — a process far less optimal than an explicit roadmap.

This position is explained by the technical reality of crawling. Google does not visit all your pages on every pass. It prioritizes according to its crawl budget, perceived content freshness, and internal link structure. A sitemap then becomes your lever to signal priority pages, new content, or poorly linked pages.

What does "guessing" mean for Google?

When Google talks about "guessing", it refers to its link discovery process. The robot follows internal links from pages it already knows, progressively reconstructing your site's architecture. The problem: if a page isn't properly linked, or is linked from excessive depth, it remains invisible.

The sitemap circumvents this limitation. It exposes all your URLs in a single file, regardless of their position in the hierarchy or the number of clicks needed from the homepage. This is particularly critical for e-commerce sites with thousands of product pages or news sites with very fresh content.

Is a strong internal linking structure enough?

In theory, flawless internal linking should allow Google to discover everything. In practice, linking is rarely flawless. Orphaned pages, recently published content not yet promoted, or dynamically generated URLs often escape the radar.

The sitemap acts as a safety net. Even with excellent linking, it ensures your strategic pages are explicitly signaled. It doesn't replace work on internal links, but it compensates for their inevitable imperfections.

- The sitemap is a direct communication tool with Google, not a substitute for internal linking

- Without a sitemap, Google relies solely on link discovery, a slow and incomplete process

- Essential for sites with frequently updated content or complex architectures

- Allows you to signal the priority and freshness of your pages via the <priority> and <lastmod> tags

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, but with a significant caveat. Sites with solid internal linking and sufficient crawl budget do very well without a sitemap. I've seen sites with 500-1000 pages that are perfectly indexed without ever submitting an XML sitemap.

However, once you exceed 5000 pages or publish daily, the sitemap becomes an essential control tool. Google isn't lying when it says it "can" discover your pages — it just omits to mention how long it takes and whether your crawl budget will allow it.

What nuances should be added to this claim?

Martin Splitt somewhat oversells the role of the sitemap. It doesn't improve your rankings: it improves your discovery rate and indexing speed. A critical distinction. A poorly designed site with a sitemap is still a poorly designed site.

Another point: the <priority> tags in sitemaps are largely ignored by Google. Don't count on the <priority> tag to influence crawling. Google has confirmed multiple times that it is purely indicative and rarely taken into account. By contrast, <lastmod> (last modification date) can indeed speed up reindexing if it's reliable and kept up to date.

In what cases is a sitemap really critical?

Three situations make a sitemap absolutely essential. First, sites with weak internal linking — typically e-commerce sites with products in isolated silos. Second, news sites or blogs with high publishing frequency: the sitemap allows you to signal new content before it's even linked from the homepage.

Finally, sites with dynamically generated URLs or filtering facets. Without a sitemap, these pages often remain in limbo. [To be verified] The question remains whether Google actually crawls all URLs in a 50,000-entry sitemap with the same efficiency as a 500-entry file — there's no official data on this.

Practical impact and recommendations

What should you concretely do to optimize your sitemap?

First step: generate a clean sitemap that contains only indexable URLs. Exclude pages with noindex, redirects, 404s, and canonicalized URLs. A polluted sitemap slows down crawling instead of speeding it up.
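As a rough illustration of the filtering step, here is a minimal Python sketch. The `Page` record and its fields (`status`, `noindex`, `canonical`) are hypothetical; in practice this data would come from your own crawl export or CMS.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    """Hypothetical crawl record; field names are illustrative."""
    url: str
    status: int                      # HTTP status code returned for the URL
    noindex: bool = False            # True if the page carries a noindex directive
    canonical: Optional[str] = None  # canonical URL declared by the page, if any


def is_sitemap_candidate(page: Page) -> bool:
    """Keep only pages that return 200, are indexable, and are self-canonical."""
    if page.status != 200:           # drops redirects (3xx) and errors (4xx/5xx)
        return False
    if page.noindex:
        return False
    # A page canonicalized to a different URL should not be listed.
    return page.canonical is None or page.canonical == page.url


pages = [
    Page("https://example.com/", 200),
    Page("https://example.com/old", 301),
    Page("https://example.com/gone", 404),
    Page("https://example.com/private", 200, noindex=True),
    Page("https://example.com/shoes?color=red", 200,
         canonical="https://example.com/shoes"),
    Page("https://example.com/shoes", 200,
         canonical="https://example.com/shoes"),
]

clean_urls = [p.url for p in pages if is_sitemap_candidate(p)]
print(clean_urls)
```

Only the homepage and the self-canonical /shoes page survive the filter; everything else would pollute the sitemap.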

Second point: segment your sitemaps by content type if your site exceeds 10,000 pages. Create one sitemap for articles, one for products, one for category pages. Google can then prioritize according to its own criteria and you make tracking easier via Search Console.
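One possible way to segment is by the first path segment of each URL. The sketch below assumes a hypothetical URL structure (/blog/, /product/, /category/) and hypothetical file names; adapt both to your own site.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical mapping from first path segment to sitemap file name.
SECTION_TO_SITEMAP = {
    "blog": "sitemap-articles.xml",
    "product": "sitemap-products.xml",
    "category": "sitemap-categories.xml",
}
DEFAULT_SITEMAP = "sitemap-pages.xml"


def sitemap_for(url: str) -> str:
    """Pick the sitemap file for a URL based on its first path segment."""
    first_segment = urlparse(url).path.strip("/").split("/")[0]
    return SECTION_TO_SITEMAP.get(first_segment, DEFAULT_SITEMAP)


def segment(urls):
    """Group URLs by the sitemap file they belong to."""
    groups = defaultdict(list)
    for url in urls:
        groups[sitemap_for(url)].append(url)
    return dict(groups)


groups = segment([
    "https://example.com/",
    "https://example.com/blog/sitemaps-explained",
    "https://example.com/product/123",
    "https://example.com/product/456",
    "https://example.com/category/shoes",
])
for filename, members in groups.items():
    print(filename, len(members))
```

Each resulting file can then be submitted separately, which makes it easier to see in Search Console which content type has indexing problems.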

Third imperative: automate sitemap updates. A static sitemap generated six months ago has no value. Ideally, it should regenerate with each content publication or modification. The <lastmod> tag must reflect reality, otherwise Google will eventually ignore it.
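A regeneration job can be quite small. The following sketch builds a sitemap with Python's standard library, assuming you can obtain each page's real last-modification time from your CMS or database (the `entries` input is hypothetical).

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries) -> bytes:
    """Build sitemap XML from (url, timezone-aware datetime) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, modified in entries:
        node = ET.SubElement(urlset, "url")
        ET.SubElement(node, "loc").text = url
        # lastmod uses W3C Datetime format; here normalized to UTC.
        stamp = modified.astimezone(timezone.utc).isoformat(timespec="seconds")
        ET.SubElement(node, "lastmod").text = stamp
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


entries = [
    ("https://example.com/", datetime(2024, 5, 1, 12, 30, tzinfo=timezone.utc)),
    ("https://example.com/blog/new-post",
     datetime(2024, 5, 2, 8, 0, tzinfo=timezone.utc)),
]
sitemap_xml = build_sitemap(entries)
print(sitemap_xml.decode("utf-8"))
```

The important design choice is that `lastmod` comes from the stored modification time of the content, not from the time the sitemap was generated. Stamping every URL with "now" on each run is exactly the kind of unreliable signal that leads Google to ignore the tag.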

What mistakes should you absolutely avoid?

Classic mistake: including non-canonical URLs in the sitemap. If you have URL variants (with/without www, with/without trailing slash, tracking parameters), only the canonical version should appear in the sitemap. Otherwise, you create confusion.
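To illustrate, the sketch below collapses common URL variants into a single form before they reach the sitemap. The conventions it applies (https, no "www.", no trailing slash, and the list of tracking parameters) are assumptions for the example; the sitemap should ultimately list whatever your site actually declares as canonical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed conventions for this example: https, no "www.", no trailing slash.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"gclid", "fbclid"}


def canonicalize(url: str) -> str:
    """Normalize a URL variant to the assumed canonical form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"   # keep "/" for the homepage
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS and not key.startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(("https", host, path, urlencode(query), ""))


variants = [
    "http://www.example.com/shoes/",
    "https://example.com/shoes?utm_source=newsletter",
    "https://example.com/shoes",
]
unique = sorted({canonicalize(u) for u in variants})
print(unique)
```

All three variants collapse to one entry, so the sitemap lists a single URL instead of three competing ones.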

Another trap: submitting an oversized sitemap. The official limit is 50,000 URLs or 50 MB (uncompressed) per file. Beyond that, split the URLs across several sitemaps and reference them from a sitemap index file. And most importantly, don't artificially inflate your sitemap with low-value pages, because it dilutes the signal.
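Splitting a large URL list and building the index file might look like the sketch below. The file names and the example.com URLs are hypothetical, and the code only enforces the URL count; a production version would also need to check the byte size of each file.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000


def chunk(urls, size=MAX_URLS_PER_FILE):
    """Yield consecutive slices of at most `size` URLs."""
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def build_index(sitemap_urls) -> bytes:
    """Build a sitemap index that points to the individual sitemap files."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for sitemap_url in sitemap_urls:
        node = ET.SubElement(index, "sitemap")
        ET.SubElement(node, "loc").text = sitemap_url
    return ET.tostring(index, encoding="utf-8", xml_declaration=True)


urls = [f"https://example.com/product/{i}" for i in range(120_000)]
parts = list(chunk(urls))
file_urls = [
    f"https://example.com/sitemap-products-{n}.xml"
    for n in range(1, len(parts) + 1)
]
index_xml = build_index(file_urls)
print([len(part) for part in parts])
```

With 120,000 URLs this produces three files (50,000, 50,000 and 20,000 entries) plus one index file to submit.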

- Generate a clean XML sitemap without error pages or redirects

- Segment by content type for sites with more than 10,000 pages

- Automate updates and keep <lastmod> current

- Submit the sitemap via Google Search Console and monitor errors

- Exclude noindex pages, canonicalized ones, and low-value URLs

- Respect the 50,000 URL limit per file

How can you verify that your sitemap is being properly used?

Head to Google Search Console, Sitemaps section. You'll see the number of submitted URLs versus discovered ones. A significant gap signals a problem: either error URLs, insufficient crawling, or pages blocked by robots.txt.
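To dig into a gap, you can compare the URLs in your sitemap against a list of URLs you know to be indexed. The sketch below assumes you have exported such a list yourself (the `indexed` set here is hypothetical sample data) and simply computes the difference.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set:
    """Extract every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)}


sample_sitemap = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/shoes</loc></url>
  <url><loc>https://example.com/blog/new-post</loc></url>
</urlset>
"""

submitted = sitemap_urls(sample_sitemap)
# Hypothetical list of indexed URLs, e.g. taken from a manual export.
indexed = {"https://example.com/", "https://example.com/shoes"}

missing = sorted(submitted - indexed)
print(f"{len(missing)} of {len(submitted)} submitted URLs not indexed:")
for url in missing:
    print(" ", url)
```

The resulting list is the starting point for checking each missing URL for errors, robots.txt blocks, or thin content.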

Also monitor the coverage report. If pages present in the sitemap remain in "Discovered, currently not indexed", it means Google knows about them but has not crawled them yet, typically because it doesn't consider them a priority. This can indicate a content quality issue or a crawl budget problem.

❓ Frequently Asked Questions

Does a sitemap improve my pages' rankings?

Should I include all my URLs in the sitemap?

Is the priority tag in the sitemap really taken into account?

How long after submission does Google crawl the sitemap?

Do I need a separate sitemap for images and videos?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 22/03/2022
