Official statement

Other statements from this video 9 ▾

- □ Pourquoi Googlebot signale-t-il des soft 404 sur vos pages géolocalisées vides ?

- □ Afficher du contenu national par défaut est-il considéré comme du cloaking par Google ?

- □ Le cloaking est-il vraiment un problème si l'utilisateur n'est pas trompé ?

- □ Googlebot crawle-t-il vraiment votre site depuis plusieurs pays ?

- □ Faut-il attendre avant de juger l'impact d'une mise à jour algorithmique Google ?

- □ Pourquoi l'analyse des fichiers logs est-elle indispensable pour les gros sites ?

- □ Pourquoi une page vide détruit-elle votre expérience utilisateur et votre SEO ?

- □ Comment garantir une expérience cohérente avec les attentes utilisateur sans risquer une pénalité pour cloaking ?

- □ Faut-il vraiment comparer l'état réel des pages avant et après une baisse de trafic ?

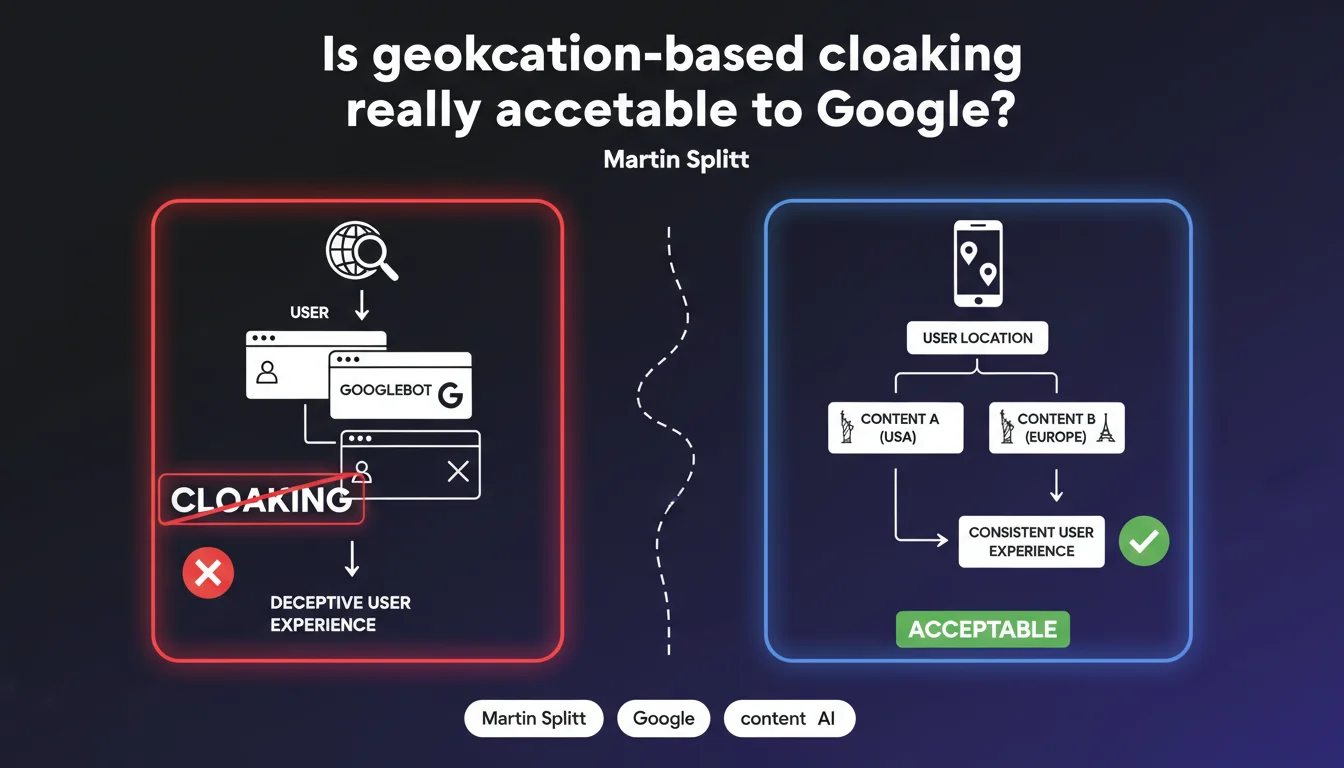

Google redefines cloaking: displaying different content based on geolocation isn't necessarily manipulation, as long as the user experience remains consistent and it's not specifically targeted at Googlebot. The nuance is critical: it's the intention to deceive that characterizes cloaking, not simply content variation.

What you need to understand

What really distinguishes cloaking from legitimate personalization?

Martin Splitt reframes the question: cloaking is defined not by content differences themselves but by the intention to deceive. An e-commerce site that displays prices in euros for France and dollars for the United States is not cloaking; it is adapting its content to its audience.

The distinction comes down to one word: consistency. If a French user lands on a page and sees content relevant to France, there's no deception. If Googlebot sees a keyword-stuffed, over-optimized version while the user sees empty or different content, you cross into abuse territory.

Why does Google specifically say "not targeted at Googlebot"?

This is the main friction point. Serving Googlebot content that differs from what users receive raises a red flag. Google tolerates geolocation as long as it applies uniformly to both users and crawlers.

Concretely: if your site detects Googlebot's IP and serves it a supercharged version while real visitors see something else, you're in violation. The rule is simple: Googlebot must see what an average user sees under the same geographic conditions.
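One way to picture the rule is a content selector whose only input is the visitor's region. The sketch below is illustrative, not a description of any real stack: the `Request` type, the `REGIONAL_CONTENT` table and its values are all hypothetical, and it assumes the region has already been resolved from the IP by some geolocation step.

```python
from dataclasses import dataclass

# Hypothetical regional catalogue; regions and values are illustrative only.
REGIONAL_CONTENT = {
    "FR": {"currency": "EUR", "price": "49,00 €"},
    "US": {"currency": "USD", "price": "$52.00"},
}
DEFAULT_REGION = "US"


@dataclass(frozen=True)
class Request:
    region: str      # assumed to be resolved from the IP before this point
    user_agent: str  # carried along, but deliberately never consulted below


def select_content(request: Request) -> dict:
    """Pick the regional variant from the region alone, never the user-agent."""
    return REGIONAL_CONTENT.get(request.region, REGIONAL_CONTENT[DEFAULT_REGION])
```

Because `select_content` never reads `user_agent`, a crawler and a browser arriving from the same region get the same variant by construction. A branch on the user-agent or on a list of crawler IPs is exactly what this design leaves out.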

What are the implications for multi-regional strategies?

This clarification opens the door to openly practiced geolocation-based SEO: country-specific pages, IP-based redirects, content adapted to local regulations. As long as the variation is transparent and consistent, Google won't penalize it.

The limit remains fuzzy for complex cases — CDN servers distributing content based on latency, geolocation-based A/B testing, dynamic content based on browser language. Google doesn't detail these gray areas.

- Cloaking = intent to deceive, not simply content variation

- Geolocation is tolerated if it serves the user, not just the bot

- Googlebot must see the same experience as a user in their geographic area

- Consistency between the promise (in SERPs) and delivered content is the key criterion

SEO Expert opinion

Is this definition really new or just a reminder?

Let's be honest: Google has always had a vague definition of cloaking. The guidelines mention "different content for users and search engines," without specifying edge cases. This statement brings a welcome nuance, but it remains insufficient to resolve certain common use cases.

In the field, we observe that Google has de facto tolerated certain forms of personalization for years — dynamic pricing, local stock levels, region-restricted content. What Splitt does here is formalize what was already tacitly accepted practice.

In what cases does this rule remain ambiguous?

Problem number one: how does Google determine intent to deceive? If a site displays promotions only for French IPs and Googlebot crawls from the USA, is that cloaking? [To be verified] — the official documentation doesn't settle it.

Second gray area: paywalls and premium content. Showing the full article to Googlebot and a truncated version to non-subscribing users — that's technically different content, but Google has explicitly validated this practice. Logical consistency with Splitt's definition? Debatable.

Third point: geolocation-based A/B tests. If you test two versions of a landing page by region, Googlebot will inevitably encounter one version or the other. Is that acceptable? Splitt doesn't say. Based on field observations, it passes as long as both versions are SEO-friendly and genuinely serve users.

Does field data contradict this statement?

Partially. We regularly see sites penalized for aggressive content personalization — even when it's technically "consistent" with geolocation. Google dislikes variations that are too large across regions if they smell like forced optimization.

Concrete example: a site that displays 500 words in France and 2000 words in the United States, claiming "cultural preferences." Google can interpret this as manipulation, even if the user sees the promised content. The unwritten rule: variations must be justified by real user needs, not SEO tactics.

Practical impact and recommendations

What should you audit on your site right now?

First step: audit your personalization rules. List all instances where your site serves different content based on IP, language, or region. For each one, ask yourself: does this variation genuinely improve user experience, or is it just keyword stuffing for local terms?

Second verification: test what Googlebot sees. Use the URL inspection tool in Search Console, or simulate crawls from different locations with a tool like Screaming Frog configured with a Googlebot user-agent. If you spot gaps between the user and bot versions, dig deeper.
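As a rough complement to those tools, the comparison can be scripted. The sketch below fetches a page with two user-agents and scores how similar the visible text is. The helper names are hypothetical, the tag stripping is deliberately crude, and any threshold you apply to the score is a judgment call. Spoofing the user-agent also does not reproduce Googlebot's IP addresses, so a server that detects crawlers by IP will not be caught this way; the URL inspection tool remains the reference.

```python
import re
import urllib.request
from difflib import SequenceMatcher

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"


def fetch_html(url: str, user_agent: str) -> str:
    """Download a page while announcing the given user-agent."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def visible_words(html: str) -> list[str]:
    """Crude text extraction: drop script/style blocks, then all tags."""
    html = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    text = re.sub(r"(?s)<[^>]+>", " ", html)
    return text.split()


def similarity(html_a: str, html_b: str) -> float:
    """Return a 0..1 score; 1.0 means the visible words are identical."""
    return SequenceMatcher(None, visible_words(html_a), visible_words(html_b)).ratio()
```

A typical use would be `similarity(fetch_html(url, GOOGLEBOT_UA), fetch_html(url, BROWSER_UA))`, run from the same network location for both requests so that geolocation is held constant. A low score is a prompt to investigate, not proof of cloaking: rotating ads or timestamps also lower it.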

What mistakes should you absolutely avoid?

Classic error: blocking or redirecting Googlebot differently from users. If your CDN or firewall treats Googlebot as a special case, you're taking a major risk. Googlebot must follow the same path as a real visitor from the same region.

Another trap: "ghost" content invisible to users but present for bots. Text hidden with CSS or white-on-white keyword lists served only to Googlebot is pure cloaking, no matter how you justify it with geolocation.

Last frequent mistake: failing to document your choices. If Google sends you a manual action, you'll need to prove your personalization serves the user. Keep records: analytics showing conversion rates by region, user studies, local regulations requiring specific content.

How do you secure your geolocation approach?

Golden rule: transparency. If you display different prices by country, state it clearly ("Price for France," visible region selector). If you block content for legal reasons, explain why ("This product is not available in your region").

For multi-language content, implement hreflang correctly. Google must understand your variations are linguistic/regional, not SEO spam. Each version must be indexable and accessible from its target region.
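A minimal sketch of what this means in markup: every regional version lists all versions, itself included, as `<link rel="alternate" hreflang="...">` elements in its `<head>`. The `hreflang_links` helper and the `example.com` URLs below are hypothetical; only the tag format and the `x-default` value come from the hreflang convention itself.

```python
from html import escape
from typing import Optional


def hreflang_links(alternates: dict, default_url: Optional[str] = None) -> list:
    """Build hreflang <link> tags from a {language-region code: URL} mapping."""
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in sorted(alternates.items())
    ]
    if default_url:
        # x-default marks the fallback page for visitors matching no listed version.
        links.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}" />'
        )
    return links


if __name__ == "__main__":
    # Hypothetical URLs, for illustration only.
    versions = {
        "fr-fr": "https://example.com/fr/",
        "en-us": "https://example.com/us/",
    }
    print("\n".join(hreflang_links(versions, "https://example.com/")))
```

The same full set of tags goes on each version, so the annotations are reciprocal. Generating them from a single mapping helps avoid one version forgetting to reference another.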

- Audit all current geographic personalization rules

- Test the version crawled by Googlebot vs. the one seen by users

- Remove any content differences that specifically target bots

- Document business/legal reasons for each regional variation

- Implement hreflang for multi-language/regional content

- Add clear visual indicators (region selector, local price mentions)

- Monitor manual actions in Search Console

❓ Frequently Asked Questions

Can I display different prices by country without risking a penalty?

Is it cloaking if I block content in certain countries for legal reasons?

How do I know whether my CDN is cloaking unintentionally?

Are geolocation-based A/B tests considered cloaking?

Should I use hreflang even if I only personalize a few elements by region?

SEO insights extracted from a Google Search Central video · published on 13/12/2022
