Official statement

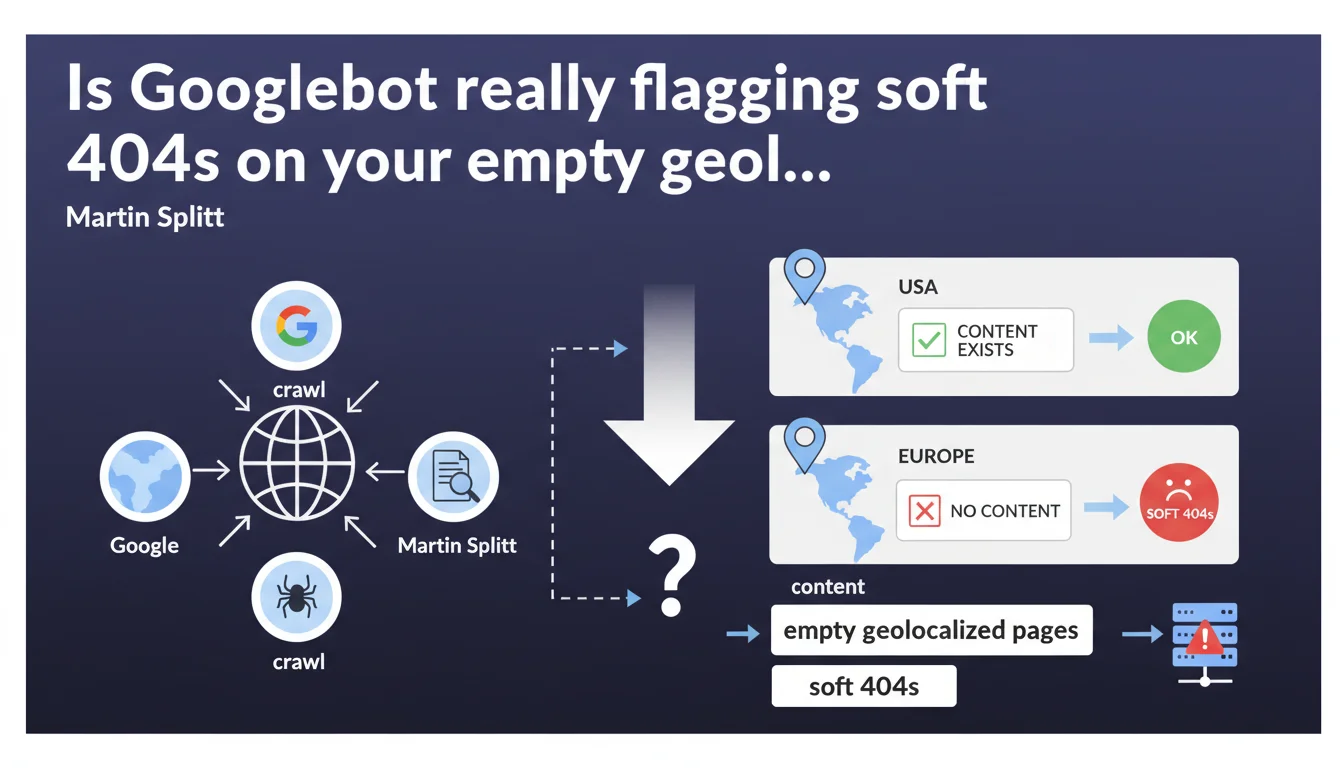

Googlebot crawls your pages from multiple geographic locations. If a page has no relevant content for a given region (for example, local inventory is exhausted or services are unavailable), Google may treat it as a soft 404, even if the page works normally from other areas. Empty geolocalized content is not treated as a simple blank page but as a sign that the response does not match the user's intent.

What you need to understand

Does Googlebot really crawl from multiple geographic locations?

Yes. Google doesn't limit itself to crawling from a single US datacenter. Googlebot can access your pages from different geographic locations to test the consistency of content displayed based on the user's geolocation.

This behavior primarily concerns sites that dynamically adapt their content based on the visitor's IP address: e-commerce stores with local stock, location-based services, regional directories.

What is a soft 404 in this context?

A soft 404 is a page that returns an HTTP 200 status code (success) but, from Google's perspective, contains no useful content. In this case, a page may display a complete template (header, footer, navigation) but zero products, zero inventory, zero results for the tested geographic area.

Google then treats the page as "empty" even though it technically works. The risk: gradual deindexing or loss of visibility for that URL.
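The "200 status, full template, zero items" pattern can be checked mechanically. The sketch below is only a heuristic and not Google's actual classifier: it assumes product listings are marked with a CSS class, here the hypothetical `product-card`, and flags any 200 response that contains none.

```python
from html.parser import HTMLParser


class _ItemCounter(HTMLParser):
    """Counts start tags whose class attribute contains a given token."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.count = 0

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value can be None.
        classes = (dict(attrs).get("class") or "").split()
        if self.css_class in classes:
            self.count += 1


def looks_like_soft_404(status_code, html, item_class="product-card"):
    """Return True for a 200 response that renders no listing items.

    item_class is an assumption: replace it with whatever marks a
    product, result, or listing in your own templates.
    """
    if status_code != 200:
        return False  # a real 404 or 410 is not a *soft* 404
    counter = _ItemCounter(item_class)
    counter.feed(html)
    return counter.count == 0
```

Running this against the HTML your server returns for each region gives a first list of URLs worth inspecting by hand.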

Why is Google flagging this issue now?

Geolocalized sites are multiplying: Shopify, WooCommerce, and custom stores with region-based inventory management are increasingly common. Google is refining its detection to avoid indexing pages that provide no value to the end user.

If your product page displays "No results for Paris" but works perfectly in Lyon, Googlebot may crawl from Paris and flag a soft 404. Your server responds with 200, but the perceived content is zero.

- Googlebot crawls from multiple geographic locations to test content consistency

- A page without local inventory can be marked as soft 404, even if it displays a complete template

- Main risk: partial deindexation or ranking loss for these URLs

- Primarily affects e-commerce sites with geolocalized inventory, marketplaces, regional directories

SEO Expert opinion

Is this statement consistent with field observations?

Yes, but with important nuances. For years, we've observed soft 404s on empty category pages or search result pages without results. The principle isn't new.

What's changing is the explicit acknowledgment of geolocalized crawling. Until now, many SEOs assumed Googlebot crawled from a single point (typically the USA). Martin Splitt confirms here that Google can test multiple locations, which complicates detection on the webmaster's side. [To verify]: Google doesn't specify the crawl frequency, the list of tested locations, or the selection criteria, so the "when" and the "where" remain unclear.

What are the gray areas in this statement?

First gray area: what exactly is "empty" content? Is a page displaying "No products available in your region" considered empty? Or does it need alternative text, suggestions, a CTA? Google provides no precise threshold.

Second gray area: how does Google determine the relevant location? Via server IP? Via hreflang? Via page content? If your site uses a global CDN, Googlebot's requests can arrive from anywhere, which may trigger unexpected soft 404s.

In which cases does this rule not apply?

If your site doesn't geolocalize anything, you're not affected. If you display the same content everywhere in the world, Googlebot can't encounter an "empty" page based on location.

Likewise, if you block certain regions at the server level (.htaccess rules or a firewall), the problem doesn't arise, but you lose traffic from those areas. Note that robots.txt cannot do this: its rules apply per crawler, not per region. Geolocalized soft 404s primarily concern sites that display conditional content without an intelligent fallback.

Practical impact and recommendations

What should you check as a priority on your site?

First thing: audit your geolocalized pages in Search Console. Filter URLs marked as soft 404 and verify if they correspond to pages displaying empty content for certain locations.
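One way to narrow the audit is to cross-check an export of soft 404 URLs against the URL patterns you know are geolocalized. The sketch below assumes the export is a CSV with a header row and a column named `URL`; that column name, the function name, and the example patterns are assumptions to adjust to your own export.

```python
import csv
import re


def geolocalized_soft_404s(csv_lines, geo_patterns, url_column="URL"):
    """Return exported soft 404 URLs that match a geolocalized pattern.

    csv_lines: any iterable of text lines (an open file works).
    geo_patterns: regular expressions describing geolocalized URLs.
    url_column: assumed header name of the URL column in the export.
    """
    compiled = [re.compile(pattern) for pattern in geo_patterns]
    flagged = []
    for row in csv.DictReader(csv_lines):
        url = row.get(url_column) or ""
        if any(pattern.search(url) for pattern in compiled):
            flagged.append(url)
    return flagged
```

For example, `geolocalized_soft_404s(open("soft_404.csv", newline=""), [r"/stores/", r"/local/"])` would list only the flagged URLs under those two hypothetical paths.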

Next, test your pages with proxies or VPNs from different regions. Simulate Googlebot's behavior from France, Belgium, Switzerland, Canada, and the USA. Does your product page always display relevant content, or does it switch to an empty state?
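The multi-region test can be scripted. The sketch below uses only the Python standard library and assumes you have one HTTP proxy per region; the proxy addresses and function names are hypothetical. Sending a Googlebot user agent through a proxy only approximates what Googlebot sees, since it does not reproduce Googlebot's real IP addresses.

```python
import urllib.error
import urllib.request

# Googlebot's desktop user agent string; requests still come from the proxy's IP.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def fetch_via_proxy(url, proxy_url, timeout=10):
    """Fetch url through proxy_url and return (status_code, body_text)."""
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    )
    request = urllib.request.Request(url, headers={"User-Agent": GOOGLEBOT_UA})
    try:
        with opener.open(request, timeout=timeout) as response:
            return response.status, response.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as error:
        return error.code, ""


def find_empty_regions(url, proxies, is_empty, fetch=fetch_via_proxy):
    """Return the regions where url answers 200 but is_empty(body) is true.

    proxies maps a region label to a proxy URL (hypothetical values).
    is_empty is your own check for an empty state, for example a search
    for the "no results" message your template prints.
    """
    empty = []
    for region, proxy_url in proxies.items():
        status, body = fetch(url, proxy_url)
        if status == 200 and is_empty(body):
            empty.append(region)
    return empty
```

The `fetch` parameter is injectable so the comparison logic can be exercised without network access, or swapped for a headless browser if the content is rendered with JavaScript.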

How do you avoid geolocalized soft 404s?

Solution 1: display intelligent alternative content. If no products are available in the region, suggest similar products, alternatives, or a notification form. Never leave a page completely empty.

Solution 2: use appropriate HTTP codes. If a page makes no sense for a given location, return 404 or 410, or redirect with 302 to a relevant page. Don't serve 200 with zero content.
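The first two solutions can be combined into one decision made before rendering. The sketch below is framework-agnostic; the function name, its parameters, and the order of precedence are assumptions chosen for illustration, not a rule stated by Google.

```python
from http import HTTPStatus


def choose_response(local_items, alternatives=(), redirect_target=None,
                    permanently_gone=False):
    """Pick a status code for a geolocalized page.

    Returns (status, location): location is set only for redirects.
    """
    if local_items:
        return HTTPStatus.OK, None          # real local content
    if alternatives:
        return HTTPStatus.OK, None          # solution 1: useful fallback content
    if redirect_target:
        return HTTPStatus.FOUND, redirect_target  # temporary 302 redirect
    if permanently_gone:
        return HTTPStatus.GONE, None        # 410: will not come back
    return HTTPStatus.NOT_FOUND, None       # 404 instead of an empty 200
```

The key property is that the function never returns 200 when there is nothing to show, which is exactly the case that produces a soft 404.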

Solution 3: segment your URLs by region. Instead of a single URL that displays conditional content, create distinct URLs (/us/, /uk/, /ca/), each with real content, and deindex the empty ones via noindex.
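As a rough illustration of region-segmented URLs, the sketch below builds one URL per region and attaches an `X-Robots-Tag: noindex` response header to the regions with no inventory. The region list, the function name, and the inventory mapping are hypothetical.

```python
REGIONS = ("us", "uk", "ca")  # assumed region prefixes, as in /us/, /uk/, /ca/


def regional_pages(path, inventory_by_region):
    """Map each regional URL to the extra response headers it should send.

    inventory_by_region maps a region code to its item count; a missing
    or zero entry means the regional page has nothing to show.
    """
    pages = {}
    for region in REGIONS:
        url = f"/{region}/{path.lstrip('/')}"
        headers = {}
        if not inventory_by_region.get(region):
            # Keep the empty regional page out of the index.
            headers["X-Robots-Tag"] = "noindex"
        pages[url] = headers
    return pages
```

Because each region has its own URL, an empty /uk/ page can be deindexed without affecting the /us/ page that has stock.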

- Audit soft 404s in Search Console and verify geographic consistency

- Test your pages from multiple locations (VPN, proxies) to detect empty states

- Never serve a 200 code on a page without real content: prefer a 404, a 410, or a redirect

- Add alternative content (suggestions, similar products, form) on empty pages

- If you cannot display relevant content, use noindex to keep the page out of the index (robots.txt only blocks crawling, and a blocked page's noindex is never seen)

- Monitor indexation fluctuations in Search Console after each modification

❓ Frequently Asked Questions

Does Google systematically crawl every page from multiple locations?

Does a geolocalized soft 404 lead to immediate deindexing?

How can you tell which location Googlebot detected a soft 404 from?

Should you use hreflang to avoid geolocalized soft 404s?

Can you block Googlebot for certain locations without a penalty?

Other SEO insights extracted from this same Google Search Central video · published on 13/12/2022
