Official statement

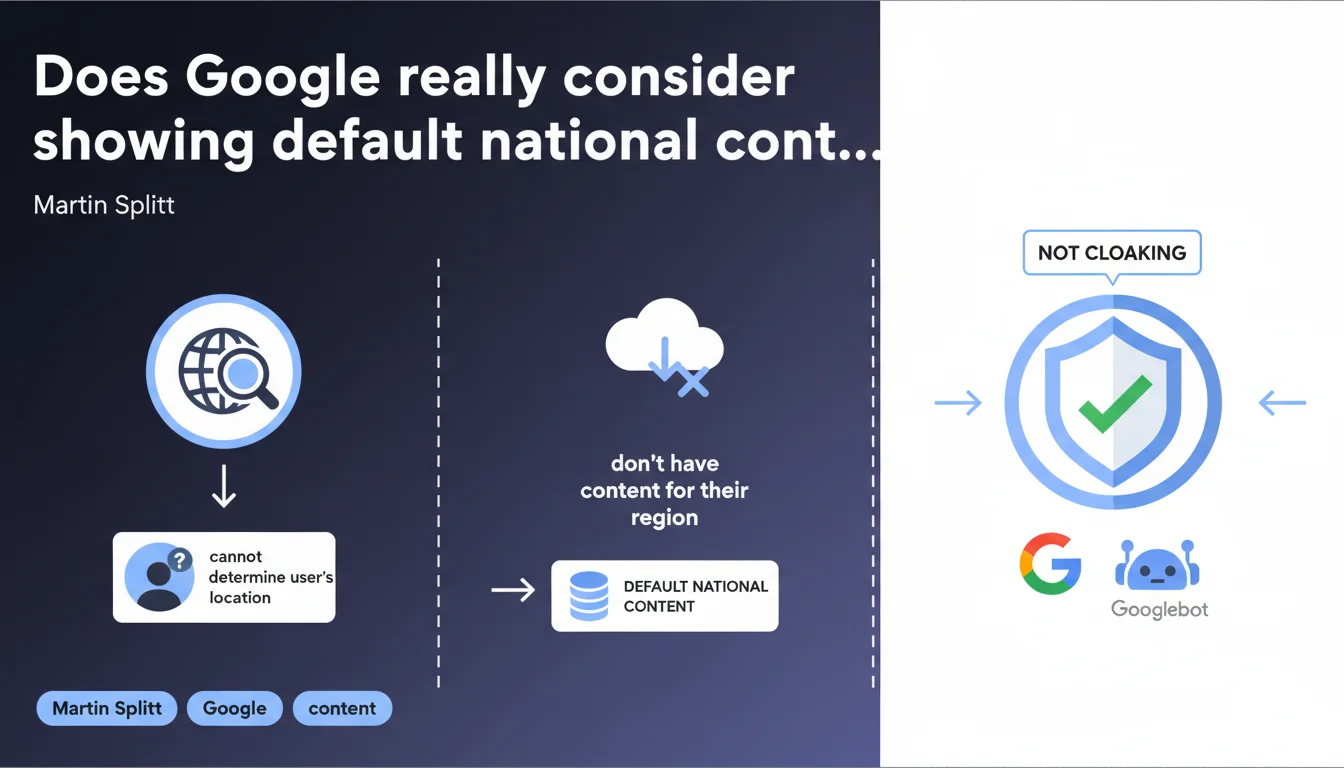

Google confirms that displaying default national content when you cannot determine a user's location (or when you don't have content for their region) is not considered cloaking, provided that Googlebot receives the same treatment. This clarification removes a major ambiguity for multi-regional sites that offer a neutral fallback experience.

What you need to understand

Why does Google clarify this stance on default national content?

Cloaking involves showing different content to search engines and real users. Google penalizes it severely because it considers this practice deceptive. Yet international sites must often manage edge cases: users with VPNs, ambiguous IP addresses, bots blocking geolocation.

Martin Splitt clarifies a specific scenario here: if you cannot detect the location, or if you don't have a localized version for a given country, systematically displaying a default national version (often in English or in the language of your headquarters) does not count as cloaking. The essential condition is that Googlebot receives exactly the same experience.

What do we mean by "default national content" in this context?

This is typically a reference language or regional version that you display when you cannot make a relevant choice. For example: a French site that displays its .fr version to a visitor from a country not covered by its local versions (like Brazil, if you don't have a .br version).

This is not an automatic geographic redirect based on IP — it's a neutral display that says "here's our main content, for lack of a better option." The nuance matters: you're not forcing a redirect, you're showing stable and consistent content to everyone who falls into this scenario.

What are the conditions for this not to be cloaking?

Google sets one non-negotiable condition: Googlebot must see exactly what users see in the same situation. If your system detects Googlebot and serves it a different version than what an unknown user would receive, you fall into classic cloaking.

- Absolute consistency: Googlebot must receive the default national content if it cannot be geolocated (or if you don't geolocate bots)

- No conditional redirection: don't redirect Googlebot to a different URL than what an "anonymous" user would see

- Transparency: use hreflang to signal your regional versions so that Google can understand the logic

- Document your fallback logic in your technical architecture
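
The conditions above can be sketched as a single selection function. This is a minimal illustration, not a reference implementation: the version identifiers, the `REGIONAL_VERSIONS` table, and the `choose_version` name are all hypothetical. The point it tries to show is that the user-agent never enters the decision, so Googlebot and an anonymous visitor with the same detected country necessarily get the same content.

```python
from typing import Optional

# Hypothetical regional versions published by the site (assumption).
REGIONAL_VERSIONS = {"FR": "fr", "DE": "de", "US": "us"}
# Hypothetical default national version used as the fallback (assumption).
DEFAULT_VERSION = "fr"


def choose_version(country_code: Optional[str]) -> str:
    """Return the content version to display for a detected country.

    The user-agent is deliberately not a parameter: a bot and a human
    with the same (possibly unknown) country get the same answer.
    """
    if country_code is None:
        # Location could not be determined (VPN, ambiguous IP, ...).
        return DEFAULT_VERSION
    # No localized version for this country: same fallback.
    return REGIONAL_VERSIONS.get(country_code.upper(), DEFAULT_VERSION)
```

With these assumed values, a visitor from Brazil (`choose_version("BR")`) and a visitor whose location is unknown (`choose_version(None)`) both receive the default version.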

SEO Expert opinion

Is this statement consistent with real-world practices observed?

Overall yes, but it remains deliberately vague on certain points. In the field, we observe that Google does indeed tolerate consistent fallback strategies — provided that the default content is relevant and not optimized to manipulate the crawl. Sites that display an English .com version by default and use hreflang correctly generally encounter no issues.

What sometimes causes problems? Clunky implementations where the "default content" changes based on user-agent or other opaque criteria. [To verify]: Google doesn't specify how it detects abuse in this area. We assume it compares what the bot receives with real user behavior through Chrome and Analytics data, but there is no official confirmation.

What gray areas does this statement not cover?

Martin Splitt talks about "not being able to determine location" but says nothing about cases where you choose not to geolocate. Technically, many sites could geolocate via IP but prefer not to for simplicity or to avoid errors. Is this acceptable? Probably, as long as it's consistent.

Another gray area: what happens if you display "default" content but with subtle variations (currencies, legal notices, CTAs) based on detected IP? The line between acceptable personalization and cloaking becomes thin. Let's be honest: Google has never really publicly settled this question.

In what cases might this rule not apply?

Google remains inflexible on intentional cloaking. If your "default content" is clearly designed to deceive (doorway pages, spam content different from what users see), tolerance falls away. The key is intent: logical and transparent fallback passes, disguised manipulation does not.

Also, this statement does not cover automatic redirects based on geolocation. If you systematically redirect users based on their IP to different URLs, that's another topic — and there, you must absolutely implement hreflang and user choice mechanisms to avoid SEO issues.

Practical impact and recommendations

What should you concretely do to stay compliant?

First step: identify your current fallback logic. If you're already geolocating your users, document what happens when detection fails or when you don't have a localized version. Make sure this behavior is stable and reproducible.

Next, verify that Googlebot receives exactly this fallback content. Use the URL inspection tool in Search Console to simulate the crawl and compare it with what a "neutral" user (private browsing, no geolocation) would see. The two must be identical — HTML, JSON-LD, hreflang, everything.
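
One way to make this comparison less manual is to reduce each HTML snapshot to a few comparable signals. The sketch below assumes you have already saved two HTML strings yourself, for example the crawled HTML shown by the URL inspection tool and the source seen in a private browsing session. How you obtain them is out of scope. The names `fingerprint` and `diff_snapshots` are illustrative, and only the Python standard library is used.

```python
import hashlib
import re
from html.parser import HTMLParser


class _SignalCollector(HTMLParser):
    """Collects hreflang links and JSON-LD blocks from an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.hreflang = set()
        self.json_ld = []
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link" and a.get("hreflang"):
            self.hreflang.add((a["hreflang"], a.get("href") or ""))
        elif tag == "script" and a.get("type") == "application/ld+json":
            self._in_json_ld = True
            self.json_ld.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False

    def handle_data(self, data):
        if self._in_json_ld:
            self.json_ld[-1] += data


def _collapse(text: str) -> str:
    """Collapse whitespace runs so formatting noise is ignored."""
    return re.sub(r"\s+", " ", text).strip()


def fingerprint(html: str) -> dict:
    """Reduce an HTML snapshot to a hash plus its hreflang and JSON-LD."""
    collector = _SignalCollector()
    collector.feed(html)
    collector.close()
    return {
        "body_hash": hashlib.sha256(_collapse(html).encode("utf-8")).hexdigest(),
        "hreflang": sorted(collector.hreflang),
        "json_ld": [_collapse(block) for block in collector.json_ld],
    }


def diff_snapshots(bot_html: str, user_html: str) -> list:
    """Return the names of the signals that differ between two snapshots."""
    bot, user = fingerprint(bot_html), fingerprint(user_html)
    return [key for key in bot if bot[key] != user[key]]
```

An empty list means the two snapshots match on all three signals. A result containing only `body_hash` may simply reflect dynamic markup (timestamps, session tokens), so it deserves a manual look rather than an automatic alarm.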

What critical errors should you avoid?

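Never serve Googlebot a different version than a real visitor would get in the same situation, for example by special-casing it because it crawls from the United States (most of Google's crawl IPs are US). If your logic automatically sends US IPs to example.com/us/, Googlebot will end up there too, which is fine as long as real US visitors get the same page. If you instead detect the bot and route it differently from a user with the same IP profile, that's cloaking.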

Also avoid JavaScript-based client-side redirects without a server equivalent. Google can follow them, but with delays and variable reliability. Result: inconsistencies between what the bot sees and what fast users with JS enabled see.

- Test your default page with the URL inspection tool in Search Console

- Compare it with a real user session in private browsing, VPN disabled

- Verify that your hreflang tags correctly point to all your regional versions, including the default version

- Document your fallback logic in your technical documentation (useful for future SEO audits)

- If you use CDNs or edge workers for geolocation, ensure they handle Googlebot consistently

- Set up monitoring to detect divergences between bot rendering and user rendering
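
The hreflang item in this checklist lends itself to a small automated check. The sketch below assumes you have already extracted, for each page, a mapping from hreflang value to target URL. The `check_hreflang` name and the input shape are illustrative. Treating a missing `x-default` entry as a problem is an assumption of this sketch, motivated by the fallback scenario discussed here, not a stated Google requirement.

```python
def check_hreflang(pages: dict) -> list:
    """Report hreflang problems in a set of pages.

    `pages` maps each page URL to its {hreflang value: target URL}
    annotations, as extracted from that page.
    """
    problems = []
    for url, annotations in pages.items():
        if url not in annotations.values():
            problems.append(f"{url}: no self-referencing hreflang")
        if "x-default" not in annotations:
            # Assumption: the site wants an explicit default version.
            problems.append(f"{url}: no x-default entry")
        for lang, target in annotations.items():
            if target == url:
                continue
            if target not in pages:
                problems.append(f"{url}: {lang} target {target} was not checked")
            elif url not in pages[target].values():
                problems.append(f"{url}: {target} does not link back")
    return problems
```

An empty result means every page references itself, declares a default, and receives a return link from each version it points to.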

How can you verify that your implementation is solid over time?

Regular technical audits are essential, especially if your architecture evolves (migrations, redesigns, new regions). In particular, check your server logs for inconsistencies between the responses served to Googlebot and those served to users.
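
As a rough illustration of such a log check, the sketch below scans access-log lines and lists the paths where Googlebot and other clients received different sets of HTTP status codes. It assumes the common "combined" log format and identifies Googlebot by user-agent string only. That string can be spoofed, so a production check would also verify the requesting IP. The `status_divergences` name is illustrative.

```python
import re
from collections import defaultdict

# Assumes the "combined" access log format (an assumption about your server).
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def status_divergences(lines):
    """Return paths whose status codes differ between Googlebot and users."""
    seen = defaultdict(lambda: {"bot": set(), "user": set()})
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # Skip lines that do not fit the assumed format.
        kind = "bot" if "Googlebot" in match["agent"] else "user"
        seen[match["path"]][kind].add(int(match["status"]))
    return {
        path: groups
        for path, groups in seen.items()
        # Only compare paths requested by both kinds of client.
        if groups["bot"] and groups["user"] and groups["bot"] != groups["user"]
    }
```

A divergence is a lead to investigate, not proof of cloaking: conditional requests and caching can legitimately produce different status codes for the same URL.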

Also monitor performance for each of your regional properties in Search Console. If you notice unexplained drops or orphaned pages indexed in the wrong countries, it's often a sign of a geolocation or fallback configuration problem.

❓ Frequently Asked Questions

Can I display an English .com version by default even if my headquarters are in France?

If I use a CDN that redirects automatically based on IP, is that cloaking?

Do I absolutely need to use hreflang if I display default national content?

What happens if I can never determine location (no geolocation at all)?

Can Google detect whether I show different content to Googlebot based on the user-agent?

Other SEO insights extracted from this same Google Search Central video · published on 13/12/2022
