Official statement

Other statements from this video 10 ▾

- □ Faut-il vraiment baliser son contenu payant avec la structured data 'paywall' ?

- □ Faut-il vraiment empêcher le contenu paywall de se charger dans le DOM ?

- □ Pourquoi robots.txt ne protège-t-il pas vos contenus privés de l'indexation Google ?

- □ Pourquoi robots.txt ne protège-t-il pas votre contenu privé ?

- □ Faut-il vraiment enrichir vos pages de login pour améliorer leur indexation ?

- □ Faut-il vraiment rediriger vos pages privées vers du contenu marketing plutôt qu'un simple login ?

- □ Pourquoi Google refuse-t-il d'indexer les intranets d'entreprise ?

- □ Pourquoi vos URLs peuvent trahir vos données privées malgré un contenu protégé ?

- □ Faut-il vraiment tester son site en navigation privée pour évaluer sa visibilité SEO ?

- □ Google donne-t-il vraiment des conseils SEO privilégiés à ses propres équipes ?

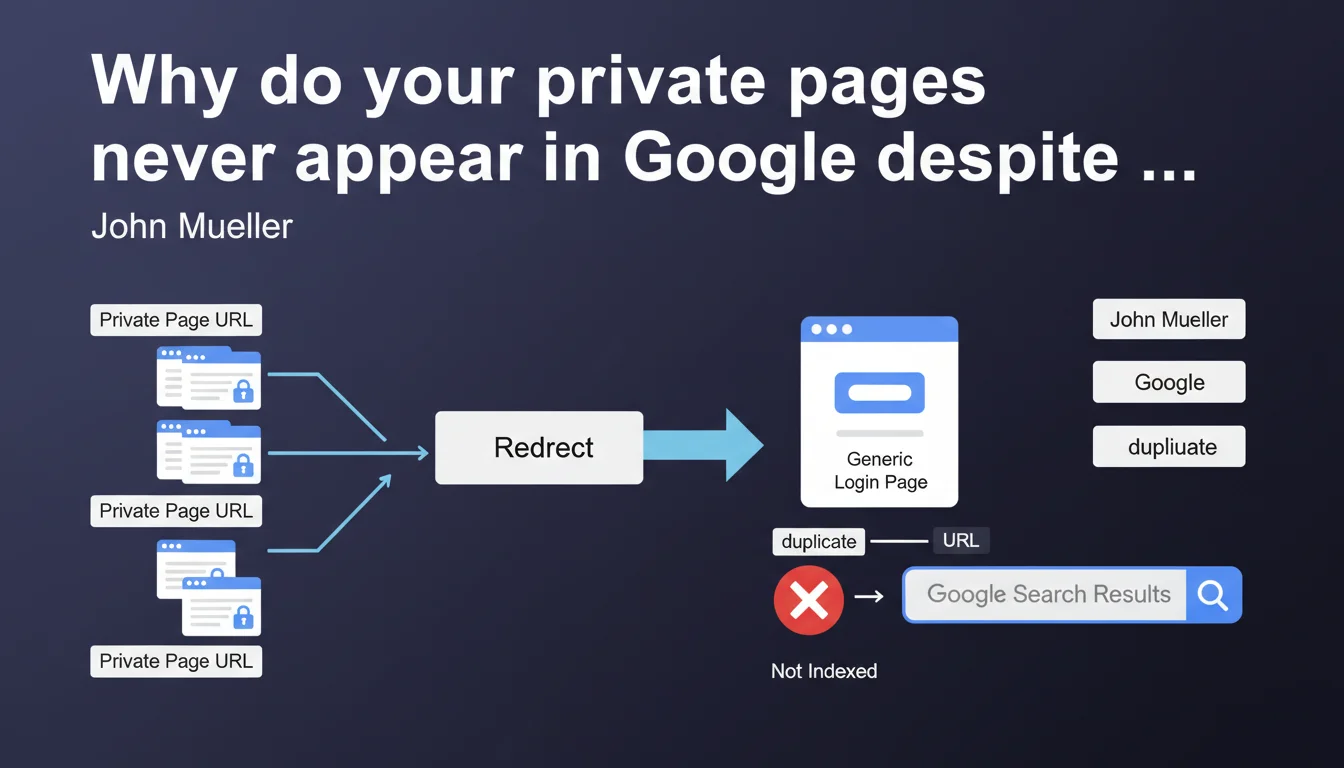

When all your private URLs redirect to a single generic login page, Google interprets these URLs as duplicates of the login page. The result: it's this login page that monopolizes indexation, at the expense of the actual content you were trying to protect. A situation that can seriously compromise your visibility if you don't correctly structure access to protected content.

What you need to understand

How does Google handle redirects to a single login page?

Imagine a website that protects 500 different URLs — product sheets, premium articles, member areas. If they all point to the same generic login page (say /login), Google will crawl these 500 URLs, notice they all display the same content, and conclude that they are duplicates.

The engine will then choose a canonical URL to represent this duplicated content — and it will likely be /login. The other 500 URLs? They will either be deindexed or ignored in the results. The content you were trying to protect simply disappears from the index.

Why does this situation harm the search experience?

Mueller talks about impact on user experience — and that's exactly it. An internet user searching for "your premium product X" lands on your generic login page in the SERPs. No context. No indication of what's hidden behind it. Just a login form.

Result: high bounce rate, frustration, and loss of qualified traffic. Google detects these negative signals and adjusts accordingly. You end up penalized twice: reduced visibility AND degraded performance on what remains indexed.

What's the difference with a well-designed architecture?

A correct structure doesn't redirect. It displays a contextual intermediate page for each protected content: title, description, teaser, and invitation to log in. Each URL retains its own identity, its unique content (even if partial), and can therefore be indexed normally.

Google understands that these are distinct pages with value — not simple duplicates of a generic entry point. And the user who lands on it knows exactly what they'll unlock by logging in.

- Systematic redirect to /login = all URLs merge into a single duplicate

- Contextual intermediate pages = each URL retains its uniqueness and ranking potential

- Google favors experiences where the user understands what they'll find behind the login

- The problem also affects intranets, B2B spaces, and poorly architected paid content

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Absolutely. We regularly see this pattern on SaaS sites, B2B platforms, or media with paywalls. The worst part? Some don't even realize that their thousands of protected URLs generate no organic traffic because they're all cannibalized by the /login page.

Google Search Console may show these URLs as "indexed," but in practice they never rank — because they're perceived as duplicates. And when you audit server logs, you find that Googlebot crawls these pages but gives them no weight.

What nuances should be added to this recommendation?

Let's be honest: not all protected content deserves to be indexed. If you have a strictly private member area with no SEO value (personal dashboards, account settings), blocking indexation via robots.txt or noindex remains the best option. No need to create intermediate pages for that.

The problem arises when you want this content to be discoverable — premium articles, reserved product sheets, recorded webinars. Then the generic redirect kills your strategy. And that's where Mueller's statement makes full sense.

In which cases does this logic not fully apply?

Sites with soft paywalls (limited to X articles per month) generally escape the problem if they serve full content to Googlebot via authorized cloaking (first-click-free, or structured Paywall schema). Google sees the real content, not the login barrier.

But beware — this exception works only if you follow Google's paywall guidelines. Otherwise, you risk being treated as abusive cloaking. And that's a guaranteed manual penalty.

Practical impact and recommendations

What concretely needs to be done to avoid this trap?

Stop using 302 or 301 redirects to a single login page whenever a protected URL is called. Instead, serve an intermediate page with a 200 OK code, which contains a preview of the content (title, meta, introduction, schema markup) and a CTA to log in.

This page must be unique enough for Google to distinguish it from others. If you have 100 premium products, you must have 100 different intermediate pages — not 100 clones with only the title changing.

For truly sensitive content or content without SEO value? Block access right from robots.txt or via noindex tag. Don't let Google crawl URLs you don't want indexed.

What mistakes to absolutely avoid?

Don't create "fake" intermediate pages with 3 lines of generic text and the same template everywhere. Google will detect the thin content and duplication, and you won't gain anything from it. Worse: you risk diluting your crawl budget on pages with no value.

Another trap: using a URL parameter for redirection (like /login?redirect=/premium-product). Google may ignore the parameter and treat all these variants as a single page. Same result: cannibalization by the login page.

How to verify that your site is compliant?

Crawl your site with Screaming Frog or Oncrawl while simulating Googlebot. Find all URLs that return a 3xx code to /login. If you find dozens or hundreds, you have a structural problem.

Also check in Search Console: inspect a few protected URLs and look at the HTML rendering that Google sees. If it systematically displays your login form without context, it means your architecture is failing the test.

- Audit all redirects to generic login pages

- Replace redirects with contextual intermediate pages (200 code)

- Ensure each intermediate page contains unique and relevant content

- Block indexation (robots.txt or noindex) of purely private spaces with no SEO value

- Implement Paywall schema markup if you use a subscription model

- Test Googlebot rendering via Search Console to validate that differentiated content is visible

- Monitor organic performance of protected URLs to detect any suspicious drops

🎥 From the same video 10

Other SEO insights extracted from this same Google Search Central video · published on 04/09/2025

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.