Official statement

What you need to understand

Why Does Google Crawl Pages That No Longer Exist?

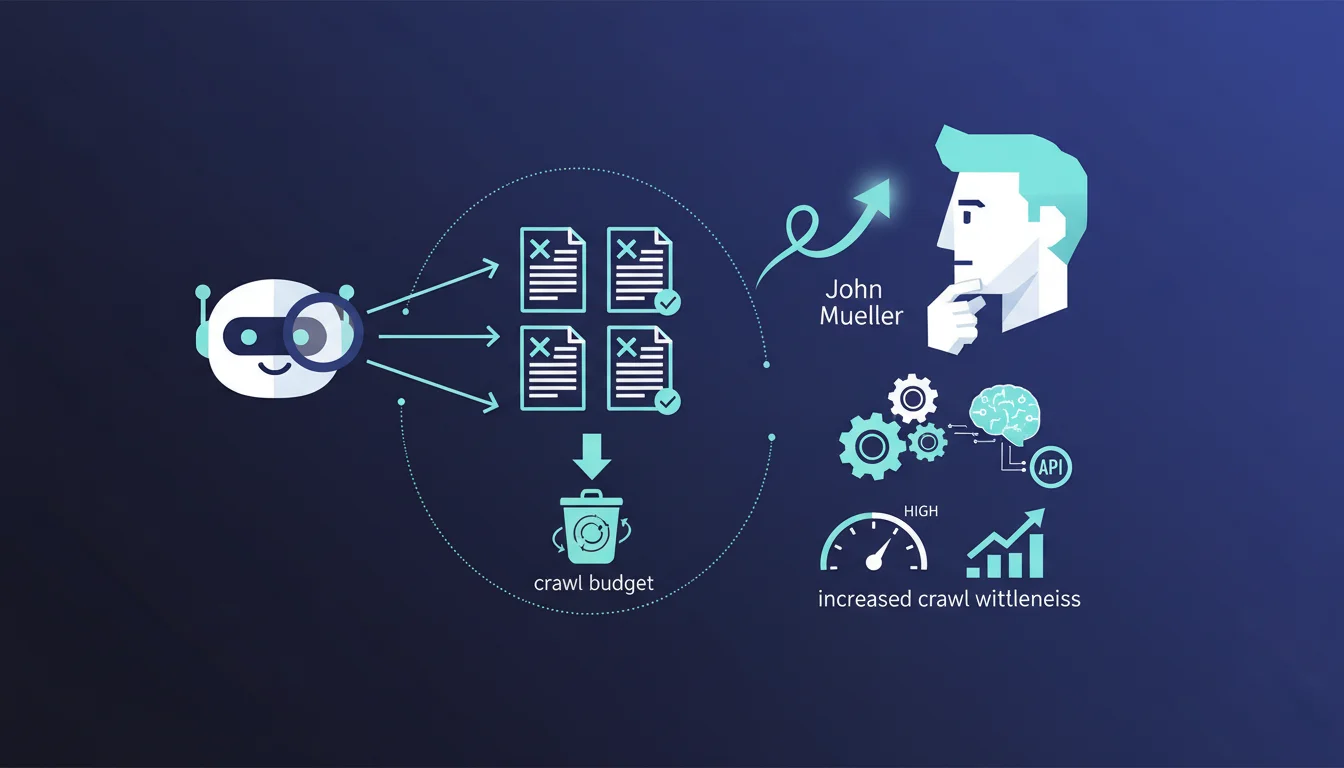

When Googlebot encounters a page returning a 404 status code, it doesn't immediately abandon it. The bot performs regular recrawls as a precaution, in case the disappearance was accidental or temporary.

This strategy allows Google to check whether the content has become available again, thus avoiding permanently losing pages that might have been deleted by mistake or during maintenance.

Do These Repeated Crawls Waste My Crawl Budget Unnecessarily?

Contrary to a common concern, these repeated visits to 404s are not a problematic waste of crawl budget. Google manages the recrawl frequency of these pages on its own.

According to Mueller, these crawls can even be interpreted as a positive signal: Google is willing to retrieve more content from your site, which indicates a certain level of trust in your domain.

What's the Difference Between a 404 Code and a 410 Code?

The 404 code indicates that a page cannot be found, while the 410 code signals a permanent deletion. In theory, a 410 should accelerate deindexing.

However, Mueller clarifies that the practical difference is minimal. Google might remove 410 pages from its index slightly faster, but the gain remains marginal for most sites.
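To make the 404 versus 410 distinction concrete, here is a minimal sketch of how a server might choose between the two codes. The path sets and the `status_for` function are hypothetical names used for illustration only, not part of any particular framework.

```python
from http import HTTPStatus

# Hypothetical data: in a real site these would come from a CMS or database.
LIVE_PAGES = {"/", "/products"}
PERMANENTLY_REMOVED = {"/old-campaign-2019"}


def status_for(path: str) -> HTTPStatus:
    """Pick the status code to return for a requested path."""
    if path in LIVE_PAGES:
        return HTTPStatus.OK
    if path in PERMANENTLY_REMOVED:
        # 410 Gone: the page was removed on purpose and will not come back.
        return HTTPStatus.GONE
    # 404 Not Found: the default for any unknown URL.
    return HTTPStatus.NOT_FOUND


print(int(status_for("/old-campaign-2019")), int(status_for("/typo-url")))
```

Since the practical gain from a 410 is marginal, keeping 404 as the default and reserving 410 for deliberate removals is a reasonable choice.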

- Googlebot recrawls 404s as a precaution against accidental deletions

- These repeated crawls don't harm the overall crawl budget

- A significant volume of 404 crawls can signal that Google values your site

- Switching from 404 to 410 provides few concrete advantages in most cases

- Intelligent recrawl management is automatic on Google's side

SEO Expert opinion

Is This Statement Consistent with Practices Observed in the Field?

Yes. For years, server logs from audited sites have shown that Googlebot regularly revisits URLs returning 404s. This persistence is particularly noticeable on high-authority domains.

This statement confirms what experienced SEOs observe: Google adopts a cautious and progressive approach to errors. The search engine prefers to check multiple times rather than prematurely remove potentially relevant content.

What Important Nuances Should Be Added to This Statement?

While a few hundred regularly crawled 404s are normal, thousands of 404 URLs that Googlebot keeps requesting can still pose a problem. This typically reveals poorly planned architecture or a failed migration.

Moreover, not all 404s are created equal. 404 pages that used to receive many quality backlinks or organic traffic deserve special attention. It's preferable to redirect them with a 301 to relevant content rather than letting Google recrawl them indefinitely.

In What Cases Does This General Rule Not Apply?

On very large-scale sites (millions of pages), crawl budget becomes a critical resource. Every visit to a 404 is then a missed opportunity to crawl active, indexable content.

For small sites with few active pages, letting Google occasionally recrawl a few historical 404s has no impact. But for a marketplace or large media site, optimizing crawl toward strategic pages becomes essential.

Practical impact and recommendations

What Should You Actually Do With Your 404 Pages?

The priority is to identify strategic 404s: those that have external backlinks, have a significant traffic history, or match frequent searches. These pages should be redirected with a 301 to the most relevant content.

For 404s without particular value (old test URLs, expired temporary pages, etc.), the best approach is to leave them as 404s. Google will handle them naturally without intervention on your part.

Set up regular monitoring via Search Console to detect abnormal 404 spikes. A sudden increase often signals a technical problem requiring quick correction.
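One way to spot such a spike is to compare each day's 404 count with the average of the preceding days. The sketch below assumes you already have a list of daily 404 counts (for example from your own logs or a manual export); the function name, window, and thresholds are illustrative assumptions, not values recommended by Google.

```python
from statistics import mean


def find_404_spikes(daily_counts, window=7, factor=3.0, min_count=50):
    """Return the indexes of days whose 404 count looks abnormally high.

    A day is flagged when its count is at least `min_count` and more than
    `factor` times the average of the previous `window` days.
    """
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = mean(daily_counts[i - window:i])
        today = daily_counts[i]
        if today >= min_count and today > factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical daily counts: a quiet week, then a sudden jump on day 8.
counts = [10, 10, 10, 10, 10, 10, 10, 12, 200, 11]
print(find_404_spikes(counts))
```

The `min_count` floor keeps small sites from being alerted when the count moves from, say, 2 to 8 errors a day.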

What Mistakes Should You Absolutely Avoid in 404 Management?

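Never turn your 404s into soft 404s by displaying an error page that returns a 200 status code. This sends Google a contradictory signal: the page says "not found" while the server says "OK". Google then has to guess, reports the URL as a soft 404 in Search Console, and may keep crawling it as if it were a live page.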
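A rough way to check your own pages for this mistake is to look for a 200 response whose body reads like an error page. The following is only a heuristic sketch: the phrase list and function name are assumptions, and Google's own soft 404 detection is not public.

```python
# Hypothetical phrases that typically appear on error pages.
ERROR_PHRASES = ("page not found", "no longer available", "error 404")


def looks_like_soft_404(status_code: int, body: str) -> bool:
    """Flag responses that say "OK" in the header but "error" in the body."""
    if status_code != 200:
        # A real 404 or 410 is the correct behaviour, not a soft 404.
        return False
    text = body.lower()
    return any(phrase in text for phrase in ERROR_PHRASES)


print(looks_like_soft_404(200, "<h1>Page Not Found</h1>"))
print(looks_like_soft_404(404, "<h1>Page Not Found</h1>"))
```

You would feed this function the status code and HTML returned by a request to a URL that should not exist, such as a random path on your domain.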

Avoid mass redirects of all your 404s to the homepage. This technique creates a poor user experience and unnecessarily dilutes PageRank. Only redirects to genuinely relevant content make sense.
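The alternative to a blanket homepage redirect is an explicit redirect map: only URLs with a genuinely relevant replacement get a 301, and everything else keeps returning 404. A minimal sketch, with hypothetical paths and a hypothetical `resolve_missing` helper:

```python
# Hypothetical map of removed URLs to their closest relevant replacement.
REDIRECTS = {
    "/old-guide": "/guides/new-guide",
    "/summer-sale-2022": "/sales",
}


def resolve_missing(path: str):
    """Return (status, location) for a URL that no longer exists."""
    target = REDIRECTS.get(path)
    if target is not None:
        return 301, target
    # No relevant replacement: stay with a plain 404 rather than
    # sending the visitor to the homepage.
    return 404, None


print(resolve_missing("/old-guide"))
print(resolve_missing("/test-page-123"))
```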

Don't use the 410 code systematically thinking you're optimizing your crawl. The practical difference from 404 is negligible, and you risk declaring a permanent removal for pages that might deserve a future redirect.

How Can I Check and Optimize Error Handling on My Site?

Start by extracting the complete list of error URLs from Search Console and your server logs. Cross-reference this data with your backlink profile to identify priorities.
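For the server log side, the extraction can be sketched as a small parser that counts Googlebot requests ending in a 404 or 410. It assumes logs in the common Apache/Nginx "combined" format; the regular expression and function name are illustrative and will need adjusting to your own log layout.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user agent fields
# of a "combined" format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_error_hits(lines):
    """Count Googlebot requests per path that returned 404 or 410."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        if match["status"] in {"404", "410"} and "Googlebot" in match["agent"]:
            hits[match["path"]] += 1
    return hits


# Hypothetical sample lines for illustration.
sample = [
    '66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /old-page HTTP/1.1" '
    '404 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
    '+http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Oct/2023:13:56:01 +0000] "GET /old-page HTTP/1.1" '
    '404 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
print(googlebot_error_hits(sample).most_common(5))
```

Keep in mind that the user agent string can be spoofed, so this count is an approximation unless you also verify the requesting IP addresses.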

Segment your 404s into categories: those to redirect (with backlinks or value), those to leave (without impact), and those revealing technical bugs to fix. This structured approach optimizes your time.
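The three categories above can be expressed as a simple rule. In this sketch, the `ErrorUrl` fields, the thresholds, and the idea of using leftover internal links as a proxy for "technical bug" are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ErrorUrl:
    path: str
    backlinks: int             # external links still pointing at the URL
    past_monthly_visits: int   # organic traffic before the page disappeared
    linked_internally: bool    # your own site still links to it


def segment(url: ErrorUrl, min_backlinks: int = 1, min_visits: int = 100) -> str:
    """Sort a 404 URL into 'fix', 'redirect' or 'leave'."""
    if url.linked_internally:
        # Internal links to a missing page point to a bug on your side.
        return "fix"
    if url.backlinks >= min_backlinks or url.past_monthly_visits >= min_visits:
        return "redirect"
    return "leave"


print(segment(ErrorUrl("/old-guide", backlinks=12, past_monthly_visits=0,
                       linked_internally=False)))
```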

- Audit new 404 pages in Search Console monthly

- Analyze 404s that still receive quality external backlinks

- Implement 301 redirects only to relevant content

- Create a custom 404 page with useful navigation suggestions

- Monitor server logs to identify crawl patterns on errors

- Prioritize fixing technical causes generating mass 404s

- Document important redirects to facilitate future maintenance

- Avoid soft 404s and automatic redirects to the homepage
