Official statement

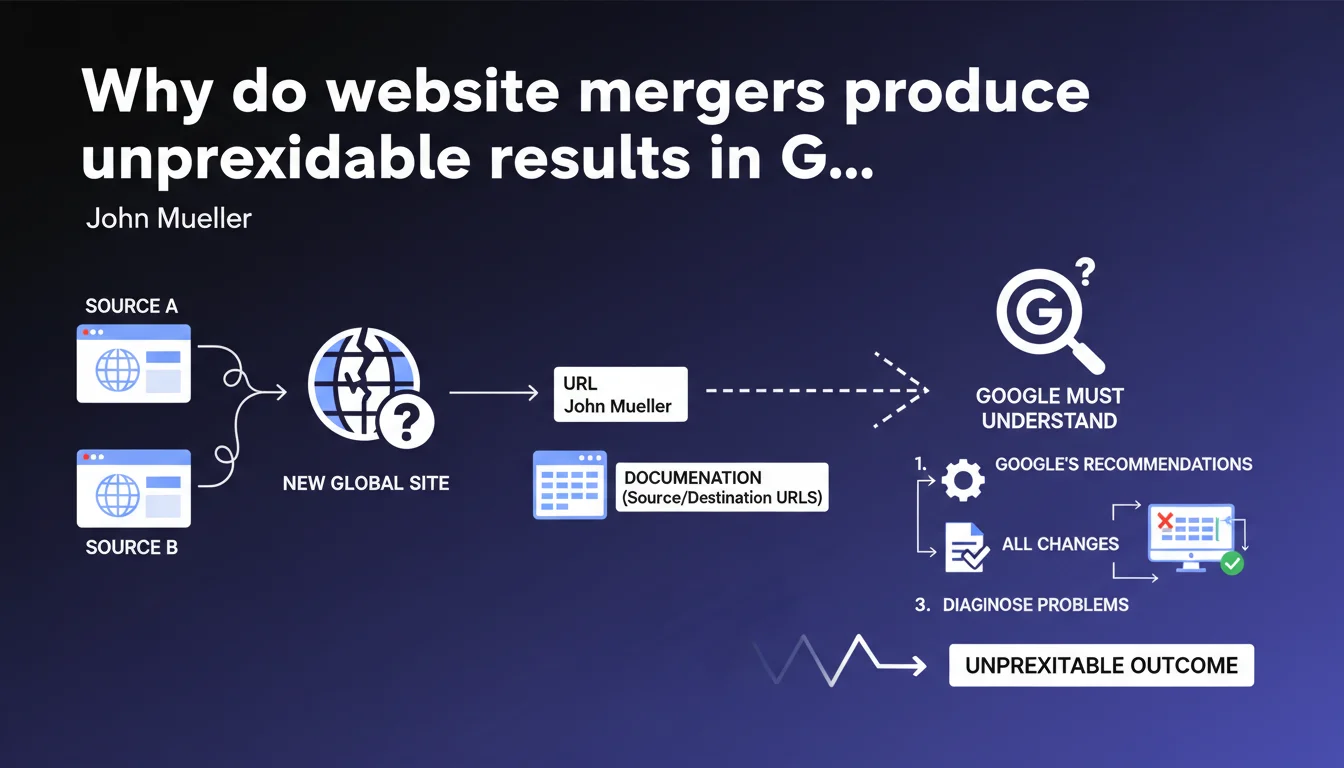

Merging two websites creates a new entity in Google's view, with behavior impossible to predict with certainty. The search engine must rebuild its overall understanding of the resulting site. Comprehensive documentation of redirects and URL changes becomes indispensable for diagnosing post-merger issues.

What you need to understand

Why does Google treat a merger as a brand new website?

When two sites merge, Google loses its historical reference points. This isn't a simple addition of two known entities — the algorithm must re-evaluate the authority, thematic relevance, link structure, and trust signals of the resulting site.

The problem is that signals from both source sites blend together, with no guarantee that any of them is preserved. Site A with strong authority plus site B with relevant content does not automatically produce a site C that combines both advantages. Google recalculates everything from scratch.

What makes the outcome concretely unpredictable?

The variables at play exceed what you can control: quality history, contradictory backlink profiles, content cannibalization between the two sites, crawl time needed to re-index everything. Two apparently identical mergers can produce radically different results.

Google provides no predictive model. You can scrupulously follow technical recommendations and still observe a temporary drop in visibility — or conversely, an unexpected gain on certain queries.

Why does documentation become critical?

Without precise traceability, diagnosing problems borders on guesswork. A URL that loses traffic after a merger could be a victim of a misconfigured redirect, unresolved duplicate content, or simply a legitimate algorithmic re-evaluation.

Documentation allows you to differentiate a fixable technical bug from a structural positioning change. It becomes your only reference point when KPIs plummet.
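The distinction above between a fixable technical bug and a structural re-evaluation can be sketched as a small triage function. This is a minimal illustration, not a diagnostic standard: the `Observation` fields and the `triage` function are hypothetical names, and the rules assume the migration uses 301 redirects as described in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """What was measured for one old URL after the merger (hypothetical fields)."""
    expected_destination: str     # destination recorded in the documented mapping
    http_status: int              # status code returned by the old URL
    final_url: Optional[str]      # URL the redirect actually lands on, if any
    canonical_url: Optional[str]  # rel=canonical declared on the landing page, if any


def triage(obs: Observation) -> str:
    """Classify a traffic drop as a technical fault or a possible re-evaluation."""
    # Assumption: every old URL is supposed to answer with a 301.
    if obs.http_status != 301:
        return "technical: old URL does not return a 301"
    if obs.final_url != obs.expected_destination:
        return "technical: redirect lands on an undocumented URL"
    if obs.canonical_url is not None and obs.canonical_url != obs.final_url:
        return "technical: canonical points elsewhere (possible duplicate)"
    # Nothing in the documentation explains the drop.
    return "no technical fault found: possible algorithmic re-evaluation"
```

Without the documented `expected_destination`, the second check cannot be made at all, which is the practical reason the mapping matters.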

- Merging = creating a new site in Google's eyes, not adding two existing sites together

- The outcome is fundamentally unpredictable despite respecting best practices

- Documenting each source/destination URL is the only way to diagnose post-merger anomalies

- Google must rebuild its overall understanding of the merged site, which takes time

SEO Expert opinion

Does this statement really reflect what we observe in the field?

Let's be honest: yes, and it can be brutal sometimes. I've seen technically perfect mergers lose 30% of traffic for 3 months before stabilizing. Others recover in 3 weeks. The luck factor plays a bigger role than we want to admit.

What Mueller doesn't explicitly state is that site size plays an enormous role. Merging two small sites (< 500 pages) is generally less chaotic than merging two giants with complex histories. Crawl budget becomes a real problem in the latter case.

What nuances should we add to this recommendation?

Documentation is good. But document what, exactly? Mueller remains vague. Concretely: source/destination URL mapping, redirect launch dates, position evolution by semantic cluster, server logs to verify actual crawling, history of backlinks retained vs. lost.

The tricky part is that Google provides no timeline for stabilization. Two weeks? Three months? Six months? It is impossible to predict, which makes post-merger management extremely difficult when you have to justify decisions to leadership. [To verify] against publicly documented cases, but field feedback suggests a minimum of 6-12 weeks for medium-sized sites.

In which cases is this unpredictability overestimated?

When the two sites have strictly identical topics and similar backlink profiles, the merger tends to be more stable. Maximum unpredictability occurs when merging sites with different SEO DNA: a corporate site + an editorial blog, for example.

Another more stable case is the asymmetric merger, where a small site is absorbed by a large one. The larger site generally maintains its momentum, while the smaller one just adds a few extra pages. It's the 50/50 merger that creates algorithmic chaos.

Practical impact and recommendations

What should you set up before merging two sites?

Audit both sites separately: backlink profile, internal link structure, position distribution, potential cannibalization between similar content. If both sites rank for the same keywords, anticipate brutal consolidation — and document which content you keep.

Create an exhaustive URL mapping in a spreadsheet with columns: source URL, destination URL, current HTTP status, positions before merger, incoming backlinks, monthly traffic. This file becomes your post-merger reference for any diagnosis.
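If the mapping lives in a CSV file rather than a hand-edited spreadsheet, it can be checked and reloaded by scripts later. The sketch below is one possible layout using the columns listed above; the field names, the `UrlMapping` class, and the example URLs are assumptions made for illustration.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class UrlMapping:
    """One row of the pre-merger mapping (column names are hypothetical)."""
    source_url: str
    destination_url: str
    current_http_status: int
    position_before_merger: float
    incoming_backlinks: int
    monthly_traffic: int


def write_mapping(rows, handle):
    """Write mapping rows as CSV with a header line."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(UrlMapping)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


def read_mapping(handle):
    """Read the CSV back into UrlMapping rows, restoring numeric types."""
    rows = []
    for record in csv.DictReader(handle):
        rows.append(UrlMapping(
            source_url=record["source_url"],
            destination_url=record["destination_url"],
            current_http_status=int(record["current_http_status"]),
            position_before_merger=float(record["position_before_merger"]),
            incoming_backlinks=int(record["incoming_backlinks"]),
            monthly_traffic=int(record["monthly_traffic"]),
        ))
    return rows


# Usage: round-trip one made-up row through an in-memory CSV.
example = [UrlMapping("https://site-a.example/guide",
                      "https://merged.example/guide", 200, 3.2, 14, 1200)]
buffer = io.StringIO()
write_mapping(example, buffer)
buffer.seek(0)
assert read_mapping(buffer) == example
```

Keeping the numeric columns typed makes it easier to sort by traffic or backlinks when deciding which URLs to check first after launch.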

What errors should you absolutely avoid during the merger?

Never launch a merger without a pre-production environment to test redirects. A classic mistake: redirecting to the homepage instead of an equivalent page, which dilutes authority and loses thematic relevance.
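Part of that pre-production testing can be done on the redirect map itself, before any request is sent. The following sketch flags the homepage mistake described above, plus redirect chains and loops; `audit_redirects` and the example URLs are hypothetical, and the check only inspects the planned mapping, not what the server actually returns.

```python
from urllib.parse import urlsplit


def is_homepage(url):
    """True when the URL path is empty or '/' (query and fragment ignored)."""
    return urlsplit(url).path in ("", "/")


def audit_redirects(redirects):
    """Return {source_url: [issue, ...]} for a source -> destination dict.

    Flags three patterns: a deep page sent to a homepage, a chain
    (the destination is itself redirected), and a loop.
    """
    issues = {}
    for source, destination in redirects.items():
        found = []
        if is_homepage(destination) and not is_homepage(source):
            found.append("redirects to homepage")
        # Follow the planned redirects, stopping if a URL is visited twice.
        seen = {source}
        current = destination
        hops = 1
        while current in redirects:
            if current in seen:
                found.append("redirect loop")
                break
            seen.add(current)
            current = redirects[current]
            hops += 1
        else:
            # The walk ended on a URL that is not redirected further.
            if hops > 1:
                found.append(f"redirect chain ({hops} hops)")
        if found:
            issues[source] = found
    return issues


planned = {
    "https://old.example/pricing": "https://new.example/",
    "https://old.example/blog/a": "https://old.example/blog/b",
    "https://old.example/blog/b": "https://new.example/blog/b",
}
print(audit_redirects(planned))
```

A clean result here does not replace a live test in pre-production, since it says nothing about server configuration or status codes.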

Another trap: merging content without a clear strategy. If two similar pages exist, choose which one becomes canonical BEFORE the merger. Letting Google decide = algorithmic roulette.

How do you monitor the merger once it launches?

Monitor daily for at least 2 weeks: crawl rate on old URLs (requests should increasingly be answered with 301s), position evolution on your top 50 keywords, 404 errors in Search Console, backlinks detected on new URLs.

If crawling of the old URLs remains high after 3 weeks, Google is probably not picking up your redirects correctly. Check the server logs and the technical implementation of the 301s.
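The server-log check can be approximated with a short script. This sketch assumes access logs in the Common or Combined Log Format and identifies the crawler by a plain substring match on the user agent, which does not prove the request really came from Google; the function names and the sample line are illustrative.

```python
import re
from collections import Counter

# Matches the request and status fields of a Common/Combined Log Format line.
LOG_PATTERN = re.compile(r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) ')


def status_counts_for_old_urls(log_lines, old_paths, user_agent_token="Googlebot"):
    """Count response statuses for crawler hits on pre-merger paths."""
    counts = Counter()
    for line in log_lines:
        # Assumption: a substring match is good enough for a first look.
        if user_agent_token not in line:
            continue
        match = LOG_PATTERN.search(line)
        if match and match.group("path") in old_paths:
            counts[int(match.group("status"))] += 1
    return counts


def share_of_301(counts):
    """Fraction of counted hits answered with a 301 (0.0 when there are none)."""
    total = sum(counts.values())
    return counts[301] / total if total else 0.0


sample = [
    '66.249.66.1 - - [10/Nov/2022:10:00:00 +0000] "GET /old-guide HTTP/1.1" 301 0 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
counts = status_counts_for_old_urls(sample, {"/old-guide"})
print(dict(counts), share_of_301(counts))
```

A low 301 share on old paths points to the redirect implementation, while a high share with persistent crawling suggests Google is simply still working through the old URLs.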

- Create a complete source/destination URL mapping with SEO metrics

- Audit both sites' backlink profiles to anticipate conflicts

- Test redirects in pre-production before going live

- Define a content consolidation strategy for similar pages

- Monitor crawl, positions, and errors daily for 2-3 weeks

- Document all observed anomalies with precise timestamps

- Plan a quick rollback strategy if critical collapse occurs

❓ Frequently Asked Questions

How long does it take Google to stabilize a merged site?

Can you predict the SEO impact of a merger before launching it?

Should you merge similar content or keep it separate?

Do 301 redirects transfer 100% of authority during a merger?

What should you do if traffic drops sharply after the merger despite correct redirects?

Other SEO insights extracted from this same Google Search Central video · published on 21/10/2022
