Official statement

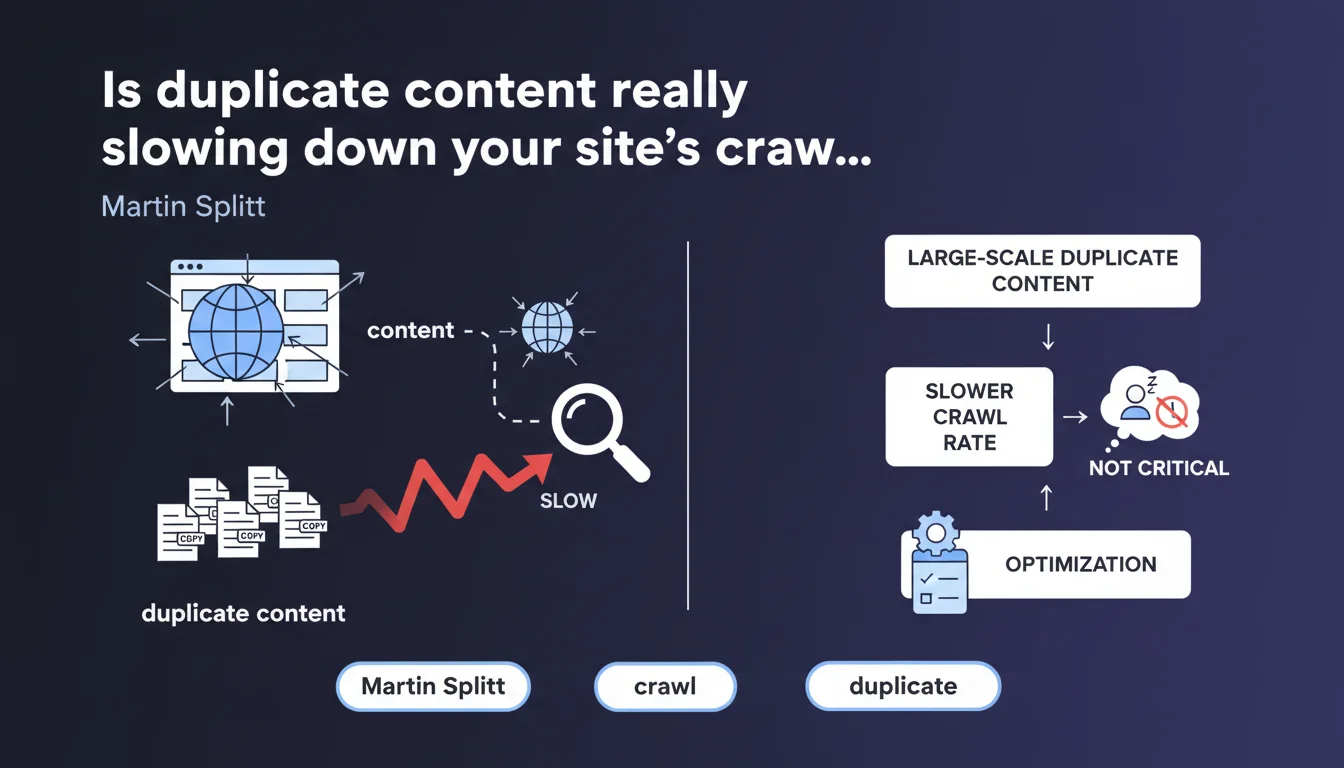

Google confirms that large-scale duplicate content slows down crawling, without constituting a penalty. Martin Splitt downplays the impact — "nothing that should keep you awake at night" — but still encourages optimization. A typically fuzzy position that deserves closer examination.

What you need to understand

What exactly does Google's statement say?

Martin Splitt acknowledges that large-volume duplicate content can cause crawl slowdown. He immediately clarifies that this is not a major concern, but it remains relevant in an optimization strategy.

The wording deliberately remains vague: at what volume does "large scale" begin? What magnitude of slowdown are we talking about? Google provides no figures, no thresholds.

Why does duplicate content affect crawling?

When Googlebot discovers pages with identical or near-identical content, it must analyze, compare, and determine which version to keep in the index. This processing consumes crawl budget — a limited resource, especially on large sites.

The bot wastes time on redundant URLs instead of exploring high-value pages. The problem mainly arises when thousands of duplicate pages saturate the site: e-commerce facets, URL parameters, printable versions, poorly managed pagination.

What's the difference between this and a duplicate content penalty?

Google insists: this is not an algorithmic penalty. Your site won't be penalized in rankings simply because it contains duplicate content.

However, the indirect effect exists: fewer pages crawled = fewer pages indexed quickly = less potential visibility. It's a mechanical bottleneck, not a punishment.

- Large-scale duplicate content slows down page crawling without constituting a direct penalty

- The impact manifests as inefficient consumption of available crawl budget

- Google provides no numerical threshold to define "large scale"

- Slowdown primarily affects large sites with thousands of redundant URLs

- High-value pages may be explored less frequently due to time wasted on duplicates

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On large e-commerce or media sites, we indeed observe that crawl rate drops when thousands of facets, pagination pages, or URL parameters generate duplicates. Server logs clearly show this: Googlebot returns less frequently to strategic pages.

But Splitt's wording downplays the problem. It may be "nothing that should keep you awake at night" in general, but on a 100,000-page site with 60% duplicate content, it can seriously hurt the indexation of new and deep pages. [Needs verification]: Google provides no figures on the critical threshold.

Why does Google remain so vague about thresholds?

Because setting a percentage or volume would trigger gaming behaviors: "OK, so I can get away with 30% duplicate without risk." Google prefers to leave ambiguity so everyone optimizes to the maximum.

Another reason: crawl budget varies based on site popularity, freshness, and speed. A universal threshold would be meaningless. But this opacity complicates diagnosis for practitioners.

What nuances should be considered?

Not all duplicates are equal. A site with 500 product pages that are 95% identical will pose more problems than a blog with a few redundant "About" or legal notice pages. The absolute volume matters, but so does the proportion relative to unique content.

Moreover, some crawl tools (Screaming Frog, OnCrawl) flag duplication that Google ignores in practice: metadata, navigation blocks, footers. You must distinguish minor structural duplication from massive editorial duplication.
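One way to put a number on "95% identical" is to compare pages by their overlapping word sequences. The following is a minimal sketch, not how Google measures duplication: it assumes three-word shingles and Jaccard similarity, and the sample texts are invented for illustration.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams (shingles) in text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0  # two empty texts are treated as identical
    return len(sa & sb) / len(sa | sb)


# Invented sample texts: two product variants and an unrelated page.
red = "Cotton t-shirt with round neck, machine washable, available in red"
blue = "Cotton t-shirt with round neck, machine washable, available in blue"
about = "Our company was founded by two friends who love cycling"

print(round(jaccard_similarity(red, blue), 2))   # high: near-duplicates
print(round(jaccard_similarity(red, about), 2))  # no shared shingles
```

On real pages it probably makes sense to run this on the main content only, after stripping navigation and footer text, so that template blocks do not inflate the score.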

Practical impact and recommendations

What should you do concretely to limit the impact?

First, audit your site to identify duplicate sources: e-commerce facets, infinite pagination, sort parameters, AMP/mobile/desktop versions, content syndication. Use Screaming Frog or a crawl tool to map duplicates.
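As a rough illustration of the mapping step, the sketch below groups URLs whose extracted text is identical after whitespace and case normalization. It assumes you already have a `pages` dictionary of URL to body text from your crawler export; the URLs and texts here are hypothetical. Exact hashing only catches strict duplicates, not near-duplicates.

```python
import hashlib
from collections import defaultdict


def content_fingerprint(body: str) -> str:
    """Hash the text after collapsing whitespace and case differences."""
    normalized = " ".join(body.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def group_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Return groups of two or more URLs whose bodies share a fingerprint."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, body in pages.items():
        groups[content_fingerprint(body)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical crawl export: URL -> extracted main text.
pages = {
    "https://example.com/shoes": "Running shoes for every terrain.",
    "https://example.com/shoes?sort=price": "Running  shoes for every terrain.",
    "https://example.com/about": "We are a small team of runners.",
}

for group in group_duplicates(pages):
    print(group)
```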

Next, canonicalize intelligently. The rel=canonical tag should point to the reference version. If you have 50 variants of a product page (color, size), only one URL should be indexable.
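To make the idea concrete, here is a small sketch that derives one reference URL from its variants and builds the matching tag. The parameter names in `VARIANT_PARAMS` and the `utm_` prefix rule are assumptions for the example; which parameters are safe to drop depends entirely on your own site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only create variants of the same page.
VARIANT_PARAMS = {"color", "size", "sort"}


def canonical_url(url: str) -> str:
    """Drop variant and tracking parameters, sort the rest, remove the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in VARIANT_PARAMS and not key.startswith("utm_")
    ]
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))


def canonical_link_tag(url: str) -> str:
    """Build the <link rel="canonical"> element for the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


print(canonical_url("https://example.com/tshirt?color=red&size=m&utm_source=news"))
print(canonical_link_tag("https://example.com/search?q=shoes&sort=price"))
```

Every variant of the product page then declares the same reference URL, which is the consistency the paragraph above asks for.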

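For e-commerce facets: block crawling of low-value combinations via robots.txt, or leave them crawlable and apply noindex to keep them out of the index. The two do not combine, because Googlebot cannot read a noindex on a URL that robots.txt prevents it from fetching. Where filters do not need their own URLs, apply them with client-side JavaScript rather than with crawlable links: Googlebot follows <a> elements that carry an href attribute, not filters that are triggered only by script events.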
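Before deploying robots.txt rules for facets, it can help to check which URLs they would actually block. The sketch below uses Python's standard `urllib.robotparser`; the `/filter/` and `/print/` paths and the sample URLs are hypothetical. Note that this standard-library parser does simple prefix matching and does not implement the `*` and `$` wildcards that Google supports, so it is only a sanity check for prefix rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: facet and printable URLs live under dedicated prefixes.
ROBOTS_TXT = """\
User-agent: *
Disallow: /filter/
Disallow: /print/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


urls = [
    "https://example.com/products/tshirt",
    "https://example.com/filter/color-red/size-m/",
    "https://example.com/print/tshirt",
]

for url in blocked_urls(ROBOTS_TXT, urls):
    print("blocked:", url)
```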

What mistakes must you avoid absolutely?

Don't canonicalize haphazardly. If page A points to B via canonical, and B points to C, you create a canonical chain. Since rel=canonical is a hint rather than a binding directive, Google may ignore it.

Also avoid massive noindex on frequently crawled pages. Googlebot has to keep fetching those pages to see the noindex, so you're still spending crawl budget without benefit. Better to block properly via robots.txt or prevent URL generation altogether.

And above all, don't confuse duplicate content with thin content. A page that is partly duplicated but still carries substantial unique content poses less of a problem than a unique page with no value.
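Chains like A → B → C are easy to detect once you have a mapping of each URL to the canonical it declares. The sketch below assumes such a mapping (for instance exported from a crawler); the paths are invented. It flags any URL whose canonical is itself canonicalized elsewhere, as well as loops.

```python
def canonical_path(url: str, canonicals: dict[str, str]) -> tuple[list[str], bool]:
    """Follow declared canonicals from url; return (path, is_loop)."""
    path = [url]
    while True:
        # A URL with no declaration is treated as self-canonical.
        target = canonicals.get(path[-1], path[-1])
        if target == path[-1]:
            return path, False  # chain ends on a self-referencing URL
        if target in path:
            return path, True   # we came back to a visited URL: loop
        path.append(target)


def find_chains(canonicals: dict[str, str]) -> dict[str, list[str]]:
    """Return URLs whose canonical needs more than one hop, or that loop."""
    problems = {}
    for url in canonicals:
        path, is_loop = canonical_path(url, canonicals)
        if is_loop or len(path) > 2:
            problems[url] = path
    return problems


# Hypothetical declarations: page -> URL in its rel=canonical.
canonicals = {
    "/a": "/b", "/b": "/c", "/c": "/c",  # chain: /a -> /b -> /c
    "/x": "/y", "/y": "/x",              # loop
    "/p": "/q",                          # fine: a single hop
}

for url, path in find_chains(canonicals).items():
    print(url, "->", " -> ".join(path))
```

The fix for each flagged URL is to point it directly at the last URL of its path, or to break the loop by choosing one reference version.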

How can you verify your site is optimized?

Analyze your server logs over at least 30 days. What proportion of Googlebot hits target strategic pages versus redundant ones? If less than 50% of crawl targets your high-value pages, you have room for optimization.
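The proportion described above can be computed from access logs with a few lines of code. This sketch assumes the common "combined" log format and treats any request whose user agent contains "Googlebot" as Googlebot; user agents can be spoofed, so a real audit would also verify the requesting IP. `STRATEGIC_PREFIXES` and the sample log lines are hypothetical.

```python
import re

# Matches the request, status, size, referrer and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Assumption: high-value pages live under these path prefixes.
STRATEGIC_PREFIXES = ("/products/", "/guides/")


def strategic_crawl_share(lines: list[str]) -> float:
    """Share of Googlebot hits (0.0 to 1.0) that target strategic paths."""
    total = strategic = 0
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        total += 1
        if match["path"].startswith(STRATEGIC_PREFIXES):
            strategic += 1
    return strategic / total if total else 0.0


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [01/Dec/2024:10:00:00 +0000] "GET /products/tshirt HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Dec/2024:10:00:05 +0000] "GET /filter/color-red/ HTTP/1.1" 200 4096 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Dec/2024:10:00:09 +0000] "GET /print/tshirt HTTP/1.1" 200 2048 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [01/Dec/2024:10:00:12 +0000] "GET /guides/sizing HTTP/1.1" 200 8192 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [01/Dec/2024:10:00:15 +0000] "GET /products/tshirt HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]

print(f"{strategic_crawl_share(sample):.0%} of Googlebot hits target strategic pages")
```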

Also use Search Console, specifically the Crawl Stats report. A steadily declining crawl rate, coupled with important pages not being indexed, may signal a duplication problem consuming your budget.

- Audit sources of duplicate content (facets, pagination, URL parameters)

- Implement coherent canonicals across all page variants

- Block crawling of low-value URLs via robots.txt, or keep them out of the index with noindex (not both on the same URL)

- Prioritize client-side JavaScript for dynamic e-commerce filters

- Avoid canonical chains (A → B → C) that render the directive ineffective

- Analyze server logs to measure crawl proportion on strategic pages

- Monitor the Crawl Stats report in Search Console to detect crawl drops

- Distinguish minor structural duplication from massive editorial duplication

❓ Frequently Asked Questions

Can duplicate content lead to a Google penalty?

How many duplicate pages count as "large scale"?

Is the canonical tag enough to solve the crawl problem?

How can I tell whether my site is affected by a duplication and crawl problem?

Does duplication in navigation blocks or the footer count too?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 12/11/2024
