Official statement

What you need to understand

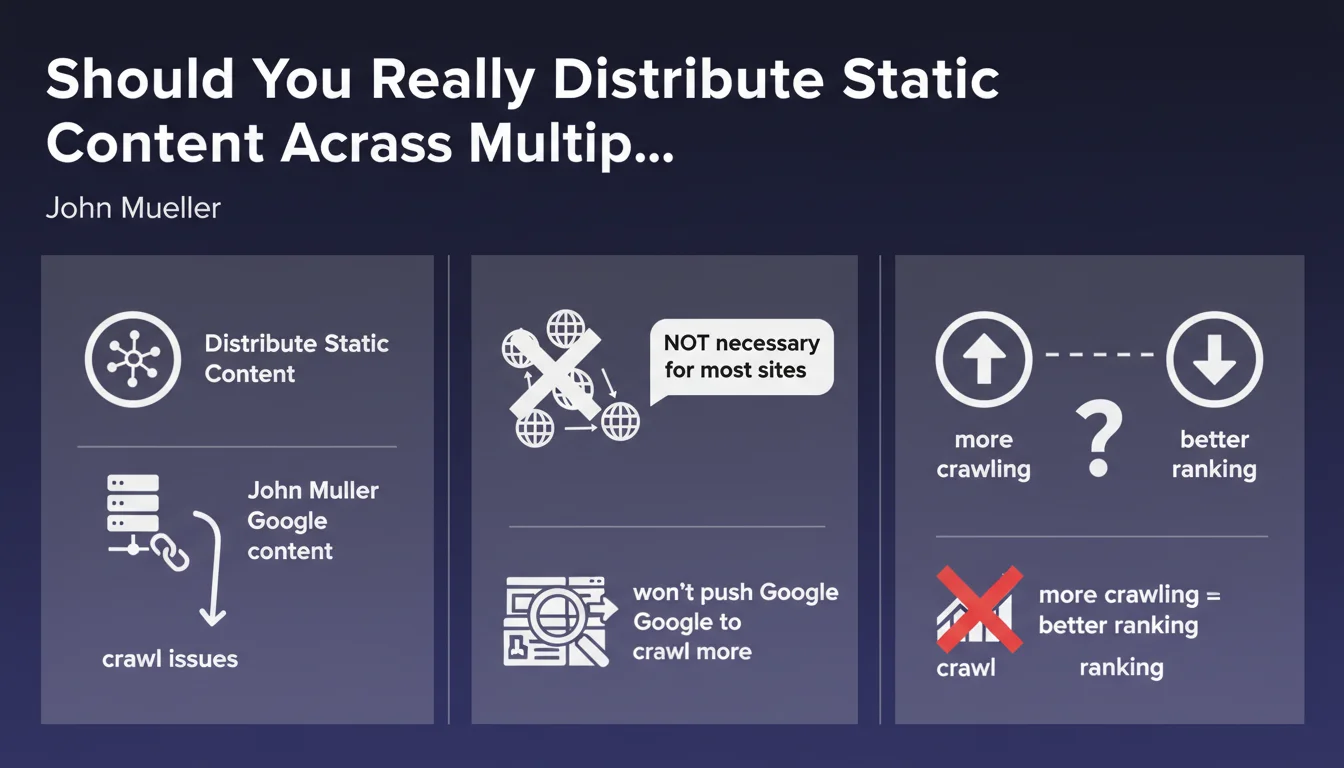

Google points to a technique sometimes used in technical SEO: serving static resources (images, CSS, JavaScript) from subdomains or separate domains. The original goal is to work around the limit browsers place on simultaneous connections per host and, potentially, to make better use of crawl budget.

However, the clarification provided is essential: this approach doesn't convince Google to crawl more of your pages. The crawl budget allocated to your site won't be increased simply because you distribute your static resources. Google manages its crawling based on multiple factors such as site authority, content freshness, and overall quality.

More importantly, more crawling does not guarantee better rankings. Crawling is a prerequisite for indexing, but it is quality, relevance, and user experience signals that determine rankings.

- Distribution across multiple domains mainly concerns very large sites with proven crawl issues

- This technique doesn't increase the crawl budget allocated by Google

- More crawled pages ≠ better rankings in search results

- The majority of sites don't need this optimization

SEO Expert opinion

This clarification is particularly welcome because it demystifies a practice that is often misunderstood. Many SEOs still treat static resource distribution as an optimization to apply everywhere, when it is actually a solution to a specific problem: sites with millions of pages and real crawl constraints.

In practice, I observe that medium-sized sites (fewer than 100,000 pages) generally see no measurable SEO benefit from this approach. On the other hand, it can complicate technical configuration, increase the risk of CORS errors, and make analytics tracking more complex. It is rarely worth the trouble.

The real benefits of this technique are mainly on the user performance side (download parallelization, CDN optimization) rather than pure SEO. If you implement it, do it for the right reasons.

Practical impact and recommendations

- Don't implement static resource distribution if your site has fewer than 50,000 indexable pages

- First analyze your actual crawl budget via Search Console (Crawl Stats report) before making any decision

- Verify that your strategic pages are being crawled regularly - this is more important than total volume

- Optimize your internal linking structure and reduce click depth if certain pages aren't being explored

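- Keep unnecessary URLs (facets, duplicates) out of the crawl with robots.txt rather than distributing resources. Keep in mind that noindex removes a page from the index but does not stop Googlebot from fetching it, and that Googlebot cannot read a noindex on a URL blocked in robots.txt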

- Use a high-performance CDN for your static resources - it benefits UX without complicating architecture

- Don't rely on more crawling to improve your rankings - work instead on content quality and relevance

- If you genuinely have a crawl issue on a very large site (e-commerce, media, directories), document it precisely with data before taking action
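
The click-depth recommendation above can be checked with a breadth-first search over the internal link graph. The following is a minimal sketch, assuming you already have a crawl export mapping each page to the pages it links to; the `site_links` data, the `click_depths` function name, and the depth threshold of 3 are all illustrative assumptions, not values from Google.

```python
from collections import deque


def click_depths(links, start):
    """Return the minimum number of clicks from `start` to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # The first visit in a breadth-first search is the shortest path.
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/category/shoes", "/about"],
    "/category/shoes": ["/category/shoes?page=2", "/product/a"],
    "/category/shoes?page=2": ["/product/b"],
    "/product/b": ["/product/c"],
    "/orphan": ["/"],
}

depths = click_depths(site_links, "/")

# Pages three or more clicks from the home page (threshold is an assumption).
deep_pages = sorted(page for page, depth in depths.items() if depth >= 3)

# Pages known to the export that no internal link path reaches from "/".
unreachable = sorted(set(site_links) - set(depths))

print("Deep pages:", deep_pages)
print("Unreachable pages:", unreachable)
```

Pages that show up as deep or unreachable are reasonable first candidates for better internal linking, before any change to how static resources are hosted.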

Managing crawl budget and optimizing technical architecture for large-scale sites requires specialized expertise and in-depth analysis of server logs, Search Console data, and actual Googlebot behavior. These optimizations touch on critical infrastructure aspects and can have significant consequences if poorly executed.
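
As a starting point for that kind of log analysis, here is a rough sketch that counts Googlebot requests per URL and separates pages from static resources. It assumes access logs in the common "combined" format; the `LOG_LINE` pattern, the `STATIC_EXTENSIONS` list, the `googlebot_hits` function, and the sample lines are illustrative assumptions to adapt to your own server. It also matches on the user-agent string only, which can be spoofed, so a real audit should additionally verify the requesting IP addresses.

```python
import re
from collections import Counter

# Assumed "combined" log format: ip, identity, user, [date], "request", status, size, "referer", "agent".
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Illustrative list of extensions treated as static resources.
STATIC_EXTENSIONS = (".css", ".js", ".png", ".jpg", ".jpeg", ".gif", ".svg", ".webp", ".woff2")


def googlebot_hits(lines):
    """Count requests whose user agent mentions Googlebot, split into pages and static files."""
    pages, static = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        # Query strings are dropped so that variants of one URL are counted together.
        path = match["path"].split("?", 1)[0]
        bucket = static if path.lower().endswith(STATIC_EXTENSIONS) else pages
        bucket[path] += 1
    return pages, static


# Hypothetical sample lines for illustration only.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/Mar/2024:10:00:00 +0000] "GET /product/a HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2024:10:00:01 +0000] "GET /static/app.js HTTP/1.1" 200 900 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2024:10:05:00 +0000] "GET /product/a?ref=x HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/Mar/2024:10:06:00 +0000] "GET /product/b HTTP/1.1" 200 4000 "-" "Mozilla/5.0 Chrome/122.0"',
]

pages, static = googlebot_hits(sample_log)
print("Pages crawled:", pages.most_common(10))
print("Static files crawled:", static.most_common(10))
```

Comparing the pages counter against your list of strategic URLs shows which important pages Googlebot rarely or never requests, which is more informative than the total crawl volume.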

For complex sites facing genuine crawl challenges, support from an SEO agency specialized in technical SEO can prove valuable. A comprehensive technical audit will identify the real bottlenecks and prioritize truly impactful actions, rather than applying one-size-fits-all recipes that may not match your specific context.
